- downgraded to python 3.10 to accommodate installing all dependencies

- by default installs all dev + extra dependencies

- option to install only dev dependencies by customizing .env file

separate makefile and build env:

- separate makefile for docker

- only show docker commands when docker detected in system

- only rebuild container on change

- use an unprivileged user

builder image and base dev image:

- fully isolated environment inside container.

- all venv installed inside container shell and available as commands.

- ex: `docker run IMG jupyter notebook` to launch notebook.

- pure python based container without poetry.

- custom motd to add a message displayed to users when they connect to

container.

- print environment versions (git, package, python) on login

- display help message when starting container

# docs: updated `Supabase` notebook

- the title of the notebook was inconsistent (it included a redundant "Vectorstore"). Removed this "Vectorstore"

- added `Postgres` to the title. This is important: the `Postgres` name is much more popular than `Supabase`.

- added a description for `Postgres`

- added more info to the `Supabase` description

# Update GPT4ALL integration

GPT4ALL has completely changed its bindings. They use a somewhat odd implementation that doesn't fit well into base.py, and it will probably change again, so this is a temporary solution.

Fixes #3839, #4628

# Docs: compound ecosystem and integrations

**Problem statement:** We have a big overlap between the References/Integrations and Ecosystem/LangChain Ecosystem pages, which confuses users. It also means a new integration can end up on only one of these pages, which creates even more confusion.

- removed the References/Integrations page (all its information will move into the individual integration pages in the next PR).

- renamed Ecosystem/LangChain Ecosystem to Integrations/Integrations.

I like the Ecosystem term. It is more generic and semantically richer than the Integration term. But it mentally overloads users. The `integration` term is more concrete.

UPDATE: after discussion, Ecosystem is the term. Ecosystem/Integrations is the page (in place of Ecosystem/LangChain Ecosystem).

As a result, a user gets a single place to start with the individual

integration.

This makes it so we don't throw errors when importing langchain with sqlalchemy==1.3.1.

We don't really want to support 1.3.1 (it seems like an unnecessary maintenance cost), BUT we would like it not to error terribly should someone decide to run on it.

# Add human message as input variable to chat agent prompt creation

This PR adds human message and system message input to the `CHAT_ZERO_SHOT_REACT_DESCRIPTION` agent, similar to the [conversational chat agent](7bcf238a1a/langchain/agents/conversational_chat/base.py (L64-L71)).

I ran into this issue when trying to use the `create_prompt` function with the [BabyAGI agent with tools notebook](https://python.langchain.com/en/latest/use_cases/autonomous_agents/baby_agi_with_agent.html), since BabyAGI uses “task” instead of “input” as the input variable. For the normal zero shot react agent this is fine, because I can manually change the suffix to “{input}\n\n{agent_scratchpad}” just like the notebook does, but I cannot do this with the conversational chat agent, which blocks me from using BabyAGI with the chat zero shot agent.

I tested this in my own project

[Chrome-GPT](https://github.com/richardyc/Chrome-GPT) and this fix

worked.

## Request for review

Agents / Tools / Toolkits

- @vowelparrot

# Fix bilibili api import error

The bilibili-api package is deprecated and has no sync module.


Fixes #2673, #2724


## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@vowelparrot @liaokongVFX


# TextLoader auto detect encoding and enhanced exception handling

- Add an option to enable encoding detection on `TextLoader`.

- The detection is done using `chardet`

- Loading tries each detected encoding in order of confidence and raises an exception if none of them works.
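The try-in-order-of-confidence loop can be sketched with the standard library alone. This is a minimal sketch, not the code in this PR: `decode_with_fallback` and the `(encoding, confidence)` candidate list are hypothetical names, and I assume the candidates come from a detector such as `chardet`.

```python
from typing import List, Tuple


def decode_with_fallback(data: bytes, candidates: List[Tuple[str, float]]) -> str:
    """Try each candidate encoding, highest confidence first.

    `candidates` is a hypothetical list of (encoding, confidence) pairs,
    assumed to come from an encoding detector such as chardet.
    """
    ordered = sorted(candidates, key=lambda pair: pair[1], reverse=True)
    for encoding, _confidence in ordered:
        try:
            return data.decode(encoding)
        except (UnicodeDecodeError, LookupError):
            # Wrong guess or unknown codec name: move on to the next one.
            continue
    raise RuntimeError(f"Could not decode data with any of: {ordered}")


raw = "café".encode("latin-1")  # b"caf\xe9" is not valid UTF-8
text = decode_with_fallback(raw, [("latin-1", 0.4), ("utf-8", 0.9)])
```

Here the higher-confidence guess (UTF-8) fails, so the loop falls through to Latin-1 instead of raising on the first error.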

### New Dependencies:

- `chardet`

Fixes #4479


## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

- @eyurtsev

---------

Co-authored-by: blob42 <spike@w530>

# Load specific file types from Google Drive (issue #4878)

Add the possibility to define what file types you want to load from

Google Drive.

```python
from langchain.document_loaders import GoogleDriveLoader

loader = GoogleDriveLoader(
    folder_id="1yucgL9WGgWZdM1TOuKkeghlPizuzMYb5",
    file_types=["document", "pdf"],  # only load these file types
    recursive=False,
)
```

Fixes #4878

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

DataLoaders

- @eyurtsev

Twitter: [@UmerHAdil](https://twitter.com/@UmerHAdil) | Discord:

RicChilligerDude#7589

---------

Co-authored-by: UmerHA <40663591+UmerHA@users.noreply.github.com>

# docs: text splitters improvements

Changes are only in the Jupyter notebooks.

- added links to the source packages and a short description of these

packages

- removed " Text Splitters" suffixes from the TOC elements (they made

the list of the text splitters messy)

- moved the text splitters based on the length function into a separate list. They can be mixed with any classes from the "Text Splitters" list, so it is a different classification.

## Who can review?

@hwchase17 - project lead

@eyurtsev

@vowelparrot

NOTE: please, check out the results of the `Python code` text splitter

example (text_splitters/examples/python.ipynb). It looks suboptimal.

# Added another helpful way for developers who want to set the OpenAI API key dynamically

Previous methods like exporting environment variables are good for project-wide settings.

But many recent use cases need to assign API keys dynamically.

```python

from langchain.llms import OpenAI

llm = OpenAI(openai_api_key="OPENAI_API_KEY")

```

## Before submitting

```bash

export OPENAI_API_KEY="..."

```

Or,

```python

import os

os.environ["OPENAI_API_KEY"] = "..."

```

<hr>

Thank you.

Cheers,

Bongsang

# Documentation for Azure OpenAI embeddings model

- OPENAI_API_VERSION environment variable is needed for the endpoint

- The constructor does not work with model, it works with deployment.

I fixed it in the notebook.

(This is my first contribution)

## Who can review?

@hwchase17

@agola

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

# Add bs4 html parser

* Some minor refactors

* Extract the bs4 html parsing code from the bs html loader

* Move some tests from integration tests to unit tests

# Add generic document loader

* This PR adds a generic document loader which can assemble a loader

from a blob loader and a parser

* Adds a registry for parsers

* Populate registry with a default mimetype based parser
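To make the blob loader + parser + registry idea concrete, here is a rough standard-library sketch. All names (`Blob`, `PARSER_REGISTRY`, `register_parser`, `GenericLoader`) are hypothetical stand-ins for illustration and are not the classes added in this PR.

```python
import mimetypes
from dataclasses import dataclass
from typing import Callable, Dict, Iterable, Iterator, List


@dataclass
class Blob:
    """Raw content plus the path it came from (hypothetical stand-in)."""

    path: str
    data: bytes


Parser = Callable[[Blob], List[str]]

# Registry mapping a mimetype to the parser that handles it.
PARSER_REGISTRY: Dict[str, Parser] = {}


def register_parser(mimetype: str, parser: Parser) -> None:
    PARSER_REGISTRY[mimetype] = parser


def parse_plain_text(blob: Blob) -> List[str]:
    return [blob.data.decode("utf-8")]


register_parser("text/plain", parse_plain_text)


class GenericLoader:
    """Assemble a loader from a blob source and the parser registry."""

    def __init__(self, blobs: Iterable[Blob]) -> None:
        self.blobs = blobs

    def lazy_load(self) -> Iterator[str]:
        for blob in self.blobs:
            # Pick the parser from the mimetype guessed from the file name.
            mimetype, _ = mimetypes.guess_type(blob.path)
            parser = PARSER_REGISTRY.get(mimetype or "")
            if parser is None:
                raise ValueError(f"No parser registered for {blob.path!r}")
            yield from parser(blob)


docs = list(GenericLoader([Blob("notes.txt", b"hello")]).lazy_load())
```

The loader itself knows nothing about file formats; adding a format only means registering one more parser.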

## Expected changes

- Parsing involves loading content via IO so can be sped up via:

* Threading in sync

* Async

- The actual parsing logic may be computationally involved: may need to figure out how to add multi-processing support

- May want to add suffix based parser since suffixes are easier to

specify in comparison to mime types

## Before submitting

No notebooks yet, we first need to get a few of the basic parsers up

(prior to advertising the interface)

It's currently not possible to change the `TEMPLATE_TOOL_RESPONSE`

prompt for ConversationalChatAgent, this PR changes that.

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Update deployments doc with langcorn API server

API server example

```python

from fastapi import FastAPI

from langcorn import create_service

app: FastAPI = create_service(

"examples.ex1:chain",

"examples.ex2:chain",

"examples.ex3:chain",

"examples.ex4:sequential_chain",

"examples.ex5:conversation",

"examples.ex6:conversation_with_summary",

)

```

More examples: https://github.com/msoedov/langcorn/tree/main/examples

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Docs and code review fixes for Docugami DataLoader

1. I noticed a couple of hyperlinks that are not loading in the

langchain docs (I guess need explicit anchor tags). Added those.

2. In code review @eyurtsev had a

[suggestion](https://github.com/hwchase17/langchain/pull/4727#discussion_r1194069347)

to allow string paths. Turns out just updating the type works (I tested

locally with string paths).

# Pre-submission checks

I ran `make lint` and `make tests` successfully.

---------

Co-authored-by: Taqi Jaffri <tjaffri@docugami.com>

# Fix Homepage Typo

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested... not sure

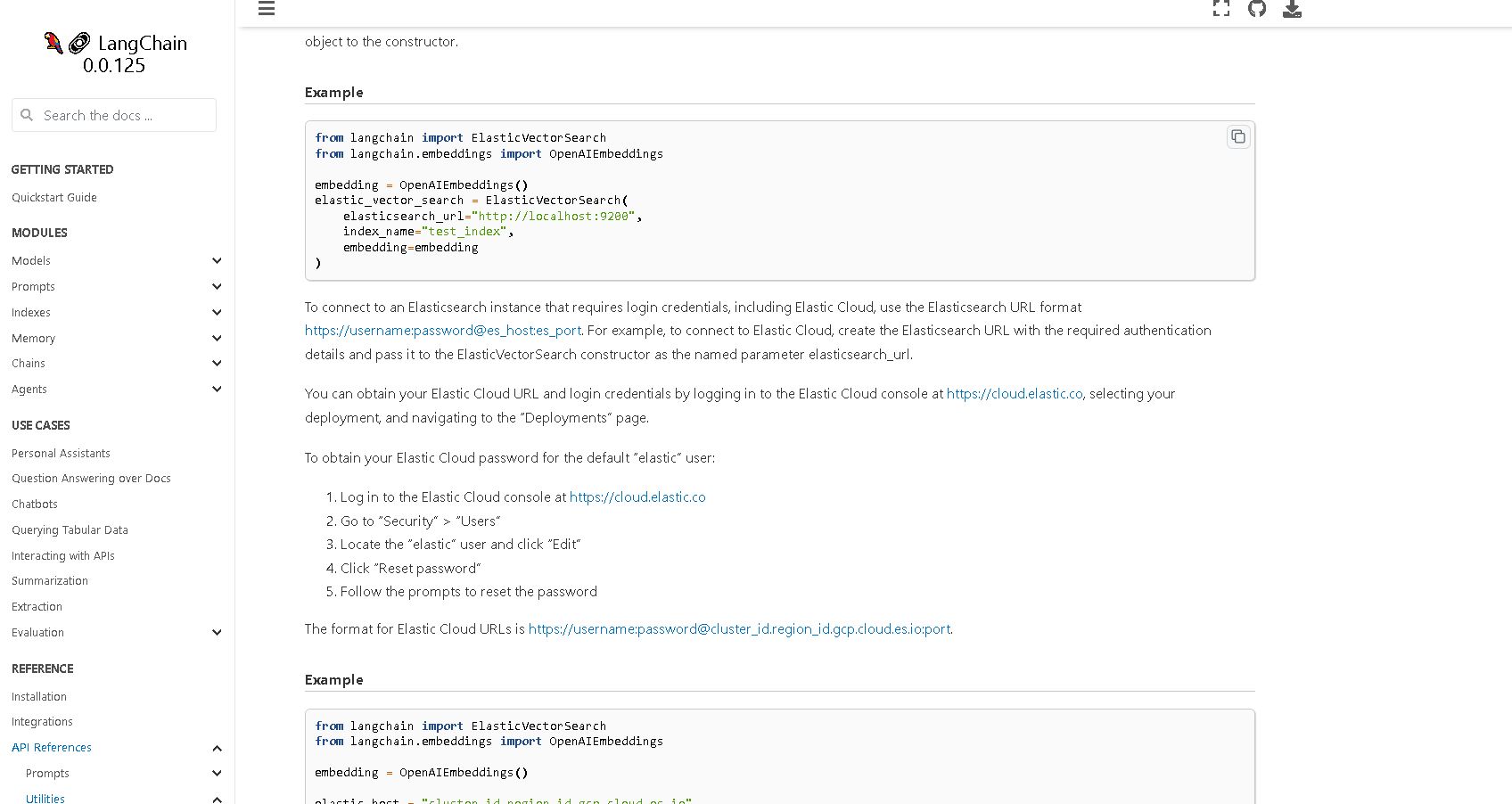

# Docs: improvements in the `retrievers/examples/` notebooks

Its primary purpose is to make the Jupyter notebook examples

**consistent** and more suitable for first-time viewers.

- add links to the integration source (if applicable) with a short

description of this source;

- removed `_retriever` suffix from the file names (where it existed) for

consistency;

- removed ` retriever` from the notebook title (where it existed) for

consistency;

- added code to install necessary Python package(s);

- added code to set up the necessary API Key.

- very small fixes in notebooks from other folders (for consistency):

- docs/modules/indexes/vectorstores/examples/elasticsearch.ipynb

- docs/modules/indexes/vectorstores/examples/pinecone.ipynb

- docs/modules/models/llms/integrations/cohere.ipynb

- fixed misspelling in langchain/retrievers/time_weighted_retriever.py

comment (sorry, about this change in a .py file )

## Who can review

@dev2049

# Remove unused variables in Milvus vectorstore

This PR simply removes a variable unused in Milvus. The variable looks

like a copy-paste from other functions in Milvus but it is really

unnecessary.

# Fix TypeError in Vectorstore Redis class methods

This change resolves a TypeError that was raised when invoking the

`from_texts_return_keys` method from the `from_texts` method in the

`Redis` class. The error was due to the `cls` argument being passed

explicitly, which led to it being provided twice since it's also

implicitly passed in class methods. No relevant tests were added as the

issue appeared to be better suited for linters to catch proactively.

Changes:

- Removed `cls=cls` from the call to `from_texts_return_keys` in the

`from_texts` method.
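The failure mode is easy to reproduce outside of the `Redis` class. A minimal sketch with a hypothetical `Store` class (not the real vectorstore):

```python
from typing import List, Tuple


class Store:
    @classmethod
    def from_texts_return_keys(cls, texts: List[str]) -> Tuple["Store", List[str]]:
        keys = [f"doc:{i}" for i in range(len(texts))]
        return cls(), keys

    @classmethod
    def from_texts_buggy(cls, texts: List[str]) -> "Store":
        # `cls` is already bound by the classmethod, so passing it again
        # as a keyword supplies the same argument twice -> TypeError.
        instance, _ = cls.from_texts_return_keys(cls=cls, texts=texts)
        return instance

    @classmethod
    def from_texts(cls, texts: List[str]) -> "Store":
        # Fixed call: let Python pass `cls` implicitly.
        instance, _ = cls.from_texts_return_keys(texts=texts)
        return instance
```

Calling `Store.from_texts_buggy(["a"])` raises a `TypeError` about multiple values for `cls`, while `Store.from_texts(["a"])` returns an instance.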

Related to:

https://github.com/hwchase17/langchain/pull/4653

# Remove unnecessary comment

Remove unnecessary comment accidentally included in #4800

## Before submitting

- no test

- no document

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

# Fixed typos (issues #4818 & #4668 & more typos)

- At some places, it said `model = ChatOpenAI(model='gpt-3.5-turbo')`

but should be `model = ChatOpenAI(model_name='gpt-3.5-turbo')`

- Fixes some other typos

Fixes #4818, #4668

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

Models

- @hwchase17

- @agola11

Agents / Tools / Toolkits

- @vowelparrot

Previously, the client expected a strict 'prompt' or 'messages' format

and wouldn't permit running a chat model or llm on prompts or messages

(respectively).

Since many datasets may want to specify a custom key: string pair, relax this requirement.

Also, add support for running a chat model on raw prompts and LLM on

chat messages through their respective fallbacks.


# Fix subclassing OpenAIEmbeddings

Fixes #4498

## Before submitting

- Problem: caused by the annotated type `Tuple[()]`.

- Fix: change the annotated type to `Iterable[str]`. tiktoken uses the [Collection[str]](095924e02c/tiktoken/core.py (L80)) type annotation, but pydantic doesn't support the Collection type, and [Iterable](https://docs.pydantic.dev/latest/usage/types/#typing-iterables) is the closest to Collection.

# fix: `agenerate` missing `run_manager` args in llm.py


ArxivAPIWrapper searches for and downloads PDFs to get related information. But I found that it doesn't delete the downloaded files, so a lot of PDF files pile up on the server. For example, a single file is about 28 MB.

So I added a line to delete them, because they are too big to keep on the server.

# Clean up downloaded PDF files

- Changes: Added a new line to delete the downloaded file

- Background: To get information on an arXiv paper, the ArxivAPIWrapper class downloads a PDF. It's a natural approach, but the wrapper leaves a lot of PDF files on the server.

- Problem: A single PDF is about 28 MB. That's too big to keep on a small server like AWS.

- Dependency: import os
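The read-then-delete pattern looks roughly like this. It is a sketch under assumptions: `read_and_delete` is a hypothetical helper, and a temporary file stands in for the downloaded PDF, since the real download call is out of scope here.

```python
import os
import tempfile


def read_and_delete(path: str) -> bytes:
    """Read a downloaded file, then remove it even if reading fails."""
    try:
        with open(path, "rb") as handle:
            return handle.read()
    finally:
        os.remove(path)


# Stand-in for a downloaded PDF.
fd, pdf_path = tempfile.mkstemp(suffix=".pdf")
with os.fdopen(fd, "wb") as handle:
    handle.write(b"%PDF-1.4 placeholder")

content = read_and_delete(pdf_path)
```

Putting the delete in `finally` means a parsing error does not leave the file behind either.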

Thank you.

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Get the memory importance score from regex matched group

In `GenerativeAgentMemory`, `_score_memory_importance()` builds a prompt to get a rating score. The prompt is:

```

prompt = PromptTemplate.from_template(

"On the scale of 1 to 10, where 1 is purely mundane"

+ " (e.g., brushing teeth, making bed) and 10 is"

+ " extremely poignant (e.g., a break up, college"

+ " acceptance), rate the likely poignancy of the"

+ " following piece of memory. Respond with a single integer."

+ "\nMemory: {memory_content}"

+ "\nRating: "

)

```

Some LLMs respond with, for example, `Rating: 8`. Thus we might want to get the score from the matched regex group.
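A minimal sketch of pulling the score out with a regex group. The helper name `parse_importance`, the exact pattern, and the division by 10 are assumptions for illustration, not necessarily what `_score_memory_importance()` does:

```python
import re


def parse_importance(response: str) -> float:
    """Return the first integer in `response` divided by 10, or 0.0 if none."""
    # Skip any leading non-digits (e.g. "Rating: ") and capture the number.
    match = re.search(r"^\D*(\d+)", response.strip())
    if match is None:
        return 0.0
    return float(match.group(1)) / 10
```

With this, both `"8"` and `"Rating: 8"` give the same score, and a response with no number falls back to `0.0` instead of raising.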

The function `_get_prompt()` was returning the DEFAULT_EXAMPLES even if custom examples were given: the returned FewShotPromptTemplate used DEFAULT_EXAMPLES and not `examples`.

# The Cohere embedding model no longer uses large, small. They are deprecated.

Changed the module's default model

Fixes #4694

Co-authored-by: rajib76 <rajib76@yahoo.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

**Feature**: This PR adds a `from_template_file` class method to BaseStringMessagePromptTemplate. It lets users create message prompt templates directly from template files, including `ChatMessagePromptTemplate`, `HumanMessagePromptTemplate`, `AIMessagePromptTemplate` & `SystemMessagePromptTemplate`.
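The shape of such a class method, sketched on a hypothetical `MessageTemplate` dataclass rather than the real prompt template classes:

```python
import os
import tempfile
from dataclasses import dataclass


@dataclass
class MessageTemplate:
    """Hypothetical stand-in for a message prompt template."""

    template: str

    @classmethod
    def from_template_file(cls, path: str) -> "MessageTemplate":
        # Read the whole file and use its content as the template string.
        with open(path, encoding="utf-8") as handle:
            return cls(template=handle.read())

    def format(self, **kwargs: str) -> str:
        return self.template.format(**kwargs)


# Write a throwaway template file, load it, then clean up.
with tempfile.NamedTemporaryFile(
    "w", suffix=".txt", delete=False, encoding="utf-8"
) as tmp:
    tmp.write("Translate {text} to {language}.")

prompt = MessageTemplate.from_template_file(tmp.name)
os.remove(tmp.name)
message = prompt.format(text="hello", language="French")
```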

**Tests**: Unit tests have been added in this PR.

Co-authored-by: charosen <charosen@bupt.cn>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Removed usage of deprecated methods

Replaced `SQLDatabaseChain` deprecated direct initialisation with

`from_llm` method

## Who can review?

@hwchase17

@agola11

---------

Co-authored-by: imeckr <chandanroutray2012@gmail.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Fixed query checker for SQLDatabaseChain

When `SQLDatabaseChain`'s llm attribute was deprecated, the query

checker stopped working if `SQLDatabaseChain` is initialised via

`from_llm` method. With this fix, `SQLDatabaseChain`'s query checker

would use the same `llm` as used in the `llm_chain`

## Who can review?

@hwchase17 - project lead

Co-authored-by: imeckr <chandanroutray2012@gmail.com>

- Installation of non-colab packages

- Get API keys

# Added dependencies to make notebook executable on hosted notebooks

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@hwchase17

@vowelparrot

- Installation of non-colab packages

- Get API keys

- Get rid of warnings

# Cleanup and added dependencies to make notebook executable on hosted notebooks

@hwchase17

@vowelparrot

The current example in https://python.langchain.com/en/latest/modules/agents/plan_and_execute.html has an inconsistent reasoning step (observing 28 years and thinking it's 26 years):

```

Observation: 28 years

Thought:Based on my search, Gigi Hadid's current age is 26 years old.

Action:

{

"action": "Final Answer",

"action_input": "Gigi Hadid's current age is 26 years old."

}

```

Guessing this is model noise. Rerunning seems to give the correct answer of 28 years.

Adds some basic unit tests for the ConfluenceLoader that can be extended

later. Ports this [PR from

llama-hub](https://github.com/emptycrown/llama-hub/pull/208) and adapts

it to `langchain`.

@Jflick58 and @zywilliamli adding you here as potential reviewers

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Improve the Chroma get() method by adding the optional "include" parameter.

The Chroma get() method excludes embeddings by default. You can

customize the response by specifying the "include" parameter to

selectively retrieve the desired data from the collection.

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Fix Telegram API loader + add tests.

I was testing this integration and it was broken with the following error:

```python

message_threads = loader._get_message_threads(df)

KeyError: False

```

Also, this particular loader didn't have any tests / related group in

poetry, so I added those as well.

@hwchase17 / @eyurtsev please take a look at this fix PR.

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

Add client methods to read / list runs and sessions.

Update walkthrough to:

- Let the user create a dataset from the runs without going to the UI

- Use the new CLI command to start the server

Improve the error message when `docker` isn't found

# Cassandra support for chat history

### Description

- Store chat messages in cassandra

### Dependency

- cassandra-driver - Python Module

## Before submitting

- Added Integration Test

## Who can review?

@hwchase17

@agola11


Co-authored-by: Jinto Jose <129657162+jj701@users.noreply.github.com>

Adds the basic retrievers for Milvus and Zilliz. Hybrid search support

will be added in the future.

Signed-off-by: Filip Haltmayer <filip.haltmayer@zilliz.com>

# Fix DeepLake Overwrite Flag Issue

Fixes Issue #4682: essentially, setting overwrite to False in the

DeepLake constructor still triggers an overwrite, because the logic is

just checking for the presence of "overwrite" in kwargs. The fix is

simple--just add some checks to inspect if "overwrite" in kwargs AND

kwargs["overwrite"]==True.
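The difference between the two checks, as a small sketch with hypothetical helper names (this is not the DeepLake constructor itself):

```python
def should_overwrite_buggy(**kwargs: object) -> bool:
    # Only checks that the key exists, so overwrite=False still returns True.
    return "overwrite" in kwargs


def should_overwrite_fixed(**kwargs: object) -> bool:
    # Also checks the value; a missing key defaults to False.
    return bool(kwargs.get("overwrite", False))
```

`should_overwrite_buggy(overwrite=False)` is `True`, which is the reported bug; the fixed version returns `False` for both `overwrite=False` and a missing key.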

Added a new test in

tests/integration_tests/vectorstores/test_deeplake.py to reflect the

desired behavior.

Co-authored-by: Anirudh Suresh <ani@Anirudhs-MBP.cable.rcn.com>

Co-authored-by: Anirudh Suresh <ani@Anirudhs-MacBook-Pro.local>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

Makes `headless` an optional argument for `create_async_playwright_browser()` and `create_sync_playwright_browser()`.

By default no functionality is changed.

This lets people with disabilities, for example, use a web browser intelligently with their voice while still seeing the content on the screen, among many other use cases.

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

This PR adds exponential back-off to the Google PaLM api to gracefully

handle rate limiting errors.
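For readers unfamiliar with the technique, here is a generic standard-library sketch of exponential back-off. It is not the PR's implementation: `retry_with_backoff`, `RateLimitError`, and the delay schedule are assumptions for illustration.

```python
import time
from typing import Callable, List, Type, TypeVar

T = TypeVar("T")


def retry_with_backoff(
    func: Callable[[], T],
    retry_on: Type[Exception],
    max_attempts: int = 5,
    base_delay: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Call `func`, doubling the wait after each `retry_on` failure."""
    for attempt in range(max_attempts):
        try:
            return func()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error
            sleep(base_delay * 2**attempt)
    raise ValueError("max_attempts must be at least 1")


class RateLimitError(Exception):
    """Hypothetical error standing in for an API rate-limit response."""


calls = {"count": 0}


def flaky() -> str:
    # Fails twice, then succeeds.
    calls["count"] += 1
    if calls["count"] < 3:
        raise RateLimitError("slow down")
    return "ok"


delays: List[float] = []
result = retry_with_backoff(flaky, RateLimitError, sleep=delays.append)
```

Passing `sleep=delays.append` records the waits instead of actually sleeping, which keeps the example fast.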

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# docs: added `additional_resources` folder

The additional resource files were inside the doc top-level folder,

which polluted the top-level folder.

- added the `additional_resources` folder and moved the corresponding files to this folder;

- fixed a broken link to the "Model comparison" page (model_laboratory

notebook)

- fixed a broken link to one of the YouTube videos (sorry, it is not

directly related to this PR)

## Who can review?

@dev2049

# Add summarization task type for HuggingFace APIs

Add summarization task type for HuggingFace APIs.

This task type is described by [HuggingFace inference

API](https://huggingface.co/docs/api-inference/detailed_parameters#summarization-task)

My project utilizes LangChain to connect multiple LLMs, including

various HuggingFace models that support the summarization task.

Integrating this task type is highly convenient and beneficial.

Fixes #4720

This reverts commit 5111bec540.

This PR introduced a bug in the async API (the `url` param isn't bound);

it also didn't update the synchronous API correctly, which makes it

error-prone (the behavior of the async and sync endpoints would be

different)

- added an official LangChain YouTube channel :)

- added new tutorials and videos (only videos with enough subscribers or views)

- added a "New video" icon

## Who can review?

@dev2049

Fixes some bugs I found while testing with more advanced datasets and

queries. Includes using the output of PowerBI to parse the error and

give that back to the LLM.

# Add GraphQL Query Support

This PR introduces a GraphQL API Wrapper tool that allows LLM agents to

query GraphQL databases. The tool utilizes the httpx and gql Python

packages to interact with GraphQL APIs and provides a simple interface

for running queries with LLM agents.

@vowelparrot

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Only run linkchecker on direct changes to docs

This is a stop-gap that will speed up PRs.

Some broken links can slip through if they're embedded in doc-strings

inside the codebase.

But we'll still be running the linkchecker on master.

# Check poetry lock file on CI

This PR checks that the lock file is up to date using poetry lock

--check.

As part of this PR, a new lock file was generated.

# glossary.md renamed to concepts.md and moved under Getting Started

Small PR.

`Concepts` is more to the point. It is moved under Getting Started (the typical place). Previously it was lost in the Additional Resources section.

## Who can review?

@hwchase17

# Added support for streaming output response to HuggingFaceTextgenInference LLM class

The current implementation does not support streaming output. Updated to incorporate this feature. Tagging @agola11 for visibility.

Instead of halting the entire program if this tool encounters an error,

it should pass the error back to the agent to decide what to do.

This may be best suited for @vowelparrot to review.
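The idea of handing the error back instead of halting can be sketched like this. `run_tool_safely` and `divide_ten_by` are hypothetical names; the actual tool and its error format may differ.

```python
from typing import Callable


def run_tool_safely(tool: Callable[[str], str], tool_input: str) -> str:
    """Run a tool and return any error as text instead of raising."""
    try:
        return tool(tool_input)
    except Exception as exc:  # deliberately broad: the agent sees the message
        return f"Error: {type(exc).__name__}: {exc}"


def divide_ten_by(value: str) -> str:
    # Toy tool that fails on "0" or on non-numeric input.
    return str(10 / float(value))
```

The returned string becomes the observation, so the agent can retry with a different input rather than the whole program stopping.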

### Adds a document loader for Docugami

Specifically:

1. Adds a data loader that talks to the [Docugami](http://docugami.com)

API to download processed documents as semantic XML

2. Parses the semantic XML into chunks, with additional metadata

capturing chunk semantics

3. Adds a detailed notebook showing how you can use additional metadata

returned by Docugami for techniques like the [self-querying

retriever](https://python.langchain.com/en/latest/modules/indexes/retrievers/examples/self_query_retriever.html)

4. Adds an integration test, and related documentation

Here is an example of a result that is not possible without the

capabilities added by Docugami (from the notebook):

<img width="1585" alt="image" src="https://github.com/hwchase17/langchain/assets/749277/bb6c1ce3-13dc-4349-a53b-de16681fdd5b">

---------

Co-authored-by: Taqi Jaffri <tjaffri@docugami.com>

Co-authored-by: Taqi Jaffri <tjaffri@gmail.com>

# Improve video_id extraction in `YoutubeLoader`

`YoutubeLoader.from_youtube_url` can only deal with one specific url

format. I've introduced `YoutubeLoader.extract_video_id` which can

extract video id from common YT urls.
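A rough sketch of what id extraction from several URL shapes can look like, using only `urllib.parse`. This is not the code of `YoutubeLoader.extract_video_id`; the list of supported hosts and paths and the 11-character id length are assumptions of this sketch.

```python
from typing import Optional
from urllib.parse import parse_qs, urlparse


def extract_video_id(url: str) -> Optional[str]:
    """Return the video id from a few common YouTube URL shapes, else None."""
    parsed = urlparse(url)
    host = (parsed.hostname or "").lower()
    if host.startswith("www."):
        host = host[len("www."):]

    if host == "youtu.be":
        # Short links: https://youtu.be/<id>
        candidate = parsed.path.strip("/").split("/")[0]
    elif host in {"youtube.com", "m.youtube.com"}:
        if parsed.path == "/watch":
            # Standard links: https://www.youtube.com/watch?v=<id>
            candidate = parse_qs(parsed.query).get("v", [""])[0]
        else:
            # Path-based links: /embed/<id>, /shorts/<id>, /v/<id>
            parts = parsed.path.strip("/").split("/")
            known = {"embed", "shorts", "v"}
            candidate = parts[1] if len(parts) >= 2 and parts[0] in known else ""
    else:
        return None

    # Assumption: video ids are 11 characters long.
    return candidate if len(candidate) == 11 else None
```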

Fixes #4451

@eyurtsev

---------

Co-authored-by: Kamil Niski <kamil.niski@gmail.com>

# Added Tutorials section on the top-level of documentation

**Problem Statement**: the Tutorials section in the documentation is

top-priority. Not every project has resources to make tutorials. We have

such a privilege. Community experts created several tutorials on

YouTube.

But the tutorial links are now hidden on the YouTube page and not easily

discovered by first-time visitors.

**PR**: I've created the `Tutorials` page (from the `Additional

Resources/YouTube` page) and moved it to the top level of documentation

in the `Getting Started` section.

## Who can review?

@dev2049

NOTE:

PR checks are randomly failing

3aefaafcdb258819eadf514d81b5b3

# Respect User-Specified User-Agent in WebBaseLoader

This pull request modifies the `WebBaseLoader` class initializer from

the `langchain.document_loaders.web_base` module to preserve any

User-Agent specified by the user in the `header_template` parameter.

Previously, even if a User-Agent was specified in `header_template`, it

would always be overridden by a random User-Agent generated by the

`fake_useragent` library.

With this change, if a User-Agent is specified in `header_template`, it

will be used. Only in the case where no User-Agent is specified will a

random User-Agent be generated and used. This provides additional

flexibility when using the `WebBaseLoader` class, allowing users to

specify their own User-Agent if they have a specific need or preference,

while still providing a reasonable default for cases where no User-Agent

is specified.

This change has no impact on existing users who do not specify a

User-Agent, as the behavior in this case remains the same. However, for

users who do specify a User-Agent, their choice will now be respected

and used for all subsequent requests made using the `WebBaseLoader`

class.
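In code, the new behavior amounts to something like the following sketch. `build_headers` and the `random_user_agent` callable are hypothetical; the latter stands in for whatever generates the random User-Agent.

```python
from typing import Callable, Dict, Optional


def build_headers(
    header_template: Optional[Dict[str, str]],
    random_user_agent: Callable[[], str],
) -> Dict[str, str]:
    """Keep a user-supplied User-Agent; only generate one if it is missing."""
    headers = dict(header_template or {})
    if not headers.get("User-Agent"):
        headers["User-Agent"] = random_user_agent()
    return headers
```

For example, `build_headers({"User-Agent": "my-bot/1.0"}, ...)` keeps `my-bot/1.0`, while `build_headers(None, ...)` falls back to the generated value.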

Fixes #4167

## Before submitting

============================= test session starts ==============================

collecting ... collected 1 item

test_web_base.py::TestWebBaseLoader::test_respect_user_specified_user_agent PASSED [100%]

============================== 1 passed in 3.64s ===============================

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested: @eyurtsev

---------

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

[OpenWeatherMapAPIWrapper](f70e18a5b3/docs/modules/agents/tools/examples/openweathermap.ipynb) works wonderfully, but the _tool_ itself can't be used on the master branch.

- added OpenWeatherMap **tool** to the public api, to be loadable with

`load_tools` by using "openweathermap-api" tool name (that name is used

in the existing

[docs](aff33d52c5/docs/modules/agents/tools/getting_started.md),

at the bottom of the page)

- updated OpenWeatherMap tool's **description** to make the input format

match what the API expects (e.g. `London,GB` instead of `'London,GB'`)

- added [ecosystem documentation page for

OpenWeatherMap](f9c41594fe/docs/ecosystem/openweathermap.md)

- added tool usage example to [OpenWeatherMap's

notebook](f9c41594fe/docs/modules/agents/tools/examples/openweathermap.ipynb)

Let me know if there's something I missed or something needs to be

updated! Or feel free to make edits yourself if that makes it easier for

you 🙂

[RELLM](https://github.com/r2d4/rellm) is a library that wraps local

HuggingFace pipeline models for structured decoding.

RELLM works by generating tokens one at a time. At each step, it masks

tokens that don't conform to the provided partial regular expression.

[JSONFormer](https://github.com/1rgs/jsonformer) is a bit different: it sequentially adds the keys, then decodes each value directly.

Currently, all Zapier tools are built using the pre-written base Zapier

prompt. These small changes (that retain default behavior) will allow a

user to create a Zapier tool using the ZapierNLARunTool while providing

their own base prompt.

Their prompt must contain input fields for zapier_description and

params, checked and enforced in the tool's root validator.
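The required-fields check could look roughly like this. It is a sketch, not the tool's root validator: `validate_base_prompt` and `REQUIRED_FIELDS` are hypothetical names, and it assumes the prompt uses `str.format`-style placeholders.

```python
from string import Formatter
from typing import Set

REQUIRED_FIELDS = {"zapier_description", "params"}


def validate_base_prompt(prompt: str) -> str:
    """Raise if the prompt lacks any required `{field}` placeholder."""
    # Formatter().parse yields (literal, field_name, format_spec, conversion).
    found: Set[str] = {
        field for _, field, _, _ in Formatter().parse(prompt) if field
    }
    missing = REQUIRED_FIELDS - found
    if missing:
        raise ValueError(f"Base prompt is missing fields: {sorted(missing)}")
    return prompt
```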

An example of when this may be useful: a user has several (say 10) Zapier tools enabled. Currently, the long, generic default Zapier base prompt is attached to every single tool, so the same text is repeated and consumes a large number of tokens for no real added benefit. Instead, the user can tell the LLM how to use Zapier tools once and then override the base prompt.

Or: a user has a few specific Zapier tools and wants to maximize their success rate. The user writes prompts/descriptions for those tools specific to their use case and provides them to the ZapierNLARunTool.

A consideration - this is the simplest way to implement this I could

think of... though ideally custom prompting would be possible at the

Toolkit level as well. For now, this should be sufficient in solving the

concerns outlined above.

The error in #4087 happened because of the use of `csv.Dialect.*`, which is just an empty base class. We need to choose a base dialect. I usually use Excel, so I set it to `excel`; if maintainers have other preferences, do let me know.
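For reference, a small standard-library sketch of the difference: `csv.Dialect` only holds `None` placeholders, while `csv.excel` is a concrete dialect that `csv.DictReader` can use. The sample data is made up for illustration.

```python
import csv
import io

data = 'name,comment\nAda,"likes commas, apparently"\n'

# csv.excel is a concrete Dialect subclass; csv.Dialect itself leaves
# delimiter, quotechar, etc. set to None.
reader = csv.DictReader(io.StringIO(data), dialect=csv.excel)
rows = list(reader)
```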

Open Questions:

1. What should be the default dialect?

2. Should we rework all tests to mock the open function rather than the

csv.DictReader?

3. Should we make a separate input for `dialect` like we have for

`encoding`?

---------

Co-authored-by: = <=>

**Problem statement:** the

[document_loaders](https://python.langchain.com/en/latest/modules/indexes/document_loaders.html#)

section is too long and hard to comprehend.

**Proposal:** group document_loaders by 3 classes: (see `Files changed`

tab)

UPDATE: I've completely reworked the document_loader classification.

Now this PR changes only one file!

FYI @eyurtsev @hwchase17

### Refactor the BaseTracer

- Remove the 'session' abstraction from the BaseTracer

- Rename 'RunV2' object(s) to be called 'Run' objects (Rename previous

Run objects to be RunV1 objects)

- Ditto for sessions: TracerSession*V2 -> TracerSession*

- Remove now deprecated conversion from v1 run objects to v2 run objects

in LangChainTracerV2

- Add conversion from v2 run objects to v1 run objects in V1 tracer

fixes a syntax error mentioned in

#2027 and #3305

Another PR to remedy this is #3385, but I believe it does not tackle the core problem.

Also #2027 mentions a solution that works:

add to the prompt:

'The SQL query should be outputted plainly, do not surround it in quotes

or anything else.'

To me it seems strange to first ask for:

SQLQuery: "SQL Query to run"

and then to tell the LLM not to put the quotes around it. Other

templates (than the sql one) do not use quotes in their steps.

This PR changes that to:

SQLQuery: SQL Query to run

## Change Chain argument in client to accept a chain factory

The `run_over_dataset` functionality seeks to treat each iteration of an

example as an independent trial.

Chains have memory, so it's easier to permit this type of behavior if we

accept a factory method rather than the chain object directly.

There are still corner cases / UX pains people will likely run into, like:

- Caching may cause issues

- if memory is persisted to a shared object (e.g., same redis queue) ,

this could impact what is retrieved

- If we're running the async methods with concurrency using local

models, if someone naively instantiates the chain and loads each time,

it could lead to tons of disk I/O or OOM
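To illustrate why a factory helps, here is a toy sketch. `ToyChain` and `run_over_examples` are hypothetical stand-ins, not the client's actual API; the point is only that a fresh chain per example keeps memory from leaking between trials.

```python
from typing import Callable, List


class ToyChain:
    """Stand-in for a chain that accumulates memory across calls."""

    def __init__(self) -> None:
        self.memory: List[str] = []

    def run(self, text: str) -> int:
        self.memory.append(text)
        return len(self.memory)  # how many inputs this instance has seen


def run_over_examples(
    chain_factory: Callable[[], ToyChain], examples: List[str]
) -> List[int]:
    # A fresh chain per example makes every trial independent.
    return [chain_factory().run(example) for example in examples]


independent = run_over_examples(ToyChain, ["a", "b", "c"])

# Passing one chain object instead lets earlier examples affect later ones.
shared_chain = ToyChain()
leaky = [shared_chain.run(example) for example in ["a", "b", "c"]]
```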

# Provide get current date function dialect for other DBs


## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@eyurtsev


# Cosmetic in errors formatting

Added appropriate spacing to the `ImportError` message in a bunch of

document loaders to enhance trace readability (including Google Drive,

Youtube, Confluence and others). This change ensures that the error

messages are not displayed as a single line block, and that the `pip

install xyz` commands can be copied to clipboard from terminal easily.
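A rough sketch of the message layout this aims for. The helper names and the exact wording are assumptions for illustration; each loader has its own message.

```python
def import_error_message(package: str) -> str:
    """Build a multi-line message with the install command on its own line."""
    return (
        f"Could not import the `{package}` package.\n"
        f"Please install it with:\n\n"
        f"    pip install {package}\n"
    )


def load_optional(package: str):
    """Import a package by name, re-raising with the readable message."""
    try:
        return __import__(package)
    except ImportError as exc:
        raise ImportError(import_error_message(package)) from exc
```

Keeping the `pip install` command on a separate line makes it easy to select in a terminal without picking up the surrounding sentence.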

## Who can review?

@eyurtsev

# Adds testing options to pytest

This PR adds the following options:

* `--only-core` skips all extended tests and runs all core tests.
* `--only-extended` skips all core tests and forces all extended tests to run.

Running `py.test` without specifying either option remains unaffected: it runs all tests that can be run within the unit_tests directory. Extended tests run if the required packages are installed.
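The selection rules can be summarised as a small decision function. This is a sketch, not the actual `conftest.py`: the `decide` helper is hypothetical, and treating a missing package as a failure under `--only-extended` is my reading of "forcing" the extended tests to run.

```python
import importlib.util
from typing import Sequence


def decide(requires: Sequence[str], only_core: bool, only_extended: bool) -> str:
    """Return "run", "skip", or "fail" for one test.

    `requires` lists the optional top-level packages an extended test needs;
    core tests pass an empty sequence.
    """
    if only_core and only_extended:
        raise ValueError("--only-core and --only-extended are mutually exclusive")
    is_extended = bool(requires)
    if is_extended and only_core:
        return "skip"
    if not is_extended and only_extended:
        return "skip"
    missing = [pkg for pkg in requires if importlib.util.find_spec(pkg) is None]
    if missing:
        # Assumption: with --only-extended a missing package is an error,
        # otherwise the test is quietly skipped.
        return "fail" if only_extended else "skip"
    return "run"
```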


[Text Generation

Inference](https://github.com/huggingface/text-generation-inference) is

a Rust, Python and gRPC server for generating text using LLMs.

This pull request adds support for self-hosted Text Generation Inference servers.

feature: #4280

---------

Co-authored-by: Your Name <you@example.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Enhance the prompt to make the LLM generate right date for real today


Currently, if the user's question contains `today`, the clickhouse

always points to an old date. This may be related to the fact that the

GPT training data is relatively old.

### Add Invocation Params to Logged Run

Adds an llm type to each chat model as well as an override of the dict()

method to log the invocation parameters for each call

---------

Co-authored-by: Ankush Gola <ankush.gola@gmail.com>


# Add `tiktoken` as dependency when installed as `langchain[openai]`

Fixes #4513

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@vowelparrot


### Add on_chat_message_start to callback manager and base tracer

Goal: trace messages directly to permit reloading as chat messages

(store in an integration-agnostic way)

Add an `on_chat_message_start` method. Fall back to `on_llm_start()` for

handlers that don't have it implemented.

This is done without breaking backwards compatibility (for now).
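A toy sketch of the fallback idea. The handler classes and `dispatch_chat_start` are hypothetical, and the real callback manager may detect a missing implementation differently (for example by catching `NotImplementedError`).

```python
from typing import Any, List


class LegacyHandler:
    """Handler written before `on_chat_message_start` existed."""

    def __init__(self) -> None:
        self.events: List[Any] = []

    def on_llm_start(self, prompts: List[str]) -> None:
        self.events.append(("llm", prompts))


class ChatAwareHandler(LegacyHandler):
    def on_chat_message_start(self, messages: List[dict]) -> None:
        self.events.append(("chat", messages))


def dispatch_chat_start(handlers: List[Any], messages: List[dict]) -> None:
    for handler in handlers:
        method = getattr(handler, "on_chat_message_start", None)
        if callable(method):
            method(messages)
        else:
            # Fall back: flatten the messages into a single prompt string.
            prompt = "\n".join(f"{m['role']}: {m['content']}" for m in messages)
            handler.on_llm_start([prompt])


legacy, modern = LegacyHandler(), ChatAwareHandler()
dispatch_chat_start([legacy, modern], [{"role": "human", "content": "hi"}])
```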

# Make BaseStringMessagePromptTemplate.from_template return type generic

I use mypy to type-check my code that uses langchain. Currently, after I load a prompt and convert it to a system prompt, I have to explicitly cast it, which is quite ugly (and should not be necessary):

```python
prompt_template = load_prompt("prompt.yaml")
system_prompt_template = cast(
    SystemMessagePromptTemplate,
    SystemMessagePromptTemplate.from_template(prompt_template.template),
)
```

With this PR, the code would simply be:

```

prompt_template = load_prompt("prompt.yaml")

system_prompt_template = SystemMessagePromptTemplate.from_template(prompt_template.template)

```

Given how much langchain uses inheritance, I think this type hinting could be applied in a bunch more places. For example, `load_prompt` also returns a `FewShotPromptTemplate` or a `PromptTemplate`, but without typing, type checkers aren't able to infer that. Let me know if you agree and I can take a look at implementing that as well.
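For readers unfamiliar with the pattern, a generic classmethod return type looks roughly like this. The class names are simplified stand-ins, not the langchain classes themselves.

```python
from typing import Type, TypeVar

T = TypeVar("T", bound="BaseStringTemplate")


class BaseStringTemplate:
    def __init__(self, template: str) -> None:
        self.template = template

    @classmethod
    def from_template(cls: Type[T], template: str) -> T:
        # Returning `T` lets type checkers infer the subclass at the call site.
        return cls(template)


class SystemTemplate(BaseStringTemplate):
    role = "system"


# mypy infers `SystemTemplate` here, so no cast() is needed.
system_prompt = SystemTemplate.from_template("You are a helpful assistant.")
```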

@hwchase17 - project lead

DataLoaders

- @eyurtsev

Rebased Mahmedk's PR with the callback refactor and added the example

requested by hwchase plus a couple minor fixes

---------

Co-authored-by: Ahmed K <77802633+mahmedk@users.noreply.github.com>

Co-authored-by: Ahmed K <mda3k27@gmail.com>

Co-authored-by: Davis Chase <130488702+dev2049@users.noreply.github.com>

Co-authored-by: Corey Zumar <39497902+dbczumar@users.noreply.github.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

We're fans of the LangChain framework thus we wanted to make sure we

provide an easy way for our customers to be able to utilize this

framework for their LLM-powered applications at our platform.

# Parameterize Redis vectorstore index

Redis vectorstore allows for three different distance metrics: `L2`

(flat L2), `COSINE`, and `IP` (inner product). Currently, the

`Redis._create_index` method hard codes the distance metric to COSINE.

I've parameterized this as an argument in the `Redis.from_texts` method

-- pretty simple.
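A sketch of the parameterization, independent of Redis itself. `index_schema` is a hypothetical stand-in for the index creation call and the returned dict is illustrative only; the `REDIS_DISTANCE_METRICS` literal is the one mentioned in the integration test.

```python
from typing import Literal, get_args

REDIS_DISTANCE_METRICS = Literal["COSINE", "IP", "L2"]


def index_schema(dim: int, distance_metric: REDIS_DISTANCE_METRICS = "COSINE") -> dict:
    """Describe a vector field; COSINE stays the default as before."""
    if distance_metric not in get_args(REDIS_DISTANCE_METRICS):
        raise ValueError(f"unsupported distance metric: {distance_metric!r}")
    return {"TYPE": "FLOAT32", "DIM": dim, "DISTANCE_METRIC": distance_metric}
```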

Fixes #4368

## Before submitting

I've added an integration test showing indexes can be instantiated with

all three values in the `REDIS_DISTANCE_METRICS` literal. An example

notebook seemed overkill here. Normal API documentation would be more

appropriate, but no standards are in place for that yet.

## Who can review?

Not sure who's responsible for the vectorstore module... Maybe @eyurtsev

/ @hwchase17 / @agola11 ?

# Fix minor issues in self-query retriever prompt formatting

I noticed a few minor issues with the self-query retriever's prompt while using it, so here's a PR to fix them 😇

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:


# Add option to `load_huggingface_tool`

Expose a method to load a huggingface Tool from the HF hub

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Refactor the test workflow

This PR refactors the tests to run using a single test workflow. This

makes it easier to relaunch failing tests and see in the UI which test

failed since the jobs are grouped together.


Thanks to @anna-charlotte and @jupyterjazz for the contribution! Made a few small changes to get it across the finish line

---------

Signed-off-by: anna-charlotte <charlotte.gerhaher@jina.ai>

Signed-off-by: jupyterjazz <saba.sturua@jina.ai>

Co-authored-by: anna-charlotte <charlotte.gerhaher@jina.ai>

Co-authored-by: jupyterjazz <saba.sturua@jina.ai>

Co-authored-by: Saba Sturua <45267439+jupyterjazz@users.noreply.github.com>

# Add action to test with all dependencies installed

PR adds a custom action for setting up poetry that allows specifying a

cache key:

https://github.com/actions/setup-python/issues/505#issuecomment-1273013236

This makes it possible to run 2 types of unit tests:

(1) unit tests with only core dependencies

(2) unit tests with extended dependencies (e.g., those that rely on an

optional pdf parsing library)

As part of this PR, we're moving some pdf parsing tests into the

unit-tests section and making sure that these unit tests get executed

when running with extended dependencies.

# ODF File Loader

Adds a data loader for handling Open Office ODT files. Requires

`unstructured>=0.6.3`.

### Testing

The following should work using the `fake.odt` example doc from the

[`unstructured` repo](https://github.com/Unstructured-IO/unstructured).

```python

from langchain.document_loaders import UnstructuredODTLoader

loader = UnstructuredODTLoader(file_path="fake.odt", mode="elements")

loader.load()

loader = UnstructuredODTLoader(file_path="fake.odt", mode="single")

loader.load()

```

Any import that touches langchain.retrievers currently requires Lark.

Here's one attempt to fix. Not very pretty, very open to other ideas.

Alternatives I thought of are 1) make Lark a requirement, 2) put everything in parser.py in the try/except. Neither sounds much better.

Related to #4316, #4275

Fixed two small bugs (as reported in issue #1619) in the filtering by metadata for `chroma` databases:

- `langchain.vectorstores.chroma.similarity_search` takes a `filter` input parameter but does not forward it to `langchain.vectorstores.chroma.similarity_search_with_score`.
- `langchain.vectorstores.chroma.similarity_search_by_vector` doesn't take this parameter as input, although it could be very useful and adds no complexity. Accepting it makes the method consistent with the syntax of the two other functions.
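The first bug can be illustrated with an in-memory toy. `ToyStore` is hypothetical and its scores are fake; only the forwarding of `filter` mirrors the fix.

```python
from typing import Any, Dict, List, Optional, Tuple

Doc = Dict[str, Any]


class ToyStore:
    """In-memory stand-in for a vector store."""

    def __init__(self, docs: List[Doc]) -> None:
        self.docs = docs

    def similarity_search_with_score(
        self, query: str, k: int = 4, filter: Optional[Dict[str, str]] = None
    ) -> List[Tuple[Doc, float]]:
        hits = [
            d
            for d in self.docs
            if not filter
            or all(d["metadata"].get(key) == val for key, val in filter.items())
        ]
        return [(d, 0.0) for d in hits[:k]]

    def similarity_search(
        self, query: str, k: int = 4, filter: Optional[Dict[str, str]] = None
    ) -> List[Doc]:
        # The bug was dropping `filter` here instead of forwarding it.
        pairs = self.similarity_search_with_score(query, k, filter=filter)
        return [doc for doc, _ in pairs]


store = ToyStore(
    [
        {"text": "alpha", "metadata": {"source": "a"}},
        {"text": "beta", "metadata": {"source": "b"}},
    ]
)
only_a = store.similarity_search("anything", filter={"source": "a"})
```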

Co-authored-by: Davis Chase <130488702+dev2049@users.noreply.github.com>

Currently, MultiPromptChain instantiates a ChatOpenAI LLM instance for

the default chain to use if none of the prompts passed match. This seems

like an error as it means that you can't use your choice of LLM, or

configure how to instantiate the default LLM (e.g. passing in an API key

that isn't in the usual env variable).

Fixes #4153

If the sender of a message in a group chat isn't in your contact list,

they will appear with a ~ prefix in the exported chat. This PR adds

support for parsing such lines.

# Add support for Qdrant nested filter

This extends the filter functionality for the Qdrant vectorstore. The

current filter implementation is limited to a single-level metadata

structure; however, Qdrant supports nested metadata filtering. This

extends the functionality for users to maximize the filter functionality

when using Qdrant as the vectorstore.

Reference: https://qdrant.tech/documentation/filtering/#nested-key
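One way to picture the change: a nested filter dict is walked recursively and each leaf becomes a condition on a dotted key, which is how Qdrant addresses nested payload fields. The helper below is a sketch; the `metadata` prefix and the function name are assumptions, and the real code builds Qdrant filter objects rather than a plain dict.

```python
from typing import Any, Dict, Iterator, Tuple


def flatten_filter(
    filter_: Dict[str, Any], prefix: str = "metadata"
) -> Iterator[Tuple[str, Any]]:
    """Yield (dotted_key, value) pairs for every leaf of a nested filter."""
    for key, value in filter_.items():
        path = f"{prefix}.{key}"
        if isinstance(value, dict):
            yield from flatten_filter(value, path)
        else:
            yield path, value


conditions = dict(
    flatten_filter({"author": {"name": "Ada", "country": "UK"}, "year": 1843})
)
```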

---------

Signed-off-by: Aivin V. Solatorio <avsolatorio@gmail.com>

This PR makes it possible to extract more metadata from websites for later use.

My use case: parsing ld+json or microdata from sites and storing it as structured data in the metadata field.

- added `Wikipedia` retriever. It is effectively a wrapper for `WikipediaAPIWrapper`. It wraps `load()` into `get_relevant_documents()`

- sorted `__all__` in the `retrievers/__init__`

- added integration tests for the WikipediaRetriever

- added an example (as Jupyter notebook) for the WikipediaRetriever

# Minor Wording Documentation Change

```python

agent_chain.run("When's my friend Eric's surname?")

# Answer with 'Zhu'

```

is changed to

```python

agent_chain.run("What's my friend Eric's surname?")

# Answer with 'Zhu'

```

I think "When" is a residual of the old query, which was "When's my friend Eric's birthday?".

# Add PDF parser implementations

This PR separates the data loading from the parsing for a number of

existing PDF loaders.

Parser tests have been designed to help encourage developers to create a

consistent interface for parsing PDFs.

This interface can be made more consistent in the future by adding

information into the initializer on desired behavior with respect to splitting by

page etc.

This code is expected to be backwards compatible -- with the exception

of a bug fix with pymupdf parser which was returning `bytes` in the page

content rather than strings.

Also changing the lazy parser method of document loader to return an

Iterator rather than Iterable over documents.


# Add MimeType Based Parser

This PR adds a MimeType Based Parser. The parser inspects the mime-type of the blob it is parsing and, based on the mime-type, delegates to the matching sub-parser.
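The delegation idea in miniature. `Blob` and `MimeTypeParser` here are simplified, hypothetical stand-ins for the classes in the PR, which have richer interfaces.

```python
import json
from dataclasses import dataclass
from typing import Any, Callable, Dict


@dataclass
class Blob:
    data: bytes
    mimetype: str


class MimeTypeParser:
    """Route a blob to the sub-parser registered for its mime-type."""

    def __init__(self, handlers: Dict[str, Callable[[Blob], Any]]) -> None:
        self.handlers = handlers

    def parse(self, blob: Blob) -> Any:
        handler = self.handlers.get(blob.mimetype)
        if handler is None:
            raise ValueError(f"unsupported mime-type: {blob.mimetype}")
        return handler(blob)


parser = MimeTypeParser(
    {
        "application/json": lambda blob: json.loads(blob.data),
        "text/plain": lambda blob: blob.data.decode("utf-8"),
    }
)
```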

## Before submitting

Waiting on adding notebooks until more implementations are landed.

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@hwchase17

@vowelparrot

# Update Writer LLM integration

Changes the parameters and base URL to be in line with Writer's current

API.

Based on the documentation on this page:

https://dev.writer.com/reference/completions-1

# Fix grammar in Text Splitters docs

Just a small fix of grammar in the documentation:

"That means there two different axes" -> "That means there are two

different axes"

Add a notebook in the `experimental/` directory detailing:

- How to capture traces with the v2 endpoint

- How to create datasets

- How to run traces over the dataset

Ensure compatibility with both SQLAlchemy v1/v2

fix the issue when using SQLAlchemy v1 (reported at #3884)

`

langchain/vectorstores/pgvector.py", line 168, in

create_tables_if_not_exists

self._conn.commit()

AttributeError: 'Connection' object has no attribute 'commit'

`

Ref Doc :

https://docs.sqlalchemy.org/en/14/changelog/migration_20.html#migration-20-autocommit
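One possible way to stay compatible with both styles, sketched with fake connection classes so it runs without SQLAlchemy. This is an illustration of the problem, not necessarily the approach taken in the fix; all names below are hypothetical.

```python
class LegacyConnection:
    """Stand-in for a 1.x-style connection: autocommit, no commit() method."""

    def execute(self, statement: str) -> str:
        return f"executed: {statement}"


class FutureConnection(LegacyConnection):
    """Stand-in for a 2.0-style connection that needs an explicit commit()."""

    def __init__(self) -> None:
        self.committed = False

    def commit(self) -> None:
        self.committed = True


def create_tables(conn) -> bool:
    """Run DDL and commit only when the connection supports it."""
    conn.execute("CREATE TABLE IF NOT EXISTS demo (id INTEGER)")
    commit = getattr(conn, "commit", None)
    if callable(commit):
        commit()  # only 2.0-style connections expose this
        return True
    return False
```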

Handle duplicate and incorrectly specified OpenAI params

Thanks @PawelFaron for the fix! Made small update

Closes #4331

---------

Co-authored-by: PawelFaron <42373772+PawelFaron@users.noreply.github.com>

Co-authored-by: Pawel Faron <ext-pawel.faron@vaisala.com>

### Description

Add `similarity_search_with_score` method for OpenSearch to return

scores along with documents in the search results

Signed-off-by: Naveen Tatikonda <navtat@amazon.com>

fix: solve the infinite loop caused by the 'add_memory' function when running the 'pause_to_reflect' function

run steps:

'add_memory' -> 'pause_to_reflect' -> 'add_memory': infinite loop
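The cycle above can be broken with a re-entrancy guard. The toy class below sketches that idea under my own assumptions (a simple count threshold and a `reflecting` flag); the actual memory class and its trigger condition differ.

```python
from typing import List


class ToyMemory:
    def __init__(self, threshold: int = 2) -> None:
        self.memories: List[str] = []
        self.threshold = threshold
        self.reflecting = False  # guard against re-entrant reflection

    def add_memory(self, text: str) -> None:
        self.memories.append(text)
        if len(self.memories) >= self.threshold and not self.reflecting:
            self.reflecting = True
            try:
                self.pause_to_reflect()
            finally:
                self.reflecting = False

    def pause_to_reflect(self) -> None:
        # Reflection stores an insight, which calls add_memory again.
        self.add_memory(f"insight over {len(self.memories)} memories")


memory = ToyMemory()
memory.add_memory("a")
memory.add_memory("b")
```

Without the `reflecting` check, the second `add_memory` call would recurse until Python raises `RecursionError`.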

This PR adds:

* Option to show a tqdm progress bar when using the file system blob loader

* Update pytest run configuration to be stricter

* Adding a new marker that checks that required pkgs exist

- Update the load_tools method to properly accept `callbacks` arguments.

- Add a deprecation warning when `callback_manager` is passed

- Add two unit tests to check the deprecation warning is raised and to

confirm the callback is passed through.

Closes issue #4096
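The deprecation path can be sketched as follows. `load_tools_sketch` is a hypothetical simplification that returns plain dicts rather than real tools; the warning text is illustrative.

```python
import warnings
from typing import Any, List, Optional


def load_tools_sketch(
    names: List[str], callbacks: Optional[list] = None, **kwargs: Any
) -> List[dict]:
    if "callback_manager" in kwargs:
        warnings.warn(
            "callback_manager is deprecated; pass `callbacks` instead.",
            DeprecationWarning,
            stacklevel=2,
        )
        legacy = kwargs.pop("callback_manager")
        # An explicit `callbacks` argument wins over the deprecated one.
        callbacks = callbacks or legacy
    return [{"name": name, "callbacks": callbacks} for name in names]
```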

This commit adds support for passing binary_location to the SeleniumURLLoader when creating Chrome or Firefox web drivers.

This allows users to specify the Browser binary location which is required when deploying to services such as Heroku

This change also includes updated documentation and type hints to reflect the new binary_location parameter and its usage.

fixes #4304

Today, when running a chain without any arguments, the raised ValueError

incorrectly specifies that user provided "both positional arguments and

keyword arguments".

This PR adds a more accurate error in that case.
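Roughly, the argument check distinguishes three failure cases instead of two. The function below is a sketch with hypothetical messages, not the exact wording used in `Chain.run`.

```python
def run_sketch(*args, **kwargs):
    """Validate how inputs were supplied before running."""
    if args and kwargs:
        raise ValueError("supply either positional or keyword arguments, not both")
    if not args and not kwargs:
        # Previously this case fell through to the "both" error above.
        raise ValueError("run was called without any arguments")
    if args:
        if len(args) != 1:
            raise ValueError("run supports only one positional argument")
        return args[0]
    return kwargs
```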

Related: #4028, I opened a new PR because (1) I was unable to unstage

mistakenly committed files (I'm not familiar with git enough to resolve

this issue), (2) I felt closing the original PR and opening a new PR

would be more appropriate if I changed the class name.

This PR creates HumanInputLLM (HumanLLM in #4028), a simple LLM wrapper class that returns user input as the response. I also added a simple Jupyter notebook on how and why to use this LLM wrapper. In the notebook, I go over how to use the wrapper and show an example of testing `WikipediaQueryRun` using HumanInputLLM.

I believe this LLM wrapper will be useful especially for debugging, educational, or testing purposes.
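The core idea fits in a few lines. `HumanInputLLMSketch` is a hypothetical simplification that does not subclass the langchain `LLM` base class; the injectable `input_func` is my addition so the example can run without a terminal.

```python
from typing import Callable


class HumanInputLLMSketch:
    """Returns whatever a human types as the 'model' response."""

    def __init__(self, input_func: Callable[[str], str] = input) -> None:
        self.input_func = input_func

    def __call__(self, prompt: str) -> str:
        print(prompt)  # show the prompt the chain or agent produced
        return self.input_func("Your response: ")


# Scripted input stands in for a person at the keyboard.
scripted = iter(["Paris"])
llm = HumanInputLLMSketch(input_func=lambda _: next(scripted))
answer = llm("What is the capital of France?")
```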

- Added the `Wikipedia` document loader. It is based on the existing `utilities/WikipediaAPIWrapper`
- Added the corresponding unit tests and an example notebook
- Sorted the list of classes in `__init__`

- made notebooks consistent: titles, service/format descriptions.

- corrected short names to full names, for example, `Word` -> `Microsoft

Word`

- added missed descriptions

- renamed notebook files to make ToC correctly sorted

Hello

1) Passing `embedding_function` as a callable seems to be outdated and

the common interface is to pass `Embeddings` instance

2) At the moment `Qdrant.add_texts` is designed to be used with

`embeddings.embed_query`, which is 1) slow 2) causes ambiguity due to 1.

It should be used with `embeddings.embed_documents`

This PR solves both problems and also provides some new tests

- Update the RunCreate object to work with recent changes

- Add optional Example ID to the tracer

- Adjust default persist_session behavior to attempt to load the session

if it exists

- Raise more useful HTTP errors for logging

- Add unit testing

- Fix the default ID to be a UUID for v2 tracer sessions

Broken out from the big draft here:

https://github.com/hwchase17/langchain/pull/4061

- confirm creation

- confirm functionality with a simple dimension check.

The test currently calls the OpenAI API directly, but I learned from @vowelparrot that we're caching the requests, so it's not that expensive. I also found that we call the OpenAI API in other integration tests. Please let me know if there is any concern about real external API calls; I can alternatively make a fake LLM for this test. Thanks

This implements a loader of text passages in JSON format. The `jq`

syntax is used to define a schema for accessing the relevant contents

from the JSON file. This requires dependency on the `jq` package:

https://pypi.org/project/jq/.

---------

Signed-off-by: Aivin V. Solatorio <avsolatorio@gmail.com>

This commit adds support for passing additional arguments to the `SeleniumURLLoader` when creating Chrome or Firefox web drivers.

Previously, only a few arguments such as `headless` could be passed in.

With this change, users can pass any additional arguments they need as a

list of strings using the `arguments` parameter.

The `arguments` parameter allows users to configure the driver with any

options that are available for that particular browser. For example,

users can now pass custom `user_agent` strings or `proxy` settings using

this parameter.

This change also includes updated documentation and type hints to

reflect the new `arguments` parameter and its usage.

fixes #4120

This PR updates the `message_line_regex` used by `WhatsAppChatLoader` to support different date-time formats used in WhatsApp chat exports; resolves #4153.

The new regex handles the following input formats:

```terminal

[05.05.23, 15:48:11] James: Hi here

[11/8/21, 9:41:32 AM] User name: Message 123

1/23/23, 3:19 AM - User 2: Bye!

1/23/23, 3:22_AM - User 1: And let me know if anything changes

```

Tests have been added to verify that the loader works correctly with all

formats.
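For illustration, here is one regex that accepts the four sample lines above. It is a sketch written for this description, not the loader's actual `message_line_regex`, and the group names are my own.

```python
import re

MESSAGE_LINE = re.compile(
    r"""
    \[?                                        # optional opening bracket
    (?P<date>\d{1,2}[/.]\d{1,2}[/.]\d{2,4})    # 05.05.23 or 11/8/21
    ,\s
    (?P<time>\d{1,2}:\d{2}(?::\d{2})?          # 15:48:11 or 3:19
        (?:[\s_](?:AM|PM))?)                   # optional AM/PM marker
    \]?                                        # optional closing bracket
    [\s-]*                                     # separator before the sender
    (?P<sender>[^:]+?)
    :\s
    (?P<text>.+)
    """,
    re.VERBOSE | re.IGNORECASE,
)

samples = [
    "[05.05.23, 15:48:11] James: Hi here",
    "[11/8/21, 9:41:32 AM] User name: Message 123",
    "1/23/23, 3:19 AM - User 2: Bye!",
    "1/23/23, 3:22_AM - User 1: And let me know if anything changes",
]
parsed = [MESSAGE_LINE.match(line).groupdict() for line in samples]
```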

expand is not an allowed parameter for the method

confluence.get_all_pages_by_label, since it doesn't return the body of

the text but just metadata of documents

Co-authored-by: Andrea Biondo <a.biondo@reply.it>

The forward ref annotations don't get updated if we only import with type checking

---------

Co-authored-by: Abhinav Verma <abhinav_win12@yahoo.co.in>

`run_manager` was not being passed downstream. Not sure if this was a

deliberate choice but it seems like it broke many agent callbacks like

`agent_action` and `agent_finish`. This fix needs a proper review.

Co-authored-by: blob42 <spike@w530>

Bump threshold to 1.4 from 1.3. Change import to be compatible

Resolves #4142 and #4129

---------

Co-authored-by: ndaugreal <ndaugreal@gmail.com>

Co-authored-by: Jeremy Lopez <lopez86@users.noreply.github.com>

Having dev containers makes it easier, faster, and more secure to set up the dev environment for the repository.

The pull request consists of:

- .devcontainer folder with:
  - **devcontainer.json:** (minimal necessary vscode extensions and settings)
  - **docker-compose.yaml:** (could be modified to run necessary services as per need, e.g. vectordbs, databases)
  - **Dockerfile:** (non-root with dev tools)
- Changes to README:
  - added the Open in GitHub Codespaces badge
  - added the Open in dev container badge

Co-authored-by: Jinto Jose <129657162+jj701@users.noreply.github.com>

As of right now, when using functions like `max_marginal_relevance_search()` or `max_marginal_relevance_search_by_vector()`, the rest of the kwargs are not propagated to `self._search_helper()`. For example, a user cannot explicitly state the `distance_metric` they want to use when calling `max_marginal_relevance_search`.

If the library user has to decrease the `max_token_limit`, they would probably want to prune the summary buffer even though they haven't added any new messages.

Personally, I need this because I want to serialise the memory buffer object and save it to a database. When I load it, I may have reconfigured my code to use a shorter memory to save on tokens.
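A toy version of the idea: pruning becomes its own method so it can be called after the limit changes, not only when a message is saved. Everything here is a simplification under stated assumptions: words stand in for tokens, and string concatenation stands in for the LLM-written summary.

```python
from typing import List


class ToySummaryBuffer:
    """Counts whitespace-separated words as 'tokens' for illustration."""

    def __init__(self, max_token_limit: int) -> None:
        self.max_token_limit = max_token_limit
        self.buffer: List[str] = []
        self.summary = ""

    def _tokens(self) -> int:
        return sum(len(message.split()) for message in self.buffer)

    def prune(self) -> None:
        # Move the oldest messages into the summary until the buffer fits.
        pruned: List[str] = []
        while self.buffer and self._tokens() > self.max_token_limit:
            pruned.append(self.buffer.pop(0))
        if pruned:
            self.summary = " ".join(filter(None, [self.summary, *pruned]))

    def save_context(self, message: str) -> None:
        self.buffer.append(message)
        self.prune()


mem = ToySummaryBuffer(max_token_limit=10)
mem.save_context("one two three")
mem.save_context("four five six")

# Later, e.g. after reloading from a database with a tighter budget:
mem.max_token_limit = 3
mem.prune()
```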

In the example for creating a Python REPL tool under the Agent module,

the ".run" was omitted in the example. I believe this is required when

defining a Tool.

In the section `Get Message Completions from a Chat Model` of the quick start guide, the HumanMessage doesn't need to include `Translate this sentence from English to French.` when there is a system message.

Simplifying the HumanMessages in these examples better demonstrates the power of the LLM.

* implemented arun, results, and aresults. Reuses aiosession if

available.

* helper tools GoogleSerperRun and GoogleSerperResults

* support for Google Images, Places and News (examples given) and

filtering based on time (e.g. past hour)

* updated docs

The deeplake integration was/is very verbose (see e.g. [the documentation example](https://python.langchain.com/en/latest/use_cases/code/code-analysis-deeplake.html)) when loading or creating a deeplake dataset, with only limited options to dial down verbosity.

Additionally, the warning that a "Deep Lake Dataset already exists" was

confusing, as there is as far as I can tell no other way to load a

dataset.

This small PR changes that and introduces an explicit `verbose` argument

which is also passed to the deeplake library.

There should be minimal changes to the default output (the loading line is printed instead of warned, to make it consistent with `ds.summary()`, which also prints).

Google Scholar outputs a nice list of scientific and research articles

that use LangChain.

I added a link to the Google Scholar page to the `gallery` doc page

The method `confluence.get_all_pages_by_label` returns only metadata about documents with a certain label (such as page IDs, titles, ...). To return all documents with a certain label, we need to extract all page IDs for that label and then fetch the page contents by those IDs.

---------

Co-authored-by: Andrea Biondo <a.biondo@reply.it>

An incorrect data type error happened when executing `_construct_path` in `chain.py`, as follows:

```text
Error with message replace() argument 2 must be str, not int
```

The path is always a string, but the result of `args.pop(param, "")` is not guaranteed to be one.
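A minimal reproduction of the fix idea: coerce the popped value to `str` before substituting it into the path. The function signature below is a hypothetical simplification of `_construct_path`.

```python
def construct_path(path: str, args: dict, params: list) -> str:
    """Substitute `{param}` placeholders in the path with values from args."""
    for param in params:
        # str.replace requires a str; popped values may be ints such as IDs.
        path = path.replace(f"{{{param}}}", str(args.pop(param, "")))
    return path
```

With this coercion, `construct_path("/pets/{id}", {"id": 42}, ["id"])` yields `"/pets/42"` instead of raising a `TypeError`.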

This PR includes two main changes:

- Refactor the `TelegramChatLoader` and `FacebookChatLoader` classes by

removing the dependency on pandas and simplifying the message filtering

process.

- Add test cases for the `TelegramChatLoader` and `FacebookChatLoader` classes. These tests ensure that the classes correctly load and process the example chat data, providing better test coverage for this functionality.

The Blockchain Document Loader's default behavior is to return 100

tokens at a time which is the Alchemy API limit. The Document Loader

exposes a startToken that can be used for pagination against the API.

This enhancement includes an optional get_all_tokens param (default:

False) which will:

- Iterate over the Alchemy API until it receives all the tokens, and

return the tokens in a single call to the loader.

- Manage all/most tokenId formats (this can be an int, or a hex16 string with either no leading zeros or all of them). There aren't constraints as to how smart contracts can represent this value, but these three are the most common.

Note that a contract with 10,000 tokens will issue 100 calls to the

Alchemy API, and could take about a minute, which is why this param will

default to False. But I've been using the doc loader with these

utilities on the side, so figured it might make sense to build them in

for others to use.
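The pagination loop and the tokenId normalization can be sketched like this. `fetch_page` stands in for the Alchemy HTTP call and `fake_api` is made-up test data; neither reflects the real response format.

```python
from typing import Callable, List, Optional, Tuple

Page = Tuple[List[str], Optional[str]]  # (token ids, next start token)


def fetch_all_tokens(fetch_page: Callable[[Optional[str]], Page]) -> List[str]:
    """Follow pagination until the API stops returning a next start token."""
    tokens: List[str] = []
    start_token: Optional[str] = None
    while True:
        page, start_token = fetch_page(start_token)
        tokens.extend(page)
        if start_token is None:
            return tokens


def token_id_to_int(token_id: str) -> int:
    """Accept decimal strings and hex strings with any number of leading zeros."""
    return int(token_id, 16) if token_id.lower().startswith("0x") else int(token_id)


def fake_api(start: Optional[str]) -> Page:
    # Three tokens served two at a time; stands in for the real HTTP call.
    ids = ["0x01", "0x0000000000000002", "3"]
    begin = 0 if start is None else int(start)
    end = begin + 2
    return ids[begin:end], (str(end) if end < len(ids) else None)


all_tokens = fetch_all_tokens(fake_api)
as_ints = [token_id_to_int(t) for t in all_tokens]
```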

Single edit to: models/text_embedding/examples/openai.ipynb - Line 88:

changed from: "embeddings = OpenAIEmbeddings(model_name=\"ada\")" to

"embeddings = OpenAIEmbeddings()" as model_name is no longer part of the

OpenAIEmbeddings class.

@vowelparrot @hwchase17 Here is a new implementation of `acompress_documents` for `LLMChainExtractor` without changes to the sync version, as you suggested in #3587 / [Async Support for LLMChainExtractor](https://github.com/hwchase17/langchain/pull/3587).

I created a new PR to avoid cluttering history with reverted commits; I hope that is the right way.

Happy for any improvements/suggestions.

(PS: I also tried an alternative implementation with a nested helper function, like

```python
async def acompress_documents_old(
    self, documents: Sequence[Document], query: str
) -> Sequence[Document]:
    """Compress page content of raw documents."""

    async def _compress_concurrently(doc):
        _input = self.get_input(query, doc)
        output = await self.llm_chain.apredict_and_parse(**_input)
        return Document(page_content=output, metadata=doc.metadata)

    outputs = await asyncio.gather(*[_compress_concurrently(doc) for doc in documents])
    compressed_docs = list(filter(lambda x: len(x.page_content) > 0, outputs))
    return compressed_docs
```

But in the end I found the committed version to be more readable and more "canonical"; I hope you agree.)

Related to [this

issue.](https://github.com/hwchase17/langchain/issues/3655#issuecomment-1529415363)

The `Mapped` SQLAlchemy class is introduced in SQLAlchemy 1.4 but the

migration from 1.3 to 1.4 is quite challenging so, IMO, it's better to

keep backwards compatibility and not change the SQLAlchemy requirements

just because of type annotations.

This PR fixes the "SyntaxError: invalid escape sequence" error in the

pydantic.py file. The issue was caused by the backslashes in the regular

expression pattern being treated as escape characters. By using a raw

string literal for the regex pattern (e.g., r"\{.*\}"), this fix ensures

that backslashes are treated as literal characters, thus preventing the

error.
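A short demonstration of the raw-string form. The sample text and the `re.DOTALL` flag are assumptions for illustration; the flags in `pydantic.py` may differ.

```python
import re

# The raw-string prefix passes the backslashes through to the regex engine
# instead of letting Python treat `\{` as an (invalid) string escape.
JSON_OBJECT = re.compile(r"\{.*\}", re.DOTALL)

text = 'Here is the result: {"name": "Ada", "age": 36} Thanks!'
match = JSON_OBJECT.search(text)
json_str = match.group() if match else ""
```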

Co-authored-by: Tomer Levy <tomer.levy@tipalti.com>

Seems the pyllamacpp package is no longer the supported bindings from

gpt4all. Tested that this works locally.

Given that the older models weren't very performant, I think it's better

to migrate now without trying to include a lot of try / except blocks.

---------

Co-authored-by: Nissan Pow <npow@users.noreply.github.com>

Co-authored-by: Nissan Pow <pownissa@amazon.com>

### Summary

Adds `UnstructuredAPIFileLoader` and `UnstructuredAPIFileIOLoader`

that partition documents through the Unstructured API. Defaults to the

URL for the hosted Unstructured API, but can switch to a self-hosted or

locally running API using the `url` kwarg. Currently, the Unstructured

API is open and does not require an API key, but it will soon. A note was

added about that to the Unstructured ecosystem page.

### Testing

```python
from langchain.document_loaders import UnstructuredAPIFileIOLoader

filename = "fake-email.eml"

with open(filename, "rb") as f:
    loader = UnstructuredAPIFileIOLoader(file=f, file_filename=filename)
    docs = loader.load()

docs[0]
```

```python

from langchain.document_loaders import UnstructuredAPIFileLoader

filename = "fake-email.eml"

loader = UnstructuredAPIFileLoader(file_path=filename, mode="elements")

docs = loader.load()

docs[0]

```

- `ActionAgent` has a property called `allowed_tools`, which is declared

as `List`. It stores all provided tools that are available for use during

an agent action.

- This collection shouldn’t allow duplicates, so the original `List` datatype

doesn’t make sense. Each tool should be unique. Even if there are

variants (assuming in the future), they would be named differently in

`load_tools`.
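As a rough sketch of the idea, assuming a set of tool names is the intended replacement for the list: the helper `get_allowed_tools` below is hypothetical and is not the actual agent code.

```python
from typing import List, Set


def get_allowed_tools(tool_names: List[str]) -> Set[str]:
    """Hypothetical helper: collapse tool names so each appears once."""
    return set(tool_names)


# A duplicate name in the input does not produce a duplicate entry.
allowed = get_allowed_tools(["ddg-search", "llm-math", "ddg-search"])
print(sorted(allowed))
```

Membership checks such as `"llm-math" in allowed` behave the same as with a list, so callers that only test whether a tool is permitted would not need to change.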

Test:

- confirm the functionality in an example by initializing an agent with

a list of 2 tools and confirm everything works.

```python3
def test_agent_chain_chat_bot():
    from langchain.agents import load_tools
    from langchain.agents import initialize_agent
    from langchain.agents import AgentType
    from langchain.chat_models import ChatOpenAI
    from langchain.llms import OpenAI
    from langchain.utilities.duckduckgo_search import DuckDuckGoSearchAPIWrapper

    chat = ChatOpenAI(temperature=0)
    llm = OpenAI(temperature=0)
    tools = load_tools(["ddg-search", "llm-math"], llm=llm)

    agent = initialize_agent(tools, chat, agent=AgentType.CHAT_ZERO_SHOT_REACT_DESCRIPTION, verbose=True)
    agent.run("Who is Olivia Wilde's boyfriend? What is his current age raised to the 0.23 power?")

test_agent_chain_chat_bot()
```

Result:

<img width="863" alt="Screenshot 2023-05-01 at 7 58 11 PM"

src="https://user-images.githubusercontent.com/62768671/235572157-0937594c-ddfb-4760-acb2-aea4cacacd89.png">

Modified Modern Treasury and Stripe slightly so credentials don't have to

be passed in explicitly. Thanks @mattgmarcus for adding Modern Treasury!

---------

Co-authored-by: Matt Marcus <matt.g.marcus@gmail.com>

Haven't gotten to all of them, but this:

- Updates some of the tools notebooks to actually instantiate a tool

(many just show a 'utility' rather than a tool. More changes to come in

separate PR)

- Move the `Tool` and decorator definitions to `langchain/tools/base.py`

(but still export from `langchain.agents`)

- Add SceneXplain to the `load_tools()` function

- Add unit tests for public apis for the langchain.tools and langchain.agents modules

Move tool validation to each implementation of the Agent.

Another alternative would be to adjust the `_validate_tools()` signature

to accept the output parser (and format instructions) and add logic

there. Something like

`parser.outputs_structured_actions(format_instructions)`

But don't think that's needed right now.

History from Motorhead memory is returned in reversed order.

It should be Human: 1, AI: ..., Human: 2, AI: ...

```

You are a chatbot having a conversation with a human.

AI: I'm sorry, I'm still not sure what you're trying to communicate. Can you please provide more context or information?

Human: 3

AI: I'm sorry, I'm not sure what you mean by "1" and "2". Could you please clarify your request or question?

Human: 2

AI: Hello, how can I assist you today?

Human: 1

Human: 4

AI:

```

So I `reversed` the messages before putting them in `chat_memory`.
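A small sketch of that reordering, assuming the messages arrive newest first as the transcript suggests. The `(role, content)` tuples and their contents are made up for illustration and are not the Motorhead response format.

```python
from typing import List, Tuple

# Hypothetical messages in newest-first order.
newest_first: List[Tuple[str, str]] = [
    ("AI", "third reply"),
    ("Human", "3"),
    ("AI", "second reply"),
    ("Human", "2"),
    ("AI", "first reply"),
    ("Human", "1"),
]

# reversed() yields the items back to front, restoring chronological order
# without mutating the original list.
chronological = list(reversed(newest_first))

for role, content in chronological:
    print(f"{role}: {content}")
```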

The llm type of AzureOpenAI was previously set to the default, which is

openai. But since AzureOpenAI has a different API from openai, this creates

problems when saving and loading chains. This PR corrects the llm

type of AzureOpenAI to "azure"
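A simplified sketch of why the type string matters. The classes and the `serialize` helper below are stand-ins written for this example, and the assumption is that saving records the type string so that loading can pick the matching class.

```python
class BaseOpenAISketch:
    """Stand-in for the base OpenAI LLM class (not the real one)."""

    @property
    def _llm_type(self) -> str:
        return "openai"


class AzureOpenAISketch(BaseOpenAISketch):
    """Stand-in for AzureOpenAI, overriding the inherited type string."""

    @property
    def _llm_type(self) -> str:
        return "azure"


def serialize(llm: BaseOpenAISketch) -> dict:
    # Hypothetical save step: store the type so a loader can dispatch on it.
    return {"_type": llm._llm_type}


print(serialize(BaseOpenAISketch()))
print(serialize(AzureOpenAISketch()))
```

Without the override, both objects would serialize with the same type string and a loader could not tell them apart.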

Re: https://github.com/hwchase17/langchain/issues/3777

Copy pasting from the issue:

While working on https://github.com/hwchase17/langchain/issues/3722 I

have noticed that there might be a bug in the current implementation of

the OpenAI length safe embeddings in `_get_len_safe_embeddings`, which

before https://github.com/hwchase17/langchain/issues/3722 was actually

the **default implementation** regardless of the length of the context

(via https://github.com/hwchase17/langchain/pull/2330).

It appears the weights used are constant, equal to the length of the embedding

vector (1536), and NOT the number of tokens in the batch, as in the

reference implementation at

https://github.com/openai/openai-cookbook/blob/main/examples/Embedding_long_inputs.ipynb
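To make the difference concrete, here is a toy sketch in plain Python with two made-up 2-dimensional "embeddings" and made-up chunk lengths. The `weighted_average` helper is written for this example only and is not the langchain or cookbook code.

```python
from typing import List, Sequence


def weighted_average(
    vectors: Sequence[Sequence[float]], weights: Sequence[float]
) -> List[float]:
    """Component-wise weighted mean of equally sized vectors."""
    total = sum(weights)
    dim = len(vectors[0])
    return [
        sum(w * v[i] for v, w in zip(vectors, weights)) / total
        for i in range(dim)
    ]


# Toy data: the first chunk has 3 tokens, the second has 1.
chunk_embeddings = [[1.0, 0.0], [0.0, 1.0]]
chunk_lens = [3, 1]

# Weighting by token count, as the reference implementation does.
by_tokens = weighted_average(chunk_embeddings, chunk_lens)

# A constant weight per chunk (e.g. the vector length 1536) cancels out
# and reduces to a plain unweighted mean.
constant = weighted_average(chunk_embeddings, [1536, 1536])

print(by_tokens, constant)
```

With constant weights the short chunk counts as much as the long one, which is why the two results differ whenever chunk lengths are unequal.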

<hr>

Here's some debug info:

<img width="1094" alt="image"

src="https://user-images.githubusercontent.com/1419010/235286595-a8b55298-7830-45df-b9f7-d2a2ad0356e0.png">

<hr>

We can also validate this against the reference implementation:

<details>

<summary>Reference implementation (click to unroll)</summary>

This implementation is copy pasted from

https://github.com/openai/openai-cookbook/blob/main/examples/Embedding_long_inputs.ipynb

```py
import openai
import tiktoken
from itertools import islice
import numpy as np
from tenacity import retry, wait_random_exponential, stop_after_attempt, retry_if_not_exception_type

EMBEDDING_MODEL = 'text-embedding-ada-002'
EMBEDDING_CTX_LENGTH = 8191
EMBEDDING_ENCODING = 'cl100k_base'

# let's make sure to not retry on an invalid request, because that is what we want to demonstrate
@retry(wait=wait_random_exponential(min=1, max=20), stop=stop_after_attempt(6), retry=retry_if_not_exception_type(openai.InvalidRequestError))
def get_embedding(text_or_tokens, model=EMBEDDING_MODEL):
    return openai.Embedding.create(input=text_or_tokens, model=model)["data"][0]["embedding"]

def batched(iterable, n):
    """Batch data into tuples of length n. The last batch may be shorter."""
    # batched('ABCDEFG', 3) --> ABC DEF G
    if n < 1:
        raise ValueError('n must be at least one')
    it = iter(iterable)
    while (batch := tuple(islice(it, n))):
        yield batch

def chunked_tokens(text, encoding_name, chunk_length):
    encoding = tiktoken.get_encoding(encoding_name)
    tokens = encoding.encode(text)
    chunks_iterator = batched(tokens, chunk_length)
    yield from chunks_iterator

def reference_safe_get_embedding(text, model=EMBEDDING_MODEL, max_tokens=EMBEDDING_CTX_LENGTH, encoding_name=EMBEDDING_ENCODING, average=True):
    chunk_embeddings = []
    chunk_lens = []
    for chunk in chunked_tokens(text, encoding_name=encoding_name, chunk_length=max_tokens):
        chunk_embeddings.append(get_embedding(chunk, model=model))
        chunk_lens.append(len(chunk))

    if average:
        # weight each chunk embedding by its token count
        chunk_embeddings = np.average(chunk_embeddings, axis=0, weights=chunk_lens)
        chunk_embeddings = chunk_embeddings / np.linalg.norm(chunk_embeddings)  # normalizes length to 1
        chunk_embeddings = chunk_embeddings.tolist()
    return chunk_embeddings
```

</details>

```py

long_text = 'foo bar' * 5000

reference_safe_get_embedding(long_text, average=True)[:10]

# Here's the first 10 floats from the reference embeddings:

[0.004407593824276758,