- Fixed the docs, which were pointing to the wrong module

- Added an explanation of how to find the necessary parameters

- Added chat-based codegen example w/ retrievers

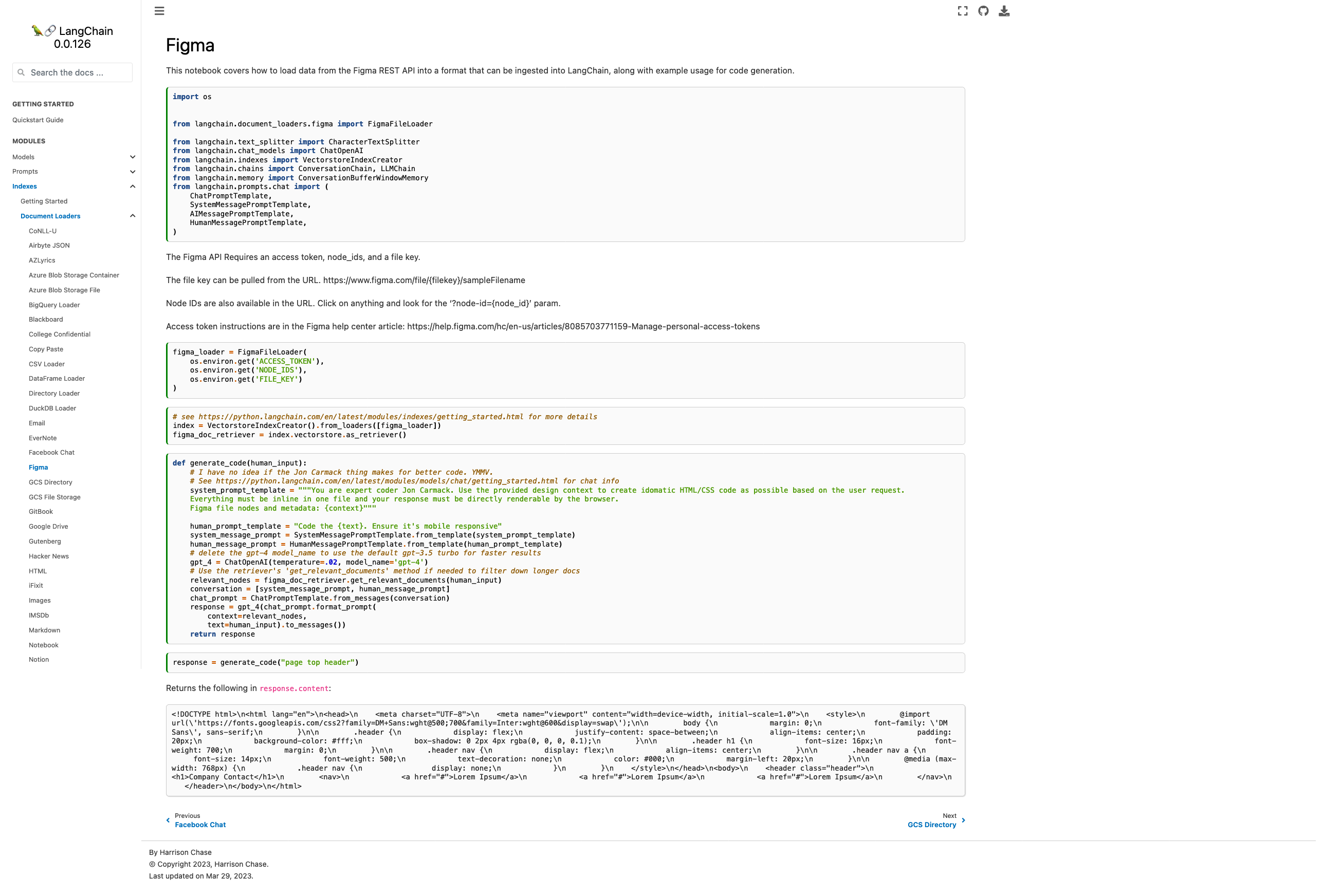

Picture of the new page:

Please let me know if you'd like any tweaks! I wasn't sure whether the example was too heavy for the page, but decided "hey, I probably would want to see it" and so included it.

Co-authored-by: maxtheman <max@maxs-mbp.lan>

"This notebook covers how to load data from the Figma REST API into a format that can be ingested into LangChain."

"This notebook covers how to load data from the Figma REST API into a format that can be ingested into LangChain, along with example usage for code generation."

" # I have no idea if the John Carmack thing makes for better code. YMMV.\n",

" # See https://python.langchain.com/en/latest/modules/models/chat/getting_started.html for chat info\n",

" system_prompt_template = \"\"\"You are expert coder John Carmack. Use the provided design context to create HTML/CSS code that is as idiomatic as possible based on the user request.\n",

" Everything must be inline in one file and your response must be directly renderable by the browser.\n",

" Figma file nodes and metadata: {context}\"\"\"\n",

"\n",

" human_prompt_template = \"Code the {text}. Ensure it's mobile responsive\"\n",
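The two templates above carry the placeholders `{context}` and `{text}`. As a rough illustration of how they get filled, here is a minimal sketch that uses plain `str.format` instead of LangChain's chat prompt classes. The `build_messages` helper, the role names, the sample values, and the condensed system prompt are all assumptions made for illustration; the notebook itself presumably does this step through LangChain.

```python
from __future__ import annotations

# Condensed copy of the system prompt from the notebook (hypothetical shortening).
SYSTEM_PROMPT_TEMPLATE = (
    "Use the provided design context to create HTML/CSS code based on the user request.\n"
    "Everything must be inline in one file and your response must be directly "
    "renderable by the browser.\n"
    "Figma file nodes and metadata: {context}"
)
HUMAN_PROMPT_TEMPLATE = "Code the {text}. Ensure it's mobile responsive"


def build_messages(context: str, text: str) -> list[dict[str, str]]:
    """Fill both templates and return them as a system/user message pair.

    Hypothetical helper: `context` stands in for the retrieved Figma nodes,
    `text` for the user's description of what to build.
    """
    return [
        {"role": "system", "content": SYSTEM_PROMPT_TEMPLATE.format(context=context)},
        {"role": "user", "content": HUMAN_PROMPT_TEMPLATE.format(text=text)},
    ]


# Sample values are made up; real context would come from the Figma loader.
messages = build_messages(
    context="<node id='1:2' name='Header'/>",
    text="page header",
)
```

Because the Figma data is passed in as a value and is not part of the template string, any braces it contains are left untouched by `str.format`.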