# Add Managed Motorhead

This change enables `MotorheadMemory` to use Metal's managed version of Motorhead. Passing in an `api_key` and a `client_id` makes it hit the managed URL and access the memory API on Metal.

Twitter: [@softboyjimbo](https://twitter.com/softboyjimbo)

## Who can review?

Community members can review the PR once tests pass. Tag maintainers/contributors who might be interested:

@dev2049 @hwchase17

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Skips creating boto client if passed in constructor

The current LLM and Embeddings classes always create a new boto client, even if one is passed to the constructor. This blocks users who need to pass in an externally created boto client, for example when using SSO authentication.
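The intended behavior can be sketched roughly as follows. This is a minimal illustration of the "reuse the injected client, otherwise create one" pattern, not the actual LangChain code: `resolve_client` and `default_factory` are hypothetical names, and the factory stands in for whatever call would normally build a boto client.

```python
from typing import Any, Callable, Optional


def resolve_client(client: Optional[Any], default_factory: Callable[[], Any]) -> Any:
    """Return the caller-supplied client, creating one only when none was given."""
    if client is not None:
        # An externally created client (for example, one built from an SSO
        # session) is used as-is and never replaced.
        return client
    # Hypothetical fallback: build a fresh client.
    return default_factory()


# Stand-in objects so the sketch runs without AWS credentials.
external_client = object()
chosen = resolve_client(external_client, default_factory=object)
print(chosen is external_client)
```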

## Who can review?

@hwchase17

@jasondotparse

@rsgrewal-aws


# Added DeepLearning.AI course link

## Who can review?

Community members can review the PR once tests pass. Tag maintainers/contributors who might be interested:

not @hwchase17 - hehe

# Added support for downloading the GPT4All model if it does not exist

I've added the class attribute `allow_download` to the GPT4All class. By default, `allow_download` is set to False.

## Changes Made

- Added a new attribute `allow_download` to the GPT4All class.

- Updated the `validate_environment` method to pass the `allow_download` parameter to the GPT4All model constructor.

## Context

This change provides more control over model downloading in the GPT4All class. Previously, if the model file was not found in the cache directory `~/.cache/gpt4all/`, the package returned the error "Failed to retrieve model (type=value_error)". Now, if `allow_download` is set to True, the wrapper uses the GPT4All package to download the model. Users can therefore choose whether the wrapper is allowed to download the model.
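The control flow described above can be sketched as below. This is a simplified illustration under stated assumptions, not the wrapper's real code: `ensure_model` and its `download` callback are hypothetical names, and the callback stands in for the download performed by the GPT4All package.

```python
from pathlib import Path
from typing import Callable


def ensure_model(path: Path, download: Callable[[Path], None],
                 allow_download: bool = False) -> Path:
    """Return `path` if the model file exists; optionally download it first."""
    if path.exists():
        return path
    if not allow_download:
        # Mirrors the default behavior: a missing file is an error.
        raise ValueError(f"Failed to retrieve model: {path} not found")
    # Hypothetical downloader supplied by the caller.
    download(path)
    return path
```

With `allow_download` left at its default of False, a missing file still raises an error, so existing callers see no change in behavior.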

## Dependencies

There are no new dependencies introduced by this change. It only utilizes existing functionality provided by the GPT4All package.

## Testing

Since this is a minor change to the existing behavior, the existing test suite for the GPT4All package should cover this scenario.

Co-authored-by: Vokturz <victornavarrrokp47@gmail.com>

# Bedrock LLM and Embeddings

This PR adds a new LLM class and a new Embeddings class for the [Bedrock](https://aws.amazon.com/bedrock) service. It also includes example notebooks that use the LLM class in a conversation chain and the Embeddings class to create embeddings for a query and a document.

**Note**: AWS is doing a private release of the Bedrock service on 05/31/2023; users need to request access and be added to an allowlist in order to start using the Bedrock models and embeddings. Please use the [Bedrock Home Page](https://aws.amazon.com/bedrock) to request access and to learn more about the models available in Bedrock.


# Support Qdrant filters

Qdrant has an [extensive filtering system](https://qdrant.tech/documentation/concepts/filtering/) with rich type support. This PR makes it possible to use the filters in Langchain by passing an additional param to both the `similarity_search_with_score` and `similarity_search` methods.

## Who can review?

@dev2049 @hwchase17

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# SQLite-backed Entity Memory

Following the initiative of https://github.com/hwchase17/langchain/pull/2397, I think it would be helpful to be able to persist Entity Memory on disk by default.

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

This PR adds a new method, `from_es_connection`, to the `ElasticsearchEmbeddings` class, allowing users to use Elasticsearch clusters outside of Elastic Cloud.

Users can create an Elasticsearch client object and pass it to the new method. The returned object is identical to the one returned by calling `from_credentials`.

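```python
from elasticsearch import Elasticsearch
from langchain.embeddings import ElasticsearchEmbeddings

# Placeholder: replace with the ID of the model deployed in your cluster
model_id = "your_model_id"

# Create Elasticsearch connection
es_connection = Elasticsearch(
    hosts=['https://es_cluster_url:port'],
    basic_auth=('user', 'password')
)

# Instantiate ElasticsearchEmbeddings using es_connection
embeddings = ElasticsearchEmbeddings.from_es_connection(
    model_id,
    es_connection,
)
```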

I also added examples to the Elasticsearch Jupyter notebook.

Fixes https://github.com/hwchase17/langchain/issues/5239

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Added support for modifying the number of threads in the GPT4All model

I have added the capability to modify the number of threads used by the GPT4All model. This allows users to adjust the model's parallel processing capabilities based on their specific requirements.

## Changes Made

- Updated the `validate_environment` method to set the number of threads for the GPT4All model using the `values["n_threads"]` parameter from the `GPT4All` class constructor.

## Context

This is useful in scenarios where users want to optimize the model's performance by leveraging multi-threading capabilities.

Please note that the `n_threads` parameter was already included in the `GPT4All` class constructor but was previously unused. This change ensures that the specified number of threads is actually used by the model.

## Dependencies

There are no new dependencies introduced by this change. It only utilizes existing functionality provided by the GPT4All package.

## Testing

Since this is a minor change, testing is not required.

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

When the LLM outputs 'yes' or 'no', `BooleanOutputParser` can now parse it to `True` or `False`. This fixes the ValueError raised in `parse()`.
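A minimal sketch of this kind of tolerant parsing is shown below. It is a standalone illustration, not the `BooleanOutputParser` implementation: the function name `parse_boolean` and the default `true_val`/`false_val` values are assumptions made for the example.

```python
def parse_boolean(text: str, true_val: str = "YES", false_val: str = "NO") -> bool:
    """Map an LLM's yes/no answer to a bool, ignoring case and whitespace."""
    cleaned = text.strip().upper()
    if cleaned == true_val.upper():
        return True
    if cleaned == false_val.upper():
        return False
    # Anything else is still rejected explicitly.
    raise ValueError(f"Expected {true_val} or {false_val}, got {text!r}")


print(parse_boolean(" yes\n"), parse_boolean("No"))
```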

<!--
When `BooleanOutputParser` is used in chain_filter.py and the LLM outputs 'yes' or 'no', the `parse` function throws a ValueError.
-->

Fixes #5396 (https://github.com/hwchase17/langchain/issues/5396)

---------

Co-authored-by: gaofeng27692 <gaofeng27692@hundsun.com>

# Adds ability to specify credentials when using Google BigQuery as a data loader

Fixes #5465. Adds the ability to set credentials, which must be of the `google.auth.credentials.Credentials` type. This argument is optional and defaults to `None`.

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Add maximal relevance search to SKLearnVectorStore

This PR implements maximal relevance search in SKLearnVectorStore.

Twitter handle: jtolgyesi (I also submitted the original implementation of SKLearnVectorStore)

## Before submitting

Unit tests are included.

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

Update the [psychicapi](https://pypi.org/project/psychicapi/) Python package dependency to the latest version, 0.5. The newest version addresses breaking changes in the Psychic HTTP API.

# Add batching to Qdrant

Several people requested a batching mechanism for uploading data to Qdrant. This matters because there are limits on the maximum size of the request payload, and without batching implemented in Langchain, users need to implement it on their own. This PR exposes a new optional `batch_size` parameter, so all the documents/texts are loaded in batches of the expected size (64 by default).

The integration tests of Qdrant are extended to cover two cases:

1. Documents are sent in separate batches.

2. All the documents are sent in a single request.
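The batching idea itself can be sketched in a few lines. This is a generic illustration using only the standard library, not the code added to the Qdrant integration: the helper name `batched` is hypothetical, and only the default of 64 is taken from the description above.

```python
from itertools import islice
from typing import Iterable, Iterator, List, TypeVar

T = TypeVar("T")


def batched(items: Iterable[T], batch_size: int = 64) -> Iterator[List[T]]:
    """Yield consecutive lists of at most `batch_size` items."""
    if batch_size < 1:
        raise ValueError("batch_size must be at least 1")
    iterator = iter(items)
    while True:
        batch = list(islice(iterator, batch_size))
        if not batch:
            return
        yield batch


texts = [f"doc {i}" for i in range(150)]
print([len(batch) for batch in batched(texts)])  # [64, 64, 22]
```

The two integration-test cases correspond to an input larger than `batch_size` (several batches) and one that fits within it (a single batch).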

# Added Async _acall to FakeListLLM

FakeListLLM is handy when unit testing apps built with langchain. This change allows FakeListLLM to be used inside concurrent code with [asyncio](https://docs.python.org/3/library/asyncio.html).

I also updated the docstring, which was out of date.
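To illustrate why an async entry point is useful in tests, here is a small self-contained sketch. `FakeListModel` is a hypothetical stand-in written for this example, not the real `FakeListLLM` class; it only mimics the idea of returning canned responses in order through both a sync and an async method.

```python
import asyncio
from typing import List


class FakeListModel:
    """Hypothetical stand-in that returns canned responses in order."""

    def __init__(self, responses: List[str]) -> None:
        self.responses = responses
        self.i = 0

    def _call(self, prompt: str) -> str:
        response = self.responses[self.i]
        self.i += 1
        return response

    async def _acall(self, prompt: str) -> str:
        # Same behavior as _call, exposed as a coroutine.
        return self._call(prompt)


async def main() -> List[str]:
    llm = FakeListModel(["first", "second"])
    # Both calls are awaited concurrently via asyncio.gather.
    return list(await asyncio.gather(llm._acall("a"), llm._acall("b")))


print(asyncio.run(main()))
```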

## Who can review?

@hwchase17 - project lead

@agola11 - async

# Handles the edge scenario in which the action input is a well formed SQL query which ends with a quoted column

There may be a cleaner option here (or indeed other edge scenarios), but this seems to robustly determine whether the action input is likely to be a well-formed SQL query, in which case we don't want to arbitrarily trim off `"` characters.
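One way to picture the check is the sketch below. It is only an assumed heuristic for illustration (treating input that starts with `SELECT ` as a SQL query); the function name `clean_action_input` is hypothetical and the PR's actual condition may differ.

```python
def clean_action_input(action_input: str) -> str:
    """Strip wrapping quotes unless the input looks like a SQL query."""
    tool_input = action_input.strip(" ")
    # Assumed heuristic: a query starting with SELECT may legitimately end
    # with a quoted column name, so its trailing '"' must be kept.
    if not tool_input.upper().startswith("SELECT "):
        tool_input = tool_input.strip('"')
    return tool_input


print(clean_action_input('"weather in Paris"'))
print(clean_action_input('SELECT "id" FROM t ORDER BY "name"'))
```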

Fixes #5423

## Who can review?

Community members can review the PR once tests pass. Tag maintainers/contributors who might be interested:

For a quicker response, figure out the right person to tag with @

@hwchase17 - project lead

Agents / Tools / Toolkits

- @vowelparrot

# What does this PR do?

Bring support of `encode_kwargs` to `HuggingFaceInstructEmbeddings`, change the docstring example, and add a test to illustrate it with `normalize_embeddings`.

Fixes #3605

(Similar to #3914)

Use case:

```python
from langchain.embeddings import HuggingFaceInstructEmbeddings

model_name = "hkunlp/instructor-large"
model_kwargs = {'device': 'cpu'}
encode_kwargs = {'normalize_embeddings': True}
hf = HuggingFaceInstructEmbeddings(
    model_name=model_name,
    model_kwargs=model_kwargs,
    encode_kwargs=encode_kwargs
)
```

This removes duplicate code, presumably introduced by a cut-and-paste error, spotted while reviewing the code in `langchain/client/langchain.py`. The original code had back-to-back occurrences of the following code block:

```
response = self._get(
    path,
    params=params,
)
raise_for_status_with_text(response)
```

As the title says, I added more code splitters. The implementation is trivial, so I did not add separate tests for each splitter. Let me know if you have any concerns.

Fixes https://github.com/hwchase17/langchain/issues/5170

## Who can review?

Community members can review the PR once tests pass. Tag maintainers/contributors who might be interested:

@eyurtsev @hwchase17

---------

Signed-off-by: byhsu <byhsu@linkedin.com>

Co-authored-by: byhsu <byhsu@linkedin.com>

# Creates GitHubLoader (#5257)

GitHubLoader is a DocumentLoader that loads issues and PRs from GitHub.

Fixes #5257

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Added New Trello loader class and documentation

A simple loader on top of the py-trello wrapper. Given a board name, you can pull cards and tweak some field parameters on the load operation.

I included documentation and examples, plus unit test cases that use patch and a fixture for the py-trello client class.

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

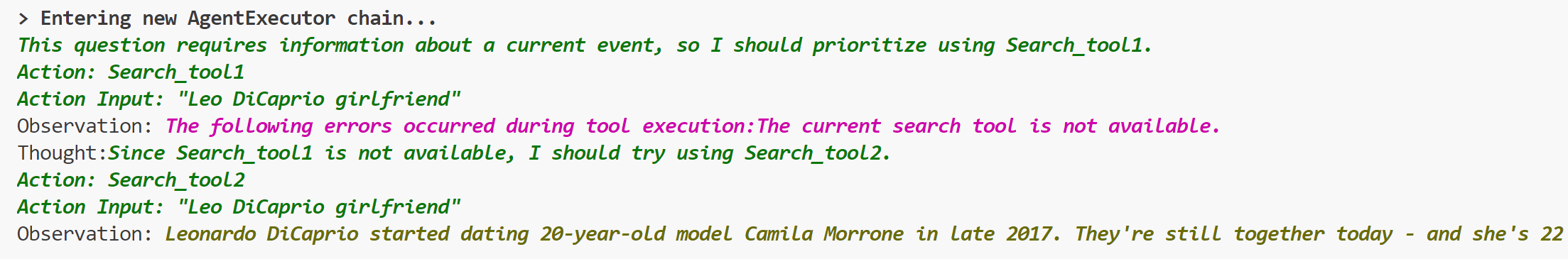

# Add ToolException that a tool can throw

This is an optional exception that a tool throws when an execution error occurs.

When this exception is thrown, the agent does not stop working. Instead, it handles the exception according to the tool's `handle_tool_error` variable, and the processing result is returned to the agent as the observation and printed in pink on the console. It can be used like this:

```python
from langchain.schema import ToolException
from langchain import LLMMathChain, SerpAPIWrapper, OpenAI
from langchain.agents import AgentType, initialize_agent
from langchain.chat_models import ChatOpenAI
from langchain.tools import BaseTool, StructuredTool, Tool, tool

llm = ChatOpenAI(temperature=0)
llm_math_chain = LLMMathChain(llm=llm, verbose=True)


class Error_tool:
    def run(self, s: str):
        # Always fails, to demonstrate how ToolException is handled.
        raise ToolException('The current search tool is not available.')


def handle_tool_error(error) -> str:
    # The returned string is passed back to the agent as the observation.
    return "The following errors occurred during tool execution:" + str(error)


search_tool1 = Error_tool()
search_tool2 = SerpAPIWrapper()

tools = [
    Tool.from_function(
        func=search_tool1.run,
        name="Search_tool1",
        description="useful for when you need to answer questions about current events.You should give priority to using it.",
        handle_tool_error=handle_tool_error,
    ),
    Tool.from_function(
        func=search_tool2.run,
        name="Search_tool2",
        description="useful for when you need to answer questions about current events",
        return_direct=True,
    ),
]

agent = initialize_agent(
    tools,
    llm,
    agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,
    verbose=True,
    handle_tool_errors=handle_tool_error,
)

agent.run("Who is Leo DiCaprio's girlfriend? What is her current age raised to the 0.43 power?")
```

## Who can review?

- @vowelparrot

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# docs: ecosystem/integrations update

This is the first in a series of `ecosystem/integrations` updates.

The ecosystem/integrations list is missing many integrations. I'm adding the missing integrations in a consistent format:

1. A description of the integrated system.

2. An `Installation and Setup` section with `pip install ...`, key setup, and other necessary settings.

3. Sections like `LLM`, `Text Embedding Models`, `Chat Models`... with links to the corresponding examples and imports of the used classes.

This PR covers the docs that are present in `docs/modules/models/text_embedding/examples` but missing from `ecosystem/integrations`. The next PRs will cover the next example sections.

Also updated `integrations.rst`: added the `Dependencies` section with a link to the packages used in LangChain.

## Who can review?

@hwchase17

@eyurtsev

@dev2049

# docs: ecosystem/integrations update 2

#5219 - part 1

The second part of this update (the parts are independent of each other, with no overlap):

- added diffbot.md

- updated confluence.ipynb; added confluence.md

- updated college_confidential.md

- updated openai.md

- added blackboard.md

- added bilibili.md

- added azure_blob_storage.md

- added azlyrics.md

- added aws_s3.md

## Who can review?

@hwchase17

@agola11

@vowelparrot

@dev2049

# Implemented appending arbitrary messages to the base chat message history, the in-memory and cosmos ones.


As discussed, this is an alternative to #4480: an `add_message` method is added that takes a `BaseMessage` as input, so that the user can control what is in the base message, such as kwargs.
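The shape of this API can be sketched as follows. The classes below are simplified stand-ins written for illustration (a plain `Message` dataclass instead of `BaseMessage`, and a hypothetical `InMemoryHistory`), so field and method names other than `add_message` are assumptions rather than the PR's code.

```python
from dataclasses import dataclass, field
from typing import Any, Dict, List


@dataclass
class Message:
    """Simplified stand-in for BaseMessage."""
    content: str
    role: str
    additional_kwargs: Dict[str, Any] = field(default_factory=dict)


class InMemoryHistory:
    """Hypothetical in-memory chat history built around add_message."""

    def __init__(self) -> None:
        self.messages: List[Message] = []

    def add_message(self, message: Message) -> None:
        # The caller builds the message, so extra kwargs are preserved.
        self.messages.append(message)

    def add_user_message(self, content: str) -> None:
        # Convenience wrapper expressed in terms of add_message.
        self.add_message(Message(content=content, role="human"))


history = InMemoryHistory()
history.add_user_message("hi")
history.add_message(Message("hello", "ai", {"source": "test"}))
print(len(history.messages))
```

Routing the convenience method through `add_message` keeps a single place where messages are stored, which is the design choice this sketch is meant to show.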


## Who can review?

Community members can review the PR once tests pass. Tag maintainers/contributors who might be interested:

@hwchase17

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>