Provide shared memory capability for the Agent.

Inspired by #1293.

## Problem

If both the Agent and its Tools (e.g., an LLMChain) use the same memory,
both of them will save the context. This is unwanted in some cases.

## Solution

Create a memory wrapper that ignores save and clear calls, thereby
preventing updates from the Agent or Tools.
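A minimal sketch of the wrapper idea, assuming a simplified memory interface. `SimpleMemory` and `ReadOnlyMemory` are hypothetical names used only for illustration; they are not the classes added by this PR.

```python
from typing import Any, Dict, List


class SimpleMemory:
    """Hypothetical stand-in for a conversation memory (not a LangChain class)."""

    def __init__(self) -> None:
        self.history: List[str] = []

    def load_memory_variables(self, inputs: Dict[str, Any]) -> Dict[str, str]:
        return {"history": "\n".join(self.history)}

    def save_context(self, inputs: Dict[str, Any], outputs: Dict[str, str]) -> None:
        self.history.append(f"Human: {inputs['input']}")
        self.history.append(f"AI: {outputs['output']}")

    def clear(self) -> None:
        self.history.clear()


class ReadOnlyMemory:
    """Wrapper that forwards reads but silently drops save and clear."""

    def __init__(self, memory: SimpleMemory) -> None:
        self.memory = memory

    def load_memory_variables(self, inputs: Dict[str, Any]) -> Dict[str, str]:
        return self.memory.load_memory_variables(inputs)

    def save_context(self, inputs: Dict[str, Any], outputs: Dict[str, str]) -> None:
        pass  # intentionally ignored so the wrapped memory is not updated

    def clear(self) -> None:
        pass  # intentionally ignored so the wrapped memory is not wiped


shared = SimpleMemory()
shared.save_context({"input": "hi"}, {"output": "hello"})  # the Agent writes once

tool_view = ReadOnlyMemory(shared)  # a Tool gets the read-only view
tool_view.save_context({"input": "x"}, {"output": "y"})  # dropped
tool_view.clear()  # dropped
```

With this arrangement only the owner of `shared` records context, while the Tool can still read the history.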

for https://github.com/hwchase17/langchain/issues/1582

I simply added `return_intermediate_steps` and changed the
`output_keys` function.

I added 2 simple tests: 1 for SQLDatabaseSequentialChain without the
intermediate steps and 1 with them.
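As a rough illustration of the kind of change described, here is a toy chain whose `output_keys` depends on a `return_intermediate_steps` flag. `ToySequentialChain`, the `"result"` key, and the `"intermediate_steps"` key name are assumptions made for this sketch, not the actual implementation.

```python
from typing import Any, Dict, List


class ToySequentialChain:
    """Hypothetical stand-in, not the real SQLDatabaseSequentialChain."""

    output_key = "result"

    def __init__(self, return_intermediate_steps: bool = False) -> None:
        self.return_intermediate_steps = return_intermediate_steps

    @property
    def output_keys(self) -> List[str]:
        # Advertise the extra key only when the caller asked for it.
        if self.return_intermediate_steps:
            return [self.output_key, "intermediate_steps"]
        return [self.output_key]

    def run(self, question: str) -> Dict[str, Any]:
        # Placeholder steps; a real chain would record its actual work here.
        steps = [f"choose tables for: {question}", "run query"]
        output: Dict[str, Any] = {self.output_key: "42"}
        if self.return_intermediate_steps:
            output["intermediate_steps"] = steps
        return output


plain = ToySequentialChain()
verbose = ToySequentialChain(return_intermediate_steps=True)
```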

Co-authored-by: brad-nemetski <115185478+brad-nemetski@users.noreply.github.com>

If a `persist_directory` param was set, chromadb would emit the warning
"No embedding_function provided, using default embedding function:
SentenceTransformerEmbeddingFunction" and would then fail with an
`Illegal instruction: 4` error.

This is on an MBP M1 (13.2.1) with Python 3.9.

I'm not entirely sure why that error happened, but when using
`get_or_create_collection` instead of `list_collections` on our end, the
error and warning go away and Chroma works as expected.

As an added bonus, this is cleaner and likely more efficient.

`list_collections` builds a new `Collection` instance for each
collection, and `Chroma` would then use only the `name` field to tell
whether the collection existed.
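The efficiency point can be sketched with a stand-in client that counts how many collection handles it constructs. `FakeClient` and `FakeCollection` are hypothetical and only mimic the method names mentioned above; they do not reproduce chromadb's internals or the crash itself.

```python
from typing import List


class FakeCollection:
    """Hypothetical stand-in for a collection handle."""

    def __init__(self, name: str) -> None:
        self.name = name


class FakeClient:
    """Hypothetical stand-in client that counts the handles it constructs."""

    def __init__(self, names: List[str]) -> None:
        self._names = list(names)
        self.built = 0

    def _build(self, name: str) -> FakeCollection:
        self.built += 1
        return FakeCollection(name)

    def list_collections(self) -> List[FakeCollection]:
        # One handle per existing collection, even if the caller wants one.
        return [self._build(n) for n in self._names]

    def get_collection(self, name: str) -> FakeCollection:
        if name not in self._names:
            raise ValueError(f"no collection named {name!r}")
        return self._build(name)

    def create_collection(self, name: str) -> FakeCollection:
        self._names.append(name)
        return self._build(name)

    def get_or_create_collection(self, name: str) -> FakeCollection:
        if name not in self._names:
            self._names.append(name)
        return self._build(name)


def lookup_via_listing(client: FakeClient, name: str) -> FakeCollection:
    # Older pattern: list everything and compare only the names.
    if name in [c.name for c in client.list_collections()]:
        return client.get_collection(name)
    return client.create_collection(name)


def lookup_direct(client: FakeClient, name: str) -> FakeCollection:
    # Newer pattern: a single call that covers both cases.
    return client.get_or_create_collection(name)


old_client = FakeClient(["a", "b", "c"])
new_client = FakeClient(["a", "b", "c"])
old_handle = lookup_via_listing(old_client, "b")
new_handle = lookup_direct(new_client, "b")
```

In this toy setup the listing approach constructs one handle per existing collection plus one more for the result, while the direct call constructs a single handle.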

I am redoing this PR, as I made a mistake by merging the latest changes
into my fork's branch, sorry. This added a bunch of commits to my
previous PR.

This fixes #1451.

Simple CSV document loader that wraps the `csv` reader and loads the
file as a single `Document` per row.

The column header is prepended to each value, which gives useful context
for embedding and semantic search.
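A minimal sketch of the row-per-document idea, assuming a `header: value` line format and a `row` metadata field. The `Document` dataclass and `load_csv_rows` helper here are hypothetical stand-ins, not the loader added by this PR.

```python
import csv
import io
from dataclasses import dataclass, field
from typing import Dict, List, TextIO


@dataclass
class Document:
    """Minimal stand-in for a document type (hypothetical)."""

    page_content: str
    metadata: Dict[str, object] = field(default_factory=dict)


def load_csv_rows(file_obj: TextIO) -> List[Document]:
    """Build one Document per CSV row, prefixing each value with its header."""
    docs: List[Document] = []
    for index, row in enumerate(csv.DictReader(file_obj)):
        content = "\n".join(f"{header}: {value}" for header, value in row.items())
        docs.append(Document(page_content=content, metadata={"row": index}))
    return docs


sample = io.StringIO("name,city\nAda,London\nLinus,Helsinki\n")
docs = load_csv_rows(sample)
```

Each resulting document carries the column names alongside the values, so an embedding of a single row still sees what each value means.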

The Python `wikipedia` package gives easy access for searching and
fetching pages from Wikipedia, see https://pypi.org/project/wikipedia/.
It can serve as an additional search and retrieval tool, like the
existing Google and SerpAPI helpers, for both chains and agents.

First of all, big kudos on what you guys are doing. LangChain is
enabling some really amazing use cases and I'm having lots of fun
playing around with it. It's really cool how many data sources it
supports out of the box.

However, I noticed some limitations of the current `GitbookLoader`,
which this PR addresses:

The main change is that I added an optional `base_url` arg to
`GitbookLoader`. This enables use cases where one wants to crawl docs
from a start page other than the index page, e.g., the following call
would scrape all pages that are reachable via nav bar links from
"https://docs.zenml.io/v/0.35.0":

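```python
from langchain.document_loaders import GitbookLoader

# Start crawling at a versioned page, but resolve links against the site root.
loader = GitbookLoader(
    web_page="https://docs.zenml.io/v/0.35.0",
    load_all_paths=True,
    base_url="https://docs.zenml.io",
)
```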

Previously, this would fail because relative links would be of the form
`/v/0.35.0/...` and the full link URLs would become
`docs.zenml.io/v/0.35.0/v/0.35.0/...`.

I also fixed another issue of the `GitbookLoader` where the link URLs
were constructed incorrectly as `website//relative_url` if the provided
`web_page` had a trailing slash.
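Both URL problems can be illustrated with a small helper. This is only a sketch: `resolve_link` is a hypothetical function and `getting-started` is a made-up page name, neither taken from the loader's code.

```python
def resolve_link(base_url: str, relative_path: str) -> str:
    """Join a base URL and a relative path with exactly one slash between them."""
    return base_url.rstrip("/") + "/" + relative_path.lstrip("/")


web_page = "https://docs.zenml.io/v/0.35.0"
base_url = "https://docs.zenml.io"
link = "/v/0.35.0/getting-started"  # hypothetical nav bar link

# Joining against the start page repeats the version prefix.
duplicated = resolve_link(web_page, link)

# Joining against the separate base URL gives the intended address.
resolved = resolve_link(base_url, link)

# A trailing slash on the base no longer produces a double slash.
resolved_with_slash = resolve_link(base_url + "/", link)
```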

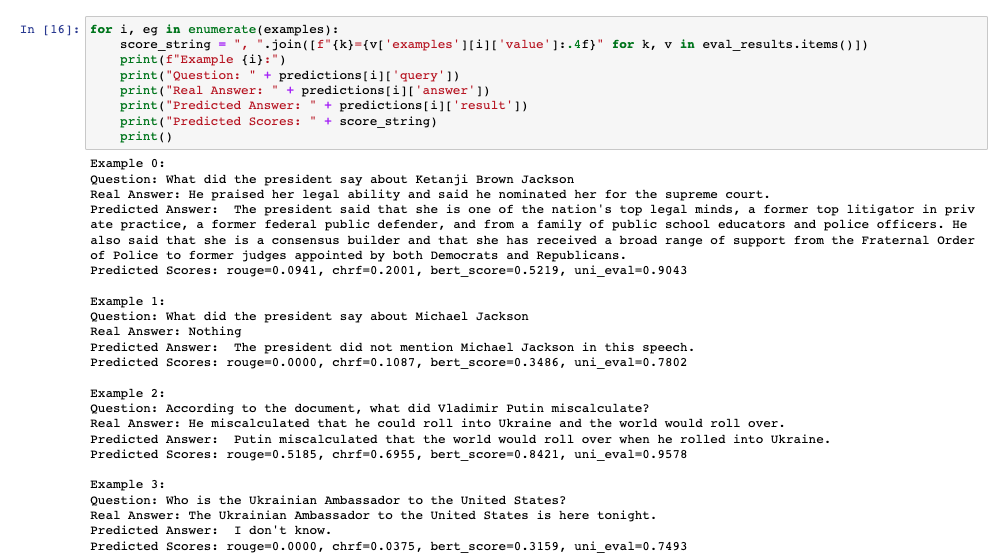

This PR adds additional evaluation metrics for data-augmented QA,

resulting in a report like this at the end of the notebook:

The score calculation is based on the
[Critique](https://docs.inspiredco.ai/critique/) toolkit, an API-based
toolkit (like OpenAI) that has minimal dependencies, so it should be
easy for people to run if they choose.

The code could further be simplified by actually adding a chain that
calls Critique directly, but that probably should be saved for another
PR if necessary. Any comments or change requests are welcome!

# Problem

The ChromaDB vector store only supported local connections. There was no
way to use a chromadb server.

# Fix

Added `client_settings` as a `Chroma` attribute.

# Usage

```python
from chromadb.config import Settings
from langchain.vectorstores import Chroma

# Point the client at a Chroma server (REST) instead of a local instance.
chroma_settings = Settings(
    chroma_api_impl="rest",
    chroma_server_host="localhost",
    chroma_server_http_port="80",
)
# `chunks`, `embeddings`, `metadatas`, and `COLLECTION_NAME` come from the caller.
docsearch = Chroma.from_documents(
    chunks, embeddings, metadatas=metadatas,
    client_settings=chroma_settings, collection_name=COLLECTION_NAME,
)
```