

neo4j-semantic-layer

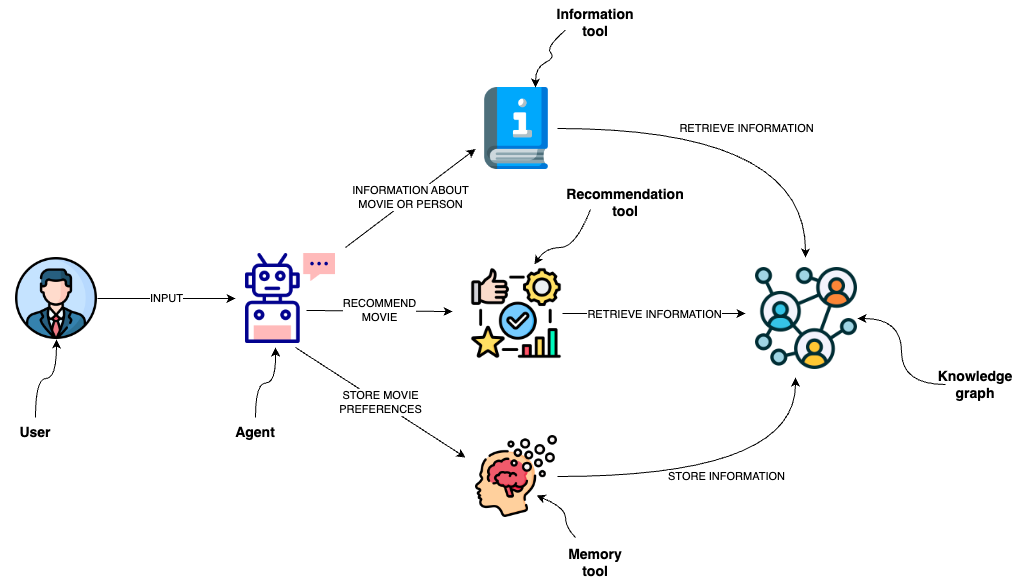

This template implements an agent that interacts with a graph database such as Neo4j through a semantic layer, using OpenAI function calling. The semantic layer gives the agent a set of tools for querying and updating the graph database based on the user's intent. Learn more about the semantic layer template in the corresponding blog post.

Tools

The agent utilizes several tools to interact with the Neo4j graph database effectively:

- Information tool:
  - Retrieves data about movies or individuals, ensuring the agent has access to the latest and most relevant information.
- Recommendation tool:
  - Provides movie recommendations based on user preferences and input.
- Memory tool:
  - Stores information about user preferences in the knowledge graph, allowing for a personalized experience over multiple interactions.

Environment Setup

You need to define the following environment variables:

OPENAI_API_KEY=<YOUR_OPENAI_API_KEY>

NEO4J_URI=<YOUR_NEO4J_URI>

NEO4J_USERNAME=<YOUR_NEO4J_USERNAME>

NEO4J_PASSWORD=<YOUR_NEO4J_PASSWORD>
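A quick way to catch a missing variable before starting the app is a small check like the sketch below. The helper name `missing_env_vars` is hypothetical and not part of the template; only the four variable names come from the list above.

```python
import os

# Variable names taken from the list above.
REQUIRED_VARS = (
    "OPENAI_API_KEY",
    "NEO4J_URI",
    "NEO4J_USERNAME",
    "NEO4J_PASSWORD",
)


def missing_env_vars(environ=None):
    """Return the required variables that are unset or empty.

    Hypothetical helper: pass a dict to check it instead of os.environ.
    """
    env = os.environ if environ is None else environ
    return [name for name in REQUIRED_VARS if not env.get(name)]
```

Calling `missing_env_vars()` with no arguments inspects the current process environment; an empty list means all four variables are set.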

Populating with data

To populate the database with an example movie dataset, run `python ingest.py`.

The script imports information about movies and their ratings by users.

Additionally, the script creates two fulltext indices, which are used to map information from user input to the database.
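To illustrate how a fulltext index can map free-form user input to database entities, here is a minimal sketch. It is not the template's actual code: the function name, the default fuzziness of `2`, and the parameter names in the Cypher string are assumptions. The only Neo4j feature it relies on is the built-in `db.index.fulltext.queryNodes` procedure, which takes Lucene query syntax.

```python
import re

# Characters with special meaning in Lucene query syntax; replaced with spaces.
_LUCENE_SPECIAL = re.compile(r'[+\-&|!(){}\[\]^"~*?:\\/]')

# Cypher that looks up candidate nodes through a fulltext index.
# The parameter names ($index, $query, $limit) are illustrative.
CANDIDATE_QUERY = (
    "CALL db.index.fulltext.queryNodes($index, $query) "
    "YIELD node, score "
    "RETURN node, score ORDER BY score DESC LIMIT $limit"
)


def build_fulltext_query(user_input: str, fuzziness: int = 2) -> str:
    """Turn user text into a fuzzy Lucene query (hypothetical helper).

    Each word gets a ~N suffix so small misspellings still match, and
    words are joined with AND so every word must be present.
    """
    cleaned = _LUCENE_SPECIAL.sub(" ", user_input)
    words = cleaned.split()
    return " AND ".join(f"{word}~{fuzziness}" for word in words)
```

The resulting string would be passed as the `query` parameter of `CANDIDATE_QUERY`, together with the name of one of the indices created by `ingest.py` as `index`. The index names are not listed here, so check the script for them.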

Usage

To use this package, you should first have the LangChain CLI installed:

pip install -U "langchain-cli[serve]"

To create a new LangChain project and install this as the only package, you can do:

langchain app new my-app --package neo4j-semantic-layer

If you want to add this to an existing project, you can just run:

langchain app add neo4j-semantic-layer

And add the following code to your server.py file:

from neo4j_semantic_layer import agent_executor as neo4j_semantic_agent

add_routes(app, neo4j_semantic_agent, path="/neo4j-semantic-layer")

(Optional) Let's now configure LangSmith. LangSmith will help us trace, monitor, and debug LangChain applications. You can sign up for LangSmith here. If you don't have access, you can skip this section.

export LANGCHAIN_TRACING_V2=true

export LANGCHAIN_API_KEY=<your-api-key>

export LANGCHAIN_PROJECT=<your-project> # if not specified, defaults to "default"

If you are inside this directory, then you can spin up a LangServe instance directly by:

langchain serve

This will start the FastAPI app with a server running locally at http://localhost:8000

We can see all templates at http://127.0.0.1:8000/docs

We can access the playground at http://127.0.0.1:8000/neo4j-semantic-layer/playground

We can access the template from code with:

from langserve.client import RemoteRunnable

runnable = RemoteRunnable("http://localhost:8000/neo4j-semantic-layer")
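Once the server is running, the remote runnable can be invoked like any other LangChain runnable. The sketch below assumes the agent accepts a dictionary with an `input` string and an optional `chat_history` list; check the input schema shown at http://127.0.0.1:8000/docs before relying on these field names. The helper names `build_payload` and `ask` are hypothetical.

```python
from typing import Any, Dict, List, Optional, Tuple


def build_payload(
    question: str,
    chat_history: Optional[List[Tuple[str, str]]] = None,
) -> Dict[str, Any]:
    """Build the request body; the field names are assumptions (see above)."""
    return {"input": question, "chat_history": list(chat_history or [])}


def ask(
    question: str,
    url: str = "http://localhost:8000/neo4j-semantic-layer",
) -> Any:
    """Send one question to the running LangServe instance."""
    # Imported lazily so this module loads even where langserve is not installed.
    from langserve.client import RemoteRunnable

    runnable = RemoteRunnable(url)
    return runnable.invoke(build_payload(question))
```

Calling `ask("Recommend a movie")` only works while the server started by `langchain serve` is reachable at the given URL.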