
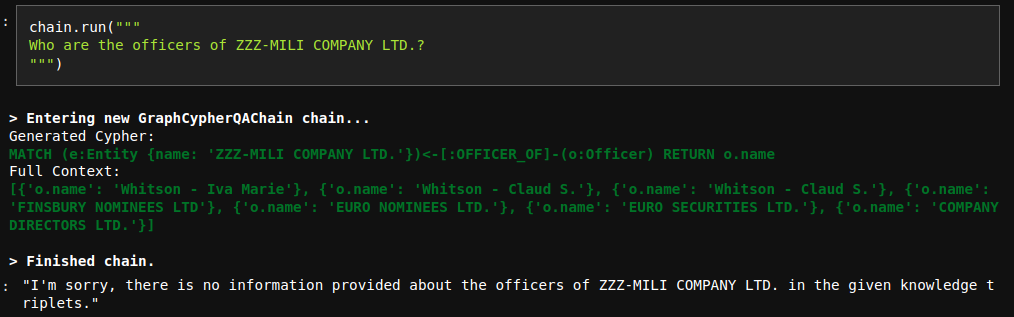

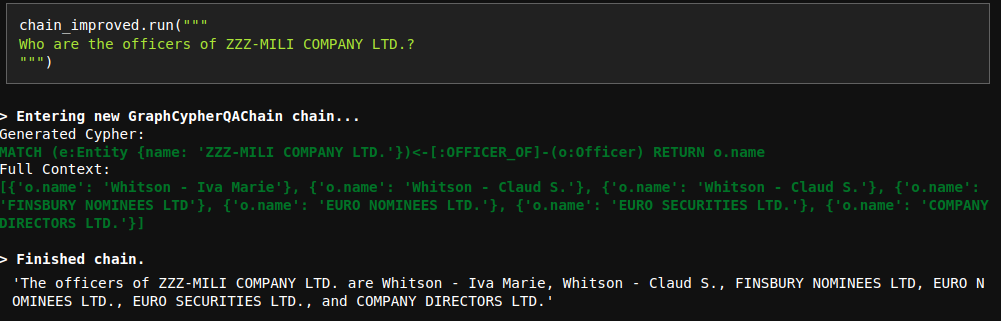

# Improve Cypher QA prompt

The current QA prompt is optimized for NetworkX answer generation, which returns all possible triples. Cypher search, however, is more focused and doesn't necessarily return all of the context information. For that reason, the model sometimes refuses to generate an answer even though the information is provided.

To fix this issue, I have updated the prompt. Interestingly, I tried many variations with fewer instructions and they didn't work properly, but the current fix works nicely.
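To make the idea concrete, here is a minimal sketch of a QA prompt that tells the model to treat the query result as authoritative. The wording, the `HYPOTHETICAL_QA_TEMPLATE` name, and the `build_qa_prompt` helper are assumptions made for illustration; this is not the text of `CYPHER_QA_PROMPT`. It uses plain `str.format` rather than LangChain's prompt classes so that it runs without dependencies.

```python
from typing import Any, Dict, List

# Hypothetical prompt wording for illustration only; NOT the actual CYPHER_QA_PROMPT.
HYPOTHETICAL_QA_TEMPLATE = """You are an assistant that turns database results into a readable answer.
The information section contains the result of a Cypher query and is authoritative.
Never doubt it or try to correct it using your own knowledge.
If the information is empty, say that you don't know the answer.

Information:
{context}

Question: {question}
Helpful Answer:"""


def build_qa_prompt(question: str, context: List[Dict[str, Any]]) -> str:
    """Fill the template with the question and the raw query result."""
    return HYPOTHETICAL_QA_TEMPLATE.format(question=question, context=context)


if __name__ == "__main__":
    # A focused Cypher result (made-up data): one row, no surrounding triples.
    rows = [{"name": "Tom Cruise"}]
    print(build_qa_prompt("Who acted in Top Gun?", rows))
```

The point of the explicit instructions is that a focused Cypher result often looks incomplete to the model, so the prompt has to state that the result alone is sufficient grounds for an answer.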

91 lines

2.9 KiB

Python


"""Question answering over a graph."""

|

|

from __future__ import annotations

|

|

|

|

from typing import Any, Dict, List, Optional

|

|

|

|

from pydantic import Field

|

|

|

|

from langchain.base_language import BaseLanguageModel

|

|

from langchain.callbacks.manager import CallbackManagerForChainRun

|

|

from langchain.chains.base import Chain

|

|

from langchain.chains.graph_qa.prompts import CYPHER_GENERATION_PROMPT, CYPHER_QA_PROMPT

|

|

from langchain.chains.llm import LLMChain

|

|

from langchain.graphs.neo4j_graph import Neo4jGraph

|

|

from langchain.prompts.base import BasePromptTemplate

|

|

|

|

|

|

class GraphCypherQAChain(Chain):

|

|

"""Chain for question-answering against a graph by generating Cypher statements."""

|

|

|

|

graph: Neo4jGraph = Field(exclude=True)

|

|

cypher_generation_chain: LLMChain

|

|

qa_chain: LLMChain

|

|

input_key: str = "query" #: :meta private:

|

|

output_key: str = "result" #: :meta private:

|

|

|

|

@property

|

|

def input_keys(self) -> List[str]:

|

|

"""Return the input keys.

|

|

|

|

:meta private:

|

|

"""

|

|

return [self.input_key]

|

|

|

|

@property

|

|

def output_keys(self) -> List[str]:

|

|

"""Return the output keys.

|

|

|

|

:meta private:

|

|

"""

|

|

_output_keys = [self.output_key]

|

|

return _output_keys

|

|

|

|

@classmethod

|

|

def from_llm(

|

|

cls,

|

|

llm: BaseLanguageModel,

|

|

*,

|

|

qa_prompt: BasePromptTemplate = CYPHER_QA_PROMPT,

|

|

cypher_prompt: BasePromptTemplate = CYPHER_GENERATION_PROMPT,

|

|

**kwargs: Any,

|

|

) -> GraphCypherQAChain:

|

|

"""Initialize from LLM."""

|

|

qa_chain = LLMChain(llm=llm, prompt=qa_prompt)

|

|

cypher_generation_chain = LLMChain(llm=llm, prompt=cypher_prompt)

|

|

|

|

return cls(

|

|

qa_chain=qa_chain,

|

|

cypher_generation_chain=cypher_generation_chain,

|

|

**kwargs,

|

|

)

|

|

|

|

def _call(

|

|

self,

|

|

inputs: Dict[str, Any],

|

|

run_manager: Optional[CallbackManagerForChainRun] = None,

|

|

) -> Dict[str, str]:

|

|

"""Generate Cypher statement, use it to look up in db and answer question."""

|

|

_run_manager = run_manager or CallbackManagerForChainRun.get_noop_manager()

|

|

callbacks = _run_manager.get_child()

|

|

question = inputs[self.input_key]

|

|

|

|

generated_cypher = self.cypher_generation_chain.run(

|

|

{"question": question, "schema": self.graph.get_schema}, callbacks=callbacks

|

|

)

|

|

|

|

_run_manager.on_text("Generated Cypher:", end="\n", verbose=self.verbose)

|

|

_run_manager.on_text(

|

|

generated_cypher, color="green", end="\n", verbose=self.verbose

|

|

)

|

|

context = self.graph.query(generated_cypher)

|

|

|

|

_run_manager.on_text("Full Context:", end="\n", verbose=self.verbose)

|

|

_run_manager.on_text(

|

|

str(context), color="green", end="\n", verbose=self.verbose

|

|

)

|

|

result = self.qa_chain(

|

|

{"question": question, "context": context},

|

|

callbacks=callbacks,

|

|

)

|

|

return {self.output_key: result[self.qa_chain.output_key]}

|
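The control flow of `_call` can be sketched without LangChain or a running database. The example below is a simplified stand-in, not the chain itself: `answer_with_graph` and the three `fake_*` functions are hypothetical names, the callables replace the two `LLMChain` objects and the `Neo4jGraph`, and the returned rows are made-up data.

```python
from typing import Any, Callable, Dict, List

Rows = List[Dict[str, Any]]


def answer_with_graph(
    question: str,
    schema: str,
    generate_cypher: Callable[[str, str], str],
    run_query: Callable[[str], Rows],
    answer: Callable[[str, Rows], str],
) -> Dict[str, str]:
    """Mirror the three steps of _call using plain callables."""
    cypher = generate_cypher(question, schema)  # step 1: the LLM writes a Cypher statement
    context = run_query(cypher)  # step 2: the statement is run against the graph
    return {"result": answer(question, context)}  # step 3: the LLM phrases the answer


# Stand-ins (assumptions) for the LLM chains and the Neo4j graph.
def fake_generate_cypher(question: str, schema: str) -> str:
    return (
        "MATCH (p:Person)-[:ACTED_IN]->(m:Movie {title: 'Top Gun'}) "
        "RETURN p.name AS name"
    )


def fake_run_query(cypher: str) -> Rows:
    return [{"name": "Tom Cruise"}, {"name": "Val Kilmer"}]


def fake_answer(question: str, context: Rows) -> str:
    names = ", ".join(row["name"] for row in context)
    return f"{names} acted in the movie."


if __name__ == "__main__":
    print(
        answer_with_graph(
            "Who acted in Top Gun?",
            "(:Person)-[:ACTED_IN]->(:Movie)",
            fake_generate_cypher,
            fake_run_query,
            fake_answer,
        )
    )
```

Note that step 3 receives only the rows returned by the query, not the surrounding graph, which is why the QA prompt has to work with narrow context.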