🦜️🔗 LangChain

⚡ Building applications with LLMs through composability ⚡

Looking for the JS/TS library? Check out LangChain.js.

To help you ship LangChain apps to production faster, check out LangSmith. LangSmith is a unified developer platform for building, testing, and monitoring LLM applications. Fill out this form to get off the waitlist or speak with our sales team.

Quick Install

With pip:

pip install langchain

With conda:

conda install langchain -c conda-forge

🤔 What is LangChain?

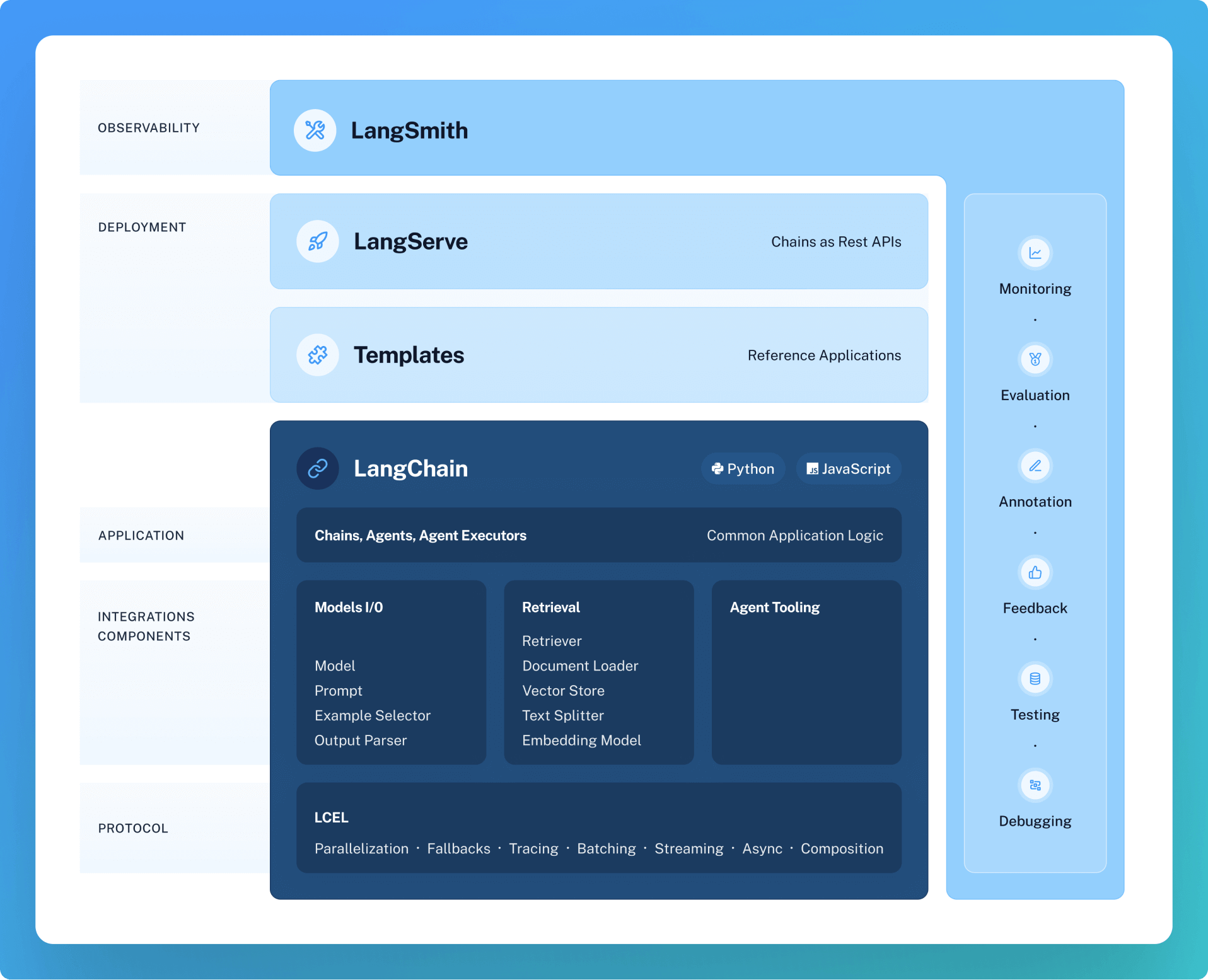

LangChain is a framework for developing applications powered by language models. It enables applications that:

- Are context-aware: connect a language model to sources of context (prompt instructions, few-shot examples, content to ground its response in, etc.)

- Reason: rely on a language model to reason (about how to answer based on provided context, what actions to take, etc.)

This framework consists of several parts.

- LangChain Libraries: The Python and JavaScript libraries. They contain interfaces and integrations for a myriad of components, a basic runtime for combining these components into chains and agents, and off-the-shelf implementations of chains and agents.

- LangChain Templates: A collection of easily deployable reference architectures for a wide variety of tasks.

- LangServe: A library for deploying LangChain chains as a REST API.

- LangSmith: A developer platform that lets you debug, test, evaluate, and monitor chains built on any LLM framework, and that integrates seamlessly with LangChain.

This repo contains the langchain, langchain-experimental, and langchain-cli Python packages, as well as LangChain Templates.

🧱 What can you build with LangChain?

❓ Retrieval augmented generation

- Documentation

- End-to-end Example: Chat LangChain and repo

💬 Analyzing structured data

- Documentation

- End-to-end Example: SQL Llama2 Template

🤖 Chatbots

- Documentation

- End-to-end Example: Web LangChain (web researcher chatbot) and repo

And much more! Head to the Use cases section of the docs for more.

🚀 How does LangChain help?

The main value props of the LangChain libraries are:

- Components: composable tools and integrations for working with language models. Components are modular and easy to use, whether you are using the rest of the LangChain framework or not.

- Off-the-shelf chains: built-in assemblages of components for accomplishing higher-level tasks

Off-the-shelf chains make it easy to get started. Components make it easy to customize existing chains and build new ones.
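
To make the idea of composing components concrete, here is a minimal sketch in plain Python. It does not use the LangChain API; `make_chain`, `build_prompt`, `fake_llm`, and `strip_brackets` are hypothetical names invented for this illustration, and `fake_llm` stands in for a real model call.

```python
from functools import reduce
from typing import Callable

# A "component" here is just a function from one value to another.
Step = Callable[[object], object]


def make_chain(*steps: Step) -> Step:
    """Compose steps left to right: each output feeds the next step."""
    def run(value: object) -> object:
        return reduce(lambda acc, step: step(acc), steps, value)
    return run


# Hypothetical components; a real application would call a model in fake_llm.
def build_prompt(question: str) -> str:
    return f"Answer briefly: {question}"


def fake_llm(prompt: str) -> str:
    return f"[model reply to: {prompt}]"


def strip_brackets(text: str) -> str:
    return text.strip("[]")


# An "off-the-shelf" chain is simply a prebuilt composition of components.
qa_chain = make_chain(build_prompt, fake_llm, strip_brackets)
print(qa_chain("What is a chain?"))
```

Because each step is independent, swapping `fake_llm` for another function customizes the chain without touching the other steps.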

Components fall into the following modules:

📃 Model I/O:

This includes prompt management, prompt optimization, a generic interface for all LLMs, and common utilities for working with LLMs.
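
As a rough illustration of what a prompt template, a generic model interface, and an output utility look like together, consider the following plain-Python sketch. None of these names come from LangChain: `TextModel`, `SimplePromptTemplate`, `EchoModel`, and `parse_list` are assumptions made for the example, and `EchoModel` is a stand-in that only upper-cases text.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Protocol


class TextModel(Protocol):
    """Generic interface: any object with generate(prompt) -> str fits."""

    def generate(self, prompt: str) -> str: ...


@dataclass
class SimplePromptTemplate:
    """Hypothetical template that fills named placeholders."""

    template: str

    def format(self, **values: str) -> str:
        return self.template.format(**values)


class EchoModel:
    """Stand-in model: returns the last prompt line in upper case."""

    def generate(self, prompt: str) -> str:
        return prompt.splitlines()[-1].upper()


def parse_list(text: str) -> list[str]:
    """Utility that turns a comma-separated reply into a list."""
    return [item.strip() for item in text.split(",") if item.strip()]


def run(model: TextModel, template: SimplePromptTemplate, **values: str) -> list[str]:
    return parse_list(model.generate(template.format(**values)))


template = SimplePromptTemplate("List the items, comma separated.\n{items}")
print(run(EchoModel(), template, items="red, green, blue"))
```

Since `run` depends only on the `TextModel` interface, any model wrapper exposing `generate` could replace `EchoModel`.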

📚 Retrieval:

Data Augmented Generation involves specific types of chains that first interact with an external data source to fetch data for use in the generation step. Examples include summarization of long pieces of text and question answering over specific data sources.
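
The fetch-then-generate pattern can be sketched in plain Python as follows. This is a toy under stated assumptions, not LangChain's retrieval API: `tokens`, `retrieve`, and `build_prompt` are hypothetical helpers, the "data source" is an in-memory list, and relevance is a naive word-overlap count rather than embedding similarity.

```python
import re

# Toy in-memory data source; real systems typically query a vector store.
DOCS = [
    "LangServe deploys chains as a REST API.",
    "LangSmith helps debug, test, evaluate, and monitor chains.",
    "LangChain Templates are deployable reference architectures.",
]


def tokens(text: str) -> set:
    """Lower-cased alphabetic words in the text."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(question: str, docs: list, k: int = 1) -> list:
    """Return the k documents sharing the most words with the question."""
    q = tokens(question)
    ranked = sorted(docs, key=lambda d: len(q & tokens(d)), reverse=True)
    return ranked[:k]


def build_prompt(question: str, context: list) -> str:
    """Place the fetched data into the prompt used for generation."""
    joined = "\n".join(f"- {c}" for c in context)
    return f"Use only this context:\n{joined}\n\nQuestion: {question}"


question = "How do I deploy a chain as a REST API?"
prompt = build_prompt(question, retrieve(question, DOCS))
print(prompt)  # this prompt would then be passed to a model
```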

🤖 Agents:

Agents involve an LLM deciding which Action to take, taking that Action, seeing an Observation, and repeating the cycle until done. LangChain provides a standard interface for agents, a selection of agents to choose from, and examples of end-to-end agents.
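
The decide, act, observe loop described above can be sketched in a few lines of plain Python. This is an illustration only and not LangChain's agent interface: `TOOLS`, `toy_policy`, and `run_agent` are hypothetical, and `toy_policy` is a hard-coded stand-in for the decision an LLM would make.

```python
from __future__ import annotations

from typing import Callable

# Hypothetical tools the agent may call, keyed by action name.
TOOLS: dict[str, Callable[[str], str]] = {
    "add": lambda arg: str(sum(int(x) for x in arg.split("+"))),
    "upper": lambda arg: arg.upper(),
}


def toy_policy(question: str, observations: list[str]) -> tuple[str, str]:
    """Stand-in for the LLM: returns (action, action_input)."""
    if not observations:
        return ("add", question)  # first step: use the "add" tool
    return ("finish", observations[-1])  # then report what was observed


def run_agent(question: str, policy=toy_policy, max_steps: int = 5) -> str:
    observations: list[str] = []
    for _ in range(max_steps):
        action, action_input = policy(question, observations)
        if action == "finish":
            return action_input
        # Take the action and record the observation for the next decision.
        observations.append(TOOLS[action](action_input))
    raise RuntimeError("agent did not finish within max_steps")


print(run_agent("2+3"))
```

The `max_steps` cap is a design choice for the sketch: it keeps a policy that never returns `finish` from looping forever.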

📖 Documentation

Please see the full documentation, which includes:

- Getting started: installation, setting up the environment, simple examples

- Overview of the interfaces, modules and integrations

- Use case walkthroughs and best practice guides

- LangSmith, LangServe, and LangChain Template overviews

- Reference: full API docs

💁 Contributing

As an open-source project in a rapidly developing field, we are extremely open to contributions, whether it be in the form of a new feature, improved infrastructure, or better documentation.

For detailed information on how to contribute, see here.