**Description:** Enable _parse_response_candidate to support function-call arguments with a complex structure format.

**Issue:**

Currently, if Gemini responds with a complex args format (for example, a list value), users get a "TypeError: Object of type RepeatedComposite is not JSON serializable" error from _parse_response_candidate.

Response candidate example:

```
content {
  role: "model"
  parts {
    function_call {
      name: "Information"
      args {
        fields {
          key: "people"
          value {
            list_value {
              values {
                string_value: "Joe is 30, his mom is Martha"
              }
            }
          }
        }
      }
    }
  }
}
finish_reason: STOP
safety_ratings {
  category: HARM_CATEGORY_HARASSMENT
  probability: NEGLIGIBLE
}
safety_ratings {
  category: HARM_CATEGORY_HATE_SPEECH
  probability: NEGLIGIBLE
}
safety_ratings {
  category: HARM_CATEGORY_SEXUALLY_EXPLICIT
  probability: NEGLIGIBLE
}
safety_ratings {
  category: HARM_CATEGORY_DANGEROUS_CONTENT
  probability: NEGLIGIBLE
}
```

Error message:

```
Traceback (most recent call last):
  File "/home/jupyter/user/abehsu/gemini_langchain_tools/example2.py", line 36, in <module>
    print(tagging_chain.invoke({"input": "Joe is 30, his mom is Martha"}))
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_core/runnables/base.py", line 2053, in invoke
    input = step.invoke(
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_core/runnables/base.py", line 3887, in invoke
    return self.bound.invoke(
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_core/language_models/chat_models.py", line 165, in invoke
    self.generate_prompt(
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_core/language_models/chat_models.py", line 543, in generate_prompt
    return self.generate(prompt_messages, stop=stop, callbacks=callbacks, **kwargs)
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_core/language_models/chat_models.py", line 407, in generate
    raise e
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_core/language_models/chat_models.py", line 397, in generate
    self._generate_with_cache(
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_core/language_models/chat_models.py", line 576, in _generate_with_cache
    return self._generate(
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_google_vertexai/chat_models.py", line 406, in _generate
    generations = [
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_google_vertexai/chat_models.py", line 408, in <listcomp>
    message=_parse_response_candidate(c),
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/site-packages/langchain_google_vertexai/chat_models.py", line 280, in _parse_response_candidate
    function_call["arguments"] = json.dumps(
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/json/__init__.py", line 231, in dumps
    return _default_encoder.encode(obj)
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/json/encoder.py", line 199, in encode
    chunks = self.iterencode(o, _one_shot=True)
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/json/encoder.py", line 257, in iterencode
    return _iterencode(o, 0)
  File "/opt/conda/envs/gemini_langchain_tools/lib/python3.10/json/encoder.py", line 179, in default
    raise TypeError(f'Object of type {o.__class__.__name__} '
TypeError: Object of type RepeatedComposite is not JSON serializable
```
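
The failure mode can be reproduced without any Google libraries. The following is only a sketch of the general idea, not the change made in this PR: `FakeRepeated` is a hypothetical stand-in for a repeated protobuf container (it is assumed here to behave like a sequence), and `to_plain` is an illustrative helper name. `json.dumps` only knows how to encode real `list`, `tuple`, and `dict` objects, so a sequence-like container has to be converted to plain Python types first.

```python
import json
from collections.abc import Mapping, Sequence


class FakeRepeated(Sequence):
    """Hypothetical stand-in for a repeated protobuf container."""

    def __init__(self, items):
        self._items = list(items)

    def __getitem__(self, index):
        return self._items[index]

    def __len__(self):
        return len(self._items)


def to_plain(value):
    """Recursively convert mapping-like and sequence-like values to dict/list."""
    if isinstance(value, (str, bytes)):
        return value  # strings are sequences too, so leave them untouched
    if isinstance(value, Mapping):
        return {key: to_plain(item) for key, item in value.items()}
    if isinstance(value, Sequence):
        return [to_plain(item) for item in value]
    return value


# Arguments shaped like the response candidate above (assumed structure).
args = {"people": FakeRepeated(["Joe is 30, his mom is Martha"])}

try:
    json.dumps(args)  # fails: FakeRepeated is not a list or tuple
    raised = False
except TypeError:
    raised = True

# Converting to plain types first makes the arguments serializable.
arguments = json.dumps(to_plain(args))
```

The recursion matters because complex arguments can nest lists inside mappings and mappings inside lists to arbitrary depth.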

**Twitter handle:** @abehsu1992626


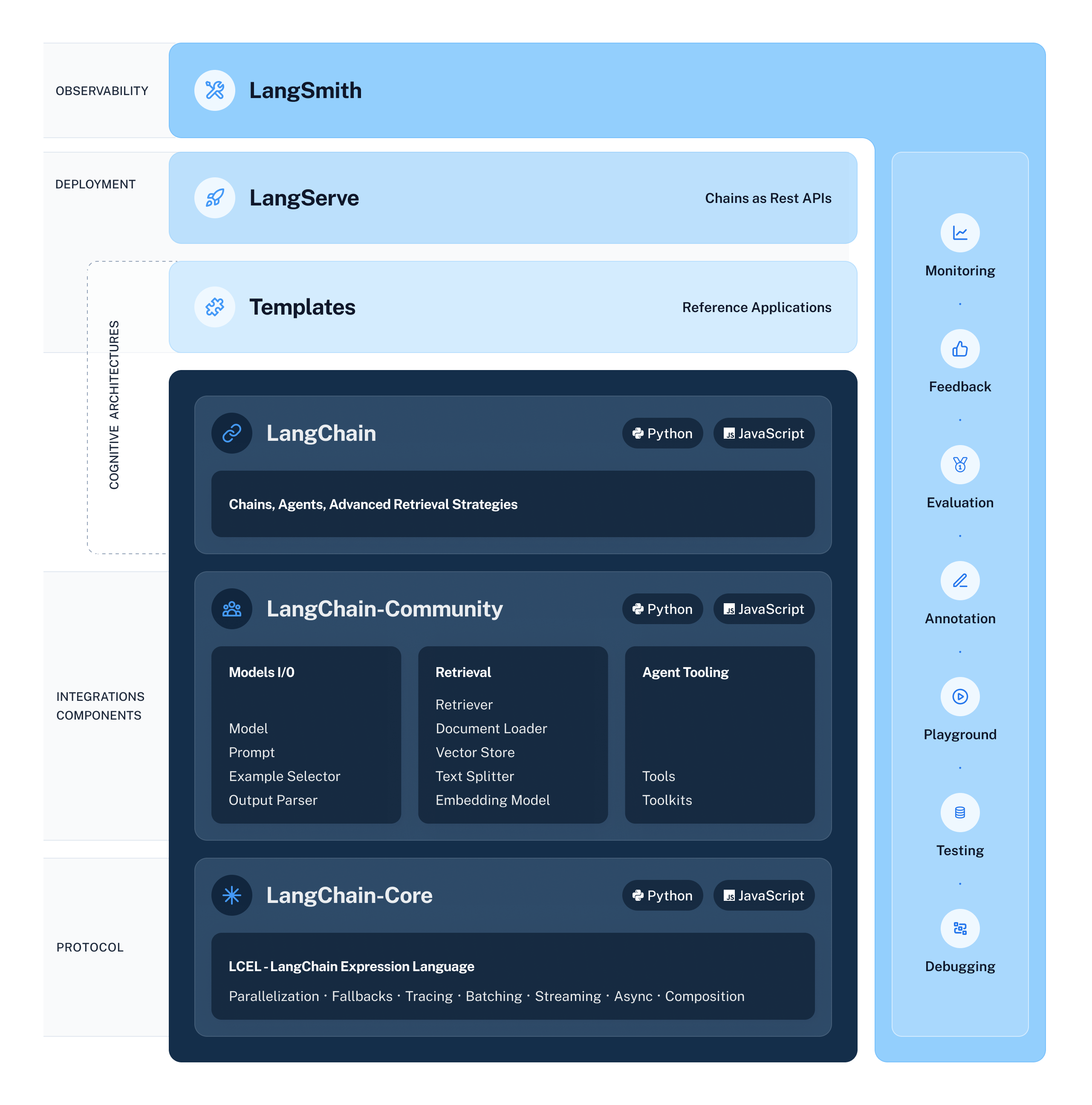

🦜️🔗 LangChain

⚡ Build context-aware reasoning applications ⚡

Looking for the JS/TS library? Check out LangChain.js.

To help you ship LangChain apps to production faster, check out LangSmith. LangSmith is a unified developer platform for building, testing, and monitoring LLM applications. Fill out this form to get off the waitlist or speak with our sales team.

Quick Install

With pip:

pip install langchain

With conda:

conda install langchain -c conda-forge

🤔 What is LangChain?

LangChain is a framework for developing applications powered by language models. It enables applications that:

- Are context-aware: connect a language model to sources of context (prompt instructions, few-shot examples, content to ground its response in, etc.)

- Reason: rely on a language model to reason (about how to answer based on provided context, what actions to take, etc.)

This framework consists of several parts.

- LangChain Libraries: The Python and JavaScript libraries. They contain interfaces and integrations for a myriad of components, a basic runtime for combining these components into chains and agents, and off-the-shelf implementations of chains and agents.

- LangChain Templates: A collection of easily deployable reference architectures for a wide variety of tasks.

- LangServe: A library for deploying LangChain chains as a REST API.

- LangSmith: A developer platform that lets you debug, test, evaluate, and monitor chains built on any LLM framework and seamlessly integrates with LangChain.

The LangChain libraries themselves are made up of several different packages.

- langchain-core: Base abstractions and LangChain Expression Language.

- langchain-community: Third party integrations.

- langchain: Chains, agents, and retrieval strategies that make up an application's cognitive architecture.

🧱 What can you build with LangChain?

❓ Retrieval augmented generation

- Documentation

- End-to-end Example: Chat LangChain and repo

💬 Analyzing structured data

- Documentation

- End-to-end Example: SQL Llama2 Template

🤖 Chatbots

- Documentation

- End-to-end Example: Web LangChain (web researcher chatbot) and repo

And much more! Head to the Use cases section of the docs for more.

🚀 How does LangChain help?

The main value props of the LangChain libraries are:

- Components: composable tools and integrations for working with language models. Components are modular and easy to use, whether you are using the rest of the LangChain framework or not.

- Off-the-shelf chains: built-in assemblages of components for accomplishing higher-level tasks

Off-the-shelf chains make it easy to get started. Components make it easy to customize existing chains and build new ones.

Components fall into the following modules:

📃 Model I/O:

This includes prompt management, prompt optimization, a generic interface for all LLMs, and common utilities for working with LLMs.

📚 Retrieval:

Data Augmented Generation involves specific types of chains that first interact with an external data source to fetch data for use in the generation step. Examples include summarization of long pieces of text and question answering over specific data sources.
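
As a rough illustration of the fetch-then-generate pattern described above, here is a minimal sketch in plain Python. It does not use the LangChain API: `retrieve` and `build_prompt` are hypothetical helper names, the word-overlap scoring is a deliberately naive placeholder for a real retriever, and the final model call is left out.

```python
def retrieve(query, documents, top_k=1):
    """Rank documents by how many words they share with the query."""
    query_words = set(query.lower().split())

    def overlap(doc):
        return len(query_words & set(doc.lower().split()))

    return sorted(documents, key=overlap, reverse=True)[:top_k]


def build_prompt(query, context_docs):
    """Place the fetched context ahead of the question for the generation step."""
    context = "\n".join(context_docs)
    return f"Context:\n{context}\n\nQuestion: {query}"


documents = [
    "LangServe deploys chains as a REST API",
    "LangSmith helps debug and monitor chains",
]
query = "How do I deploy chains as a REST API"

context_docs = retrieve(query, documents)
prompt = build_prompt(query, context_docs)  # this string would be sent to a model
```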

🤖 Agents:

Agents involve an LLM making decisions about which Actions to take, taking that Action, seeing an Observation, and repeating that until done. LangChain provides a standard interface for agents, a selection of agents to choose from, and examples of end-to-end agents.
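
The decide, act, observe loop described above can be sketched in a few lines of plain Python. This is not LangChain's agent interface: `fake_llm` is a hard-coded stub standing in for a real model, and `TOOLS`, `run_agent`, and the `"finish"` action name are assumptions made for illustration.

```python
from typing import Callable, Dict, List, Optional, Tuple

# Hypothetical tools the agent may call; names are illustrative only.
TOOLS: Dict[str, Callable[[str], str]] = {
    "add": lambda text: str(sum(int(part) for part in text.split("+"))),
}

Step = Tuple[str, str, str]  # (action, action_input, observation)


def fake_llm(question: str, history: List[Step]) -> Tuple[str, str]:
    """Stub decision function standing in for a real language model."""
    if not history:
        return "add", question  # first turn: choose a tool and its input
    return "finish", history[-1][2]  # later: answer with the last observation


def run_agent(question: str, max_steps: int = 5) -> Optional[str]:
    history: List[Step] = []
    for _ in range(max_steps):
        action, action_input = fake_llm(question, history)  # decide
        if action == "finish":
            return action_input
        observation = TOOLS[action](action_input)  # take the action
        history.append((action, action_input, observation))  # record the observation
    return None  # no answer within max_steps


answer = run_agent("2+3")
```

The `max_steps` bound is a design choice in this sketch so that a model that never chooses to finish cannot loop forever.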

📖 Documentation

Please see here for full documentation, which includes:

- Getting started: installation, setting up the environment, simple examples

- Overview of the interfaces, modules, and integrations

- Use case walkthroughs and best practice guides

- LangSmith, LangServe, and LangChain Template overviews

- Reference: full API docs

💁 Contributing

As an open-source project in a rapidly developing field, we are extremely open to contributions, whether it be in the form of a new feature, improved infrastructure, or better documentation.

For detailed information on how to contribute, see here.