This adds the response message as a document to the rag retriever so

users can choose to use this. Also drops document limit.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

## Description

We need to centralize the API we use to get the project name for our

tracers. This PR makes it so we always get this from a shared function

in the langsmith sdk.

## Dependencies

Upgraded langsmith from 0.52 to 0.62 to include the new API

`get_tracer_project`

- **Description:**

Recently Chroma rolled out a breaking change on the way we handle

embedding functions, in order to support multi-modal collections.

This broke the way LangChain's `Chroma` objects get created, because we

were passing the EF down into the Chroma collection:

https://docs.trychroma.com/migration#migration-to-0416---november-7-2023

However, internally, we are never actually using embeddings on the

chroma collection - LangChain's `Chroma` object calls it instead. Thus

we just don't pass an `embedding_function` to Chroma itself, which fixes

the issue.

- **Description:** The import error raised by the Amazon Textract

loader did not list the proper dependency.

- **Issue:** Time wasted trying to figure out what to install...

(langchain docs don't list the dependency either)

- **Dependencies:** N/A

- **Tag maintainer:** @sbusso

- **Twitter handle:** @h9ste

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

# Astra DB Vector store integration

- **Description:** This PR adds a `VectorStore` implementation for

DataStax Astra DB using its HTTP API

- **Issue:** (no related issue)

- **Dependencies:** A new required dependency is `astrapy` (`>=0.5.3`)

which was added to `pyproject.toml` as optional, as per guidelines

- **Tag maintainer:** I recently mentioned to @baskaryan this

integration was coming

- **Twitter handle:** `@rsprrs` if you want to mention me

This PR introduces the `AstraDB` vector store class, extensive

integration test coverage, a reworking of the documentation which

conflates Cassandra and Astra DB on a single "provider" page and a new,

completely reworked vector-store example notebook (common to the

Cassandra store, since parts of the flow are shared by the two APIs). I

also took care in ensuring docs (and redirects therein) are behaving

correctly.

All style, linting, typechecks and tests pass as far as the `AstraDB`

integration is concerned.

I could build the documentation and check it all right (but ran into

trouble with the `api_docs_build` makefile target which I could not

verify: `Error: Unable to import module

'plan_and_execute.agent_executor' with error: No module named

'langchain_experimental'` was the first of many similar errors)

Thank you for a review!

Stefano

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

Cohere released the new embedding API (Embed v3:

https://txt.cohere.com/introducing-embed-v3/) that treats document and

query embeddings differently. This PR updates `CohereEmbeddings` to

use them appropriately. It also works with the old models.
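
As a rough illustration of the document/query split, the sketch below builds the arguments for an embedding request. The helper `build_embed_kwargs` is hypothetical and not part of this PR; the `input_type` values `search_document` and `search_query` are the ones Cohere documents for Embed v3. Detecting a v3 model by its name, and omitting the field for older models, are assumptions made only for this example.

```python
from typing import Any, Dict, List


def build_embed_kwargs(texts: List[str], model: str, is_query: bool) -> Dict[str, Any]:
    """Build keyword arguments for an embedding request (hypothetical helper)."""
    kwargs: Dict[str, Any] = {"texts": texts, "model": model}
    # Assumption: a crude name check stands in for "is this an Embed v3 model?".
    if "v3" in model:
        # Embed v3 distinguishes texts to be stored from texts used to search.
        kwargs["input_type"] = "search_query" if is_query else "search_document"
    return kwargs


doc_kwargs = build_embed_kwargs(["some passage"], "embed-english-v3.0", is_query=False)
query_kwargs = build_embed_kwargs(["a question"], "embed-english-v3.0", is_query=True)
legacy_kwargs = build_embed_kwargs(["some passage"], "embed-english-v2.0", is_query=False)
```

Keeping the choice of `input_type` in one place means the document and query code paths cannot silently drift apart.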

Description: This PR masks API key secrets for the Nebula model from

Symbl.ai

Issue: #12165

Maintainer: @eyurtsev

---------

Co-authored-by: Praveen Venkateswaran <praveen.venkateswaran@ibm.com>

* ChatAnyscale was missing coercion to SecretStr for anyscale api key

* The model inherits from ChatOpenAI so it should not force the openai

api key to be secret str until openai model has the same changes

https://github.com/langchain-ai/langchain/issues/12841

Qdrant was incorrectly calculating the cosine similarity and returning

`0.0` for the best match, instead of `1.0`. Internally Qdrant returns a

cosine score from `-1.0` (worst match) to `1.0` (best match), and the

current formula reflects it.
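
A minimal sketch of the rescaling described above, assuming the goal is a relevance score in `[0.0, 1.0]`. The function name is hypothetical, and this is not necessarily the exact formula used in the integration.

```python
def cosine_to_relevance(score: float) -> float:
    """Map a cosine score in [-1.0, 1.0] to a relevance score in [0.0, 1.0]."""
    return (score + 1.0) / 2.0


best = cosine_to_relevance(1.0)    # best match
worst = cosine_to_relevance(-1.0)  # worst match
```

With this mapping the best match gets the highest relevance and the worst match gets the lowest, which is the ordering the fix restores.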

Adds the ability to pass an `on_artifact` callback to a conversation. It

can be supplied through `metadata` like this:

```python
result = agent.run(
    input=message.text,
    metadata={
        "on_artifact": CALLBACK_FUNCTION
    },
)
```

Calls uvicorn directly from cli:

Reload works if you define app by import string instead of object.

(was doing subprocess in order to get reloading)

Version bump to 0.0.14

Remove the need for [serve] for simplicity.

Readmes are updated in #12847 to avoid cluttering this PR

Previously we treated trace_on_chain_group as a command to always start

tracing. This is unintuitive (makes the function do 2 things), and makes

it harder to toggle tracing

When you use a MultiQuery it might be useful to use the original query

as well as the newly generated ones to maximise the chances of retrieving

the correct document. I haven't created an issue, as it seems a very small

and easy thing.
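
The idea can be sketched as follows. The function name and the `include_original` flag are hypothetical names chosen for this example, not necessarily the ones the retriever uses.

```python
from typing import List


def build_queries(original: str, generated: List[str], include_original: bool = False) -> List[str]:
    """Return the queries to run, optionally adding the user's original query."""
    queries = list(generated)
    if include_original:
        queries.append(original)
    # Drop duplicates while preserving order, so no query runs twice.
    seen = set()
    unique: List[str] = []
    for query in queries:
        if query not in seen:
            seen.add(query)
            unique.append(query)
    return unique


queries = build_queries(
    "what is LCEL?",
    ["explain LCEL", "LCEL overview"],
    include_original=True,
)
```

Each query in the resulting list would then be sent to the underlying retriever and the returned documents merged.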

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Correct number of elements in config list in

`batch()` and `abatch()` of `BaseLLM` in case `max_concurrency` is not

None.

- **Issue:** #12643

- **Twitter handle:** @akionux

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Zep now has the ability to search over chat history summaries. This PR

adds support for doing so. More here: https://blog.getzep.com/zep-v0-17/

@baskaryan @eyurtsev

…s present

<!-- Thank you for contributing to LangChain!

Replace this entire comment with:

- **Description:** a description of the change,

- **Issue:** the issue # it fixes (if applicable),

- **Dependencies:** any dependencies required for this change,

- **Tag maintainer:** for a quicker response, tag the relevant

maintainer (see below),

- **Twitter handle:** we announce bigger features on Twitter. If your PR

gets announced, and you'd like a mention, we'll gladly shout you out!

Please make sure your PR is passing linting and testing before

submitting. Run `make format`, `make lint` and `make test` to check this

locally.

See contribution guidelines for more information on how to write/run

tests, lint, etc:

https://github.com/langchain-ai/langchain/blob/master/.github/CONTRIBUTING.md

If you're adding a new integration, please include:

1. a test for the integration, preferably unit tests that do not rely on

network access,

2. an example notebook showing its use. It lives in `docs/extras`

directory.

If no one reviews your PR within a few days, please @-mention one of

@baskaryan, @eyurtsev, @hwchase17.

-->

### Enabling `device_map` in HuggingFacePipeline

For multi-gpu settings with large models, the

[accelerate](https://huggingface.co/docs/accelerate/usage_guides/big_modeling#using--accelerate)

library provides the `device_map` parameter to automatically distribute

the model across GPUs / disk.

The [Transformers

pipeline](3520e37e86/src/transformers/pipelines/__init__.py (L543))

enables users to specify `device` (or) `device_map`, and handles cases

(with warnings) when both are specified.

However, Langchain's HuggingFacePipeline only supports specifying

`device` when calling transformers which limits large models and

multi-gpu use-cases.

Additionally, the [default

value](8bd3ce59cd/libs/langchain/langchain/llms/huggingface_pipeline.py (L72))

of `device` is initialized to `-1`, which is incompatible with the

transformers pipeline when `device_map` is specified.

This PR adds `device_map` as a parameter, and

solves the incompatibility of `device = -1` when `device_map` is also

specified.
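
One way to reconcile the two parameters is sketched below. The helper `resolve_device_kwargs` is hypothetical, and the rule it applies (drop the default `device = -1` whenever `device_map` is given) is an assumption about a reasonable resolution, not a description of the merged code.

```python
from typing import Any, Dict, Optional


def resolve_device_kwargs(
    device: Optional[int] = -1, device_map: Optional[str] = None
) -> Dict[str, Any]:
    """Pick the device-related kwargs to forward to the pipeline (hypothetical helper)."""
    kwargs: Dict[str, Any] = {}
    if device_map is not None:
        kwargs["device_map"] = device_map
        # Only forward `device` if the caller set it explicitly; the default
        # of -1 would conflict with `device_map`.
        if device is not None and device != -1:
            kwargs["device"] = device
    else:
        kwargs["device"] = device
    return kwargs


cpu_default = resolve_device_kwargs()
sharded = resolve_device_kwargs(device_map="auto")
both = resolve_device_kwargs(device=0, device_map="auto")
```

When both values are set explicitly, the sketch forwards both and leaves the warning to the transformers pipeline, as described above.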

An additional test has been added for this feature.

Additionally, some existing tests no longer work since

1. `max_new_tokens` has to be specified under `pipeline_kwargs` and not

`model_kwargs`

2. The GPT2 tokenizer raises a `ValueError: Pipeline with tokenizer

without pad_token cannot do batching`, since the `tokenizer.pad_token`

is `None` ([related

issue](https://github.com/huggingface/transformers/issues/19853) on the

transformers repo).

This PR handles fixing these tests as well.

Co-authored-by: Praveen Venkateswaran <praveen.venkateswaran@ibm.com>

[The python

spec](https://docs.python.org/3/reference/datamodel.html#object.__getattr__)

requires that `__getattr__` throw `AttributeError` for missing

attributes but there are several places throwing `ImportError` in the

current code base. This causes a specific problem with `hasattr` since

it calls `__getattr__` then looks only for `AttributeError` exceptions.

At present, calling `hasattr` on any of these modules will raise an

unexpected exception that most code will not handle as `hasattr`

throwing exceptions is not expected.

In our case this is triggered by an exception tracker (Airbrake) that

attempts to collect the version of all installed modules with code that

looks like: `if hasattr(mod, "__version__"):`. With `HEAD` this is

causing our exception tracker to fail on all exceptions.

I only changed instances of unknown attributes raising `ImportError` and

left instances of known attributes raising `ImportError`. It feels a

little weird but doesn't seem to break anything.
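
The `hasattr` behaviour can be reproduced with a small standalone sketch. The module below is a throwaway built with `types.ModuleType`; the name `demo_module` and the helper `make_module` are purely illustrative.

```python
import types


def make_module(error_type):
    """Create a module whose module-level __getattr__ raises `error_type`."""
    mod = types.ModuleType("demo_module")

    def _module_getattr(name):
        raise error_type(f"demo_module has no attribute {name!r}")

    # Module-level __getattr__ is called for attributes that are not found.
    mod.__getattr__ = _module_getattr
    return mod


good = make_module(AttributeError)
bad = make_module(ImportError)

# AttributeError is swallowed by hasattr, which then reports False.
good_result = hasattr(good, "__version__")

# ImportError is not swallowed, so it escapes from hasattr.
try:
    hasattr(bad, "__version__")
    leaked = False
except ImportError:
    leaked = True
```

Here `good_result` is `False` and `leaked` is `True`, which is the failure mode that tripped the exception tracker.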

- **Description:** Use all Google search results data in SerpApi.com

wrapper instead of the first one only

- **Tag maintainer:** @hwchase17

_P.S. `libs/langchain/tests/integration_tests/utilities/test_serpapi.py`

are not executed during the `make test`._

It was passing in message instead of generation

* Restrict the chain to specific domains by default

* This is a breaking change, but it will fail loudly upon object

instantiation -- so there should be no silent errors for users

* Resolves CVE-2023-32786

* This is an opt-in feature, so users should be aware of risks if using

jinja2.

* Regardless we'll add sandboxing by default to jinja2 templates -- this

sandboxing is a best effort basis.

* Best strategy is still to make sure that jinja2 templates are only

loaded from trusted sources.

**Description:** Update `langchain.document_loaders.pdf.PyPDFLoader` to

store url in metadata (instead of a temporary file path) if user

provides a web path to a pdf

- **Issue:** Related to #7034; the reporter on that issue submitted a PR

updating `PyMuPDFParser` for this behavior, but it has unresolved merge

issues as of 20 Oct 2023 #7077

- In addition to `PyPDFLoader` and `PyMuPDFParser`, these other classes

in `langchain.document_loaders.pdf` exhibit similar behavior and could

benefit from an update: `PyPDFium2Loader`, `PDFMinerLoader`,

`PDFMinerPDFasHTMLLoader`, `PDFPlumberLoader` (I'm happy to contribute

to some/all of that, including assisting with `PyMuPDFParser`, if my

work is agreeable)

- The root cause is that the underlying pdf parser classes, e.g.

`langchain.document_loaders.parsers.pdf.PyPDFParser`, never receive

information about the url; the parsers receive a

`langchain.document_loaders.blob_loaders.blob`, which contains the pdf

contents and local file path, but not the url

- This update passes the web path directly to the parser since it's

minimally invasive and doesn't require further changes to maintain

existing behavior for local files... bigger picture, I'd consider

extending `blob` so that extra information like this can be

communicated, but that has much bigger implications on the codebase

which I think warrants maintainer input

- **Dependencies:** None

```python
# old behavior
>>> from langchain.document_loaders import PyPDFLoader
>>> loader = PyPDFLoader('https://arxiv.org/pdf/1706.03762.pdf')
>>> docs = loader.load()
>>> docs[0].metadata
{'source': '/var/folders/w2/zx77z1cs01s1thx5dhshkd58h3jtrv/T/tmpfgrorsi5/tmp.pdf', 'page': 0}

# new behavior
>>> from langchain.document_loaders import PyPDFLoader
>>> loader = PyPDFLoader('https://arxiv.org/pdf/1706.03762.pdf')
>>> docs = loader.load()
>>> docs[0].metadata
{'source': 'https://arxiv.org/pdf/1706.03762.pdf', 'page': 0}
```

- **Description:** Implements the suggestion from #12273: like the

other PDF loaders, load the PDF page by page and attach page

metadata.

- **Issue:** #12273

- **Twitter handle:** @blue0_0hope

---------

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

This will allow you to create the schema beforehand. The check was failing

and preventing importing into existing classes.

- **Description:** implement [quip](https://quip.com) loader

- **Issue:** https://github.com/langchain-ai/langchain/issues/10352

- **Dependencies:** No

- pass make format, make lint, make test

---------

Co-authored-by: Hao Fan <h_fan@apple.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

latest release broken, this fixes it

---------

Co-authored-by: Roman Vasilyev <rvasilyev@mozilla.com>

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

Prior to this PR, `ruff` was used only for linting and not for

formatting, despite the names of the commands. This PR uses it for

both linting and autoformatting code.

This input key was missed in the last update PR:

https://github.com/langchain-ai/langchain/pull/7391

The input/output formats are intended to be like this:

```

{"inputs": [<prompt>]}

{"outputs": [<output_text>]}

```

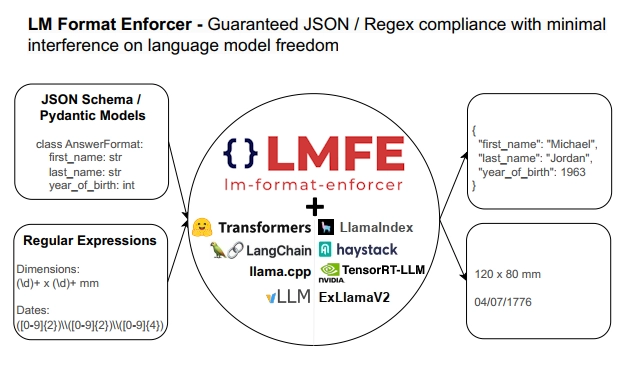

## Description

This PR adds support for

[lm-format-enforcer](https://github.com/noamgat/lm-format-enforcer) to

LangChain.

The library is similar to jsonformer / RELLM which are supported in

Langchain, but has several advantages such as

- Batching and Beam search support

- More complete JSON Schema support

- LLM has control over whitespace, improving quality

- Better runtime performance due to only calling the LLM's generate()

function once per generate() call.

The integration is loosely based on the jsonformer integration in terms

of project structure.

## Dependencies

No compile-time dependency was added, but if `lm-format-enforcer` is not

installed, a runtime error will occur when the integration is used.

## Tests

Due to the integration modifying the internal parameters of the

underlying huggingface transformer LLM, it is not possible to test

without building a real LM, which requires internet access. So, similar

to the jsonformer and RELLM integrations, the testing is via the

notebook.

## Twitter Handle

[@noamgat](https://twitter.com/noamgat)

Looking forward to hearing feedback!

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Best to review one commit at a time, since two of the commits are 100%

autogenerated changes from running `ruff format`:

- Install and use `ruff format` instead of black for code formatting.

- Output of `ruff format .` in the `langchain` package.

- Use `ruff format` in experimental package.

- Format changes in experimental package by `ruff format`.

- Manual formatting fixes to make `ruff .` pass.

I always take 20-30 seconds to re-discover where the

`convert_to_openai_function` wrapper lives in our codebase. Chat

langchain [has no

clue](https://smith.langchain.com/public/3989d687-18c7-4108-958e-96e88803da86/r)

what to do either. There's the older `create_openai_fn_chain`, but we

haven't been recommending it in LCEL. The example we show in the

[cookbook](https://python.langchain.com/docs/expression_language/how_to/binding#attaching-openai-functions)

is really verbose.

General function calling should be as simple as possible to do, so this

seems a bit more ergonomic to me (feel free to disagree). Another option

would be to coerce directly in the class's init (or when

calling invoke), if provided. I'm not 100% set against that. That

approach may be too easy but not simple. This PR feels like a decent

compromise between simple and easy.

```python
from enum import Enum
from typing import Optional

from pydantic import BaseModel, Field


class Category(str, Enum):
    """The category of the issue."""

    bug = "bug"
    nit = "nit"
    improvement = "improvement"
    other = "other"


class IssueClassification(BaseModel):
    """Classify an issue."""

    category: Category
    other_description: Optional[str] = Field(
        description="If classified as 'other', the suggested other category"
    )


from langchain.chat_models import ChatOpenAI

llm = ChatOpenAI().bind_functions([IssueClassification])
llm.invoke("This PR adds a convenience wrapper to the bind argument")

# AIMessage(content='', additional_kwargs={'function_call': {'name': 'IssueClassification', 'arguments': '{\n "category": "improvement"\n}'}})
```

- Prefer lambda type annotations over inferred dict schema

- For sequences that start with RunnableAssign infer seq input type as

"input type of 2nd item in sequence - output type of runnable assign"