# Implements support for Personal Access Token Authentication in the ConfluenceLoader

Fixes #5191

Implements a new optional parameter for the ConfluenceLoader: `token`.

This allows the use of personal access token authentication with the on-prem server version of Confluence.
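
As a rough illustration of what token authentication means at the HTTP level, the sketch below picks an `Authorization` header from the credentials supplied. The helper `build_auth_headers` and its `username`/`api_key` parameters are hypothetical and not part of the loader; the sketch assumes the on-prem server accepts the personal access token as a Bearer credential.

```python
import base64
from typing import Dict, Optional


def build_auth_headers(
    username: Optional[str] = None,
    api_key: Optional[str] = None,
    token: Optional[str] = None,
) -> Dict[str, str]:
    """Hypothetical helper: choose an Authorization header from the credentials given."""
    if token is not None:
        if username is not None or api_key is not None:
            raise ValueError("Pass either `token` or `username`/`api_key`, not both.")
        # Personal access token: sent as a Bearer credential (assumption).
        return {"Authorization": f"Bearer {token}"}
    if username is None or api_key is None:
        raise ValueError("Provide `token`, or both `username` and `api_key`.")
    # Fallback: HTTP basic auth built from the username and API key.
    encoded = base64.b64encode(f"{username}:{api_key}".encode("utf-8")).decode("ascii")
    return {"Authorization": f"Basic {encoded}"}


headers = build_auth_headers(token="my-personal-access-token")
```

Keeping the two credential styles mutually exclusive avoids silently preferring one over the other when both are passed.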

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@eyurtsev @Jflick58

Twitter Handle: felipe_yyc

---------

Co-authored-by: Felipe <feferreira@ea.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# minor refactor of GenerativeAgentMemory


- refactor `format_memories_detail` to be more reusable

- modify prompts for getting topics for reflection and for generating insights

- update `characters.ipynb` to reflect changes


## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:


@vowelparrot

@hwchase17

@dev2049

# docs: modules pages simplified

Fixes issue #5627

Merged several repetitive sections in the `modules` pages. Some text that was hard to understand was also simplified.

## Who can review?

@hwchase17

@dev2049

# Fixed multi input prompt for MapReduceChain

Added `kwargs` support for inner chains of `MapReduceChain` via

`from_params` method

Currently, initialising a `MapReduceChain` with the `from_params` method doesn't work if the prompt has multiple inputs. This happens because it uses `StuffDocumentsChain` and `MapReduceDocumentsChain` underneath, and both require `document_variable_name` to be specified if the `prompt` of their `llm_chain` has more than one input.

With this PR, I have added support for passing their respective `kwargs`

via the `from_params` method.
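
The failure mode can be sketched without LangChain. The function below is a hypothetical stand-in for the kind of check the document chains perform, not their actual code: with a single prompt input the document variable can be inferred, but with several it has to be named explicitly.

```python
from typing import List, Optional


def resolve_document_variable(
    input_variables: List[str],
    document_variable_name: Optional[str] = None,
) -> str:
    """Hypothetical stand-in for the validation done by the document chains."""
    if document_variable_name is None:
        if len(input_variables) == 1:
            # Only one input: it must be the slot for the documents.
            return input_variables[0]
        raise ValueError(
            "document_variable_name must be provided if there are multiple input variables"
        )
    if document_variable_name not in input_variables:
        raise ValueError(
            f"{document_variable_name!r} is not one of the prompt inputs {input_variables}"
        )
    return document_variable_name


# Single-input prompt: inference works.
single = resolve_document_variable(["text"])

# Multi-input prompt: the name has to be passed through explicitly,
# which is what forwarding the inner chains' kwargs makes possible.
multi = resolve_document_variable(["text", "question"], document_variable_name="text")
```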

## Fixes https://github.com/hwchase17/langchain/issues/4752

## Who can review?

@dev2049 @hwchase17 @agola11

---------

Co-authored-by: imeckr <chandanroutray2012@gmail.com>

# Unstructured Excel Loader

Adds an `UnstructuredExcelLoader` class for `.xlsx` and `.xls` files.

Works with `unstructured>=0.6.7`. A plain text representation of the

Excel file will be available under the `page_content` attribute in the

doc. If you use the loader in `"elements"` mode, an HTML representation

of the Excel file will be available under the `text_as_html` metadata

key. Each sheet in the Excel document is its own document.

### Testing

```python
from langchain.document_loaders import UnstructuredExcelLoader

loader = UnstructuredExcelLoader(
    "example_data/stanley-cups.xlsx",
    mode="elements"
)
docs = loader.load()
```

## Who can review?

@hwchase17

@eyurtsev

Co-authored-by: Alvaro Bartolome <alvarobartt@gmail.com>

Co-authored-by: Daniel Vila Suero <daniel@argilla.io>

Co-authored-by: Tom Aarsen <37621491+tomaarsen@users.noreply.github.com>

Co-authored-by: Tom Aarsen <Cubiegamedev@gmail.com>

# Create elastic_vector_search.ElasticKnnSearch class

This extends `langchain/vectorstores/elastic_vector_search.py` by adding

a new class `ElasticKnnSearch`

Features:

- Allow creating an index with the `dense_vector` mapping compatible with kNN search

- Store embeddings in index for use with kNN search (correct mapping

creates HNSW data structure)

- Perform approximate kNN search

- Perform hybrid BM25 (`query{}`) + kNN (`knn{}`) search

- Perform kNN search by either providing a `query_vector` or passing a hosted `model_id` to use `query_vector_builder` to automatically generate a `query_vector` at search time

Connection options

- Using `cloud_id` from Elastic Cloud

- Passing elasticsearch client object

Search options

- query

- k

- query_vector

- model_id

- size

- source

- knn_boost (hybrid search)

- query_boost (hybrid search)

- fields
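
To make the kNN and hybrid options above more concrete, here is a minimal sketch of the kind of Elasticsearch search body they correspond to. The helper `build_knn_search_body`, the field names `vector` and `text`, and the model ID are illustrative assumptions; this is not the code of `ElasticKnnSearch` itself.

```python
from typing import Any, Dict, List, Optional


def build_knn_search_body(
    vector_field: str,
    k: int,
    num_candidates: int,
    query_vector: Optional[List[float]] = None,
    model_id: Optional[str] = None,
    model_text: Optional[str] = None,
    text_query: Optional[Dict[str, Any]] = None,
) -> Dict[str, Any]:
    """Hypothetical helper that assembles a kNN (or hybrid) search body."""
    knn: Dict[str, Any] = {"field": vector_field, "k": k, "num_candidates": num_candidates}
    if query_vector is not None:
        # The caller already has an embedding for the query.
        knn["query_vector"] = query_vector
    elif model_id is not None and model_text is not None:
        # Let a model hosted in the cluster embed the query text at search time.
        knn["query_vector_builder"] = {
            "text_embedding": {"model_id": model_id, "model_text": model_text}
        }
    else:
        raise ValueError("Provide `query_vector`, or both `model_id` and `model_text`.")

    body: Dict[str, Any] = {"knn": knn}
    if text_query is not None:
        # Hybrid search: a BM25 `query` clause alongside the `knn` clause.
        body["query"] = text_query
    return body


hybrid_body = build_knn_search_body(
    vector_field="vector",
    k=10,
    num_candidates=50,
    model_id="my-embedding-model",  # placeholder model ID
    model_text="hello world",
    text_query={"match": {"text": "hello world"}},
)
```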

This also adds examples to

`docs/modules/indexes/vectorstores/examples/elasticsearch.ipynb`

Fixes [#5346](https://github.com/hwchase17/langchain/issues/5346)

cc: @dev2049


---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Lint sphinx documentation and fix broken links

This PR fixes multiple warnings shown when generating the project documentation (using "make docs_linkcheck" and "make docs_build"). Additionally, internal documentation links to files that no longer exist are changed to point to existing documents that appear to be the correct new targets.

The documentation content itself is not updated.

There are no source code changes.

Fixes:

- broken documentation links to other files within the project

- sphinx formatting (linting)

## Before submitting

No source code changes, so no new tests added.

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# docs: `ecosystem_integrations` update 3

Next cycle of updating the `ecosystem/integrations`

* Added an integration `template` file

* Added missed integration files

* Fixed several document_loaders/notebooks

## Who can review?

Is it possible to assign somebody to review PRs on docs? Thanks.

# Fix wrong class instantiation in docs MMR example


When looking at the Maximal Marginal Relevance ExampleSelector example

at

https://python.langchain.com/en/latest/modules/prompts/example_selectors/examples/mmr.html,

I noticed that there seems to be an error. Initially, the

`MaxMarginalRelevanceExampleSelector` class is used as an

`example_selector` argument to the `FewShotPromptTemplate` class. Then,

according to the text, a comparison is made to regular similarity

search. However, the `FewShotPromptTemplate` still uses the

`MaxMarginalRelevanceExampleSelector` class, so the output is the same.

To fix it, I added an instantiation of the

`SemanticSimilarityExampleSelector` class, because this seems to be what

is intended.
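
The difference between the two selectors can be sketched in plain Python. The functions below are a simplified illustration of top-k similarity versus Maximal Marginal Relevance over toy 2-D vectors; the function names, the vectors, and the `lambda_mult` weighting are assumptions made for the example, not the selectors' actual implementation.

```python
import math
from typing import List, Sequence


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


def top_k_similarity(query: Sequence[float], candidates: List[Sequence[float]], k: int) -> List[int]:
    """Indices of the k candidates most similar to the query."""
    ranked = sorted(range(len(candidates)), key=lambda i: cosine(query, candidates[i]), reverse=True)
    return ranked[:k]


def mmr(query: Sequence[float], candidates: List[Sequence[float]], k: int, lambda_mult: float = 0.5) -> List[int]:
    """Greedy MMR: trade relevance to the query against redundancy with earlier picks."""
    selected: List[int] = []
    remaining = list(range(len(candidates)))
    while remaining and len(selected) < k:

        def score(i: int) -> float:
            relevance = cosine(query, candidates[i])
            redundancy = max((cosine(candidates[i], candidates[j]) for j in selected), default=0.0)
            return lambda_mult * relevance - (1 - lambda_mult) * redundancy

        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


query = [1.0, 0.0]
candidates = [[1.0, 0.1], [1.0, 0.2], [0.6, -0.8]]  # the first two are near-duplicates

similar = top_k_similarity(query, candidates, k=2)
diverse = mmr(query, candidates, k=2)
```

With these toy vectors, similarity search returns the two near-duplicates, while MMR swaps the second one for the more distinct third candidate, which is why the two selectors should produce different prompts in the docs example.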

## Who can review?

@hwchase17

# Update Unstructured docs to remove the `detectron2` install instructions

Removes `detectron2` installation instructions from the Unstructured

docs because installing `detectron2` is no longer required for

`unstructured>=0.7.0`. The `detectron2` model now runs using the ONNX

runtime.

## Who can review?

@hwchase17

@eyurtsev

# Add Managed Motorhead

This change enables MotorheadMemory to use Metal's managed version of Motorhead. We can easily enable this by passing in an `api_key` and `client_id` in order to hit the managed URL and access the memory API on Metal.

Twitter: [@softboyjimbo](https://twitter.com/softboyjimbo)

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@dev2049 @hwchase17

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# added DeepLearning.AI course link

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

not @hwchase17 - hehe

# Bedrock LLM and Embeddings

This PR adds a new LLM and an Embeddings class for the

[Bedrock](https://aws.amazon.com/bedrock) service. The PR also includes

example notebooks for using the LLM class in a conversation chain and

embeddings usage in creating an embedding for a query and document.

**Note**: AWS is doing a private release of the Bedrock service on 05/31/2023; users need to request access and be added to an allowlist in order to start using the Bedrock models and embeddings. Please use the [Bedrock Home Page](https://aws.amazon.com/bedrock) to request access and to learn more about the models available in Bedrock.


# Support Qdrant filters

Qdrant has an [extensive filtering system](https://qdrant.tech/documentation/concepts/filtering/) with rich type support. This PR makes it possible to use the filters in LangChain by passing an additional param to both the `similarity_search_with_score` and `similarity_search` methods.
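
For readers unfamiliar with the filter format, the sketch below evaluates a small subset of it (`must`, `must_not` and `should` clauses with exact `match` conditions) against example payloads in plain Python. It is a simplified illustration of the filter semantics only; the helper names and the payload fields are made up, and Qdrant itself evaluates filters server-side.

```python
from typing import Any, Dict, List

Payload = Dict[str, Any]
Condition = Dict[str, Any]


def matches(condition: Condition, payload: Payload) -> bool:
    """Evaluate one `{"key": ..., "match": {"value": ...}}` condition."""
    return payload.get(condition["key"]) == condition["match"]["value"]


def passes_filter(filter_: Dict[str, List[Condition]], payload: Payload) -> bool:
    """Simplified semantics: all `must`, no `must_not`, and any `should` if present."""
    if not all(matches(c, payload) for c in filter_.get("must", [])):
        return False
    if any(matches(c, payload) for c in filter_.get("must_not", [])):
        return False
    should = filter_.get("should", [])
    return not should or any(matches(c, payload) for c in should)


# Hypothetical document metadata stored as payloads.
payloads = [
    {"source": "wiki", "lang": "en"},
    {"source": "wiki", "lang": "de"},
    {"source": "blog", "lang": "en"},
]

english_wiki = {
    "must": [
        {"key": "source", "match": {"value": "wiki"}},
        {"key": "lang", "match": {"value": "en"}},
    ]
}

kept = [p for p in payloads if passes_filter(english_wiki, p)]
```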

## Who can review?

@dev2049 @hwchase17

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# SQLite-backed Entity Memory

Following the initiative of https://github.com/hwchase17/langchain/pull/2397, I think it would be helpful to be able to persist Entity Memory on disk by default.

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

This PR adds a new method `from_es_connection` to the

`ElasticsearchEmbeddings` class allowing users to use Elasticsearch

clusters outside of Elastic Cloud.

Users can create an Elasticsearch client object and pass it to the new method. The returned object is identical to the one returned by calling `from_credentials`.

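```python
from elasticsearch import Elasticsearch
from langchain.embeddings import ElasticsearchEmbeddings

# Placeholder: ID of an embedding model deployed in the cluster
model_id = "your_model_id"

# Create Elasticsearch connection
es_connection = Elasticsearch(
    hosts=['https://es_cluster_url:port'],
    basic_auth=('user', 'password')
)

# Instantiate ElasticsearchEmbeddings using es_connection
embeddings = ElasticsearchEmbeddings.from_es_connection(
    model_id,
    es_connection,
)
```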

I also added examples to the Elasticsearch Jupyter notebook.

Fixes https://github.com/hwchase17/langchain/issues/5239

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

As the title says, I added more code splitters. The implementation is trivial, so I didn't add separate tests for each splitter. Let me know if you have any concerns.

Fixes https://github.com/hwchase17/langchain/issues/5170

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@eyurtsev @hwchase17

---------

Signed-off-by: byhsu <byhsu@linkedin.com>

Co-authored-by: byhsu <byhsu@linkedin.com>

# Creates GitHubLoader (#5257)

GitHubLoader is a DocumentLoader that loads issues and PRs from GitHub.

Fixes #5257

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# Added New Trello loader class and documentation

A simple loader on top of the py-trello wrapper. Given a board name, you can pull its cards and tweak some field parameters during the load operation.

I included documentation and examples, as well as unit test cases that use patch and a fixture for the py-trello client class.

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

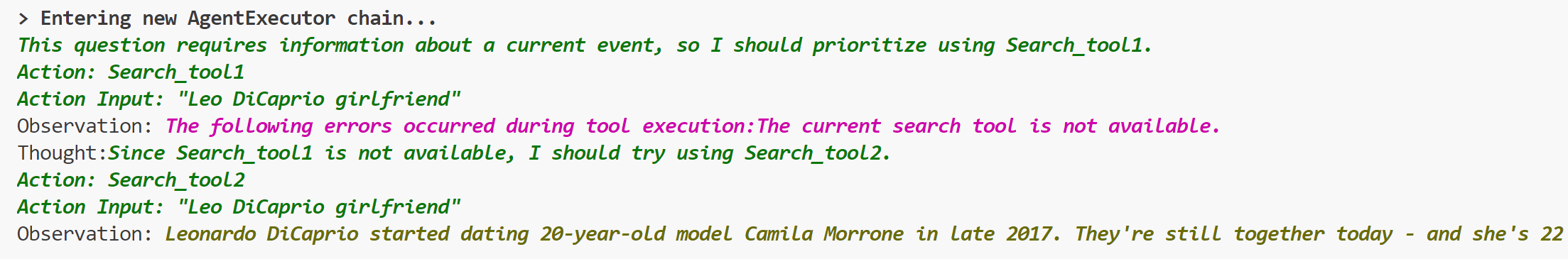

# Add ToolException that a tool can throw

This is an optional exception that a tool throws when an execution error occurs.

When this exception is thrown, the agent will not stop working, but will handle the exception according to the `handle_tool_error` variable of the tool. The processing result will be returned to the agent as an observation and printed in pink on the console. It can be used like this:

```python
from langchain import LLMMathChain, SerpAPIWrapper
from langchain.agents import AgentType, initialize_agent
from langchain.chat_models import ChatOpenAI
from langchain.schema import ToolException
from langchain.tools import Tool

llm = ChatOpenAI(temperature=0)
llm_math_chain = LLMMathChain(llm=llm, verbose=True)


class Error_tool:
    """A search tool that always fails, to demonstrate ToolException handling."""

    def run(self, s: str):
        raise ToolException('The current search tool is not available.')


def handle_tool_error(error) -> str:
    # The returned string is passed back to the agent as the observation.
    return "The following errors occurred during tool execution:" + str(error)


search_tool1 = Error_tool()
search_tool2 = SerpAPIWrapper()

tools = [
    Tool.from_function(
        func=search_tool1.run,
        name="Search_tool1",
        description="useful for when you need to answer questions about current events.You should give priority to using it.",
        handle_tool_error=handle_tool_error,
    ),
    Tool.from_function(
        func=search_tool2.run,
        name="Search_tool2",
        description="useful for when you need to answer questions about current events",
        return_direct=True,
    ),
]

agent = initialize_agent(
    tools,
    llm,
    agent=AgentType.ZERO_SHOT_REACT_DESCRIPTION,
    verbose=True,
    handle_tool_errors=handle_tool_error,
)

agent.run("Who is Leo DiCaprio's girlfriend? What is her current age raised to the 0.43 power?")
```
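
The control flow described above can also be sketched without any LangChain dependency. In this minimal illustration the `ToolException` class is a local stand-in and `run_tool` is a hypothetical runner; it only assumes that a configured handler's return value is used as the observation, and that without a handler the error propagates.

```python
from typing import Callable, Optional


class ToolException(Exception):
    """Local stand-in for the exception class added by the PR."""


def run_tool(
    func: Callable[[str], str],
    tool_input: str,
    handle_tool_error: Optional[Callable[[ToolException], str]] = None,
) -> str:
    """Hypothetical runner: turn a ToolException into an observation string."""
    try:
        return func(tool_input)
    except ToolException as error:
        if handle_tool_error is None:
            # No handler configured: the error propagates and stops the run.
            raise
        # Handler configured: its return value becomes the observation.
        return handle_tool_error(error)


def broken_search(query: str) -> str:
    raise ToolException("The current search tool is not available.")


def describe_error(error: ToolException) -> str:
    return "The following errors occurred during tool execution: " + str(error)


observation = run_tool(broken_search, "weather today", handle_tool_error=describe_error)
```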

## Who can review?

- @vowelparrot

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# docs: ecosystem/integrations update

It is the first in a series of `ecosystem/integrations` updates.

The ecosystem/integrations list is missing many integrations.

I'm adding the missing integrations in a consistent format:

1. description of the integrated system

2. `Installation and Setup` section with `pip install ...`, key setup, and other necessary settings

3. Sections like `LLM`, `Text Embedding Models`, `Chat Models`... with links to corresponding examples and imports of the used classes.

This PR covers docs that are present in `docs/modules/models/text_embedding/examples` but missing from `ecosystem/integrations`. The next PRs will cover the other example sections.

Also updated `integrations.rst`: added the `Dependencies` section with a

link to the packages used in LangChain.

## Who can review?

@hwchase17

@eyurtsev

@dev2049

# docs: ecosystem/integrations update 2

#5219 - part 1

The second part of this update (parts are independent of each other! no

overlap):

- added diffbot.md

- updated confluence.ipynb; added confluence.md

- updated college_confidential.md

- updated openai.md

- added blackboard.md

- added bilibili.md

- added azure_blob_storage.md

- added azlyrics.md

- added aws_s3.md

## Who can review?

@hwchase17

@agola11

@vowelparrot

@dev2049

# Update llamacpp demonstration notebook

Add instructions to install with BLAS backend, and update the example of

model usage.

Fixes #5071. However, this is more a prevention of similar issues in the future than a fix, since there was no problem in the framework functionality.

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

- @hwchase17

- @agola11

# Fix for `update_document` Function in Chroma

## Summary

This pull request addresses an issue with the `update_document` function

in the Chroma class, as described in

[#5031](https://github.com/hwchase17/langchain/issues/5031#issuecomment-1562577947).

The issue was identified as an `AttributeError` raised when calling

`update_document` due to a missing corresponding method in the

`Collection` object. This fix refactors the `update_document` method in

`Chroma` to correctly interact with the `Collection` object.

## Changes

1. Fixed the `update_document` method in the `Chroma` class to correctly

call methods on the `Collection` object.

2. Added the corresponding test `test_chroma_update_document` in

`tests/integration_tests/vectorstores/test_chroma.py` to reflect the

updated method call.

3. Added an example and explanation of how to use the `update_document`

function in the Jupyter notebook tutorial for Chroma.

## Test Plan

All existing tests pass after this change. In addition, the

`test_chroma_update_document` test case now correctly checks the

functionality of `update_document`, ensuring that the function works as

expected and updates the content of documents correctly.

## Reviewers

@dev2049

This fix will ensure that users are able to use the `update_document`

function as expected, without encountering the previous

`AttributeError`. This will enhance the usability and reliability of the

Chroma class for all users.

Thank you for considering this pull request. I look forward to your

feedback and suggestions.

# Add SKLearnVectorStore

This PR adds SKLearnVectorStore, a simple vector store based on the NearestNeighbors implementations in the scikit-learn package. This provides a simple drop-in vector store implementation with minimal dependencies (scikit-learn is typically installed in a data scientist / ML engineer environment). The vector store can be persisted to and loaded from JSON, BSON and Parquet formats.

SKLearnVectorStore has a soft (dynamic) dependency on the scikit-learn, numpy and pandas packages. Persisting to BSON requires the bson package; persisting to Parquet requires the pyarrow package.
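
The idea of a small nearest-neighbour store with file persistence can be sketched with the standard library alone. The `TinyVectorStore` class below is purely illustrative and is not the PR's implementation: it swaps scikit-learn's NearestNeighbors for a brute-force Euclidean search and only covers the JSON format.

```python
import json
import math
import os
import tempfile
from typing import List


class TinyVectorStore:
    """Illustrative only: brute-force nearest neighbours plus JSON persistence."""

    def __init__(self) -> None:
        self.texts: List[str] = []
        self.vectors: List[List[float]] = []

    def add(self, text: str, vector: List[float]) -> None:
        self.texts.append(text)
        self.vectors.append(list(vector))

    def query(self, vector: List[float], k: int = 1) -> List[str]:
        # Rank stored vectors by Euclidean distance to the query vector.
        order = sorted(
            range(len(self.vectors)),
            key=lambda i: math.dist(vector, self.vectors[i]),
        )
        return [self.texts[i] for i in order[:k]]

    def persist(self, path: str) -> None:
        with open(path, "w", encoding="utf-8") as f:
            json.dump({"texts": self.texts, "vectors": self.vectors}, f)

    @classmethod
    def load(cls, path: str) -> "TinyVectorStore":
        with open(path, encoding="utf-8") as f:
            data = json.load(f)
        store = cls()
        store.texts = data["texts"]
        store.vectors = data["vectors"]
        return store


store = TinyVectorStore()
store.add("apple", [1.0, 0.0])
store.add("banana", [0.0, 1.0])

# Round-trip through a JSON file, then query the restored store.
path = os.path.join(tempfile.mkdtemp(), "store.json")
store.persist(path)
restored = TinyVectorStore.load(path)
nearest = restored.query([0.9, 0.1], k=1)
```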

## Before submitting

Integration tests are provided under

`tests/integration_tests/vectorstores/test_sklearn.py`

Sample usage notebook is provided under

`docs/modules/indexes/vectorstores/examples/sklear.ipynb`

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>