The following calls were throwing an exception:

575b717d10/docs/use_cases/evaluation/agent_vectordb_sota_pg.ipynb (L192)

575b717d10/docs/use_cases/evaluation/agent_vectordb_sota_pg.ipynb (L239)

Exception:

```
---------------------------------------------------------------------------
ValidationError                           Traceback (most recent call last)
Cell In[14], line 1
----> 1 chain_sota = RetrievalQA.from_chain_type(llm=OpenAI(temperature=0), chain_type="stuff", retriever=vectorstore_sota, input_key="question")

File ~/github/langchain/venv/lib/python3.9/site-packages/langchain/chains/retrieval_qa/base.py:89, in BaseRetrievalQA.from_chain_type(cls, llm, chain_type, chain_type_kwargs, **kwargs)
     85 _chain_type_kwargs = chain_type_kwargs or {}
     86 combine_documents_chain = load_qa_chain(
     87     llm, chain_type=chain_type, **_chain_type_kwargs
     88 )
---> 89 return cls(combine_documents_chain=combine_documents_chain, **kwargs)

File ~/github/langchain/venv/lib/python3.9/site-packages/pydantic/main.py:341, in pydantic.main.BaseModel.__init__()

ValidationError: 1 validation error for RetrievalQA
retriever
  instance of BaseRetriever expected (type=type_error.arbitrary_type; expected_arbitrary_type=BaseRetriever)
```

The vectorstores had to be converted to retrievers:
`vectorstore_sota.as_retriever()` and `vectorstore_pg.as_retriever()`.
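To make the failure mode concrete, here is a minimal sketch that uses only stand-in classes. `BaseRetriever`, `VectorStoreRetriever`, `VectorStore`, and `build_qa_chain` below are hypothetical simplifications written for illustration, not the langchain implementations; the only thing taken from the notebook is the idea that a vector store must be wrapped with `as_retriever()` before it is passed as `retriever=`.

```python
# Stand-in classes only: they mimic the shape of the problem and are NOT
# the langchain implementations.
class BaseRetriever:
    """Hypothetical minimal retriever interface."""

    def get_relevant_documents(self, query):
        raise NotImplementedError


class VectorStoreRetriever(BaseRetriever):
    """Hypothetical adapter exposing a vector store as a retriever."""

    def __init__(self, vectorstore):
        self.vectorstore = vectorstore

    def get_relevant_documents(self, query):
        return self.vectorstore.similarity_search(query)


class VectorStore:
    """Hypothetical vector store; note that it is not a BaseRetriever."""

    def __init__(self, texts):
        self.texts = texts

    def similarity_search(self, query):
        # Toy "search": keep texts sharing at least one word with the query.
        words = set(query.lower().split())
        return [t for t in self.texts if words & set(t.lower().split())]

    def as_retriever(self):
        return VectorStoreRetriever(self)


def build_qa_chain(retriever):
    """Stand-in for the type validation done by the chain constructor."""
    if not isinstance(retriever, BaseRetriever):
        raise TypeError("instance of BaseRetriever expected")
    return {"retriever": retriever}
```

In this sketch, passing the store itself raises the type error, while passing `store.as_retriever()` is accepted, which mirrors the change made in the notebook.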

The PR also:

- adds the file `paul_graham_essay.txt` referenced by this notebook

- adds `*.pkl` and `*.bin` files generated by this notebook to gitignore

Interestingly enough, prediction accuracy increased greatly, from 19/33
correct to 28/33 correct! This may be due to a new version of langchain
or new versions of the OpenAI models since the last run of the notebook.

Use `numexpr.evaluate` instead of the Python REPL to avoid malicious
code injection.

Tested against the (limited) math dataset and got the same score as
before.

For more permissive tools (like the REPL tool itself), other approaches
ought to be provided (some combination of Sanitizer + Restricted Python +
unprivileged-docker + ...), but for a calculator tool, only mathematical
expressions should be permitted.

See https://github.com/hwchase17/langchain/issues/814
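As an illustration of the "only mathematical expressions" principle, here is a minimal standard-library sketch. It is not the numexpr-based change made in this PR: it swaps in an `ast` whitelist to show the same idea, and the name `safe_math_eval` is hypothetical. It also supports only the four basic operators and unary signs, which is an assumption made to keep the example short.

```python
import ast
import operator

# Whitelisted operators; any other syntax node is rejected.
_BINARY_OPS = {
    ast.Add: operator.add,
    ast.Sub: operator.sub,
    ast.Mult: operator.mul,
    ast.Div: operator.truediv,
}
_UNARY_OPS = {ast.UAdd: operator.pos, ast.USub: operator.neg}


def safe_math_eval(expression):
    """Evaluate a purely arithmetic expression, rejecting everything else."""

    def _eval(node):
        if isinstance(node, ast.Expression):
            return _eval(node.body)
        # Only plain int/float literals are allowed (no strings, no bools).
        if isinstance(node, ast.Constant) and type(node.value) in (int, float):
            return node.value
        if isinstance(node, ast.BinOp) and type(node.op) in _BINARY_OPS:
            return _BINARY_OPS[type(node.op)](_eval(node.left), _eval(node.right))
        if isinstance(node, ast.UnaryOp) and type(node.op) in _UNARY_OPS:
            return _UNARY_OPS[type(node.op)](_eval(node.operand))
        # Names, calls, attribute access, etc. all end up here.
        raise ValueError(f"Unsupported expression element: {type(node).__name__}")

    return _eval(ast.parse(expression.strip(), mode="eval"))
```

An input such as `__import__('os').getcwd()` parses to a function-call node, so this sketch raises `ValueError` instead of executing it, whereas a Python REPL would run it.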

Right now, eval chains require a ground-truth answer for every question.
Collecting this ground truth is cumbersome, so this PR gets around the
issue in two ways:

* Adding a context param to `ContextQAEvalChain` and simply evaluating
  whether the question is answered accurately from the context
* Adding chain-of-thought explanation prompting to improve the accuracy
  of this without ground truth

This also brings feature parity with openai/evals, which has the same
contextual eval without ground truth.

TODO in follow-up:

* Better prompt inheritance. There should be no need for a separate
  prompt for CoT reasoning. How can we merge them?

---------

Co-authored-by: Vashisht Madhavan <vashishtmadhavan@Vashs-MacBook-Pro.local>

This pull request adds an enum class for the various types of agents
used in the project, located in the `agent_types.py` file. Currently,
the project uses hardcoded strings to initialize these agents, which can
lead to errors and makes the code harder to maintain. With the new
enums, the code will be more readable and less error-prone.

The new enum members include:

- ZERO_SHOT_REACT_DESCRIPTION

- REACT_DOCSTORE

- SELF_ASK_WITH_SEARCH

- CONVERSATIONAL_REACT_DESCRIPTION

- CHAT_ZERO_SHOT_REACT_DESCRIPTION

- CHAT_CONVERSATIONAL_REACT_DESCRIPTION

In this PR, I have also replaced the hardcoded strings with the
appropriate enum members throughout the codebase, ensuring a smooth
transition to the new approach.
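The following is a minimal sketch of what such an enum could look like. The member names come from the list above; the class name `AgentType`, the `str` mixin, and the string values are assumptions made for illustration and may not match the contents of `agent_types.py` exactly.

```python
from enum import Enum


class AgentType(str, Enum):
    """Assumed shape of the agent enum; the string values are illustrative."""

    ZERO_SHOT_REACT_DESCRIPTION = "zero-shot-react-description"
    REACT_DOCSTORE = "react-docstore"
    SELF_ASK_WITH_SEARCH = "self-ask-with-search"
    CONVERSATIONAL_REACT_DESCRIPTION = "conversational-react-description"
    CHAT_ZERO_SHOT_REACT_DESCRIPTION = "chat-zero-shot-react-description"
    CHAT_CONVERSATIONAL_REACT_DESCRIPTION = "chat-conversational-react-description"


# Looking up a member by value validates the string at the call site:
# a misspelled value raises ValueError instead of failing later.
agent = AgentType("react-docstore")
```

Mixing in `str` is one possible design choice: members still compare equal to the old hardcoded strings, so existing callers that pass plain strings would keep working during a transition.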

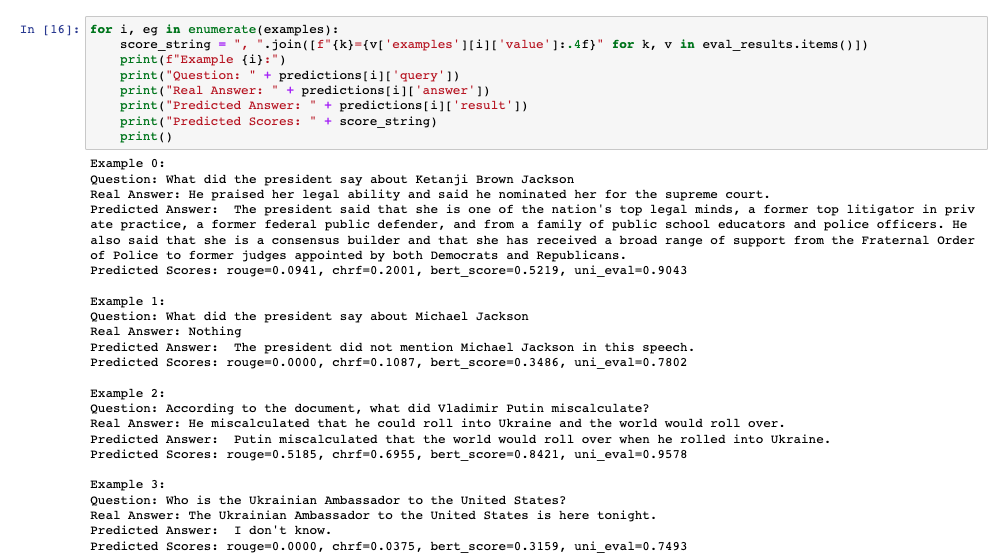

This PR adds additional evaluation metrics for data-augmented QA,

resulting in a report like this at the end of the notebook:

The score calculation is based on the
[Critique](https://docs.inspiredco.ai/critique/) toolkit, an API-based
toolkit (like OpenAI) that has minimal dependencies, so it should be
easy for people to run if they choose.

The code could be simplified further by adding a chain that calls
Critique directly, but that should probably be saved for another PR if
necessary. Any comments or change requests are welcome!

I originally modified only `from_llm` to include the prompt, but I
realized that if the input keys of the custom prompt didn't match those
of the default prompt, it wouldn't work because of how `apply` works.

So I changed the `evaluate` method to check whether the prompt is the
default. If it is not, it checks whether the input keys are the same as
the prompt's keys and updates the inputs accordingly.
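A rough sketch of the kind of key handling described above follows. It is not the code from this PR: the function name `build_eval_inputs`, the default variable names `query`, `answer`, and `result`, and the exact matching rule are all assumptions chosen to illustrate the idea.

```python
# Assumed names of the default prompt's input variables (illustrative only).
DEFAULT_VARIABLES = ("query", "answer", "result")


def build_eval_inputs(
    examples,
    predictions,
    prompt_variables,
    question_key="query",
    answer_key="answer",
    prediction_key="result",
):
    """Build one input dict per example, keyed to suit the prompt in use."""
    caller_keys = (question_key, answer_key, prediction_key)
    # Use the caller's keys only when the prompt is custom AND those keys
    # are exactly the variables the custom prompt expects.
    use_caller_keys = (
        set(prompt_variables) != set(DEFAULT_VARIABLES)
        and set(caller_keys) == set(prompt_variables)
    )
    names = caller_keys if use_caller_keys else DEFAULT_VARIABLES

    inputs = []
    for example, prediction in zip(examples, predictions):
        values = (
            example[question_key],
            example[answer_key],
            prediction[prediction_key],
        )
        inputs.append(dict(zip(names, values)))
    return inputs
```

With the default prompt, the inputs are always renamed to the default variables; with a custom prompt whose variables match the caller's keys, the inputs keep the caller's names so the prompt can be formatted without a missing-key error.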

Let me know if there is a better way to do this.

Also added the custom prompt to the QA eval notebook.

Big docs refactor! The motivation is to make it easier for people to
find the resources they are looking for. To accomplish this, there are
now three main sections:

- Getting Started: steps for getting started, walking through most core
  functionality
- Modules: these are the different modules of functionality that
  langchain provides. Each part here has a "getting started", "how to",
  "key concepts" and "reference" section (except in a few select cases
  where it didn't easily fit).
- Use Cases: this separates use cases (like summarization, question
  answering, evaluation, etc.) from the modules, and provides a
  different entry point to the code base.

There is also a full reference section, as well as extra resources
(glossary, gallery, etc.).

Co-authored-by: Shreya Rajpal <ShreyaR@users.noreply.github.com>