mirror of https://github.com/hwchase17/langchain

master

erick/core-release-0-2-42

v0.2

wfh/shallow

rlm/concept_docs

erick/community-release-0-3-2

eugene/fix_from_pydantic

eugene/experimenting_with_layout

bagatur/community_0_3_2

concept_docs

erick/core-fix-up006-noqas

langchain/langchain-people

wfh/add_react_admonition

cc/test_13

cc/oai_max_tokens

eugene/why_transition_2

eugene/why_transition

erick/multiple-update-docs-urls-to-latest

erick/docs-test-removing-biggest-bundles-performance

erick/docs-enable-ruff-rules-on-docs

wfh/update_project_name

bagatur/rm_optional_defaults

eugene/add_grit_linter

erick/test-ruff-output-existing

erick/docs-bump-memory-limit

eugene/qa_test_2

eugene/ci_fix_question_template

erick/partners-box-release-0-2-2

eugene/add_memory_equivalents

isaac/toolerrorpassing

cc/extended_tests

v0.1

cc/release_mongo

eugene/robocorp

eugene/foo

eugene/pydantic_v1_foo

erick/community-release-0-2-17

erick/docs-mdx-v3-compat-wip

eugene/update_is_caller_internal

isaac/toolerrorhandling03

bagatur/des_from_path

bagatur/v0.3/preview_api_ref

bagatur/run_tutorials

bagatur/run_all_docs_how_to

isaac/toolerrorhandling

bagatur/rfc_docs_gha

eugene/foo_meow

bagatur/fix_embeddings_filter_init

wfh/keyword_runnable_like_bu

eugene/add_mypy_plugin

eugene/v0.3_wut

bagatur/core_0_3_0_dev1

bagatur/v0.3rc_merge_master_2

bagatur/v0.3rc_merge_master

eugene/core_0.3rc_first

bagatur/oai_emb_fix

isaac-recursiveurlloader-testing

harrison/3.0

bagatur/core_pydocstyle_lint

harrison/support-kwargs-vectorstore

wfh/more_interops

bagatur/format_content_as

erick/community-undo-azure-ad-access-token-breaking-change

isaac/responseformatstuff

bagatur/delight

erick/ai21-integration-test-fixes

erick/all-more-lint-additions

erick/infra-continue-on-error

erick/ai21-address-breaking-changes-in-sdk-2-14-0-wip

bagatur/rfc_anthropic_cache_usage

bagatur/json_mode_standard

bagatur/dict_msg_tmpl2

wfh/nowarn

bagatur/rfc_dont_update_run_name

bagatur/oai_disabled_params

jacob/templates

bagatur/content_block_template

erick/chroma-fix-typing

isaac/moreembeddingtests

eugene/openai_0.3

bagatur/standard_tests_async

eugene/merge_pydantic_3_changes

eugene/0.3_release_docs

cc/deprecate_evaluators

bagatur/selector_add_examples

bagatutr/langsmith_example_selector

docs/fix_chat_model_os

eugene/security_related

eugene/add_async_api

eugene/integration_docs_cohere

eugene/multimodal_embedding_model

isaac/moretooltables

bagatur/merged_docs_styling

eugene/clean_up_pre_init

eugene/v0.3_meow

bagatur/serialize-pydantic-metadata

eugene/fix_tool_extra

erick/infra-pydantic-v2-scheduled-testing

bagatur/add_pydantic_sys_info

eugene/community_draft

cc/fix_exp_ci

eugene/pydantic_v_3

erick/docs-llm-embed-index-tables-wip

eugene/update_llm_result_types

eugene/add_json_schema_methods

bagatur/docs_versioning

bagatur/simplify_docs_header_footer

bagatur/docs_cp_ls_theme

erick/cli-new-template-types

isaac/ollamauniversalchat

isaac/llmintegrationtests

bagatur/fewshot_scratch

isaac/ollamallmfix

wfh/warn_name

eugene/add_indexer_to_retriever

bagatur/07_26_24/poetry_lock

cc/toolkits

erick/docs-new-integrations-docs

cc/api_chain

wfh/link

eugene/rate_limiting_requests

eugene/add_rate_limiter_integrations

isaac/ollamaimageissues

cc/many_tools_guide

eugene/update_relative_imports

eugene/add_timeout_for_tests

isaac/tavilynewlinefix

isaac/create_react_agent_doc_fix

eugene/add_tests_for_pydantic_models

eugene/document_indexer_v2

bagatur/ruff_0_5_3

wfh/parent

wfh/async_chromium

erick/infra-try-removing-uv-for-editable-install

eugene/indexing_abstraction_minimal

jacob/currying_docs

jacob/curry_tools

eugene/indexing_abstraction

wfh/triggered

cc/bind_tools

jacob/docs_style

isaac/fewshotpromptdocs

eugene/migrate_graphvectorstore_to_community

jacob/tools_additional_params

wfh/curry

wfh/inherit

eugene/stores_new

bagatur/rfc_tool_call_id_in_config

bagatur/docs_intro_wording

bagatur/lint_all_core_deps

eugene/update_add_texts

eugene/index_manager

eugene/tracing_interop2

eugene/migrate_vectorstores

eugene/cleanup_deprecated_code

wfh/is_error

eugene/root_validators_02

eugene/root_validators_03

eugene/pinecone_add_standard_tests

eugene/indexing_v2

wfh/url

bagatur/rfc_configurable_model

cc/tool_token_counts

renderer

bagatur/mypy_v1_update

wfh/add_list_support

bagatur/rfc_tool_call_filter

erick/anthropic-release-0-1-16

wfh/add_tool_param_descripts_2

wfh/add_tool_param_descripts

nc/19jun/core-no-pydantic

bagatur/opinionated_formatter

bagatur/rfc_docstring_lint

cc/fix_milvus

cc/update_pydantic_dep

maddy/support-options-in-langchainhub

isaac/chatopenaiparalleltoolcallingparam

erick/huggingface-relax-tokenizers-dep

rlm/test-llama-cpp

bagatur/retrieval_v2_scratch

erick/core-loosen-packaging-lib-version

eugene/disable_lint_rule

eugene/get_model_defaults

wfh/allyourtreesarebelongtome

eugene/pydantic_migration_2_b

isaac/sitemaploader-goldendocs

bagatur/recursive_url_bash

erick/docs-update-chatbedrock-with-tool-calling-docs-dont-use

erick/docs-update-chatbedrock-with-tool-calling-docs-do-not-use

isaac/sitemaploader-testing

isaac/sitemaploader-tests

eugene/pydantic_migration_2

erick/core-throw-error-on-invalid-alternative-import-in-deprecated

erick/core-throw-errors-on-invalid-alternative-import

bagatur/rfc_smithify_docs

eugene/async_history_2

bagatur/ai21_0_1_6

erick/docs-rewrite-contributor-docs

cc/update_openai_streaming_token_counts

eugene/llm_token_counts

eugene/langchain_how_to_config

bagatur/docs-format-api-ref

eugene/callbacks_propagate

erick/docs-v02-url-rfc

maddy/default-prompt-private-in-hub

eugene/update_version_docs

bagatur/parse_tool_docstring_fix

erick/docs-algolia-api-key-update

eugene/how_does_this_stream

eugene/langchain_core_manager

eugene/update_linting

erick/docs-ignore-echo-false-blocks

dqbd/api_ref_styles

erick/infra-codespell-v1

erick/infra-codespell-in-v1-branch

erick/community-release-0-2-0rc1

eugene/add_change_log2

harrison/new-docs

cc/retriever_score

bagatur/community_0_0_37

wfh/may3/help

eugene/core_0.2.0rc1

cc/docs_build

eugene/update_warnings2

bagatur/oai_tool_choice_required

wfh/add_rid_to_chain

eugene/migrate_document_loaders

bagatur/mistral_client

wfh/add_parameter_descriptions

erick/core-remove-batch-size-from-llm-start-callbacks

eugene/refactor_deprecations

eugene/release_0_2_0

eugene/web_retriever

eugene/move_memories_2

bagatur/tryout_uv

eugene/entity_store

eugene/run_type_for_lambdas

bagatur/rfc_standardize_input_msgs

bagatur/rfc_serialized_tool

brace/show-last-update-docs

erick/release-note-experiments

eugene/runnable_config

cc/function_message

rlm/rag_eval_guide

bagatur/rfc_token_usage

eugene/custom_embeddings

eugene/community_fix_imports

bagatur/goog_doc_nit

erick/docs-runnable-list-operations

bagatur/rm_convert_to_tool_docs

eugen/providers_update

erick/core-deprecate-vectorstore-relevance-scoring

eugene/outline_wrapper_1

erick/pytest-experiments-2

erick/pytest-experiments

erick/partner-cloudflare

rlm/langsmith_testing

erick/community-patch-clickhouse-make-it-possible-to-not-specify-index

eugene/postgres_vectorstore

bagatur/openllm_new_api

bagatur/layerupai

cc/deprecated_imports

erick/cohere-adaptive-rag-cookbook

erick/cohere-multi-tool-integration-test

dqbd/openai-lax-jsonschema

eugene/xml_again

brace/format-dpcs

eugene/pull_to_funcs

bagatur/fix_getattr

erick/core-patch-placeholder-message-shorthand

bagatur/0.2

eugene/unsafe_pydantic

bagatur/community_migration_script

bagatur/versioned_docs_2

bagatur/versioned_docs

bagatur/find_broken_links

bagatur/stream_pydantic

wfh/add_hub_version

eugene/stackframe

wfh/log_error

wfh/add_eval_metadata

erick/airbyte-patch-baseloader-wip

bagatur/rename_msg_kwargs

wfh/specify_version

fork/feature_audio_loader_auzre_speech

erick/infra-remove-venv-from-poetry-cache

erick/ci-test-timeout

erick/test-community-ci

erick/test-ci

wfh/add_warnings

eugene/huggingface

erick/core-patch-community-patch-baseloader-to-core

erick/core-minor-multimodal-document-page-content-rfc

bagatur/support_pydantic_context

bagatur/rfc_structured_list

erick/test-partner-failure

erick/test-partner-success

erick/test-error

eugene/fix_openai_community_stream

erick/test-ci-should-fail

erick/testutils

jacob/people

erick/docs-remove-platforms-redirect

eugene/add_people

erick/infra-check-min-versions-in-pr-ci

langchain-ai/langchain@5cbabbd

eugene/test_lint

erick/exa-lint

bagatur/make_cohere_client_optional

bagatur/rfc_as_str

erick/infra--individual-template-ci-

bagatur/rfc_@chain_typing

erick/infra-try-1-job-sphinx-build

rlm/mistral_cookbook

bagatur/rfc_chat_invoke_llm_res

erick/infra-rtd-build-bump-null

erick/infra-rtd-build-bump

erick/autoapi-test

bagatur/speedup_sphinx

erick/community-lint

erick/partner-nomic

erick/cli-langchain-dep-versions

bagatur/init_chat_prompt_msg_like

wfh/custom_prompt

fork/async-doc-loader

rlm/rag_from_scratch

eugene/message_history_test

erick/api-ref-navbar-update

bagatur/rfc_bind_collision

bagatur/bind_outside_agent

erick/release-notes

jacob/chatbot_message_passing

bagatur/3_12_ci

bagatur/assign_unpack

bagatur/docs_top_nav

bagatur/batch_overload_typing

bagatur/core_0_1_15_rc_1

bagatur/rfc_rich_retrieval

bagatur/runnable_drop

bagatur/class_chain

bagatur/initial_tool_choices

eugene/streaming_events

erick/core-patch-fallbacks-error-chain

harrison/tool-invocation

bagatur/tool_executor

erick/deepinfra-chat

eugene/agents_docs

eugene/update_index.md

bagatur/rfc_tool_executor

bagatur/rfc_extraction_improvement

bagatur/downgrade_setup_python

erick/mistralai-patch-enforce-stop-tokens

bagatur/thread_inof

bagatur/docs_last_updated_2

bagatur/docs_last_updated

erick/google-docs

dqbd/json-output-oai-parser-serialization

bagatur/cli_pkg_tmpl_lc_ver

erick/infra-try-show-last-update-time

bagatur/try_stat

bagatur/core_tracer_backwards_compat

erick/infra-ci-python-matrix-update-3-12

bagatur/rfc_retriever_return_str

do-not-merge

bagatur/dispatch_main_ci

bagatur/show_last_update_time

harrison/docs-m

harrison/docs-revamp-mirror

harrison/new-docs-revamp

harrison/agents-rewrite-code

bagatur/api_flyout

harrison/revamp-memory

harrison/merged-branches

bagatur/stuff_docs_lcel

harrison/agent-docs-concepts

harrison/agent-docs-custom

bagatur/api-ref-navbar-update

bagatur/combine_docs_chain_as_runnable

erick/ci-test-do-not-merge

bagatur/chat_hf

erick/infra--run-ci-on-all-prs-

bagatur/core_update_ruff_mypy

erick/nbconvert

bagatur/lc_stack_update

wfh/bind_tools

eugene/fix_xml_agent

harrison/anthropic-package

wfh/vertexai_fixup

bagatur/core_0_0_13

wfh/add_oai_agent_core_examples

erick/docs-bullet-points

wfh/gemini

eugene/bug_history

bagatur/community

harrison/turn-off-serializable

harrison/serializable-baga

wfh/prevent_outside

harrison/mongo-agent

erick/all-patch---change-ci-title-in-event-of-no-matrix-expansion-

rlm/update-img-prompt

eugene/update_file_chat_memory

harrison/deepsparse

bagatur/core_0.1

harrison/integrations

erick/docs-docusaurus-3

rlm/mm-rag-deck

bagatur/core_lint_docstring

bagatur/core_0_0_8

bagatur/lcel_get_started

bagatur/fmt_notebooks

bagatur/export_prompt_chat_classes

harrison/add-imports

bagatur/serialization_tests

bagatur/patch_0.0.400

wfh/tqdm_for_wait

bagatur/fix_core_namespace

erick/core-namespace-same

erick/api-docs-core-bugfix-

brace/new-lc-stack-svg

wfh/tqdm_wait

v0.0.339

bagatur/cogniswitch

dqbd/docs-responsivity-fix

bagatur/multi_return_source

wfh/func_eval

bagatur/full_template_docs

bagatur/callbacks-refactor

(vectorstore)/PGVectorAsync

rlm/mm_template

bagatur/rfc_bind_getattr

erick/skip-release-check-cli

bagatur/rm_return_direct_error

rlm/sql-pgvector-template

erick/improvement-format-notebooks

erick/improvement-default-docs-url-root

bagautr/rfc_image_template

refactorChromaInitLogic

rlm/ollama_json

bagatur/rfc_pinecone_hybrid

eugene/document_runnables2

harrison/root-listeners

wfh/add_llm_output_to_adapter

rlm/biomedical-rag

bagatur/cohere_input_type

bagatur/update-schema

erick/cli-codegen

wfh/content_union

bagatur/docs_smith_serve

rlm/open_clip_embd_expt

rlm/multi-modal-template

wfh/ossinvoc

pg/test-publish-rc-versions

wfh/conversational_feedback

bagatur/voyage-ai

template-readme-missing-env

rescana-com/master

bagatur/lakefs-loader

bagatur/readthedocs-loader-improvements

hwchase17-patch-1

eugene/fix_type_onbase_transformer

bagatur/deep_memory_version_1

api-reference-agents-functions

erick/cli-ci

bagatur/retry_nit

wfh/tree_distance

jacoblee93-patch-1-1

wfh/runnable_traceable

bagatur/rfc_chat_batch_gen

rlm/text-to-pgvector

bagatur/e2b-integration2

bagatur/api-reference-agents-functions

shorthills-ai/master

bagatur/e2b-integration

wfh/save_model_name

bagatur/voyage

rlm/LLaMA2_sql_scrub

bagatur/cogniswitch_chains

bagatur/private_fn

erick/langservehub

bagatur/rfc_vecstore_interface

nc/repl-lib

charlie/fine-tuning-notebook

harrison/move-imports

wfh/rtds

wfh/json_schema_evaluator

pg/python-3.12

wfh/eval_public_dataset

ankush/single-generations

nc/pandas-eval

eugene/update_warning_class

ankush/single-input

ankush/delete_v1_tracer

wfh/background

bagatur/bump_304

bagatur/dedup_transformer

eugene/fix_webbase_loader

harrison/move-pydantic-v1

wfh/vectorstore_tracing

vdaas-feature/vald

harrison/agents-exoskelton-1

harrison/agents-exoskeleton

jacob/routing_cookbook

harrison/more-imports

harrison/remove-from-init

eugene/automaton_variant_4

harrison/specified-input-keys

bagatur/docs_zoom

jacob/feature_vercel_analytics

wfh/update_types

francisco/sql_agent_improvements

wfh/implicit_client

bagatur/auto_rewrite_retrieval

bagatur/konko

eugene/automaton_variant_3

wfh/default_retries

bagatur/lint_fix

rlm/fix-prompts

wfh/redirects

eugene/automaton_variant_2

bagatur/fix_multiquery

wfh/json_other

wfh/fix_link

bagatur/add-data-anonymizer

bagatur/mem_session

molly/vectorstore-batching

deepsense-ai/llama-cpp-grammar

bagatur/gpt_4_docstring

harrison/add-llm-kwargs

bagatur/redis_refactor

rlm/llama-grammar

bagatur/runnable_mem

wfh/clirun

eugene/document_pipeline

harrison/retrieval-agents

bagatur/rfc_fallback_inherit

bagatur/epsilla

bagatur/promptguard

harrison/pydantic-bridge

bagatur/cheatsheet

wfh/update_criteria_prompt

pydantic/b2_bump

eugene/pydantic_v2_tools2

wfh/criteria_strat

eugene/wrap_openapi_stuff

bagatur/bump_264

bagatur/new_msg

rlm/agent_use_case

harrison/remove-things-from-init

harrison/clean-up-imports

bagatur/lite_llm

bagatur/rfc_zep_search

bagatur/pydantic_agnostic

bagatur/bagel

bagatur/fix_sched_2

wfh/async_eval_default

bagatur/respect_light_mode

bagatur/docsly

eugene/automaton_variant_1

wfh/return_exceptions

wfh/example_id_config

bagatur/rm_nuclia_ext

wfh/fix_recursive_url_loader

bagatur/runnable_locals

wfh/embeddings_callbacks_v3

bagatur/google_drive

rlm/chatbots_use_case

wfh/langsmith_nopydantic

eugene/enum_rendering

harrison/add-memory-to-sql

bagatur/rfc_fallbacks

harrison/xml-agent

bagatur/mod_desc

wfh/memory_interface

wfh/throw_on_broken_links

eugene/expand_documentation

wfh/api_ref

wfh/swizzle

eugene/test

harrison/async-web

harrison/fix-typo

wfh/retriever_additional_data

harrison/experimental-package

bagatu/rfc_pkg_per_chain

wfh/default_data_type

harrison/move-to-schema/chain

harrison/move-to-schema-more-callbacks

wfh/to_prompt_template

wfh/not_implemented

wfh/limit_concurrency

wfh/delete_deprecated

harrison/move_to_core

harrison/move-to-core/prompts

wfh/add_agent_trajectory_loader

ankush/message-eval

harrison/variable-table

wfh/skip_no_output

harrison/apply-async

harrison/improve-docs-formatting

vwp/embedding_fuzzy

wfh/evals_docs_reorg_draft

vwp/comparison_with_references

wfh/comparison_with_references

harrison/split-schema-dir

vwp/accept_no_reasoning

wfh/embeddings_callbcaks

vwp/fix_promptlayer

wfh/key_matching

harrison/marqo

vwp/make_new_eval_chain_run

vwp/any_callable

vwp/time_to_first_token

vwp/accept_chain

vwp/evals_docs_reorg

vwp/similarity

vwp/use_langsmith

vwp/rm_dep

vwp/script_for_adding_docs

octoml/master

harrison/set-pydantic-docs

harrison/markdown-docs

vwp/drafts/unit_testing

harrison/functions

ankush/asyncio-gather-agenerate

vwp/retriever_callbacks_v2

vwp/schema_dir

harrison/allow-kwargs

eugene/persistence_db

vwp/anthropic_token_usage

vwp/evaluator_chains

vwp/envurl

eugene/research_v1

eugene/chain_generics

harrison/neo4j-lint

ankush/callbacks-cleanup

dev2049/pgvector_fix

harrison/anthropic-chat

vwp/simplify_tracer2

vwp/simplify_tracer

harrison/schema-directory

harrison/comp-prompt

dev2049/rough_draft_doc_manager

vwp/child_runs

ankush/chat-agent-parsing

dev2049/combine_quickstart

dev2049/concise_get_started

vwp/feedback_crud

harrison/exclude-embedings

dev2049/azure_vecstore

vwp/base_model

dev2049/getting_started_clean

dev2049/change_llm_name

dev2049/embedding_rename

eugene/prompt_template

harrison/serialize-chat

dev2049/embed_docs_to_texts

dev2049/doc_clean

dev2049/chroma_cleanup

harrison/few-shot-w-template-fix

retrievalqafinetune

dev2049/retrieval_eval

vwp/tracing_docs

vwp/bold_headergs

eugene/add_file_system

harrison/return-prompt

vwp/tracer-async-call

eugene/check_something

dev2049/combine_refac

eugene/updat_extended_tests

eugene/meow_draft

eugene/fix_google_palm_tests

vwp/dcv2

eugene/retriever_version

tjaffri/dgloader

harrison/pdfplumber

vwp/patch

harrison/character-chat-agent

harrison/mongo-loader

harrison/sharepoint

eugene/add_caching_from_master_only

dev2049/save-to-notion-tool

dev2049/self_query_integration

dev2049/update_lock

eugene/revert_workflows

revert-4465-harrison/env-var

dev2049/pgvector-size-fix

vwp/eval_examples

fork-chains

eugene/test_branch

vwp/add-github-api-utility

vwp/from_llm_and_tools

vwp/pandas_cb_manager

add-scenexplain-tool

vwp/tools_callbacks

vwp/relax_chat_agent

vwp/parser__type

vwp/filter_ambiguous_args

harrison/get-working-with-agents

dev2049/null_callback_hack

dev2049/llm_requests_chain

vwp/test_on_built_wheel

vwp/avoid_poetry_deps_in_ci

eugene/openai_optional

vwp/agent_tests

vwp/structured_tools

vwp/align_search_tools

vwp/structured_tools_with_pyd

vwp/inheritance_same_agents

vwp/chatregtests

dev2049/default_models

dev2049/perfect_retriever

dev2049/docs_stateful

vwp/add_args

khimaros/master

vwp/chroma_elements

vwp/default_dont_raise

vwp/lintfix

harrison/anthropic

dev2049/retrieval_eval_nb

harrison/contextual-compression

vwp/marathon

agents-4-18

harrison-outerr-exc

vwp/hf_image_gen

vwp/hf_imagen

vwp/tools_undo

vwp/characters_2

vwp/tools-refactor-2

harrison/autogpt

harrison/typeo

dev2049/fmt_nbs

vwp/numexpr

harrison/characters-nb

vwp/characters_with_planning

harrison/pinecone-backwards-compat

vwp/openapi_with_tool_retrieval

harrison/aws-text

ankush/patch1

harrison/processor

harrison/script-update

harrison/api-chain

harrison/ai21-embeddings

harrison/alpaca

nc/poe-handler-chat-model

nc/poe-handler

harrison/mrkl-parser

harrison/agent-experiments

harrison/replicate

harrison/chat-chain

harrison/update-wandb

harrison/debug

harrison/qasper

harrison/dbpedia

harrison/changes

jeremy/guardrails

nc/guardrails-error-handling

harrison/guardrails

harrison/use-output-parsers

John-Church-guard

agent_evaluation

harrison/kor-chain

harrison/inference-api

ankush/callback-refactor

harrison/eval

harrison/audio

ankush/prompt-abstractions

harrison/memory-chat

harrison/indexes

ankush/partial-prompt-apply

harrison/sagemaker

harrison/datetime

harrison/openapiagent

harrison/paged-pdf

harrison/pswsl

ankush/example-runner

harrison/guards

scad/api-chain

harrison/prompt-bugs

harrison/sql-agent

harrison/pinecone-try-except

harrison/callback-updates

harrison/map-rerank

harrison/combine-docs-parse

harrison/azure-rfc

harrison/sequential_chain_from_prompts

harrison/agent-refactor

harrison/agent_intermediate_steps

harrison/agent_multi_inputs

harrison/promot-mrkl

harrison/fix_logging_api

harrison/use_output_parser

harrison/track_intermediate_steps

harrison/sql_error

harrison/logging_to_file

harrison/output_parser

harrison/flexible_model_args

harrison/agent-improvements

harrison/router_docs

harrison/docs

samantha/add_llm_to_example

harrison/reorg_smart_chains

mako-templates

harrison/save_metadatas

harrison/router

harrison/custom_pipeline

harrison/chain_pipeline

harrison/prompts_docs

harrison/attempt_citing_in_prompt

harrison/load_prompt

harrison/prompts_take_2

harrison/ape

harrison/prompt_examples

harrison/add_dependencies

langchain-ai21==0.1.4

langchain-ai21==0.1.5

langchain-ai21==0.1.6

langchain-ai21==0.1.7

langchain-airbyte==0.1.1

langchain-anthropic==0.1.12

langchain-anthropic==0.1.13

langchain-anthropic==0.1.14rc1

langchain-anthropic==0.1.14rc2

langchain-anthropic==0.1.15

langchain-anthropic==0.1.16

langchain-anthropic==0.1.17

langchain-anthropic==0.1.18

langchain-anthropic==0.1.19

langchain-anthropic==0.1.20

langchain-anthropic==0.1.21

langchain-anthropic==0.1.22

langchain-anthropic==0.1.23

langchain-anthropic==0.2.0

langchain-anthropic==0.2.0.dev0

langchain-anthropic==0.2.0.dev1

langchain-anthropic==0.2.1

langchain-anthropic==0.2.2

langchain-anthropic==0.2.3

langchain-azure-dynamic-sessions==0.1.0

langchain-azure-dynamic-sessions==0.1.0rc0

langchain-azure-dynamic-sessions==0.2.0

langchain-box==0.1.0

langchain-box==0.2.0

langchain-box==0.2.1

langchain-chroma==0.1.1

langchain-chroma==0.1.2

langchain-chroma==0.1.4

langchain-cli==0.0.22

langchain-cli==0.0.23

langchain-cli==0.0.24

langchain-cli==0.0.25

langchain-cli==0.0.26

langchain-cli==0.0.27

langchain-cli==0.0.28

langchain-cli==0.0.29

langchain-cli==0.0.30

langchain-cli==0.0.31

langchain-community==0.0.35

langchain-community==0.0.36

langchain-community==0.0.37

langchain-community==0.0.38

langchain-community==0.2.0

langchain-community==0.2.0rc1

langchain-community==0.2.1

langchain-community==0.2.10

langchain-community==0.2.11

langchain-community==0.2.12

langchain-community==0.2.13

langchain-community==0.2.14

langchain-community==0.2.15

langchain-community==0.2.16

langchain-community==0.2.17

langchain-community==0.2.2

langchain-community==0.2.3

langchain-community==0.2.4

langchain-community==0.2.5

langchain-community==0.2.6

langchain-community==0.2.7

langchain-community==0.2.9

langchain-community==0.3.0

langchain-community==0.3.0.dev1

langchain-community==0.3.0.dev2

langchain-community==0.3.1

langchain-core==0.1.47

langchain-core==0.1.48

langchain-core==0.1.50

langchain-core==0.1.51

langchain-core==0.1.52

langchain-core==0.2.0

langchain-core==0.2.0rc1

langchain-core==0.2.1

langchain-core==0.2.10

langchain-core==0.2.11

langchain-core==0.2.12

langchain-core==0.2.13

langchain-core==0.2.15

langchain-core==0.2.16

langchain-core==0.2.17

langchain-core==0.2.18

langchain-core==0.2.19

langchain-core==0.2.2

langchain-core==0.2.20

langchain-core==0.2.21

langchain-core==0.2.22

langchain-core==0.2.23

langchain-core==0.2.24

langchain-core==0.2.25

langchain-core==0.2.26

langchain-core==0.2.27

langchain-core==0.2.28

langchain-core==0.2.29

langchain-core==0.2.29rc1

langchain-core==0.2.2rc1

langchain-core==0.2.3

langchain-core==0.2.30

langchain-core==0.2.31

langchain-core==0.2.32

langchain-core==0.2.33

langchain-core==0.2.34

langchain-core==0.2.35

langchain-core==0.2.36

langchain-core==0.2.37

langchain-core==0.2.38

langchain-core==0.2.39

langchain-core==0.2.4

langchain-core==0.2.40

langchain-core==0.2.41

langchain-core==0.2.5

langchain-core==0.2.6

langchain-core==0.2.7

langchain-core==0.2.8

langchain-core==0.2.9

langchain-core==0.3.0

langchain-core==0.3.0.dev1

langchain-core==0.3.0.dev2

langchain-core==0.3.0.dev3

langchain-core==0.3.0.dev4

langchain-core==0.3.0.dev5

langchain-core==0.3.1

langchain-core==0.3.2

langchain-core==0.3.3

langchain-core==0.3.4

langchain-core==0.3.5

langchain-core==0.3.6

langchain-core==0.3.7

langchain-core==0.3.8

langchain-core==0.3.9

langchain-couchbase==0.0.1

langchain-couchbase==0.1.0

langchain-couchbase==0.1.1

langchain-exa==0.1.0

langchain-exa==0.2.0

langchain-experimental==0.0.58

langchain-experimental==0.0.59

langchain-experimental==0.0.60

langchain-experimental==0.0.61

langchain-experimental==0.0.62

langchain-experimental==0.0.63

langchain-experimental==0.0.64

langchain-experimental==0.0.65

langchain-experimental==0.3.0

langchain-experimental==0.3.0.dev1

langchain-experimental==0.3.1

langchain-fireworks==0.1.3

langchain-fireworks==0.1.4

langchain-fireworks==0.1.5

langchain-fireworks==0.1.6

langchain-fireworks==0.1.7

langchain-fireworks==0.2.0

langchain-fireworks==0.2.0.dev0

langchain-fireworks==0.2.0.dev1

langchain-fireworks==0.2.0.dev2

langchain-fireworks==0.2.1

langchain-groq==0.1.10

langchain-groq==0.1.4

langchain-groq==0.1.5

langchain-groq==0.1.6

langchain-groq==0.1.8

langchain-groq==0.1.9

langchain-groq==0.2.0

langchain-groq==0.2.0.dev0

langchain-groq==0.2.0.dev1

langchain-huggingface==0.0.1

langchain-huggingface==0.0.2

langchain-huggingface==0.0.3

langchain-huggingface==0.1.0

langchain-huggingface==0.1.0.dev1

langchain-ibm==0.1.5

langchain-ibm==0.1.6

langchain-ibm==0.1.7

langchain-ibm==0.1.8

langchain-ibm==0.1.9

langchain-milvus==0.1.0

langchain-milvus==0.1.1

langchain-milvus==0.1.2

langchain-milvus==0.1.3

langchain-milvus==0.1.4

langchain-milvus==0.1.5

langchain-mistralai==0.1.10

langchain-mistralai==0.1.11

langchain-mistralai==0.1.12

langchain-mistralai==0.1.13

langchain-mistralai==0.1.6

langchain-mistralai==0.1.7

langchain-mistralai==0.1.8

langchain-mistralai==0.1.9

langchain-mistralai==0.2.0

langchain-mistralai==0.2.0.dev1

langchain-mongodb==0.1.4

langchain-mongodb==0.1.5

langchain-mongodb==0.1.6

langchain-mongodb==0.1.7

langchain-mongodb==0.1.8

langchain-mongodb==0.1.9

langchain-mongodb==0.2.0

langchain-mongodb==0.2.0.dev1

langchain-nomic==0.1.0

langchain-nomic==0.1.1

langchain-nomic==0.1.2

langchain-nomic==0.1.3

langchain-ollama==0.1.0

langchain-ollama==0.1.1

langchain-ollama==0.1.2

langchain-ollama==0.1.3

langchain-ollama==0.2.0

langchain-ollama==0.2.0.dev1

langchain-openai==0.1.10

langchain-openai==0.1.11

langchain-openai==0.1.12

langchain-openai==0.1.13

langchain-openai==0.1.14

langchain-openai==0.1.15

langchain-openai==0.1.16

langchain-openai==0.1.17

langchain-openai==0.1.19

langchain-openai==0.1.20

langchain-openai==0.1.21

langchain-openai==0.1.21rc1

langchain-openai==0.1.21rc2

langchain-openai==0.1.22

langchain-openai==0.1.23

langchain-openai==0.1.24

langchain-openai==0.1.25

langchain-openai==0.1.5

langchain-openai==0.1.6

langchain-openai==0.1.7

langchain-openai==0.1.8

langchain-openai==0.1.8rc1

langchain-openai==0.1.9

langchain-openai==0.2.0

langchain-openai==0.2.0.dev0

langchain-openai==0.2.0.dev1

langchain-openai==0.2.0.dev2

langchain-openai==0.2.1

langchain-openai==0.2.2

langchain-pinecone==0.1.1

langchain-pinecone==0.1.2

langchain-pinecone==0.1.3

langchain-pinecone==0.2.0

langchain-pinecone==0.2.0.dev1

langchain-prompty==0.0.1

langchain-prompty==0.0.2

langchain-prompty==0.0.3

langchain-prompty==0.1.0

langchain-qdrant==0.0.1

langchain-qdrant==0.1.0

langchain-qdrant==0.1.1

langchain-qdrant==0.1.2

langchain-qdrant==0.1.3

langchain-qdrant==0.1.4

langchain-qdrant==0.2.0.dev1

langchain-robocorp==0.0.10

langchain-robocorp==0.0.10.post1

langchain-robocorp==0.0.6

langchain-robocorp==0.0.7

langchain-robocorp==0.0.8

langchain-robocorp==0.0.9

langchain-robocorp==0.0.9.post1

langchain-text-splitters==0.0.2

langchain-text-splitters==0.2.0

langchain-text-splitters==0.2.1

langchain-text-splitters==0.2.2

langchain-text-splitters==0.2.4

langchain-text-splitters==0.3.0

langchain-text-splitters==0.3.0.dev0

langchain-text-splitters==0.3.0.dev1

langchain-together==0.1.1

langchain-together==0.1.2

langchain-together==0.1.3

langchain-together==0.1.4

langchain-together==0.1.5

langchain-unstructured==0.1.0

langchain-unstructured==0.1.1

langchain-unstructured==0.1.2

langchain-unstructured==0.1.4

langchain-unstructured==0.1.5

langchain-upstage==0.1.4

langchain-upstage==0.1.5

langchain-voyageai==0.1.1

langchain-voyageai==0.1.2

langchain==0.1.17

langchain==0.1.19

langchain==0.1.20

langchain==0.2.0

langchain==0.2.0rc1

langchain==0.2.0rc2

langchain==0.2.1

langchain==0.2.10

langchain==0.2.11

langchain==0.2.12

langchain==0.2.13

langchain==0.2.14

langchain==0.2.15

langchain==0.2.16

langchain==0.2.2

langchain==0.2.3

langchain==0.2.4

langchain==0.2.5

langchain==0.2.6

langchain==0.2.7

langchain==0.2.8

langchain==0.2.9

langchain==0.3.0

langchain==0.3.0.dev1

langchain==0.3.0.dev2

langchain==0.3.1

langchain==0.3.2

v0.0.1

v0.0.100

v0.0.101

v0.0.102

v0.0.103

v0.0.104

v0.0.105

v0.0.106

v0.0.107

v0.0.108

v0.0.109

v0.0.110

v0.0.111

v0.0.112

v0.0.113

v0.0.114

v0.0.115

v0.0.116

v0.0.117

v0.0.118

v0.0.119

v0.0.120

v0.0.121

v0.0.122

v0.0.123

v0.0.124

v0.0.125

v0.0.126

v0.0.127

v0.0.128

v0.0.129

v0.0.130

v0.0.131

v0.0.132

v0.0.133

v0.0.134

v0.0.135

v0.0.136

v0.0.137

v0.0.138

v0.0.139

v0.0.140

v0.0.141

v0.0.142

v0.0.143

v0.0.144

v0.0.145

v0.0.146

v0.0.147

v0.0.148

v0.0.149

v0.0.150

v0.0.151

v0.0.152

v0.0.153

v0.0.154

v0.0.155

v0.0.156

v0.0.157

v0.0.158

v0.0.159

v0.0.160

v0.0.161

v0.0.162

v0.0.163

v0.0.164

v0.0.165

v0.0.166

v0.0.167

v0.0.168

v0.0.169

v0.0.170

v0.0.171

v0.0.172

v0.0.173

v0.0.174

v0.0.175

v0.0.176

v0.0.177

v0.0.178

v0.0.179

v0.0.180

v0.0.181

v0.0.182

v0.0.183

v0.0.184

v0.0.185

v0.0.186

v0.0.187

v0.0.188

v0.0.189

v0.0.190

v0.0.191

v0.0.192

v0.0.193

v0.0.194

v0.0.195

v0.0.196

v0.0.197

v0.0.198

v0.0.199

v0.0.1rc0

v0.0.1rc1



8549 Commits (83f62fdacfa7930c975e2c4aea5733b17e8d6bde)

| Author | SHA1 | Message | Date |

|---|---|---|---|

|

|

7164015135

|

community[minor]: Add Openvino embedding support (#19632)

This PR is used to support both HF and BGE embeddings with openvino --------- Co-authored-by: Alexander Kozlov <alexander.kozlov@intel.com> |

6 months ago |

|

|

cd55d587c2

|

langchain[patch]: Upgrade openai's sdk and solve some interface adaptation problems. (#19548)

- **Issue:** close #19534 |

6 months ago |

|

|

12861273e1

|

experimental[patch]: Removed 'SQLResults:' from the LLMResponse in SQLDatabaseChain (#17104)

**Description:**

When using the SQLDatabaseChain with the Llama2-70b LLM and a SQLite
database, I was getting `Warning: You can only execute one statement at
a time.`.

```
from langchain.sql_database import SQLDatabase
from langchain_experimental.sql import SQLDatabaseChain

sql_database_path = '/dccstor/mmdataretrieval/mm_dataset/swimming_record/rag_data/swimmingdataset.db'
sql_db = get_database(sql_database_path)
db_chain = SQLDatabaseChain.from_llm(mistral, sql_db, verbose=True, callbacks = [callback_obj])
db_chain.invoke({
    "query": "What is the best time of Lance Larson in men's 100 meter butterfly competition?"
})
```

Error:

```

Warning Traceback (most recent call last)

Cell In[31], line 3

1 import langchain

2 langchain.debug=False

----> 3 db_chain.invoke({

4 "query": "What is the best time of Lance Larson in men's 100 meter butterfly competition?"

5 })

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/langchain/chains/base.py:162, in Chain.invoke(self, input, config, **kwargs)

160 except BaseException as e:

161 run_manager.on_chain_error(e)

--> 162 raise e

163 run_manager.on_chain_end(outputs)

164 final_outputs: Dict[str, Any] = self.prep_outputs(

165 inputs, outputs, return_only_outputs

166 )

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/langchain/chains/base.py:156, in Chain.invoke(self, input, config, **kwargs)

149 run_manager = callback_manager.on_chain_start(

150 dumpd(self),

151 inputs,

152 name=run_name,

153 )

154 try:

155 outputs = (

--> 156 self._call(inputs, run_manager=run_manager)

157 if new_arg_supported

158 else self._call(inputs)

159 )

160 except BaseException as e:

161 run_manager.on_chain_error(e)

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/langchain_experimental/sql/base.py:198, in SQLDatabaseChain._call(self, inputs, run_manager)

194 except Exception as exc:

195 # Append intermediate steps to exception, to aid in logging and later

196 # improvement of few shot prompt seeds

197 exc.intermediate_steps = intermediate_steps # type: ignore

--> 198 raise exc

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/langchain_experimental/sql/base.py:143, in SQLDatabaseChain._call(self, inputs, run_manager)

139 intermediate_steps.append(

140 sql_cmd

141 ) # output: sql generation (no checker)

142 intermediate_steps.append({"sql_cmd": sql_cmd}) # input: sql exec

--> 143 result = self.database.run(sql_cmd)

144 intermediate_steps.append(str(result)) # output: sql exec

145 else:

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/langchain_community/utilities/sql_database.py:436, in SQLDatabase.run(self, command, fetch, include_columns)

425 def run(

426 self,

427 command: str,

428 fetch: Literal["all", "one"] = "all",

429 include_columns: bool = False,

430 ) -> str:

431 """Execute a SQL command and return a string representing the results.

432

433 If the statement returns rows, a string of the results is returned.

434 If the statement returns no rows, an empty string is returned.

435 """

--> 436 result = self._execute(command, fetch)

438 res = [

439 {

440 column: truncate_word(value, length=self._max_string_length)

(...)

443 for r in result

444 ]

446 if not include_columns:

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/langchain_community/utilities/sql_database.py:413, in SQLDatabase._execute(self, command, fetch)

410 elif self.dialect == "postgresql": # postgresql

411 connection.exec_driver_sql("SET search_path TO %s", (self._schema,))

--> 413 cursor = connection.execute(text(command))

414 if cursor.returns_rows:

415 if fetch == "all":

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/sqlalchemy/engine/base.py:1416, in Connection.execute(self, statement, parameters, execution_options)

1414 raise exc.ObjectNotExecutableError(statement) from err

1415 else:

-> 1416 return meth(

1417 self,

1418 distilled_parameters,

1419 execution_options or NO_OPTIONS,

1420 )

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/sqlalchemy/sql/elements.py:516, in ClauseElement._execute_on_connection(self, connection, distilled_params, execution_options)

514 if TYPE_CHECKING:

515 assert isinstance(self, Executable)

--> 516 return connection._execute_clauseelement(

517 self, distilled_params, execution_options

518 )

519 else:

520 raise exc.ObjectNotExecutableError(self)

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/sqlalchemy/engine/base.py:1639, in Connection._execute_clauseelement(self, elem, distilled_parameters, execution_options)

1627 compiled_cache: Optional[CompiledCacheType] = execution_options.get(

1628 "compiled_cache", self.engine._compiled_cache

1629 )

1631 compiled_sql, extracted_params, cache_hit = elem._compile_w_cache(

1632 dialect=dialect,

1633 compiled_cache=compiled_cache,

(...)

1637 linting=self.dialect.compiler_linting | compiler.WARN_LINTING,

1638 )

-> 1639 ret = self._execute_context(

1640 dialect,

1641 dialect.execution_ctx_cls._init_compiled,

1642 compiled_sql,

1643 distilled_parameters,

1644 execution_options,

1645 compiled_sql,

1646 distilled_parameters,

1647 elem,

1648 extracted_params,

1649 cache_hit=cache_hit,

1650 )

1651 if has_events:

1652 self.dispatch.after_execute(

1653 self,

1654 elem,

(...)

1658 ret,

1659 )

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/sqlalchemy/engine/base.py:1848, in Connection._execute_context(self, dialect, constructor, statement, parameters, execution_options, *args, **kw)

1843 return self._exec_insertmany_context(

1844 dialect,

1845 context,

1846 )

1847 else:

-> 1848 return self._exec_single_context(

1849 dialect, context, statement, parameters

1850 )

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/sqlalchemy/engine/base.py:1988, in Connection._exec_single_context(self, dialect, context, statement, parameters)

1985 result = context._setup_result_proxy()

1987 except BaseException as e:

-> 1988 self._handle_dbapi_exception(

1989 e, str_statement, effective_parameters, cursor, context

1990 )

1992 return result

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/sqlalchemy/engine/base.py:2346, in Connection._handle_dbapi_exception(self, e, statement, parameters, cursor, context, is_sub_exec)

2344 else:

2345 assert exc_info[1] is not None

-> 2346 raise exc_info[1].with_traceback(exc_info[2])

2347 finally:

2348 del self._reentrant_error

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/sqlalchemy/engine/base.py:1969, in Connection._exec_single_context(self, dialect, context, statement, parameters)

1967 break

1968 if not evt_handled:

-> 1969 self.dialect.do_execute(

1970 cursor, str_statement, effective_parameters, context

1971 )

1973 if self._has_events or self.engine._has_events:

1974 self.dispatch.after_cursor_execute(

1975 self,

1976 cursor,

(...)

1980 context.executemany,

1981 )

File ~/.conda/envs/guardrails1/lib/python3.9/site-packages/sqlalchemy/engine/default.py:922, in DefaultDialect.do_execute(self, cursor, statement, parameters, context)

921 def do_execute(self, cursor, statement, parameters, context=None):

--> 922 cursor.execute(statement, parameters)

Warning: You can only execute one statement at a time.

```

**Issue:**

The error occurs because, when generating the SQL query, `llm_inputs`
includes the stop sequence "\nSQLResult:". For this user query the LLM
response is **SELECT Time FROM men_butterfly_100m
WHERE Swimmer = 'Lance Larson';\nSQLResult:**, so the SQLResult suffix
must be removed from the LLM response before it is executed on the
database.

```
llm_inputs = {
    "input": input_text,
    "top_k": str(self.top_k),
    "dialect": self.database.dialect,
    "table_info": table_info,
    "stop": ["\nSQLResult:"],
}
sql_cmd = self.llm_chain.predict(
    callbacks=_run_manager.get_child(),
    **llm_inputs,
).strip()
if SQL_RESULT in sql_cmd:
    sql_cmd = sql_cmd.split(SQL_RESULT)[0].strip()
result = self.database.run(sql_cmd)
```
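
The suffix handling above can be tried in isolation. The following is a minimal sketch rather than the chain's actual code: `strip_sql_result` is a hypothetical helper, and the value of `SQL_RESULT` is assumed to match the stop sequence quoted in the description.

```python
# Assumed marker value; the description above only shows it as the stop sequence.
SQL_RESULT = "\nSQLResult:"


def strip_sql_result(llm_output: str, marker: str = SQL_RESULT) -> str:
    """Return the SQL text that precedes the first marker, trimmed of whitespace."""
    text = llm_output.strip()
    if marker in text:
        # Keep only what the model wrote before it echoed the marker.
        text = text.split(marker)[0].strip()
    return text


raw = "SELECT Time FROM men_butterfly_100m WHERE Swimmer = 'Lance Larson';\nSQLResult:"
print(strip_sql_result(raw))
```

Output that does not contain the marker passes through unchanged apart from whitespace trimming, so the check should be safe to apply to every response.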


---------

Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com>

|

6 months ago |

|

|

540ebf35a9

|

community[patch]: Add explicit error message to Bedrock error output. (#17328)

- **Description:** Propagate Bedrock errors into Langchain explicitly. Use-case: unset region error is hidden behind 'Could not load credentials...' message - **Issue:** [17654](https://github.com/langchain-ai/langchain/issues/17654) - **Dependencies:** None --------- Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> |

6 months ago |

|

|

69bb96c80f

|

community[patch]: surrealdb handle for empty metadata and allow collection names with complex characters (#17374)

- **Description:** Handle for empty metadata and allow collection names with complex characters - **Issue:** #17057 - **Dependencies:** `surrealdb` --------- Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> |

6 months ago |

|

|

0df76bee37

|

core[patch]:: XML parser to cover the case when the xml only contains the root level tag (#17456)

Description: Fix xml parser to handle strings that only contain the root

tag

Issue: N/A

Dependencies: None

Twitter handle: N/A

A valid XML document can consist of only the root-level tag. Example: <body>
Some text here
</body>

The example above is a valid XML string. If parsed with the current
implementation, the result is {"body": []}. This fix checks whether the
root-level text contains any non-whitespace character and, if so,
returns {root.tag: root.text}. As a result, the text above is
correctly parsed as {"body": "Some text here"}.
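
The root-text check described above can be illustrated with the standard library. This is a sketch under assumptions, not the parser's actual implementation: `parse_xml` and `_children_to_list` are hypothetical names, and stripping the surrounding whitespace from the root text is a choice made here so the result matches the example.

```python
import xml.etree.ElementTree as ET
from typing import Any, Dict, List


def _children_to_list(elem: ET.Element) -> List[Dict[str, Any]]:
    """Convert child elements to a list of single-key dicts, recursing into nested tags."""
    return [
        {child.tag: child.text if len(child) == 0 else _children_to_list(child)}
        for child in elem
    ]


def parse_xml(text: str) -> Dict[str, Any]:
    """Parse XML text; a root with non-whitespace text maps directly to that text."""
    root = ET.fromstring(text.strip())
    if root.text and root.text.strip():
        # Root-only case: return the text instead of an empty child list.
        return {root.tag: root.text.strip()}
    return {root.tag: _children_to_list(root)}


print(parse_xml("<body>\nSome text here\n</body>"))
```

A root that has child elements and only whitespace text still goes through the child-list branch, so nested documents keep their existing shape.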

@ale-delfino


---------

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

|

6 months ago |

|

|

124ab79c23

|

community[minor]: Add Anyscale embedding support (#17605)

**Description:** Add embedding model support for Anyscale Endpoint **Dependencies:** openai --------- Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

12843f292f

|

community[patch]: llama cpp embeddings reset default n_batch (#17594)

When testing Nomic embeddings --

```
from langchain_community.embeddings import LlamaCppEmbeddings

embd_model_path = "/Users/rlm/Desktop/Code/llama.cpp/models/nomic-embd/nomic-embed-text-v1.Q4_K_S.gguf"
embd_lc = LlamaCppEmbeddings(model_path=embd_model_path)
embedding_lc = embd_lc.embed_query(query)
```

We were seeing this error for strings > a certain size --

```

File ~/miniforge3/envs/llama2/lib/python3.9/site-packages/llama_cpp/llama.py:827, in Llama.embed(self, input, normalize, truncate, return_count)

824 s_sizes = []

826 # add to batch

--> 827 self._batch.add_sequence(tokens, len(s_sizes), False)

828 t_batch += n_tokens

829 s_sizes.append(n_tokens)

File ~/miniforge3/envs/llama2/lib/python3.9/site-packages/llama_cpp/_internals.py:542, in _LlamaBatch.add_sequence(self, batch, seq_id, logits_all)

540 self.batch.token[j] = batch[i]

541 self.batch.pos[j] = i

--> 542 self.batch.seq_id[j][0] = seq_id

543 self.batch.n_seq_id[j] = 1

544 self.batch.logits[j] = logits_all

ValueError: NULL pointer access

```

The default `n_batch` of llama-cpp-python's Llama is `512` but we were

explicitly setting it to `8`.

These need to be set to equal for embedding models.

* The embedding.cpp example has an assertion to make sure these are

always equal.

* Apparently this is not being done properly in llama-cpp-python.

With `n_batch` set to 8, if more than 8 tokens are passed the batch runs

out of space and it crashes.

This also explains why the CPU compute buffer size was small:

raw client with default `n_batch=512`

```

llama_new_context_with_model: CPU input buffer size = 3.51 MiB

llama_new_context_with_model: CPU compute buffer size = 21.00 MiB

```

langchain with `n_batch=8`

```

llama_new_context_with_model: CPU input buffer size = 0.04 MiB

llama_new_context_with_model: CPU compute buffer size = 0.33 MiB

```

We can work around this by passing `n_batch=512`, but this will not be

obvious to some users:

```
embedding = LlamaCppEmbeddings(model_path=embd_model_path,
                               n_batch=512)
```

From discussion w/ @cebtenzzre. Related:

https://github.com/abetlen/llama-cpp-python/issues/1189

Co-authored-by: Bagatur <baskaryan@gmail.com>

|

6 months ago |

|

|

8e976545f3

|

community[patch]: support OpenAI whisper base url (#17695)

**Description:** The base URL for OpenAI is retrieved from the

environment variable "OPENAI_BASE_URL", whereas for langchain it is

obtained from "OPENAI_API_BASE". By adding `base_url =

os.environ.get("OPENAI_API_BASE")`, the OpenAI proxy can execute

correctly.
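
The environment-variable lookup can be sketched as a small resolver. This is an illustrative sketch only: `resolve_openai_base_url` is a hypothetical helper, and the precedence order (explicit argument, then "OPENAI_API_BASE", then "OPENAI_BASE_URL") is an assumption rather than something the commit states.

```python
import os
from typing import Mapping, Optional


def resolve_openai_base_url(
    explicit: Optional[str] = None,
    env: Optional[Mapping[str, str]] = None,
) -> Optional[str]:
    """Pick a base URL from an explicit value or the two environment variable names."""
    env = os.environ if env is None else env
    # Assumed order: explicit value, LangChain's variable, then the OpenAI SDK's variable.
    return explicit or env.get("OPENAI_API_BASE") or env.get("OPENAI_BASE_URL")


print(resolve_openai_base_url(env={"OPENAI_API_BASE": "http://localhost:8080/v1"}))
```

Passing `env` explicitly keeps the helper easy to test without touching the real process environment.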

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

|

6 months ago |

|

|

44a3484503

|

community[patch]: add NotebookLoader unit test (#17721)

Thank you for contributing to LangChain! - **Description:** added unit tests for NotebookLoader. Linked PR: https://github.com/langchain-ai/langchain/pull/17614 - **Issue:** [#17614](https://github.com/langchain-ai/langchain/pull/17614) - **Twitter handle:** @paulodoestech - [x] Pass lint and test: Run `make format`, `make lint` and `make test` from the root of the package(s) you've modified to check that you're passing lint and testing. See contribution guidelines for more information on how to write/run tests, lint, etc: https://python.langchain.com/docs/contributing/ - [x] Add tests and docs: If you're adding a new integration, please include 1. a test for the integration, preferably unit tests that do not rely on network access, 2. an example notebook showing its use. It lives in `docs/docs/integrations` directory. If no one reviews your PR within a few days, please @-mention one of baskaryan, efriis, eyurtsev, hwchase17. --------- Co-authored-by: lachiewalker <lachiewalker1@hotmail.com> Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

4c3a67122f

|

community[patch]: add Integration for OpenAI image gen with v1 sdk (#17771)

**Description:** Created a Langchain Tool for OpenAI DALLE Image Generation. **Issue:** [#15901](https://github.com/langchain-ai/langchain/issues/15901) **Dependencies:** n/a **Twitter handle:** @paulodoestech - [x] **Add tests and docs**: If you're adding a new integration, please include 1. a test for the integration, preferably unit tests that do not rely on network access, 2. an example notebook showing its use. It lives in `docs/docs/integrations` directory. - [x] **Lint and test**: Run `make format`, `make lint` and `make test` from the root of the package(s) you've modified. See contribution guidelines for more: https://python.langchain.com/docs/contributing/ Additional guidelines: - Make sure optional dependencies are imported within a function. - Please do not add dependencies to pyproject.toml files (even optional ones) unless they are required for unit tests. - Most PRs should not touch more than one package. - Changes should be backwards compatible. - If you are adding something to community, do not re-import it in langchain. If no one reviews your PR within a few days, please @-mention one of baskaryan, efriis, eyurtsev, hwchase17. --------- Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

a8104ea8e9

|

openai[patch]: add checking codes for calling AI model get error (#17909)

**Description:** Add error checks for failed AI model calls
in chat_models/base.py and llms/base.py

**Issue**: A call to the AI model sometimes returns an error, which
should be raised.
Otherwise, the following line, 'choices.extend(response["choices"])',
throws "TypeError: 'NoneType' object is not iterable" and masks the
true error,
because 'response["choices"]' is None.

**Dependencies**: None
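
The failure mode above can be reproduced with plain dictionaries. This is a minimal sketch under assumptions: `collect_choices` is a hypothetical function, and the response shape (an `"error"` key alongside `"choices": None`) is inferred from the description rather than taken from the patched files.

```python
from typing import Any, Dict, List


def collect_choices(responses: List[Dict[str, Any]]) -> List[Any]:
    """Gather choices from each response, raising the provider error if one is present."""
    choices: List[Any] = []
    for response in responses:
        if response.get("error"):
            # Surface the real error instead of failing later on a None "choices".
            raise ValueError(response.get("error"))
        choices.extend(response["choices"])
    return choices


print(collect_choices([{"choices": [{"text": "hello"}]}]))
```

Without the check, the second kind of response would fail inside `extend` with the TypeError quoted above, which hides the provider's message.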

---------

Co-authored-by: yangkx <yangkx@asiainfo-int.com>

Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com>

|

6 months ago |

|

|

833d61adb3

|

docs: update Together README.md (#18004)

## PR message **Description:** This PR adds a README file for the Together API in the `libs/partners` folder of this repository. The README includes: - A brief description of the package - Installation instructions and class introductions - Simple usage examples **Issue:** #17545 This PR only contains document changes. --------- Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

3d3cc71287

|

community[patch]: fix bugs for bilibili Loader (#18036)

- **Description:** 1. Fix the BiliBiliLoader that can receive cookie parameters, it requires 3 other parameters to run. The change is backward compatible. 2. Add test; 3. Add example in docs - **Issue:** [#14213] Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> |

6 months ago |

|

|

1ef3fa0411

|

docs: improve readability of Langchain Expression Language get_started.ipynb (#18157)

**Description:** A few grammatical changes to improve readability of the LCEL .ipynb and tidy some null characters. **Issue:** N/A Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> |

6 months ago |

|

|

25c9f3d1d1

|

community[patch]: Support Streaming in Azure Machine Learning (#18246)

- [x] **PR title**: "community: Support streaming in Azure ML and few naming changes" - [x] **PR message**: - **Description:** Added support for streaming for azureml_endpoint. Also, renamed AzureMLEndpointApiType.realtime to AzureMLEndpointApiType.dedicated. Also, added new classes CustomOpenAIChatContentFormatter and CustomOpenAIContentFormatter and updated the classes LlamaChatContentFormatter and LlamaContentFormatter to now show a deprecation warning message when instantiated. --------- Co-authored-by: Sachin Paryani <saparan@microsoft.com> Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

ecb11a4a32

|

langchain[patch]: fix BaseChatMemory get output data error with extra key (#18117)

**Description:** At times, BaseChatMemory._get_input_output may acquire some extra keys such as 'intermediate_steps' (agent_executor with return_intermediate_steps set to True) and 'messages' (agent_executor.iter with memory). In these instances, _get_input_output can raise an error due to the presence of multiple keys. The 'output' field should be used as the default field in these cases. **Issue:** #16791 |

6 months ago |

|

|

f5e84c8858

|

docs: fixing markdown for tips (#18199)

Previous markdown code was not working as intended, new code should add green box around the tip so it is highlighted Co-authored-by: Hershenson, Isaac (Extern) <isaac.hershenson.extern@bayer04.de> Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

85deee521a

|

docs: Nvidia Riva Runnables Documentation (#18237)

- **Description:** Documents how to use the Riva runnables to add streamed automatic-speech-recognition (ASR) and text-to-speech (TTS) to chains. - **Issue:** None - **Dependencies:** None - **Twitter handle:** @HaydenWolff1 --------- Co-authored-by: Hayden Wolff <hwolff@Haydens-Laptop.local> Co-authored-by: Hayden Wolff <hwolff@MacBook-Pro.local> Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

afa2d85405

|

community[patch]: Added missing from_documents method to KNNRetriever. (#18411)

- Description: Added missing `from_documents` method to `KNNRetriever`, providing the ability to supply metadata to LangChain `Document`s, and to give it parity to the other retrievers, which do have `from_documents`. - Issue: None - Dependencies: None - Twitter handle: None Co-authored-by: Victor Adan <vadan@netroadshow.com> Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> |

6 months ago |

|

|

dfc4177b50

|

community[patch]: mypy ignore fix (#18483)

Relates to #17048

Description: Applied fix to dynamodb and elasticsearch file. Error was: `Cannot override writeable attribute with read-only property`

Suggestion: instead of adding

```
@messages.setter
def messages(self, messages: List[BaseMessage]) -> None:
    raise NotImplementedError("Use add_messages instead")
```

we can change base class property `messages: List[BaseMessage]` to

```
@property
def messages(self) -> List[BaseMessage]:...
```

then we don't need to add `@messages.setter` in all child classes.

|

6 months ago |

|

|

dc9e9a66db

|

docs: update docstring of the ChatAnthropic and AnthropicLLM classes (#18649)

**Description:** Update docstring of the ChatAnthropic and AnthropicLLM classes **Issue:** Not applicable **Dependencies:** None |

6 months ago |

|

|

f19229c564

|

core[patch]: fix beta, deprecated typing (#18877)

**Description:** While not technically incorrect, the TypeVar used for the `@beta` decorator prevented pyright (and thus most vscode users) from correctly seeing the types of functions/classes decorated with `@beta`. This is in part due to a small bug in pyright (https://github.com/microsoft/pyright/issues/7448 ) - however, the `Type` bound in the typevar `C = TypeVar("C", Type, Callable)` is not doing anything - classes are `Callables` by default, so by my understanding binding to `Type` does not actually provide any more safety - the modified annotation still works correctly for both functions, properties, and classes. --------- Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

263ee78886

|

core[runnables]: docstring for class RunnableSerializable, method configurable_fields (#19722)

**Description:** Update to the docstring for class RunnableSerializable, method configurable_fields **Issue:** [Add in code documentation to core Runnable methods #18804](https://github.com/langchain-ai/langchain/issues/18804) **Dependencies:** None --------- Co-authored-by: Chester Curme <chester.curme@gmail.com> |

6 months ago |

|

|

e1f10a697e

|

openai[patch]: perform judgment processing on chat model streaming delta (#18983)

**PR title:** partners: openai chat model **PR message:** perform judgment processing on chat model streaming delta Closes #18977 Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> |

6 months ago |

|

|

b7c8bc8268

|

community[patch]: fix yuan2 errors in LLMs (#19004)

1. fix yuan2 errors while invoke Yuan2. 2. update tests. |

6 months ago |

|

|

aba4bd0d13

|

docs: Add async batch case (#19686) | 6 months ago |

|

|

ec4dcfca7f

|

core[runnables]: docstring of class RunnableSerializable, method configurable_alternatives (#19724)

**Description:** Update to the docstring for class RunnableSerializable, method configurable_alternatives **Issue:** [Add in code documentation to core Runnable methods #18804](https://github.com/langchain-ai/langchain/issues/18804) **Dependencies:** None --------- Co-authored-by: Chester Curme <chester.curme@gmail.com> |

6 months ago |

|

|

824dbc49ee

|

langchain[patch]: add template_tool_response arg to create_json_chat (#19696)

In this small PR I added the `template_tool_response` arg to the `create_json_chat` function, so that users can customize this prompt in case of need. Thanks for your reviews! --------- Co-authored-by: taamedag <Davide.Menini@swisscom.com> |

6 months ago |

|

|

688ca48019

|

community[patch]: Adding validation when vector does not exist (#19698)

Adding validation when vector does not exist Co-authored-by: gaoyuan <gaoyuan.20001218@bytedance.com> |

6 months ago |

|

|

f55b11fb73

|

infra: Revert run partner CI on core PRs (#19733)

Reverts parts of langchain-ai/langchain#19688 |

6 months ago |

|

|

665f15bd48

|

docs: fix typos and make quickstart more readable (#19712)

Description: minor docs changes to make it more readable. Issue: N/A Dependencies: N/A Twitter handle: _kubealex |

6 months ago |

|

|

36090c84f2

|

docs: Update function "run" to "invoke" in llm_symbolic_math.ipynb (#19713)

This patch updates multiple function "run" to "invoke" in llm_symbolic_math.ipynb. Without this patch, you see following message. The function `run` was deprecated in LangChain 0.1.0 and will be removed in 0.2.0. Use invoke instead. Signed-off-by: Masanari Iida <standby24x7@gmail.com> |

6 months ago |

|

|

4a49fc5a95

|

community[patch]: Fix bug in vdms (#19728)

**Description:** Fix embedding check in vdms **Contribution maintainer:** [@cwlacewe](https://github.com/cwlacewe) |

6 months ago |

|

|

75173d31db

|

community[minor]: Add solar model chat model (#18556)

Add our solar chat models, available model choices: * solar-1-mini-chat * solar-1-mini-translate-enko * solar-1-mini-translate-koen More documents and pricing can be found at https://console.upstage.ai/services/solar. The references to our solar model can be found at * https://arxiv.org/abs/2402.17032 --------- Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

e576d6c6b4

|

cohere[patch]: release 0.1.0rc1 (rc1-2 never released) (#19731) | 6 months ago |

|

|

ea57050122

|

cohere: add with_structured_output to ChatCohere (#19730)

**Description:** Adds support for `with_structured_output` to Cohere, which supports single function calling. --------- Co-authored-by: BeatrixCohere <128378696+BeatrixCohere@users.noreply.github.com> |

6 months ago |

|

|

0571f886d1

|

core[patch]: Fix jsonOutputParser fails if a json value contains ``` inside it. (#19717)

- **Issue:** fix #19646 - @baskaryan, @eyurtsev PTAL |

6 months ago |

|

|

f7042321f1

|

community[patch]: gather token usage info in BedrockChat during generation (#19127)

This PR calculates token usage for prompts and completions directly in the generation method of BedrockChat. The token usage details are then returned together with the generations, so that downstream tasks can access them easily. This makes it possible to define a callback for token tracking and cost calculation, similar to what happens with OpenAI (see [OpenAICallbackHandler](https://api.python.langchain.com/en/latest/_modules/langchain_community/callbacks/openai_info.html#OpenAICallbackHandler)). I plan on adding a BedrockCallbackHandler later. Right now, keeping track of tokens in the callback is already possible, but it requires passing the llm, as done here: https://how.wtf/how-to-count-amazon-bedrock-anthropic-tokens-with-langchain.html. However, I find the approach of this PR cleaner. Thanks for your reviews. FYI @baskaryan, @hwchase17 --------- Co-authored-by: taamedag <Davide.Menini@swisscom.com> Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

a662468dde

|

community[patch]: Fix the error of Baidu Qianfan not passing the stop parameter (#18666)

- [x] **PR title**: "community: fix baidu qianfan missing stop parameter" - [x] **PR message**: - **Description:** Baidu Qianfan lost the stop parameter when requesting the service because it was extracted from kwargs. This bug can cause the agent to receive incorrect results. --------- Co-authored-by: ligang33 <ligang33@baidu.com> Co-authored-by: Bagatur <22008038+baskaryan@users.noreply.github.com> Co-authored-by: Bagatur <baskaryan@gmail.com> |

6 months ago |

|

|

d1a2e194c3

|

cohere[patch]: misc fixs tool use agent and cohere chat (#19705)

Bug fixes in this PR: * allows for other params such as "message" not just the input param to the prompt for the cohere tools agent * fixes to documents kwarg from messages * fixes to tool_calls API call --------- Co-authored-by: Harry M <127103098+harry-cohere@users.noreply.github.com> |

6 months ago |

|

|

b35e68c41f

|

docs: update use_cases/question_answering/chat_history (#19349)

Update following https://github.com/langchain-ai/langchain/issues/19344 |

6 months ago |

|

|

8c2ed85a45

|

core[patch], infra: release 0.1.36, run partner CI on core PRs (#19688) | 6 months ago |

|

|

5327bc9ec4

|

elasticsearch[patch]: move to repo (#19620) | 6 months ago |

|

|

239dd7c0c0

|

langchain[patch]: Use map() and avoid "ValueError: max() arg is an empty sequence" in MergerRetriever (#18679)

- **Issue:** Passing an empty list to MergerRetriever fails with the error: ValueError: max() arg is an empty sequence

- **Description:** We have a use case where we dynamically select retrievers and use MergerRetriever to merge their output. We hit this issue when the retriever_docs list is empty. This PR adds a default of 0 for the case where retriever_docs is an empty list, which avoids "ValueError: max() arg is an empty sequence". It also switches to map(), which is more than twice as fast as the current implementation.

```
import timeit

# Sample retriever_docs with varying lengths of sublists
retriever_docs = [[i for i in range(j)] for j in range(1, 1000)]

# First code snippet
code1 = '''
max_docs = max(len(docs) for docs in retriever_docs)
'''

# Second code snippet
code2 = '''
max_docs = max(map(len, retriever_docs), default=0)
'''

# Benchmarking
time1 = timeit.timeit(stmt=code1, globals=globals(), number=10000)
time2 = timeit.timeit(stmt=code2, globals=globals(), number=10000)

# Output
print(f"Execution time for code snippet 1: {time1} seconds")
print(f"Execution time for code snippet 2: {time2} seconds")
```

- **Dependencies:** none
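
The empty-list behavior described in this PR can be illustrated in isolation. The following is a minimal sketch, not the MergerRetriever code itself: the helper names `longest_result_length` and `longest_result_length_unsafe` are hypothetical and exist only for this example. It relies on the `default` keyword of the built-in `max()`, which is returned when the iterable is empty.

```python
from typing import Sequence


def longest_result_length_unsafe(retriever_docs: Sequence[Sequence[object]]) -> int:
    # Hypothetical helper mirroring the original expression:
    # raises ValueError when retriever_docs is empty.
    return max(len(docs) for docs in retriever_docs)


def longest_result_length(retriever_docs: Sequence[Sequence[object]]) -> int:
    # Hypothetical helper mirroring the patched expression:
    # default=0 is returned when retriever_docs is empty.
    return max(map(len, retriever_docs), default=0)


if __name__ == "__main__":
    # Non-empty input: both variants agree.
    docs = [["a"], ["b", "c", "d"], []]
    print(longest_result_length_unsafe(docs), longest_result_length(docs))

    # Empty input: only the patched variant returns a value.
    print(longest_result_length([]))
    try:
        longest_result_length_unsafe([])
    except ValueError as exc:
        print(f"ValueError: {exc}")
```

With no retrievers selected, the patched expression yields 0, so a loop over `range(max_docs)` simply does nothing instead of failing.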

|

6 months ago |

|

|

4cd38fe89f

|

docs: update docstring of the ChatGroq class (#18645)

**Description:** Update docstring of the ChatGroq class **Issue:** Not applicable **Dependencies:** None |

6 months ago |

|

|

e4d7b1a482

|

voyageai[patch]: top level reranker import (#19645)

The previous version didn't have the Voyage reranker in the init file

- [ ] **PR title**: langchain_voyageai reranker is not working

- [ ] **PR message**:

- **Description:** This fix lets you run the reranker from Voyage

- **Issue:** Was not able to run the reranker from Voyage

@efriis

|

6 months ago |

|

|

26eed70c11

|

infra: Optimize Makefile for Better Usability and Maintenance (#18859)

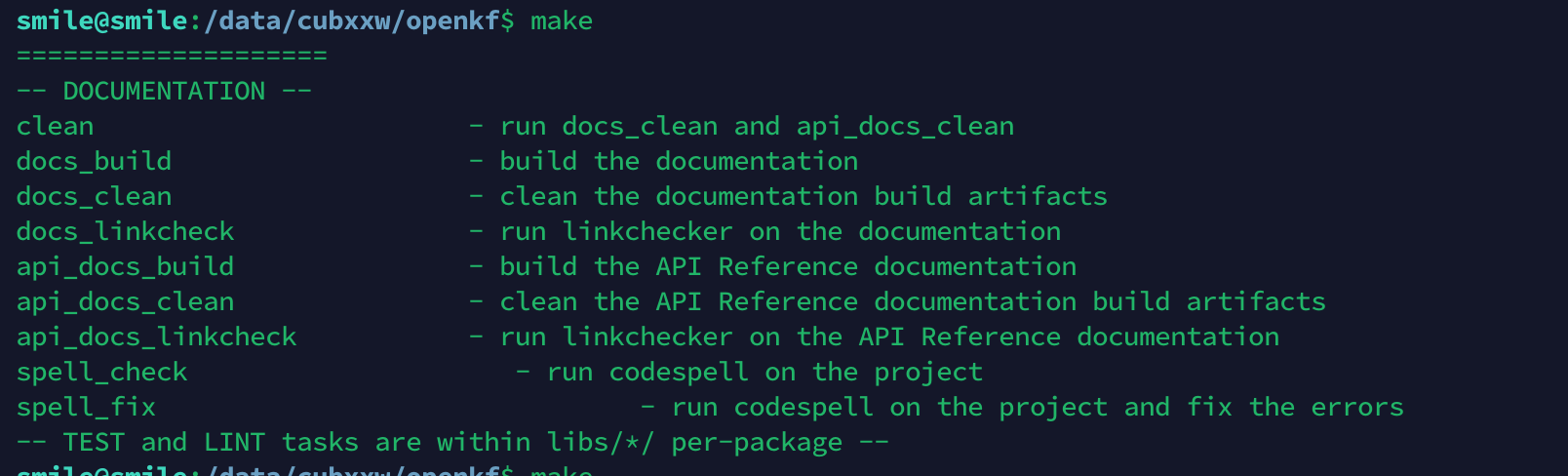

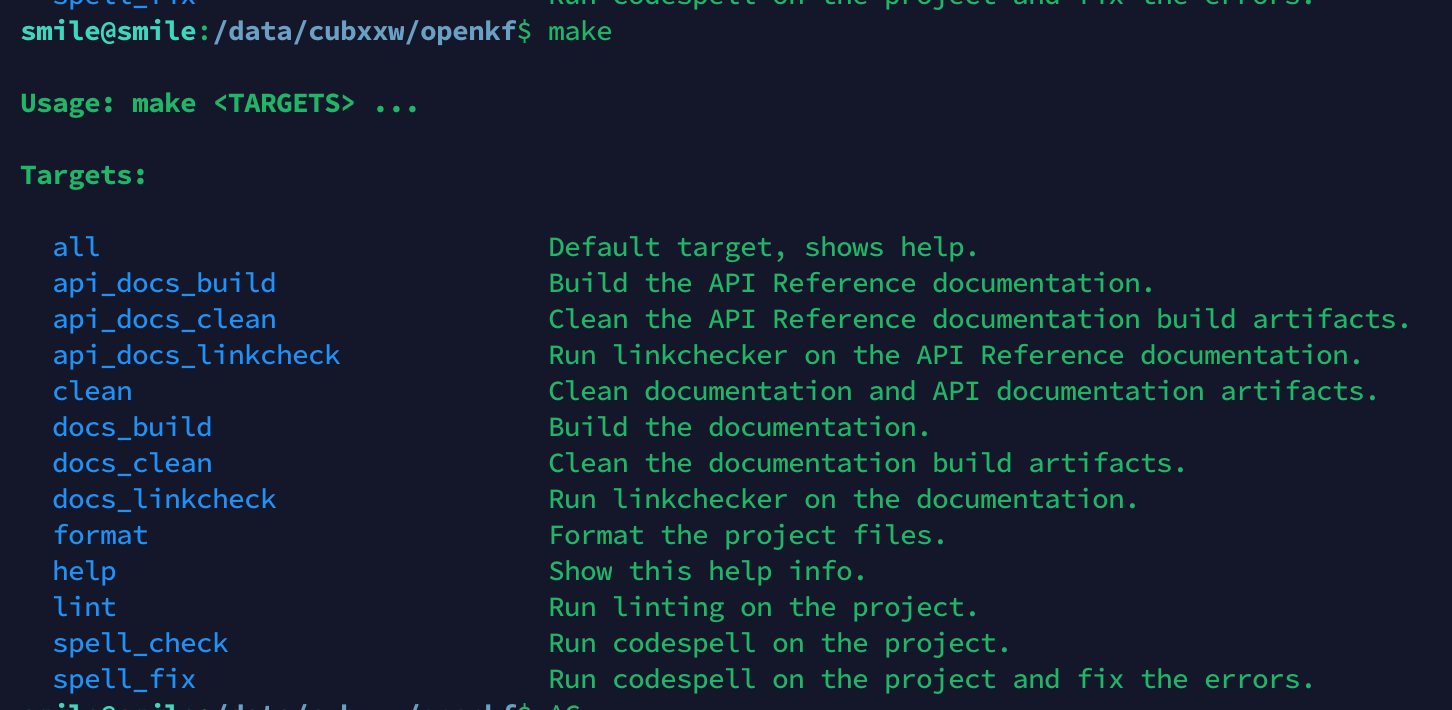

**Previous screenshots:**  **Current screenshot:**  |

6 months ago |

|

|

51baa1b5cf

|

langchain[patch]: fix-cohere-reranker-rerank-method with cohere v5 (#19486)