"One Retriever to merge them all, One Retriever to expose them, One

Retriever to bring them all in and process them with Document

formatters."

Hi @dev2049! Here bothering people again!

I'm using this simple idea to deal with merging the output of several

retrievers into one.

I'm aware of DocumentCompressorPipeline and

ContextualCompressionRetriever but I don't think they allow us to do

something like this. I also had trouble getting the pipeline

working. Please correct me if I'm wrong.

This allows some sort of "retrieval" preprocessing, after which the

merged retriever, with its curated results, can be used anywhere you

could use a retriever.

My use case is to generate different indexes with different embeddings

and sources for more varied results, then filter them with one or more

document formatters.
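
The merging idea can be sketched roughly as follows. This is only an illustration and not the code in this PR: the `Retriever` type alias, the `merge_retrievers` helper, the round-robin ordering, and the de-duplication step are all assumptions made for the example.

```python
from itertools import zip_longest
from typing import Callable, List

# Hypothetical stand-in for a retriever: any callable mapping a query to texts.
Retriever = Callable[[str], List[str]]


def merge_retrievers(retrievers: List[Retriever]) -> Retriever:
    """Return one retriever that interleaves and de-duplicates the others' results."""

    def merged(query: str) -> List[str]:
        results = [retrieve(query) for retrieve in retrievers]
        seen = set()
        output = []
        # Round-robin: first hit of every retriever, then second hits, and so on.
        for rank_group in zip_longest(*results):
            for doc in rank_group:
                if doc is not None and doc not in seen:
                    seen.add(doc)
                    output.append(doc)
        return output

    return merged


# Two toy "indexes" that ignore the query and return fixed results.
index_a = lambda query: ["doc1", "doc2"]
index_b = lambda query: ["doc2", "doc3", "doc4"]

merged = merge_retrievers([index_a, index_b])
print(merged("any question"))
```

Because the merged object has the same call shape as its inputs, it can be passed wherever a single retriever is expected.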

I saw some people looking for something like this, here:

https://github.com/hwchase17/langchain/issues/3991

and something similar here:

https://github.com/hwchase17/langchain/issues/5555

This is just a proposal; I know I'm missing tests, etc. If you think

this idea is worthwhile, I can work on tests and anything you want to

change.

Let me know!

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

This PR adds the possibility of specifying the endpoint URL to AWS in

the DynamoDBChatMessageHistory, so that it is possible to target not

only the AWS cloud services, but also a local installation.

Specifying the endpoint URL, which is normally not done when addressing

the cloud services, is very helpful when targeting a local instance

(like [Localstack](https://localstack.cloud/)) when running local tests.
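
As a rough sketch of the idea (not the code in this PR), the endpoint can be treated as an optional keyword argument that is only forwarded when set. The helper name `dynamodb_resource_kwargs`, the table name, and the Localstack address below are assumptions for illustration; `endpoint_url` itself is a standard argument of `boto3.resource`.

```python
from typing import Any, Dict, Optional


def dynamodb_resource_kwargs(endpoint_url: Optional[str] = None) -> Dict[str, Any]:
    """Build keyword arguments for boto3.resource("dynamodb", ...)."""
    kwargs: Dict[str, Any] = {}
    if endpoint_url is not None:
        # Only set for local targets; omitting it lets boto3 use the AWS endpoint.
        kwargs["endpoint_url"] = endpoint_url
    return kwargs


cloud_kwargs = dynamodb_resource_kwargs()
# Assumed address of a local Localstack instance.
local_kwargs = dynamodb_resource_kwargs("http://localhost:4566")

# With boto3 installed, the resource would then be created like this
# ("SessionTable" is a hypothetical table name):
#   import boto3
#   table = boto3.resource("dynamodb", **local_kwargs).Table("SessionTable")
print(cloud_kwargs, local_kwargs)
```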

Fixes #5835

#### Who can review?

Tag maintainers/contributors who might be interested: @dev2049

<!-- For a quicker response, figure out the right person to tag with @

@hwchase17 - project lead

Tracing / Callbacks

- @agola11

Async

- @agola11

DataLoaders

- @eyurtsev

Models

- @hwchase17

- @agola11

Agents / Tools / Toolkits

- @vowelparrot

VectorStores / Retrievers / Memory

- @dev2049

-->

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

<!--

Thank you for contributing to LangChain! Your PR will appear in our

release under the title you set. Please make sure it highlights your

valuable contribution.

Replace this with a description of the change, the issue it fixes (if

applicable), and relevant context. List any dependencies required for

this change.

After you're done, someone will review your PR. They may suggest

improvements. If no one reviews your PR within a few days, feel free to

@-mention the same people again, as notifications can get lost.

Finally, we'd love to show appreciation for your contribution - if you'd

like us to shout you out on Twitter, please also include your handle!

-->

#### Add start index to metadata in TextSplitter

- Modified method `create_documents` to track start position of each

chunk

- The `start_index` is included in the metadata if the `add_start_index`

parameter in the class constructor is set to `True`

This enables referencing back to the original document, particularly

useful when a specific chunk is retrieved.
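
One way such tracking could work is sketched below. This is a minimal illustration under assumptions, not the `create_documents` implementation: the helper `chunks_with_start_index` is hypothetical, and it locates each chunk with `str.find`, searching forward from the previous chunk's start.

```python
from typing import Dict, List


def chunks_with_start_index(text: str, chunks: List[str]) -> List[Dict[str, object]]:
    """Attach the character offset of each chunk within the original text."""
    documents = []
    search_from = 0
    for chunk in chunks:
        # Search from just past the previous chunk's start so that repeated
        # text and overlapping chunks resolve to successive positions.
        start = text.find(chunk, search_from)
        documents.append({"page_content": chunk, "metadata": {"start_index": start}})
        search_from = start + 1
    return documents


text = "foo bar baz foo"
docs = chunks_with_start_index(text, ["foo bar", "bar baz", "foo"])
start_indexes = [doc["metadata"]["start_index"] for doc in docs]
print(start_indexes)
```

Slicing the original text at `start_index` recovers the chunk, which is what makes referencing back to the source document possible.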

<!-- If you're adding a new integration, please include:

1. a test for the integration - favor unit tests that does not rely on

network access.

2. an example notebook showing its use

See contribution guidelines for more information on how to write tests,

lint

etc:

https://github.com/hwchase17/langchain/blob/master/.github/CONTRIBUTING.md

-->

#### Who can review?

Tag maintainers/contributors who might be interested:

@eyurtsev @agola11

This PR adds a Baseten integration. I've done my best to follow the

contributor guidelines and add docs, an example notebook, and an

integration test modeled after similar integrations' tests.

Please let me know if there is anything I can do to improve the PR. When

it is merged, please tag https://twitter.com/basetenco and

https://twitter.com/philip_kiely as contributors (the note on the PR

template said to include Twitter accounts)

---------

Co-authored-by: rlm <pexpresss31@gmail.com>

- Added the `SingleStoreDB` vector store, a wrapper over the

SingleStore DB database that can be used for vector storage and provides

efficient similarity search.

- Added integration tests for the vector store

- Added a Jupyter notebook with an example

@dev2049

---------

Co-authored-by: Volodymyr Tkachuk <vtkachuk-ua@singlestore.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Simply fixing a small typo in the memory page.

Also removed an extra code block at the end of the file.

Along the way, the current outputs seem to have changed in a few places

so left that for posterity, and updated the number of runs which seems

harmless, though I can clean that up if preferred.

### Summary

Adds an `UnstructuredCSVLoader` for loading CSVs. One advantage of using

`UnstructuredCSVLoader` relative to the standard `CSVLoader` is that if

you use `UnstructuredCSVLoader` in `"elements"` mode, an HTML

representation of the table will be available in the metadata.

#### Who can review?

@hwchase17

@eyurtsev

Hi! I just added an example of how to use a custom scraping function

with the sitemap loader. I recently used this feature and had to dig in

the source code to find it. I thought it might be useful to other devs

to have an example in the Jupyter Notebook directly.

I only added the example to the documentation page.

@eyurtsev I was not able to run the lint. Please let me know if I have

to do anything else.

I know this is a very small contribution, but I hope it will be

valuable. My Twitter handle is @web3Dav3.

#### Who can review?

Tag maintainers/contributors who might be interested:

@hwchase17 - project lead

- @agola11

---------

Co-authored-by: Yessen Kanapin <yessen@deepinfra.com>

just change "to" to "too" so it matches the above prompt

This introduces the `YoutubeAudioLoader`, which will load blobs from a

YouTube URL and write them. Blobs are then parsed by

`OpenAIWhisperParser()`, as shown in this

[PR](https://github.com/hwchase17/langchain/pull/5580), but we extend

the parser to split audio such that each chunk meets the 25MB OpenAI

size limit. As shown in the notebook, this enables a very simple UX:

```

# Transcribe the video to text

loader = GenericLoader(YoutubeAudioLoader([url],save_dir),OpenAIWhisperParser())

docs = loader.load()

```

Tested on full set of Karpathy lecture videos:

```

# Karpathy lecture videos

urls = ["https://youtu.be/VMj-3S1tku0",

"https://youtu.be/PaCmpygFfXo",

"https://youtu.be/TCH_1BHY58I",

"https://youtu.be/P6sfmUTpUmc",

"https://youtu.be/q8SA3rM6ckI",

"https://youtu.be/t3YJ5hKiMQ0",

"https://youtu.be/kCc8FmEb1nY"]

# Directory to save audio files

save_dir = "~/Downloads/YouTube"

# Transcribe the videos to text

loader = GenericLoader(YoutubeAudioLoader(urls,save_dir),OpenAIWhisperParser())

docs = loader.load()

```

In the [Databricks

integration](https://python.langchain.com/en/latest/integrations/databricks.html)

and [Databricks

LLM](https://python.langchain.com/en/latest/modules/models/llms/integrations/databricks.html),

we suggested that users set the environment variable `DATABRICKS_API_TOKEN`.

However, this is inconsistent with other Databricks libraries. To make

it consistent, this PR changes the variable from `DATABRICKS_API_TOKEN`

to `DATABRICKS_TOKEN`.

After the changes, `DATABRICKS_API_TOKEN` no longer appears in the docs:

```

$ git grep DATABRICKS_API_TOKEN|wc -l

0

$ git grep DATABRICKS_TOKEN|wc -l

8

```

cc @hwchase17 @dev2049 @mengxr since you have reviewed the previous PRs.

# Scores in Vectorstores' Docs Are Explained

The following vectorstores can return scores with similar documents by

using `similarity_search_with_score`:

- chroma

- docarray_hnsw

- docarray_in_memory

- faiss

- myscale

- qdrant

- supabase

- vectara

- weaviate

However, in the documentation, these scores were either not explained at all or

explained in a way that could lead to misunderstandings (e.g., FAISS).

For instance, in the FAISS documentation: if we treat the score returned by the

function as a similarity score, we would conclude that a document with

a higher score is more similar to the source document. However, since

the scores returned by the function are distance scores, smaller

scores correspond to more similar documents.
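
A small sketch may make the distinction concrete. It assumes Euclidean (L2) distance purely for illustration; the actual metric depends on the vector store and its configuration, and the helper names here are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def l2_distance(a: List[float], b: List[float]) -> float:
    """Euclidean distance between two vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def rank_by_distance(
    query: List[float], docs: Dict[str, List[float]]
) -> List[Tuple[str, float]]:
    """Score each document by distance and sort ascending: smallest is best."""
    scored = [(name, l2_distance(query, vector)) for name, vector in docs.items()]
    return sorted(scored, key=lambda pair: pair[1])


query = [1.0, 0.0]
docs = {"near": [0.9, 0.1], "far": [-1.0, 0.0]}
ranked = rank_by_distance(query, docs)
print(ranked)  # the "near" document comes first because its score is smaller
```

With a similarity score such as cosine similarity the sort order would be reversed, which is why the documentation needs to say which kind of score is returned.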

For the libraries other than Vectara, I documented the score each one uses after

checking the source libraries. Since I couldn't be certain

about the score metric used by Vectara, I didn't make any changes to its

documentation. The links in Vectara's documentation had been

broken by updates, so I replaced them with working ones.

VectorStores / Retrievers / Memory

- @dev2049

my twitter: [berkedilekoglu](https://twitter.com/berkedilekoglu)

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Aviary is an open source toolkit for evaluating and deploying open

source LLMs. You can find out more about it on

[http://github.com/ray-project/aviary](http://github.com/ray-project/aviary). You can try it out at

[http://aviary.anyscale.com](http://aviary.anyscale.com).

This code adds support for Aviary in LangChain. To minimize

dependencies, it connects directly to the HTTP endpoint.

The current implementation is not accelerated and uses the default

implementation of `predict` and `generate`.

It includes a test and a simple example.

@hwchase17 and @agola11 could you have a look at this?

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# OpenAIWhisperParser

This PR creates a new parser, `OpenAIWhisperParser`, that uses the

[OpenAI Whisper

model](https://platform.openai.com/docs/guides/speech-to-text/quickstart)

to perform transcription of audio files to text (`Documents`). Please

see the notebook for usage.

# Token text splitter for sentence transformers

The current `TokenTextSplitter` only works with OpenAI models via the

`tiktoken` package. This is not clear from the name `TokenTextSplitter`.

In this (first) PR, a token-based text splitter for sentence transformer

models is added. In the future, I think we should work towards injecting

a tokenizer into the `TokenTextSplitter` to make it more flexible.
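
The "inject a tokenizer" direction could look something like the sketch below. It is an assumption-laden illustration rather than the splitter added in this PR: `split_by_tokens` is a hypothetical helper, and the whitespace tokenizer stands in for a real `tiktoken` or sentence-transformers tokenizer.

```python
from typing import Callable, List


def split_by_tokens(
    text: str,
    encode: Callable[[str], List[str]],
    decode: Callable[[List[str]], str],
    tokens_per_chunk: int,
    chunk_overlap: int = 0,
) -> List[str]:
    """Split text into chunks of at most tokens_per_chunk tokens."""
    if not 0 <= chunk_overlap < tokens_per_chunk:
        raise ValueError("chunk_overlap must be in [0, tokens_per_chunk)")
    tokens = encode(text)
    step = tokens_per_chunk - chunk_overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(decode(tokens[start:start + tokens_per_chunk]))
        # Stop once a chunk has reached the end of the token sequence.
        if start + tokens_per_chunk >= len(tokens):
            break
    return chunks


# Toy tokenizer: split on whitespace, join with single spaces.
chunks = split_by_tokens(
    "a b c d e", str.split, " ".join, tokens_per_chunk=3, chunk_overlap=1
)
print(chunks)
```

Since the splitter only depends on the `encode` and `decode` callables, swapping tokenizers does not require a new splitter class.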

Could perhaps be reviewed by @dev2049

---------

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Fixes #5638. Retitles "Amazon Bedrock" page to "Bedrock" so that the

Integrations section of the left nav is properly sorted in alphabetical

order.

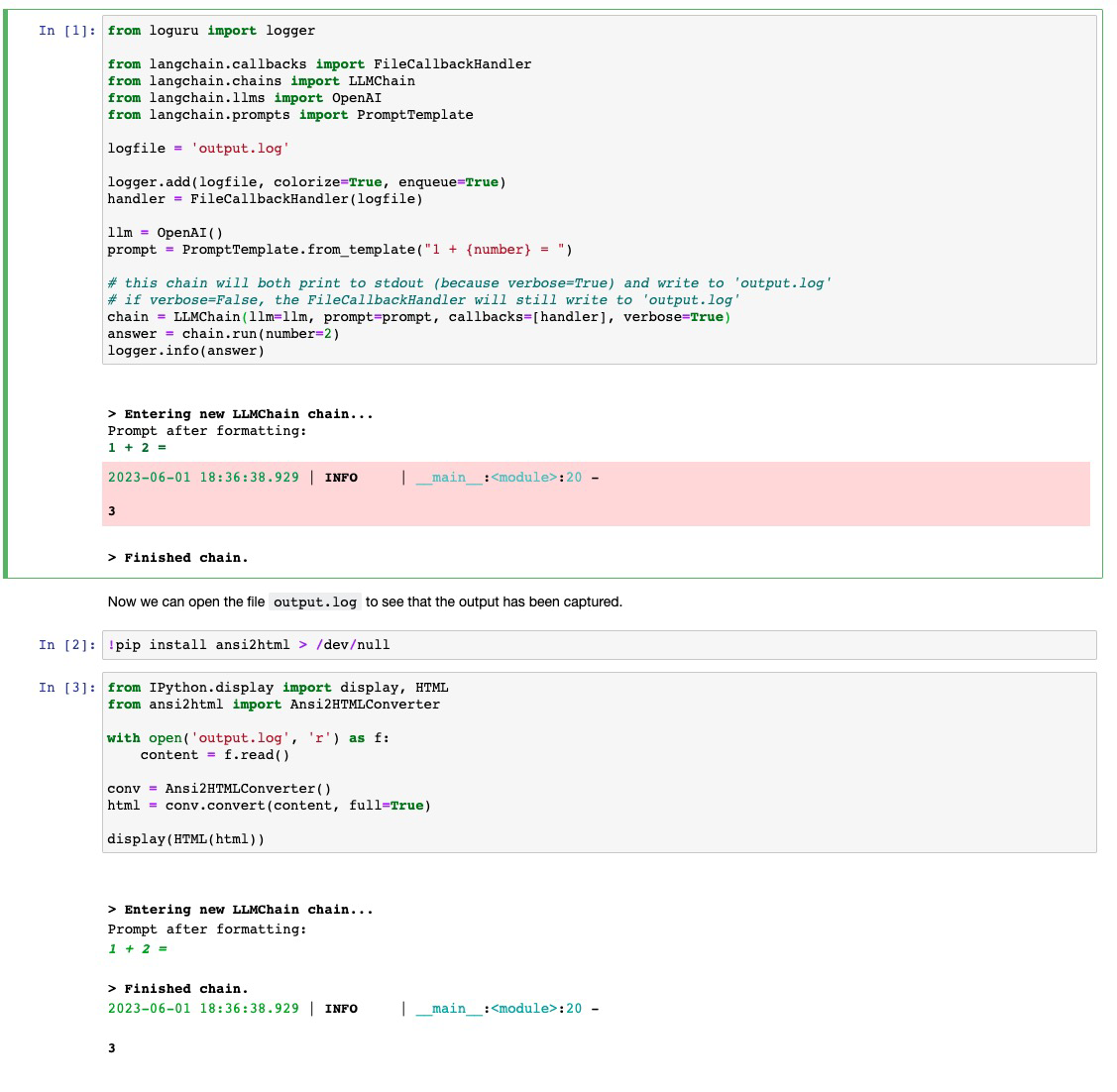

# Like [StdoutCallbackHandler](https://github.com/hwchase17/langchain/blob/master/langchain/callbacks/stdout.py), but writes to a file

When running experiments I have found myself wanting to log the outputs

of my chains in a more lightweight way than using WandB tracing. This PR

contributes a callback handler that writes to file what

`StdoutCallbackHandler` would print.

## Example Notebook

See the included `filecallbackhandler.ipynb` notebook for usage. Would

it be better to include this notebook under `modules/callbacks` or under

`integrations/`?

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@agola11

Created fix for 5475

Currently in `PGVector`, we do not have any function that returns the

instance of an existing store. `from_documents` always adds embeddings

and then returns the store. This fix adds a function that

returns the instance of an existing store.

Also changed the Jupyter example for `PGVector` to show how to

use the function.

Fixes #5475

#### Who can review?

@dev2049

@hwchase17

---------

Co-authored-by: rajib76 <rajib76@yahoo.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

This PR corrects a minor typo in the Momento chat message history

notebook and also expands the title from "Momento" to "Momento Chat

History", in line with other chat history storage providers.

#### Who can review?

cc @dev2049 who reviewed the original integration

Fixes the pgvector Python example notebook: one of the variables was

not referencing anything.

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

VectorStores / Retrievers / Memory

- @dev2049

# Make FinalStreamingStdOutCallbackHandler more robust by ignoring new lines & white spaces

`FinalStreamingStdOutCallbackHandler` doesn't work out of the box with

`ChatOpenAI`, as its output is tokenized slightly differently than that of `OpenAI`. The

response of `OpenAI` contains the tokens `["\nFinal", " Answer", ":"]`

while that of `ChatOpenAI` contains `["Final", " Answer", ":"]`.

This PR makes `FinalStreamingStdOutCallbackHandler` more robust by

ignoring new lines & white spaces when determining if the answer prefix

has been reached.
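
The whitespace-insensitive check can be illustrated with a small stand-alone sketch. It is not the handler's actual code: the `AnswerPrefixDetector` class and `final_answer_tokens` helper are hypothetical, and stripping each token before comparison (and skipping whitespace-only tokens) is one assumed way of ignoring new lines and white spaces.

```python
from typing import List


class AnswerPrefixDetector:
    """Tracks recent tokens and reports when the answer prefix has been seen."""

    def __init__(self, answer_prefix_tokens: List[str]) -> None:
        self.prefix = [token.strip() for token in answer_prefix_tokens]
        self.last_tokens: List[str] = []
        self.answer_reached = False

    def on_new_token(self, token: str) -> bool:
        """Return True if this token belongs to the final answer."""
        if self.answer_reached:
            return True
        stripped = token.strip()
        if not stripped:
            return False  # whitespace-only tokens never affect the match
        # Keep a sliding window as long as the prefix and compare.
        self.last_tokens = (self.last_tokens + [stripped])[-len(self.prefix):]
        if self.last_tokens == self.prefix:
            self.answer_reached = True
        return False


def final_answer_tokens(stream: List[str], prefix: List[str]) -> List[str]:
    detector = AnswerPrefixDetector(prefix)
    return [token for token in stream if detector.on_new_token(token)]


prefix = ["Final", "Answer", ":"]
openai_stream = ["I", " know", "\nFinal", " Answer", ":", " 42"]
chat_stream = ["I", " know", "Final", " Answer", ":", " 42"]
print(final_answer_tokens(openai_stream, prefix))
print(final_answer_tokens(chat_stream, prefix))
```

Both token streams yield the same final-answer tokens, even though only one of them has the leading newline on `Final`.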

Fixes #5433

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

Tracing / Callbacks

- @agola11

Twitter: [@UmerHAdil](https://twitter.com/@UmerHAdil) | Discord:

RicChilligerDude#7589

# Implements support for Personal Access Token Authentication in the ConfluenceLoader

Fixes #5191

Implements a new optional parameter for the `ConfluenceLoader`: `token`.

This allows the use of personal access token authentication when using the

on-prem server version of Confluence.

## Who can review?

Community members can review the PR once tests pass. Tag

maintainers/contributors who might be interested:

@eyurtsev @Jflick58

Twitter Handle: felipe_yyc

---------

Co-authored-by: Felipe <feferreira@ea.com>

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>

# docs: modules pages simplified

Fixes #5627

Merged several repetitive sections in the `modules` pages. Some text

that was hard to understand was also simplified.

## Who can review?

@hwchase17

@dev2049

# Fixed multi input prompt for MapReduceChain

Added `kwargs` support for inner chains of `MapReduceChain` via the

`from_params` method.

Currently, initialising a `MapReduceChain` with the `from_params` method

doesn't work if the prompt has multiple inputs. This happens because it uses

`StuffDocumentsChain` and `MapReduceDocumentsChain` underneath, both of

which require specifying `document_variable_name` if the `prompt` of their

`llm_chain` has more than one `input`.

With this PR, I have added support for passing their respective `kwargs`

via the `from_params` method.

## Fixes https://github.com/hwchase17/langchain/issues/4752

## Who can review?

@dev2049 @hwchase17 @agola11

---------

Co-authored-by: imeckr <chandanroutray2012@gmail.com>

# Unstructured Excel Loader

Adds an `UnstructuredExcelLoader` class for `.xlsx` and `.xls` files.

Works with `unstructured>=0.6.7`. A plain text representation of the

Excel file will be available under the `page_content` attribute in the

doc. If you use the loader in `"elements"` mode, an HTML representation

of the Excel file will be available under the `text_as_html` metadata

key. Each sheet in the Excel document is its own document.

### Testing

```python

from langchain.document_loaders import UnstructuredExcelLoader

loader = UnstructuredExcelLoader(

"example_data/stanley-cups.xlsx",

mode="elements"

)

docs = loader.load()

```

## Who can review?

@hwchase17

@eyurtsev

Co-authored-by: Alvaro Bartolome <alvarobartt@gmail.com>

Co-authored-by: Daniel Vila Suero <daniel@argilla.io>

Co-authored-by: Tom Aarsen <37621491+tomaarsen@users.noreply.github.com>

Co-authored-by: Tom Aarsen <Cubiegamedev@gmail.com>

# Create elastic_vector_search.ElasticKnnSearch class

This extends `langchain/vectorstores/elastic_vector_search.py` by adding

a new class `ElasticKnnSearch`

Features:

- Allow creating an index with the `dense_vector` mapping compatible

with kNN search

- Store embeddings in index for use with kNN search (correct mapping

creates HNSW data structure)

- Perform approximate kNN search

- Perform hybrid BM25 (`query{}`) + kNN (`knn{}`) search

- Perform kNN search by either providing a `query_vector` or passing a

hosted `model_id` to use query_vector_builder to automatically generate

a query_vector at search time

Connection options

- Using `cloud_id` from Elastic Cloud

- Passing elasticsearch client object

Search options

- query

- k

- query_vector

- model_id

- size

- source

- knn_boost (hybrid search)

- query_boost (hybrid search)

- fields
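
To show how several of these options fit together, here is a sketch of a hybrid request body in the shape accepted by the Elasticsearch search API (a top-level `query` plus a top-level `knn` section). It is not taken from `ElasticKnnSearch`: the helper name, the default field names `text` and `vector`, and the default values are assumptions for illustration.

```python
from typing import Any, Dict, List


def hybrid_search_body(
    query_text: str,
    query_vector: List[float],
    text_field: str = "text",      # hypothetical field holding the document text
    vector_field: str = "vector",  # hypothetical dense_vector field
    k: int = 10,
    num_candidates: int = 50,
    size: int = 10,
    query_boost: float = 0.5,
    knn_boost: float = 0.5,
) -> Dict[str, Any]:
    """Build a search body combining a BM25 match query with approximate kNN."""
    return {
        # BM25 part: a standard match query with its own boost.
        "query": {"match": {text_field: {"query": query_text, "boost": query_boost}}},
        # kNN part: nearest neighbours of query_vector in the vector field.
        "knn": {
            "field": vector_field,
            "query_vector": query_vector,
            "k": k,
            "num_candidates": num_candidates,
            "boost": knn_boost,
        },
        "size": size,
    }


body = hybrid_search_body("hello world", [0.1, 0.2, 0.3], k=5, size=5)
print(body)
```

The two boosts weight the BM25 and kNN scores relative to each other when Elasticsearch combines them into the final ranking.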

This also adds examples to

`docs/modules/indexes/vectorstores/examples/elasticsearch.ipynb`

Fixes [#5346](https://github.com/hwchase17/langchain/issues/5346)

cc: @dev2049

-->

---------

Co-authored-by: Dev 2049 <dev.dev2049@gmail.com>