- **Description:** implement a [Quip](https://quip.com) loader

- **Issue:** https://github.com/langchain-ai/langchain/issues/10352

- **Dependencies:** No

- Passes `make format`, `make lint`, and `make test`

---------

Co-authored-by: Hao Fan <h_fan@apple.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

The latest release is broken; this fixes it.

---------

Co-authored-by: Roman Vasilyev <rvasilyev@mozilla.com>

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

Prior to this PR, `ruff` was used only for linting and not for formatting, despite the names of the commands. This PR uses it for both linting and autoformatting code.

This input key was missed in the last update PR:

https://github.com/langchain-ai/langchain/pull/7391

The input/output formats are intended to be like this:

```
{"inputs": [<prompt>]}
{"outputs": [<output_text>]}
```
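As a minimal sketch of these payload shapes, the two helpers below serialize a prompt into the `inputs` format and pull the generated text out of the `outputs` format. `transform_input` and `transform_output` are hypothetical names chosen for illustration (not taken from the PR), and the sketch assumes one prompt per request.

```python
import json


def transform_input(prompt: str) -> bytes:
    """Serialize one prompt as {"inputs": [<prompt>]} (hypothetical helper)."""
    return json.dumps({"inputs": [prompt]}).encode("utf-8")


def transform_output(body: bytes) -> str:
    """Return the first generated text from {"outputs": [<output_text>]} (hypothetical helper)."""
    response = json.loads(body.decode("utf-8"))
    return response["outputs"][0]


request_body = transform_input("What is LangChain?")
print(request_body)  # b'{"inputs": ["What is LangChain?"]}'
```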

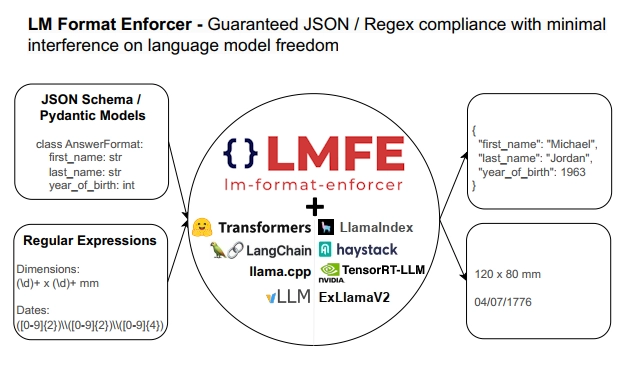

## Description

This PR adds support for [lm-format-enforcer](https://github.com/noamgat/lm-format-enforcer) to LangChain.

The library is similar to jsonformer / RELLM, which are supported in LangChain, but has several advantages, such as:

- Batching and beam search support
- More complete JSON Schema support
- The LLM has control over whitespace, improving quality
- Better runtime performance, since the LLM's `generate()` function is called only once per `generate()` call
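To make the single-pass idea concrete, here is a toy, string-level sketch of prefix-constrained greedy decoding: at every step the candidate tokens are filtered to those that can still complete one of the allowed outputs, so the format is enforced inside a single decoding loop rather than by re-invoking generation per field. The fixed scoring function stands in for an LLM. This illustrates the general technique only; it is not lm-format-enforcer's implementation, which works from a JSON Schema or regex rather than an enumerated list of allowed outputs.

```python
from typing import Callable, List, Sequence


def allowed_next_tokens(prefix: str, vocab: Sequence[str], valid_outputs: Sequence[str]) -> List[str]:
    """Tokens that keep `prefix` on the way to at least one valid output."""
    return [tok for tok in vocab if any(out.startswith(prefix + tok) for out in valid_outputs)]


def constrained_greedy_decode(
    score: Callable[[str, str], float],
    vocab: Sequence[str],
    valid_outputs: Sequence[str],
    max_steps: int = 20,
) -> str:
    """Greedy decoding where the format is enforced at every step of one loop."""
    text = ""
    for _ in range(max_steps):
        if text in valid_outputs:
            break  # a complete, well-formed output has been produced
        candidates = allowed_next_tokens(text, vocab, valid_outputs)
        if not candidates:
            break  # no token can continue the format; stop early
        # The "model" only chooses among tokens the format allows.
        text += max(candidates, key=lambda tok: score(text, tok))
    return text


# A fake model that would rather ramble ("hello") than answer in JSON.
vocab = ['{"answer": "', "yes", "no", '"}', "hello"]
preferences = {"hello": 10.0, "no": 2.0, "yes": 1.0}
valid = ['{"answer": "yes"}', '{"answer": "no"}']
print(constrained_greedy_decode(lambda text, tok: preferences.get(tok, 0.0), vocab, valid))
# {"answer": "no"}
```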

The integration is loosely based on the jsonformer integration in terms of project structure.

## Dependencies

No compile-time dependency was added, but if `lm-format-enforcer` is not installed, a runtime error will occur when you try to use the integration.

## Tests

Because the integration modifies the internal parameters of the underlying Hugging Face transformers LLM, it cannot be tested without loading a real LM, which requires internet access. So, similar to the jsonformer and RELLM integrations, testing is done via the notebook.

## Twitter Handle

[@noamgat](https://twitter.com/noamgat)

Looking forward to hearing feedback!

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Best to review one commit at a time, since two of the commits are 100% autogenerated changes from running `ruff format`:

- Install and use `ruff format` instead of black for code formatting.

- Output of `ruff format .` in the `langchain` package.

- Use `ruff format` in experimental package.

- Format changes in experimental package by `ruff format`.

- Manual formatting fixes to make `ruff .` pass.

I always take 20-30 seconds to re-discover where the `convert_to_openai_function` wrapper lives in our codebase. Chat LangChain [has no clue](https://smith.langchain.com/public/3989d687-18c7-4108-958e-96e88803da86/r) what to do either. There's the older `create_openai_fn_chain`, but we haven't been recommending it in LCEL. The example we show in the [cookbook](https://python.langchain.com/docs/expression_language/how_to/binding#attaching-openai-functions) is really verbose.

General function calling should be as simple as possible, so this seems a bit more ergonomic to me (feel free to disagree). Another option would be to coerce directly in the class's init (or when calling invoke), if provided. I'm not 100% set against that, but that approach may be too easy and not simple. This PR feels like a decent compromise between simple and easy.

```python
from enum import Enum
from typing import Optional

from pydantic import BaseModel, Field

from langchain.chat_models import ChatOpenAI


class Category(str, Enum):
    """The category of the issue."""

    bug = "bug"
    nit = "nit"
    improvement = "improvement"
    other = "other"


class IssueClassification(BaseModel):
    """Classify an issue."""

    category: Category
    other_description: Optional[str] = Field(
        default=None,  # explicit default so the field is optional in pydantic v1 and v2
        description="If classified as 'other', the suggested other category",
    )


llm = ChatOpenAI().bind_functions([IssueClassification])
llm.invoke("This PR adds a convenience wrapper to the bind argument")
# AIMessage(content='', additional_kwargs={'function_call': {'name': 'IssueClassification', 'arguments': '{\n "category": "improvement"\n}'}})
```
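The bound model returns the function call as a JSON string inside `additional_kwargs`, so the caller still has to decode it. Here is a minimal stdlib sketch of that step, assuming the output shape shown in the comment on the `AIMessage` result; `parse_issue_classification` is a hypothetical helper, not a LangChain output parser, and `Category` is repeated so the block runs on its own.

```python
import json
from enum import Enum
from typing import Any, Dict, Optional, Tuple


class Category(str, Enum):
    """The category of the issue (same values as in the example above)."""

    bug = "bug"
    nit = "nit"
    improvement = "improvement"
    other = "other"


def parse_issue_classification(additional_kwargs: Dict[str, Any]) -> Tuple[Category, Optional[str]]:
    """Decode the IssueClassification function call from an AIMessage's additional_kwargs."""
    call = additional_kwargs["function_call"]
    if call["name"] != "IssueClassification":
        raise ValueError(f"unexpected function: {call['name']}")
    arguments = json.loads(call["arguments"])  # arguments arrive as a JSON string
    # Category(...) raises ValueError for values outside the enum.
    return Category(arguments["category"]), arguments.get("other_description")


kwargs = {"function_call": {"name": "IssueClassification", "arguments": '{\n "category": "improvement"\n}'}}
print(parse_issue_classification(kwargs))
```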

- Prefer lambda type annotations over the inferred dict schema
- For sequences that start with `RunnableAssign`, infer the sequence input type as "input type of the 2nd item in the sequence minus the output type of the `RunnableAssign`"
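A rough sketch of the two rules, using plain dicts as stand-in schemas. The helper names are hypothetical and this is not LangChain's actual schema-inference code; `inferred_keys` is assumed to come from some separate analysis of which dict keys the function reads.

```python
import inspect
from typing import Any, Callable, Dict, Iterable, Union

# A schema is either a concrete type or a {field_name: type} dict (stand-in for a pydantic model).
Schema = Union[type, Dict[str, Any]]


def lambda_input_schema(func: Callable[..., Any], inferred_keys: Iterable[str]) -> Schema:
    """Rule 1: prefer the function's own annotation over an inferred dict schema."""
    params = list(inspect.signature(func).parameters.values())
    if params and params[0].annotation is not inspect.Parameter.empty:
        return params[0].annotation
    return {key: Any for key in inferred_keys}


def assign_sequence_input(second_step_input: Dict[str, Any], assign_output: Dict[str, Any]) -> Dict[str, Any]:
    """Rule 2: fields the 2nd step needs, minus the fields RunnableAssign adds."""
    return {name: tp for name, tp in second_step_input.items() if name not in assign_output}


def typed_step(x: int) -> int:
    return x + 1


print(lambda_input_schema(typed_step, inferred_keys=["ignored"]))  # <class 'int'>
print(lambda_input_schema(lambda x: x["question"], inferred_keys=["question"]))
# A prompt needs {question, context}; the leading RunnableAssign supplies context.
print(assign_sequence_input({"question": str, "context": str}, {"context": str}))
```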


- Add a MultiOn close function, update the key value, and add async functionality

- Fix the key value "TabId not found" error (updated to use the latest key value)

@hwchase17

- **Description:** This pull request stops exposing secrets in raw format.

- **Issue:** The Fireworks API key was exposed when printing the LangChain object ([#12165](https://github.com/langchain-ai/langchain/issues/12165)).

- **Maintainer:** @eyurtsev

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** The Textract PDF Loader now generates linearized output, meaning it replicates the structure of the source document as closely as possible based on the features passed into the call (e.g. LAYOUT, FORMS, TABLES). With LAYOUT, reading order for multi-column documents and identification of lists and figures are supported; with TABLES, it also generates the table structure. FORMS will indicate "key: value" with columns.

- **Issue:** fixes #12068

- **Dependencies:** adds amazon-textract-textractor, which provides the linearization

- **Tag maintainer:** @3coins

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Get the correct token count instead of using the GPT-2 model.

**Description:**

Implement `get_num_tokens` within VertexLLM to use Google's `count_tokens` function (https://cloud.google.com/vertex-ai/docs/generative-ai/get-token-count). This way we don't need to download the GPT-2 model from Hugging Face, and map-reduce chains get the correct token count.

**Tag maintainer:**

@lkuligin

**Twitter handle:**

My twitter: @abehsu1992626

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Following this tutorial about using OpenAI Embeddings with FAISS: https://python.langchain.com/docs/integrations/vectorstores/faiss

```python
from langchain.embeddings.openai import OpenAIEmbeddings
from langchain.text_splitter import CharacterTextSplitter
from langchain.vectorstores import FAISS
from langchain.document_loaders import TextLoader

loader = TextLoader("../../../extras/modules/state_of_the_union.txt")
documents = loader.load()
text_splitter = CharacterTextSplitter(chunk_size=1000, chunk_overlap=0)
docs = text_splitter.split_documents(documents)
embeddings = OpenAIEmbeddings()
```

This works fine:

```python
db = FAISS.from_documents(docs, embeddings)
query = "What did the president say about Ketanji Brown Jackson"
results = db.similarity_search(query)  # don't overwrite `docs`, it is reused below
```

But the async version does not:

```python
# Top-level await, e.g. in a notebook or inside an async function
db = await FAISS.afrom_documents(docs, embeddings)  # NotImplementedError
query = "What did the president say about Ketanji Brown Jackson"
# Under the hood this uses await asyncio.get_event_loop().run_in_executor(...),
# so it calls OpenAIEmbeddings.embed_query instead of OpenAIEmbeddings.aembed_query
results = await db.asimilarity_search(query)
```

So this PR adds async/await support for FAISS.
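A toy illustration of the difference (not FAISS itself): an async path that awaits `aembed_documents`/`aembed_query` end to end, instead of wrapping the sync `embed_query` in an executor. `FakeEmbeddings` and `TinyVectorStore` are hypothetical stand-ins; only the method names mirror the ones discussed above, and the brute-force L2 search is an assumption chosen for brevity.

```python
import asyncio
import math
from typing import List, Sequence


class FakeEmbeddings:
    """Hypothetical embeddings: vector = (count of 'a', count of 'o')."""

    def __init__(self) -> None:
        self.async_calls = 0

    def embed_query(self, text: str) -> List[float]:
        return [float(text.count("a")), float(text.count("o"))]

    async def aembed_query(self, text: str) -> List[float]:
        self.async_calls += 1
        await asyncio.sleep(0)  # stands in for a non-blocking HTTP request
        return self.embed_query(text)

    async def aembed_documents(self, texts: Sequence[str]) -> List[List[float]]:
        # Embed all documents concurrently on the event loop.
        return list(await asyncio.gather(*(self.aembed_query(t) for t in texts)))


class TinyVectorStore:
    """Brute-force L2 search; a stand-in for a real index such as FAISS."""

    def __init__(self, texts: Sequence[str], vectors: List[List[float]], embeddings: FakeEmbeddings) -> None:
        self.texts = list(texts)
        self.vectors = vectors
        self.embeddings = embeddings

    @classmethod
    async def afrom_texts(cls, texts: Sequence[str], embeddings: FakeEmbeddings) -> "TinyVectorStore":
        vectors = await embeddings.aembed_documents(texts)  # async end to end
        return cls(texts, vectors, embeddings)

    async def asimilarity_search(self, query: str, k: int = 1) -> List[str]:
        query_vector = await self.embeddings.aembed_query(query)  # not embed_query
        ranked = sorted(zip(self.texts, self.vectors), key=lambda tv: math.dist(query_vector, tv[1]))
        return [text for text, _ in ranked[:k]]


async def main() -> None:
    embeddings = FakeEmbeddings()
    store = await TinyVectorStore.afrom_texts(["banana", "dog", "cat"], embeddings)
    print(await store.asimilarity_search("aaa"))  # ['banana']
    print(embeddings.async_calls)  # 4: three documents + one query


asyncio.run(main())
```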

---------

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

- Description: adds support for Activeloop's DeepMemory feature, which boosts recall by up to 25%. Also added a Jupyter notebook showcasing the feature and made the index params explicit.

- Twitter handle: we would really appreciate it if this could be announced on Twitter.

---------

Co-authored-by: adolkhan <adilkhan.sarsen@alumni.nu.edu.kz>

Hey, we're looking to invest more in adding Cohere integrations to LangChain, so we'd love to get a better idea of how it's used. Hopefully this PR is acceptable. This week I'm also going to be looking into adding our new [retrieval augmented generation product](https://txt.cohere.com/chat-with-rag/) to LangChain.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>


## **Description:**

When building our own readthedocs.io scraper, we noticed a couple of interesting things:

1. Text lines with many nested `<span>` tags produce unclean text with a bunch of newlines. For example, in [LangChain's documentation](https://api.python.langchain.com/en/latest/document_loaders/langchain.document_loaders.readthedocs.ReadTheDocsLoader.html#langchain.document_loaders.readthedocs.ReadTheDocsLoader), a single line is represented by a complicated nested HTML structure, and the naive `soup.get_text()` call currently being made creates a newline for each nested HTML element. As a result, the document loader produces a messy, newline-separated blob of text. This is true in a lot of cases.

<img width="945" alt="Screenshot 2023-10-26 at 6 15 39 PM" src="https://github.com/langchain-ai/langchain/assets/44193474/eca85d1f-d2bf-4487-a18a-e1e732fadf19">

<img width="1031" alt="Screenshot 2023-10-26 at 6 16 00 PM" src="https://github.com/langchain-ai/langchain/assets/44193474/035938a0-9892-4f6a-83cd-0d7b409b00a3">

Additionally, content from iframes, code from scripts, CSS from styles, etc. will be picked up if it is nested under the selected element (which happens more often than you'd think). For example, [this page](https://pydeck.gl/gallery/contour_layer.html#) will scrape 1.5 million characters of content that looks like this:

<img width="1372" alt="Screenshot 2023-10-26 at 6 32 55 PM" src="https://github.com/langchain-ai/langchain/assets/44193474/dbd89e39-9478-4a18-9e84-f0eb91954eac">

Therefore, I wrote a recursive `_get_clean_text(soup)` class function that (1) skips all irrelevant elements and (2) only adds newlines when necessary.

2. Index pages (like [this one](https://api.python.langchain.com/en/latest/api_reference.html)) would be loaded, chunked, and eventually embedded. This is really bad, not just because the user will be embedding irrelevant information, but because index pages are very likely to show up in retrieved content, making retrieval less effective (in our tests). Therefore, I added a bool parameter `exclude_index_pages`, defaulting to False (the current behavior, although I'd petition to default this to True), that skips all pages where links take up 50%+ of the page. Through manual testing, this seems to be the best threshold.
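Both ideas can be sketched with the standard library's `html.parser` instead of BeautifulSoup. This is a deliberate swap so the example has no dependencies; it is not the PR's `_get_clean_text`. The skipped and block-level tag sets, and measuring "links take up 50%+ of the page" as the share of visible characters inside `<a>` tags, are assumptions.

```python
from html.parser import HTMLParser
from typing import List, Optional, Tuple

# Assumed: elements whose contents are never readable page text.
SKIP_TAGS = {"script", "style", "iframe", "noscript", "svg"}
# Assumed: elements that start a new line; all others (span, a, code, ...) are inline.
BLOCK_TAGS = {"p", "div", "section", "article", "li", "ul", "ol", "pre", "table",
              "tr", "br", "h1", "h2", "h3", "h4", "h5", "h6", "dt", "dd"}


class CleanTextParser(HTMLParser):
    """Collects visible text, breaking lines only at block elements."""

    def __init__(self) -> None:
        super().__init__()
        self.lines: List[List[str]] = [[]]
        self.skip_depth = 0  # > 0 while inside script/style/iframe/...
        self.link_depth = 0  # > 0 while inside an <a> element
        self.total_chars = 0
        self.link_chars = 0

    def handle_starttag(self, tag: str, attrs: List[Tuple[str, Optional[str]]]) -> None:
        if tag in SKIP_TAGS:
            self.skip_depth += 1
        elif tag == "a":
            self.link_depth += 1
        elif tag in BLOCK_TAGS:
            self.lines.append([])

    def handle_endtag(self, tag: str) -> None:
        if tag in SKIP_TAGS:
            self.skip_depth = max(0, self.skip_depth - 1)
        elif tag == "a":
            self.link_depth = max(0, self.link_depth - 1)
        elif tag in BLOCK_TAGS:
            self.lines.append([])

    def handle_data(self, data: str) -> None:
        if self.skip_depth:
            return
        text = " ".join(data.split())  # collapse whitespace, including stray newlines
        if not text:
            return
        self.lines[-1].append(text)
        self.total_chars += len(text)
        if self.link_depth:
            self.link_chars += len(text)

    def text(self) -> str:
        # Inline pieces are joined with spaces; empty lines are dropped.
        return "\n".join(" ".join(parts) for parts in self.lines if parts)


def _parse(html: str) -> CleanTextParser:
    parser = CleanTextParser()
    parser.feed(html)
    parser.close()
    return parser


def get_clean_text(html: str) -> str:
    return _parse(html).text()


def is_index_page(html: str, threshold: float = 0.5) -> bool:
    """True when link text makes up at least `threshold` of the visible text."""
    parser = _parse(html)
    return parser.total_chars > 0 and parser.link_chars / parser.total_chars >= threshold


print(get_clean_text("<p>Hello <span>nested <b>world</b></span></p><script>var x;</script><p>Bye</p>"))
```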

## Other Information:

- **Issue:** n/a

- **Dependencies:** n/a

- **Tag maintainer:** n/a

- **Twitter handle:** @andrewthezhou

---------

Co-authored-by: Andrew Zhou <andrew@heykona.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:**
  * Add unit tests for `document_transformers/beautiful_soup_transformer.py`
  * Test basic functionality (extract tags, remove tags, drop lines)
  * Add a FIXME comment about the order of tags not being preserved (and a passing test, but with the expected tags now out of order)

- **Issue:** None

- **Dependencies:** None

- **Tag maintainer:** @rlancemartin

- **Twitter handle:** `peter_v`

Please make sure your PR is passing linting and testing before submitting.

=> OK: I ran `make format`, `make test` (passing after installing beautifulsoup4), and `make lint`.

- **Description:** Added masking of the API key for the AI21 LLM when printed, and improved the docstring for the AI21 LLM.
  - Updated the AI21 LLM to use `SecretStr` from pydantic to manage the API key securely.
  - Improved the AI21 LLM docstring: it now mentions that the API key can also be passed as a named parameter to the constructor.
  - Added unit tests.

- **Issue:** #12165

- **Tag maintainer:** @eyurtsev
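A minimal sketch of the masking behavior, assuming pydantic's `SecretStr` (available in both pydantic v1 and v2). `AI21LikeLLM` is a hypothetical stand-in model, not the real AI21 class.

```python
from pydantic import BaseModel, SecretStr


class AI21LikeLLM(BaseModel):
    """Hypothetical stand-in for an LLM wrapper that stores an API key."""

    ai21_api_key: SecretStr  # a plain str passed in is wrapped automatically
    temperature: float = 0.7


llm = AI21LikeLLM(ai21_api_key="my-secret-key")
print(llm)  # the key is shown as '**********', never in plain text

# Unwrap the real value only at the point of use, e.g. when building a request.
api_key = llm.ai21_api_key.get_secret_value()
```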

---------

Co-authored-by: Anirudh Gautam <anirudh@Anirudhs-Mac-mini.local>

Currently this raises an error:

```python
from langchain.schema.runnable import RunnableLambda

bound = RunnableLambda(lambda x: x).with_config({"callbacks": []})
# ConfigError: field "callbacks" not yet prepared so type is still a ForwardRef, you might need to call RunnableConfig.update_forward_refs().
```

Rather than deal with cyclic imports, extra load time, etc., I think it makes sense to just have a separate `Callbacks` definition here that is a relaxed type hint.
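A sketch of that relaxed-type-hint pattern, assuming a `TYPE_CHECKING` split: type checkers see the precise type, while the runtime alias is a loose `Optional[Union[List[Any], Any]]` that is fully defined, so no `ForwardRef` is left for pydantic to resolve. The module path under `TYPE_CHECKING` and the `RunnableConfigSketch` name are hypothetical.

```python
from typing import TYPE_CHECKING, Any, List, Optional, TypedDict, Union, get_type_hints

if TYPE_CHECKING:
    # Only type checkers execute this import, so it can't cause a runtime import cycle.
    from mypackage.callbacks import Callbacks  # hypothetical module path
else:
    # Runtime: a relaxed hint that is fully defined, so nothing is left as a ForwardRef.
    Callbacks = Optional[Union[List[Any], Any]]


class RunnableConfigSketch(TypedDict, total=False):
    """Hypothetical, trimmed-down config dict."""

    tags: List[str]
    callbacks: Callbacks


config: RunnableConfigSketch = {"callbacks": []}
# Resolving the hints succeeds at runtime because no forward references remain.
print(get_type_hints(RunnableConfigSketch)["callbacks"])
```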

1. Allow run evaluators to return `{"results": [list of evaluation results]}` in the evaluator callback.

2. Allow run evaluators to pick the target run ID to provide feedback to.

(1) means you could do something like a function call that populates a full rubric in one go (not sure how reliable that is in general, though) rather than splitting it into separate LLM calls, which is cheaper and less code to write.

(2) means you can provide feedback to runs on subsequent calls. The immediate use case is adding an evaluator to a chat bot and assigning feedback to previous conversation turns.

There's a corresponding change in the SDK.
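A minimal sketch of how a callback might consume both return shapes, with plain dicts standing in for evaluation results. `normalize_evaluator_output` is hypothetical, and the `target_run_id` key is assumed to mirror the override described in (2).

```python
from typing import Any, Dict, List, Tuple

Result = Dict[str, Any]


def normalize_evaluator_output(raw: Result, evaluated_run_id: str) -> List[Tuple[str, Result]]:
    """Return (run_id_to_receive_feedback, result) pairs for one evaluator call."""
    # (1) A batch looks like {"results": [...]}; anything else is a single result.
    results = raw["results"] if "results" in raw else [raw]
    # (2) Each result may redirect its feedback to another run, e.g. an earlier chat turn.
    return [(r.get("target_run_id") or evaluated_run_id, r) for r in results]


single = {"key": "correctness", "score": 1}
batch = {
    "results": [
        {"key": "helpfulness", "score": 0.8},
        {"key": "coherence", "score": 1, "target_run_id": "previous-turn-run"},
    ]
}
print(normalize_evaluator_output(single, "current-run"))
print([run_id for run_id, _ in normalize_evaluator_output(batch, "current-run")])
# ['current-run', 'previous-turn-run']
```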