Description:

Updated the functions to use the new Clarifai Python SDK.

Enabled initialisation of the Clarifai class with a model URL.

Updated docs with examples of the new functions.

<!-- Thank you for contributing to LangChain!

Replace this entire comment with:

- **Description:** add gitlab url from env,

- **Issue:** no issue,

- **Dependencies:** no,

- **Tag maintainer:** for a quicker response, tag the relevant

maintainer (see below),

- **Twitter handle:** we announce bigger features on Twitter. If your PR

gets announced, and you'd like a mention, we'll gladly shout you out!

Please make sure your PR is passing linting and testing before

submitting. Run `make format`, `make lint` and `make test` to check this

locally.

See contribution guidelines for more information on how to write/run

tests, lint, etc:

https://github.com/langchain-ai/langchain/blob/master/.github/CONTRIBUTING.md

If you're adding a new integration, please include:

1. a test for the integration, preferably unit tests that do not rely on

network access,

2. an example notebook showing its use. It lives in `docs/extras`

directory.

If no one reviews your PR within a few days, please @-mention one of

@baskaryan, @eyurtsev, @hwchase17.

-->

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

- **Description:** Added a notebook to illustrate how to use `text-embeddings-inference` from Hugging Face. As `HuggingFaceHubEmbeddings` was using a deprecated client, I took the opportunity in this PR to update that too.

- **Issue:** #13286

- **Dependencies**: None

- **Tag maintainer:** @baskaryan

### Description

Fixed 3 doc issues:

1. `ConfigurableField` needs to be imported in `docs/docs/expression_language/how_to/configure.ipynb`

2. Use `error` instead of `RateLimitError()` in `docs/docs/expression_language/how_to/fallbacks.ipynb`

3. I think it would be better to output the fixed JSON data (when I first looked at this example I didn't understand its purpose, and only realized it later):

<img width="1219" alt="Screenshot 2023-12-05 at 10 34 13 PM"

src="https://github.com/langchain-ai/langchain/assets/10000925/7623ba13-7b56-4964-8c98-b7430fabc6de">

- **Description:** Adapt JinaEmbeddings to run with the new Jina AI

Embedding platform

- **Twitter handle:** https://twitter.com/JinaAI_

---------

Co-authored-by: Joan Fontanals Martinez <joan.fontanals.martinez@jina.ai>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

- **Description:**

Reference library azure-search-documents has been adapted in version

11.4.0:

1. Notebook explaining Azure AI Search updated with most recent info

2. HnswVectorSearchAlgorithmConfiguration --> HnswAlgorithmConfiguration

3. PrioritizedFields(prioritized_content_fields) -->

SemanticPrioritizedFields(content_fields)

4. SemanticSettings --> SemanticSearch

5. VectorSearch(algorithm_configurations) -->

VectorSearch(configurations)

These changes are now reflected in LangChain: its default vector search config is compatible with the officially released library from Azure.

- **Issue:**

Issue creating a new index (due to wrong class used for default vector

search configuration) if using latest version of azure-search-documents

with current langchain version

- **Dependencies:** azure-search-documents>=11.4.0,

- **Tag maintainer:** ,

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

The GitHub utilities are fantastic, so I'm adding support for deeper interaction with pull requests. Agents should read "regular" comments and review comments, and the content of PR files (with summarization or `ctags` abbreviations).

Progress:

- [x] Add functions to read pull requests and the full content of

modified files.

- [x] Function to use GitHub's built-in code / issues search.

Out of scope:

- Smarter summarization of file contents of large pull requests (`tree`

output, or ctags).

- Smarter functions to checkout PRs and edit the files incrementally

before bulk committing all changes.

- Docs example for creating two agents:
  - One watches issues: For every new issue, open a PR with your best attempt at fixing it.
  - The other watches PRs: For every new PR && every new comment on a PR, check the status and try to finish the job.


---------

Co-authored-by: Erick Friis <erick@langchain.dev>

The `/docs/integrations/toolkits/vectorstore` page is not an integration page. A better place for it is `/docs/modules/agents/how_to/`.

- Moved the file

- Rerouted the page URL

Allow users to pass a generic `BaseStore[str, bytes]` to

MultiVectorRetriever, removing the need to use the `create_kv_docstore`

method. This encoding will now happen internally.
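As a rough illustration of what "encoding internally" could look like, here is a minimal sketch that wraps a plain `str -> bytes` store and serializes documents on the way in and out. The class names, the JSON encoding, and the `mset`/`mget` method names are assumptions made for this example; they are not taken from the actual implementation.

```python
import json
from typing import Any, Dict, Iterable, List, Optional, Tuple


class InMemoryByteStore:
    """Hypothetical stand-in for a generic str -> bytes key-value store."""

    def __init__(self) -> None:
        self._data: Dict[str, bytes] = {}

    def mset(self, pairs: Iterable[Tuple[str, bytes]]) -> None:
        for key, value in pairs:
            self._data[key] = value

    def mget(self, keys: Iterable[str]) -> List[Optional[bytes]]:
        return [self._data.get(key) for key in keys]


class EncodingDocStore:
    """Hypothetical wrapper that (de)serializes documents as JSON bytes."""

    def __init__(self, byte_store: InMemoryByteStore) -> None:
        self._store = byte_store

    def mset(self, pairs: Iterable[Tuple[str, Dict[str, Any]]]) -> None:
        # Encode each document to bytes before handing it to the byte store.
        self._store.mset(
            (key, json.dumps(doc).encode("utf-8")) for key, doc in pairs
        )

    def mget(self, keys: Iterable[str]) -> List[Optional[Dict[str, Any]]]:
        # Decode stored bytes back into documents; missing keys stay None.
        return [
            None if raw is None else json.loads(raw.decode("utf-8"))
            for raw in self._store.mget(keys)
        ]


byte_store = InMemoryByteStore()
docstore = EncodingDocStore(byte_store)
docstore.mset([("doc-1", {"page_content": "hello", "metadata": {"source": "a.txt"}})])
loaded = docstore.mget(["doc-1", "missing"])
print(loaded)
```

With a wrapper like this, the caller only ever supplies the byte store; the encoding step is hidden from them.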

@rlancemartin @eyurtsev

---------

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

Switches to a more maintained solution for building ipynb -> md files

(`quarto`)

Also bumps us down to python3.8 because it's significantly faster in the

vercel build step. Uses default openssl version instead of upgrading as

well.

**Description:**

Adds the document loader for [Couchbase](http://couchbase.com/), a

distributed NoSQL database.

**Dependencies:**

Added the Couchbase SDK as an optional dependency.

**Twitter handle:** nithishr

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** This PR integrates a Steam API tool that recommends Steam games based on a user's Steam profile and provides information on games based on user-provided queries.

- **Issue:** the issue # our PR implements:

https://github.com/langchain-ai/langchain/issues/12120

- **Dependencies:** python-steam-api library, steamspypi library and

decouple library

- **Tag maintainer:** @baskaryan, @hwchase17

- **Twitter handle:** N/A

Hello langchain Maintainers,

We are a team of 4 University of Toronto students contributing to

langchain as part of our course [CSCD01 (link to course

page)](https://cscd01.com/work/open-source-project). We hope our changes

help the community. We have run make format, make lint and make test

locally before submitting the PR. To our knowledge, our changes do not

introduce any new errors.

Our PR integrates the python-steam-api, steamspypi and decouple

packages. We have added integration tests to test our python API

integration into langchain and an example notebook is also provided.

Our amazing team that contributed to this PR: @JohnY2002, @shenceyang,

@andrewqian2001 and @muntaqamahmood

Thank you in advance to all the maintainers for reviewing our PR!

---------

Co-authored-by: Shence <ysc1412799032@163.com>

Co-authored-by: JohnY2002 <johnyuan0526@gmail.com>

Co-authored-by: Andrew Qian <andrewqian2001@gmail.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

Co-authored-by: JohnY <94477598+JohnY2002@users.noreply.github.com>

### Description

Starting from [openai version

1.0.0](17ac677995 (module-level-client)),

the camel case form of `openai.ChatCompletion` is no longer supported and has been changed to lowercase `openai.chat.completions`. In addition, the returned object only supports attribute access, not index access:

```python
import openai

# optional; defaults to `os.environ['OPENAI_API_KEY']`
openai.api_key = '...'

# all client options can be configured just like the `OpenAI` instantiation counterpart
openai.base_url = "https://..."
openai.default_headers = {"x-foo": "true"}

completion = openai.chat.completions.create(
    model="gpt-4",
    messages=[
        {
            "role": "user",
            "content": "How do I output all files in a directory using Python?",
        },
    ],
)
print(completion.choices[0].message.content)
```

So I implemented a compatible adapter that supports both attribute

access and index access:

```python
In [1]: from langchain.adapters import openai as lc_openai
   ...: messages = [{"role": "user", "content": "hi"}]

In [2]: result = lc_openai.chat.completions.create(
   ...:     messages=messages, model="gpt-3.5-turbo", temperature=0
   ...: )

In [3]: result.choices[0].message
Out[3]: {'role': 'assistant', 'content': 'Hello! How can I assist you today?'}

In [4]: result["choices"][0]["message"]
Out[4]: {'role': 'assistant', 'content': 'Hello! How can I assist you today?'}

In [5]: result = await lc_openai.chat.completions.acreate(
   ...:     messages=messages, model="gpt-3.5-turbo", temperature=0
   ...: )

In [6]: result.choices[0].message
Out[6]: {'role': 'assistant', 'content': 'Hello! How can I assist you today?'}

In [7]: result["choices"][0]["message"]
Out[7]: {'role': 'assistant', 'content': 'Hello! How can I assist you today?'}

In [8]: for rs in lc_openai.chat.completions.create(
   ...:     messages=messages, model="gpt-3.5-turbo", temperature=0, stream=True
   ...: ):
   ...:     print(rs.choices[0].delta)
   ...:     print(rs["choices"][0]["delta"])
   ...:
{'role': 'assistant', 'content': ''}
{'role': 'assistant', 'content': ''}
{'content': 'Hello'}
{'content': 'Hello'}
{'content': '!'}
{'content': '!'}

In [20]: async for rs in await lc_openai.chat.completions.acreate(
    ...:     messages=messages, model="gpt-3.5-turbo", temperature=0, stream=True
    ...: ):
    ...:     print(rs.choices[0].delta)
    ...:     print(rs["choices"][0]["delta"])
    ...:
{'role': 'assistant', 'content': ''}
{'role': 'assistant', 'content': ''}
{'content': 'Hello'}
{'content': 'Hello'}
{'content': '!'}
{'content': '!'}
...
```
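For readers curious how one object can support both access styles, here is a minimal sketch of the general idea: a `dict` subclass that also resolves keys as attributes and wraps nested values on access. `AttrDict` and `wrap` are hypothetical names used for illustration only; the adapter in this PR may be implemented differently.

```python
class AttrDict(dict):
    """Hypothetical helper: a dict whose keys can also be read as attributes."""

    def __getattr__(self, name):
        # Only called when normal attribute lookup fails, so dict methods
        # such as .items() keep working and take priority over keys.
        try:
            return self[name]
        except KeyError:
            raise AttributeError(name) from None

    def __getitem__(self, key):
        # Wrap nested containers lazily so chained access works at any depth.
        return wrap(super().__getitem__(key))


def wrap(value):
    """Wrap dicts (and dicts inside lists) so both access styles work."""
    if isinstance(value, AttrDict):
        return value
    if isinstance(value, dict):
        return AttrDict(value)
    if isinstance(value, list):
        return [wrap(item) for item in value]
    return value


result = wrap(
    {"choices": [{"message": {"role": "assistant", "content": "Hello!"}}]}
)
print(result.choices[0].message.content)
print(result["choices"][0]["message"]["content"])
```

One trade-off of this approach is that keys which collide with `dict` method names (for example `items`) are only reachable through index access.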

### Twitter handle

[lin_bob57617](https://twitter.com/lin_bob57617)

Depends on #13699. Updates the existing mlflow and databricks examples.

---------

Co-authored-by: Ben Wilson <39283302+BenWilson2@users.noreply.github.com>

The `AWS` platform page was missing many integrations.

- added the missing integration references to the `AWS` platform page

- added/updated descriptions and links in the referenced notebooks

- renamed two notebook files whose file names did not match their page titles, which produced an unordered ToC

- rerouted the URLs for the renamed files

- fixed the `amazon_textract` notebook: removed failed cell outputs

Hi,

I made some code changes on the Hologres vector store to improve the

data insertion performance.

This version of the code also uses the `hologres-vector` library, which is easier for us to update and performs better.

The code has passed the format/lint/spell check. I have run the unit

test for Hologres connecting to my own database.

Please check this PR again and tell me if anything needs to change.

Best,

Changgeng,

Developer @ Alibaba Cloud

Co-authored-by: Changgeng Zhao <zhaochanggeng.zcg@alibaba-inc.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

`Hugging Face` is definitely a platform. It includes many integrations

for many modules (LLM, Embedding, DocumentLoader, Tool)

So, a doc page was added that defines Hugging Face as a platform.

- **Description:**

This PR introduces the Slack toolkit to LangChain, which allows users to

read and write to Slack using the Slack API. Specifically, we've added

the following tools.

1. get_channel: Provides a summary of all the channels in a workspace.

2. get_message: Gets the message history of a channel.

3. send_message: Sends a message to a channel.

4. schedule_message: Sends a message to a channel at a specific time and

date.

- **Issue:** This pull request addresses [Add Slack Toolkit

#11747](https://github.com/langchain-ai/langchain/issues/11747)

- **Dependencies:** the `slack_sdk` package

Note: For this toolkit to function you will need to add a Slack app to

your workspace. Additional info can be found

[here](https://slack.com/help/articles/202035138-Add-apps-to-your-Slack-workspace).

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Co-authored-by: ArianneLavada <ariannelavada@gmail.com>

Co-authored-by: ArianneLavada <84357335+ArianneLavada@users.noreply.github.com>

Co-authored-by: ariannelavada@gmail.com <you@example.com>

- **Description:** As described in the issue below, I've modified the Python code in the https://python.langchain.com/docs/use_cases/summarization notebook so that it performs well.

I also switched the OpenAI model to the latest version, because it seems to be a bit more responsive:

`gpt-3.5-turbo-16k --> gpt-3.5-turbo-1106`

- **Issue:** #14066

### Description

The `RateLimitError` initialization method changed in openai v1, so the usage of `patch` needs to change accordingly.

### Twitter handle

[lin_bob57617](https://twitter.com/lin_bob57617)

This PR adds an "Azure AI data" document loader, which allows Azure AI

users to load their registered data assets as a document object in

langchain.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Change instances of RunnableMap to RunnableParallel,

as that should be the one used going forward. This makes it consistent

across the codebase.

### Description:

Doc addition for LCEL introduction. Adds a more basic starter guide for

using LCEL.

---------

Co-authored-by: Alex Kira <akira@Alexs-MBP.local.tld>

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** A small change to the ErnieChatBot class description, suggesting that users switch to a more suitable class

- **Issue:** none,

- **Dependencies:** none,

- **Tag maintainer:** @baskaryan ,

- **Twitter handle:** none

### Description

If `example` in a Message is `False`, it is no longer displayed. This PR updates the output in this document accordingly.

```python

In [22]: m = HumanMessage(content="Text")

In [23]: m

Out[23]: HumanMessage(content='Text')

In [24]: m = HumanMessage(content="Text", example=True)

In [25]: m

Out[25]: HumanMessage(content='Text', example=True)

```

### Twitter handle

[lin_bob57617](https://twitter.com/lin_bob57617)

- **Description:** Touch up of the documentation page for Metaphor

Search Tool integration. Removes documentation for old built-in tool

wrapper.

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

CC @baskaryan @hwchase17 @jmorganca

Having a bit of trouble importing `langchain_experimental` from a

notebook, will figure it out tomorrow

~Ah and also is blocked by #13226~

---------

Co-authored-by: Lance Martin <lance@langchain.dev>

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:**

Added support for a Pandas DataFrame OutputParser with format

instructions, along with unit tests and a demo notebook. Namely, we've

added the ability to request data from a DataFrame, have the LLM parse

the request, and then use that request to retrieve a well-formatted

response.

Within LangChain, it seamlessly integrates with language models like

OpenAI's `text-davinci-003`, facilitating streamlined interaction using

the format instructions (just like the other output parsers).

This parser structures its requests as

`<operation/column/row>[<optional_array_params>]`. The instructions

detail permissible operations, valid columns, and array formats,

ensuring clarity and adherence to the required format.

For example:

- When the LLM receives the input: "Retrieve the mean of `num_legs` from

rows 1 to 3."

- The provided format instructions guide the LLM to structure the

request as: "mean:num_legs[1..3]".

The parser processes this formatted request, leveraging the LLM's

understanding to extract the mean of `num_legs` from rows 1 to 3 within

the Pandas DataFrame.

This integration allows users to communicate requests naturally, with

the LLM transforming these instructions into structured commands

understood by the `PandasDataFrameOutputParser`. The format instructions

act as a bridge between natural language queries and precise DataFrame

operations, optimizing communication and data retrieval.
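To make the request format concrete, here is a minimal sketch of parsing and executing a request such as `mean:num_legs[1..3]`. It uses a plain dict of lists rather than a real DataFrame, supports only `mean`, and treats the row range as inclusive on both ends; all of these are assumptions for illustration, and `parse_request` and `run_request` are hypothetical names, not the API of `PandasDataFrameOutputParser`.

```python
import re
from statistics import mean

# Hypothetical grammar inferred from the example "mean:num_legs[1..3]".
REQUEST_RE = re.compile(
    r"^(?P<op>\w+):(?P<column>\w+)(?:\[(?P<start>\d+)\.\.(?P<end>\d+)\])?$"
)


def parse_request(request):
    """Split a request into (operation, column, optional (start, end) rows)."""
    match = REQUEST_RE.match(request.strip())
    if match is None:
        raise ValueError(f"Malformed request: {request!r}")
    rows = None
    if match["start"] is not None:
        rows = (int(match["start"]), int(match["end"]))
    return match["op"], match["column"], rows


def run_request(table, request):
    """Execute a parsed request against a dict-of-lists table."""
    op, column, rows = parse_request(request)
    if column not in table:
        raise ValueError(f"Unknown column: {column!r}")
    values = table[column]
    if rows is not None:
        start, end = rows
        values = values[start : end + 1]  # assumption: range is inclusive
    if op == "mean":
        return mean(values)
    raise ValueError(f"Unsupported operation: {op!r}")


table = {"num_legs": [2, 4, 8, 0], "num_wings": [2, 0, 0, 0]}
parsed = parse_request("mean:num_legs[1..3]")
result = run_request(table, "mean:num_legs[1..3]")
print(parsed, result)
```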

**Issue:**

- https://github.com/langchain-ai/langchain/issues/11532

**Dependencies:**

No additional dependencies :)

**Tag maintainer:**

@baskaryan

**Twitter handle:**

No need. :)

---------

Co-authored-by: Wasee Alam <waseealam@protonmail.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

**Description:**

Previously, only insecure gRPC connections to Vald were supported; secure connections are now supported as well.

In addition, gRPC metadata can be added to Vald requests to enable authentication with a token.


grammar correction


Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

# Description

This PR implements Self-Query Retriever for MongoDB Atlas vector store.

I've implemented the comparators and operators that are supported by

MongoDB Atlas vector store according to the section titled "Atlas Vector

Search Pre-Filter" from

https://www.mongodb.com/docs/atlas/atlas-vector-search/vector-search-stage/.

Namely:

```
allowed_comparators = [
    Comparator.EQ,
    Comparator.NE,
    Comparator.GT,
    Comparator.GTE,
    Comparator.LT,
    Comparator.LTE,
    Comparator.IN,
    Comparator.NIN,
]

"""Subset of allowed logical operators."""
allowed_operators = [
    Operator.AND,
    Operator.OR
]
```

Translations from comparators/operators to MongoDB Atlas filter operators (you can find the syntax in the "Atlas Vector Search Pre-Filter" section of the previous link) are done using the following dictionary:

```
map_dict = {
    Operator.AND: "$and",
    Operator.OR: "$or",
    Comparator.EQ: "$eq",
    Comparator.NE: "$ne",
    Comparator.GTE: "$gte",
    Comparator.LTE: "$lte",
    Comparator.LT: "$lt",
    Comparator.GT: "$gt",
    Comparator.IN: "$in",
    Comparator.NIN: "$nin",
}
```

In `visit_structured_query()` the filters are passed as "pre_filter" and not "filter" as in the MongoDB link above, since langchain's implementation of the MongoDB Atlas vector store (libs\langchain\langchain\vectorstores\mongodb_atlas.py) sets the "filter" key to the value of the "pre_filter" argument in `_similarity_search_with_score()`:

```

params["filter"] = pre_filter

```
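As an illustration of how such a mapping turns a structured filter into a MongoDB-style filter document, here is a minimal, self-contained sketch. The `Comparator` and `Operator` enums below are local stand-ins, and `comparison` and `operation` are hypothetical helper names; the translator added in this PR may structure its code differently.

```python
from enum import Enum


class Comparator(str, Enum):
    """Local stand-in for the comparators listed above."""
    EQ = "eq"
    NE = "ne"
    GT = "gt"
    GTE = "gte"
    LT = "lt"
    LTE = "lte"
    IN = "in"
    NIN = "nin"


class Operator(str, Enum):
    """Local stand-in for the logical operators listed above."""
    AND = "and"
    OR = "or"


MAP_DICT = {
    Operator.AND: "$and",
    Operator.OR: "$or",
    Comparator.EQ: "$eq",
    Comparator.NE: "$ne",
    Comparator.GTE: "$gte",
    Comparator.LTE: "$lte",
    Comparator.LT: "$lt",
    Comparator.GT: "$gt",
    Comparator.IN: "$in",
    Comparator.NIN: "$nin",
}


def comparison(attribute, comparator, value):
    """Build a single-field condition such as {"year": {"$gte": 2000}}."""
    return {attribute: {MAP_DICT[comparator]: value}}


def operation(operator, *arguments):
    """Combine conditions under a logical operator such as "$and"."""
    return {MAP_DICT[operator]: list(arguments)}


pre_filter = operation(
    Operator.AND,
    comparison("year", Comparator.GTE, 2000),
    comparison("genre", Comparator.IN, ["drama", "comedy"]),
)
print(pre_filter)
```

The resulting dictionary is the kind of value that would be passed as the `pre_filter` argument described above.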

Test cases and documentation have also been added.

# Issue

#11616

# Dependencies

No new dependencies have been added.

# Documentation

I have created the notebook mongodb_atlas_self_query.ipynb outlining the

steps to get the self-query mechanism working.

I worked closely with [@Farhan-Faisal](https://github.com/Farhan-Faisal)

on this PR.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Updated the documentation for the Dropbox loader and made the messages more verbose when loading PDF files, since people were getting confused

- **Issue:** #13952

- **Tag maintainer:** @baskaryan, @eyurtsev, @hwchase17,

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

- **Description:** Added a tool called RedditSearchRun and an

accompanying API wrapper, which searches Reddit for posts with support

for time filtering, post sorting, query string and subreddit filtering.

- **Issue:** #13891

- **Dependencies:** `praw` module is used to search Reddit

- **Tag maintainer:** @baskaryan , and any of the other maintainers if

needed

- **Twitter handle:** None.

Hello,

This is our first PR and we hope that our changes will be helpful to the

community. We have run `make format`, `make lint` and `make test`

locally before submitting the PR. To our knowledge, our changes do not

introduce any new errors.

Our PR integrates the `praw` package which is already used by

RedditPostsLoader in LangChain. Nonetheless, we have added integration

tests and edited unit tests to test our changes. An example notebook is

also provided. These changes were put together by me, @Anika2000,

@CharlesXu123, and @Jeremy-Cheng-stack

Thank you in advance to the maintainers for their time.

---------

Co-authored-by: What-Is-A-Username <49571870+What-Is-A-Username@users.noreply.github.com>

Co-authored-by: Anika2000 <anika.sultana@mail.utoronto.ca>

Co-authored-by: Jeremy Cheng <81793294+Jeremy-Cheng-stack@users.noreply.github.com>

Co-authored-by: Harrison Chase <hw.chase.17@gmail.com>

- **Description:** Added some of the endpoints supported by serpapi that are not yet supported in langchain, such as google trends, google finance, google jobs, and google lens

- **Issue:** [Add support for many of the querying endpoints with

serpapi #11811](https://github.com/langchain-ai/langchain/issues/11811)

---------

Co-authored-by: zushenglu <58179949+zushenglu@users.noreply.github.com>

Co-authored-by: Erick Friis <erick@langchain.dev>

Co-authored-by: Ian Xu <ian.xu@mail.utoronto.ca>

Co-authored-by: zushenglu <zushenglu1809@gmail.com>

Co-authored-by: KevinT928 <96837880+KevinT928@users.noreply.github.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Volc Engine MaaS serves as an enterprise-grade,

large-model service platform designed for developers. You can visit its

homepage at https://www.volcengine.com/docs/82379/1099455 for details.

This change helps developers integrate quickly with the platform.

- **Issue:** None

- **Dependencies:** volcengine

- **Tag maintainer:** @baskaryan

- **Twitter handle:** @he1v3tica

---------

Co-authored-by: lvzhong <lvzhong@bytedance.com>

Instead of using JSON-like syntax to describe node and relationship properties, we changed to a shorter and more concise schema description.

Old:

```

Node properties are the following:

[{'properties': [{'property': 'name', 'type': 'STRING'}], 'labels': 'Movie'}, {'properties': [{'property': 'name', 'type': 'STRING'}], 'labels': 'Actor'}]

Relationship properties are the following:

[]

The relationships are the following:

['(:Actor)-[:ACTED_IN]->(:Movie)']

```

New:

```

Node properties are the following:

Movie {name: STRING},Actor {name: STRING}

Relationship properties are the following:

The relationships are the following:

(:Actor)-[:ACTED_IN]->(:Movie)

```
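The conversion from the old representation to the new one can be sketched roughly as follows. The input shape is copied from the "Old" example above; `format_node_properties` is a hypothetical name for illustration and is not necessarily how the graph wrapper implements it.

```python
def format_node_properties(nodes):
    """Render [{'labels': ..., 'properties': [...]}] as 'Label {prop: TYPE}'."""
    parts = []
    for node in nodes:
        props = ", ".join(
            f"{prop['property']}: {prop['type']}" for prop in node["properties"]
        )
        # Doubled braces emit literal { and } around the property list.
        parts.append(f"{node['labels']} {{{props}}}")
    return ",".join(parts)


old_nodes = [
    {"properties": [{"property": "name", "type": "STRING"}], "labels": "Movie"},
    {"properties": [{"property": "name", "type": "STRING"}], "labels": "Actor"},
]
new_schema = format_node_properties(old_nodes)
print(new_schema)
```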

Implements

[#12115](https://github.com/langchain-ai/langchain/issues/12115)

Who can review?

@baskaryan , @eyurtsev , @hwchase17

Integrated Stack Exchange API into Langchain, enabling access to diverse

communities within the platform. This addition enhances Langchain's

capabilities by allowing users to query Stack Exchange for specialized

information and engage in discussions. The integration provides seamless

interaction with Stack Exchange content, offering content from varied

knowledge repositories.

A notebook example and test cases were included to demonstrate the

functionality and reliability of this integration.

- Add StackExchange as a tool.

- Add unit test for the StackExchange wrapper and tool.

- Add documentation for the StackExchange wrapper and tool.

If you have time, could you please review the code and provide any feedback as necessary? My team welcomes any suggestions.

---------

Co-authored-by: Yuval Kamani <yuvalkamani@gmail.com>

Co-authored-by: Aryan Thakur <aryanthakur@Aryans-MacBook-Pro.local>

Co-authored-by: Manas1818 <79381912+manas1818@users.noreply.github.com>

Co-authored-by: aryan-thakur <61063777+aryan-thakur@users.noreply.github.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>


Small fix to the _summarization_ example: `reduce_template` should use the `{docs}` variable.

The bug was likely introduced because the code that follows suggests using `hub.pull("rlm/map-prompt")` instead of the defined prompt.

### Description:

Hey 👋🏽 this is a small docs example fix. Hoping it helps future developers who are working with Langchain.

### Problem:

Take a look at the original example code. It indexes `dialogue_turn[0]` as if each turn were a tuple, which fails when the chat history holds message objects instead.

Original code:

```python
def _format_chat_history(chat_history: List[Tuple]) -> str:
    buffer = ""
    for dialogue_turn in chat_history:
        human = "Human: " + dialogue_turn[0]
        ai = "Assistant: " + dialogue_turn[1]
        buffer += "\n" + "\n".join([human, ai])
    return buffer
```

In the original code you were getting this error:

```bash

human = "Human: " + dialogue_turn[0].content

~~~~~~~~~~~~~^^^

TypeError: 'HumanMessage' object is not subscriptable

```

### Solution:

The fix is to loop over the chat history, check whether each message is a human or AI message, and add it to the buffer.
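A minimal sketch of that approach is shown here. The `HumanMessage` and `AIMessage` classes are local stand-ins defined only so the example runs on its own; the actual docs example uses LangChain's message classes, and its exact code may differ.

```python
from dataclasses import dataclass
from typing import List, Union


@dataclass
class HumanMessage:
    """Local stand-in for a human chat message."""
    content: str


@dataclass
class AIMessage:
    """Local stand-in for an AI chat message."""
    content: str


def format_chat_history(chat_history: List[Union[HumanMessage, AIMessage]]) -> str:
    buffer = ""
    for message in chat_history:
        # Pick the speaker label from the message type instead of indexing.
        if isinstance(message, HumanMessage):
            buffer += "\nHuman: " + message.content
        elif isinstance(message, AIMessage):
            buffer += "\nAssistant: " + message.content
    return buffer


history = [HumanMessage("hi"), AIMessage("hello")]
formatted = format_chat_history(history)
print(formatted)
```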

The `integrations/vectorstores/matchingengine.ipynb` example has the "Google Vertex AI Vector Search" title. This places the title in the wrong position in the ToC (which is sorted by file name).

- Renamed `integrations/vectorstores/matchingengine.ipynb` to `integrations/vectorstores/google_vertex_ai_vector_search.ipynb`.

- Updated the corresponding comment in the docstring

- Rerouted the old URL to the new URL

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

Addressed this issue with the top menu: it takes up too much space. On a small screen, the top menu items wrap onto two lines and become unreadable.

Another issue is with several top menu items: "Chat our docs" and "Also by LangChain". They consist of several words, which also hurts readability. Top menu items should be one word long.

Updates:

- "Chat our docs" -> "Chat" (the meaning is clear after clicking/opening the item)

- "Also by LangChain" -> "🦜️🔗"

- "🦜️🔗" moved before the "Chat" item. This new item is partially copied from the first item on the left, "🦜️🔗 LangChain". This design, with two 🦜️🔗 elements, visually splits the top menu into two parts. The first item in each part holds the 🦜️🔗 symbols, and clicking the second 🦜️🔗 item opens the drop-down menu. So we get two visually similar parts: on the right side, the LangChain Docs (and doc-related items), and on the left side, other LangChain.ai (company) products/docs.

There are the following main changes in this PR:

1. Rewrite of the DocugamiLoader to not do any XML parsing of the DGML

format internally, and instead use the `dgml-utils` library we are

separately working on. This is a very lightweight dependency.

2. Added MMR search type as an option to multi-vector retriever, similar

to other retrievers. MMR is especially useful when using Docugami for

RAG since we deal with large sets of documents within which a few might

be duplicates and straight similarity based search doesn't give great

results in many cases.
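For context on why MMR helps with near-duplicate documents, here is a small, dependency-free sketch of maximal marginal relevance selection. It is a generic illustration of the technique, not the retriever code from this PR; the function names and the `lambda_mult` parameter name are chosen for this example.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def mmr(query, candidates, k, lambda_mult=0.5):
    """Return indices of up to k candidates, balancing relevance and diversity."""
    selected = []
    remaining = list(range(len(candidates)))
    while remaining and len(selected) < k:

        def score(i):
            relevance = cosine(query, candidates[i])
            # Penalize similarity to anything that was already picked.
            redundancy = max(
                (cosine(candidates[i], candidates[j]) for j in selected),
                default=0.0,
            )
            return lambda_mult * relevance - (1 - lambda_mult) * redundancy

        best = max(remaining, key=score)
        selected.append(best)
        remaining.remove(best)
    return selected


query = [1.0, 0.0]
# Candidates 0 and 1 are exact duplicates; candidate 2 is less similar.
candidates = [[0.9, 0.1], [0.9, 0.1], [0.7, -0.7]]
picked = mmr(query, candidates, k=2)
print(picked)
```

A plain similarity search would return both duplicates here, whereas the redundancy penalty makes the second pick the distinct document.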

We are @docugami on twitter, and I am @tjaffri

---------

Co-authored-by: Taqi Jaffri <tjaffri@docugami.com>

- **Description:** Adds a retriever implementation for [Knowledge Bases

for Amazon Bedrock](https://aws.amazon.com/bedrock/knowledge-bases/), a

new service announced at AWS re:Invent, shortly before this PR was

opened. This depends on the `bedrock-agent-runtime` service, which will

be included in a future version of `boto3` and of `botocore`. We will

open a follow-up PR documenting the minimum required versions of `boto3`

and `botocore` after that information is available.

- **Issue:** N/A

- **Dependencies:** `boto3>=1.33.2, botocore>=1.33.2`

- **Tag maintainer:** @baskaryan

- **Twitter handles:** `@pjain7` `@dead_letter_q`

This PR includes a documentation notebook under

`docs/docs/integrations/retrievers`, which I (@dlqqq) have verified

independently.

EDIT: `bedrock-agent-runtime` service is now included in

`boto3>=1.33.2`:

5cf793f493

---------

Co-authored-by: Piyush Jain <piyushjain@duck.com>

Co-authored-by: Erick Friis <erick@langchain.dev>

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** dead link replacement

- **Issue:** no open issue

**Note:**

Hi langchain team,

Sorry to open a PR for this concern but we realized that one of the

links present in the documentation booklet was broken 😄

- **Description:** Reduce image asset file size used in documentation by

running them via lossless image optimization

([tinypng](https://www.npmjs.com/package/tinypng-cli) was used in this

case). Images wider than 1916px (the maximum width of an image displayed

in documentation) were downsized.

- **Issue:** No issue is created for this, but the large image file

assets caused slow documentation load times

- **Dependencies:** No dependencies affected

- **Description:** Existing model used for Prompt Injection is quite

outdated, but we fine-tuned and open-sourced a new model based on the same

model deberta-v3-base from Microsoft -

[laiyer/deberta-v3-base-prompt-injection](https://huggingface.co/laiyer/deberta-v3-base-prompt-injection).

It supports more up-to-date injections and is less prone to

false-positives.

- **Dependencies:** No

- **Tag maintainer:** -

- **Twitter handle:** @alex_yaremchuk

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Current docs for adapters are in `Guides/Adapters`, which is not a

good place.

- moved Adapters into `Integrations/Components/Adapters/`

- simplified the OpenAI adapter notebook

- rerouted the old OpenAI adapter page URL to a new one.

**Description:**

This PR adds Databricks Vector Search as a new vector store in

LangChain.

- [x] Add `DatabricksVectorSearch` in `langchain/vectorstores/`

- [x] Unit tests

- [x] Add

[`databricks-vectorsearch`](https://pypi.org/project/databricks-vectorsearch/)

as a new optional dependency

We ran the following checks:

- `make format` passed ✅

- `make lint` failed but the failures were caused by other files

+ Files touched by this PR passed the linter ✅

- `make test` passed ✅

- `make coverage` failed but the failures were caused by other files.

Tests added by or related to this PR all passed

+ langchain/vectorstores/databricks_vector_search.py test coverage 94% ✅

- `make spell_check` passed ✅

The example notebook and updates to the [provider's documentation

page](https://github.com/langchain-ai/langchain/blob/master/docs/docs/integrations/providers/databricks.md)

will be added later in a separate PR.

**Dependencies:**

Optional dependency:

[`databricks-vectorsearch`](https://pypi.org/project/databricks-vectorsearch/)

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:** Added a retriever for the Outline API to ask

questions on knowledge base

- **Issue:** resolves #11814

- **Dependencies:** None

- **Tag maintainer:** @baskaryan

- **Description:**

I encountered an issue while running the existing sample code on the

page https://python.langchain.com/docs/modules/agents/how_to/agent_iter

in an environment with Pydantic 2.0 installed. The following error was

triggered:

```text

ValidationError Traceback (most recent call last)

<ipython-input-12-2ffff2c87e76> in <cell line: 43>()

41

42 tools = [

---> 43 Tool(

44 name="GetPrime",

45 func=get_prime,

2 frames

/usr/local/lib/python3.10/dist-packages/pydantic/v1/main.py in __init__(__pydantic_self__, **data)

339 values, fields_set, validation_error = validate_model(__pydantic_self__.__class__, data)

340 if validation_error:

--> 341 raise validation_error

342 try:

343 object_setattr(__pydantic_self__, '__dict__', values)

ValidationError: 1 validation error for Tool

args_schema

subclass of BaseModel expected (type=type_error.subclass; expected_class=BaseModel)

```

I have made modifications to the example code to ensure it functions

correctly in environments with Pydantic 2.0.

This PR provides idiomatic implementations for the exact-match and the

semantic LLM caches using Astra DB as backend through the database's

HTTP JSON API. These caches require the `astrapy` library as a dependency.

Comes with integration tests and example usage in the `llm_cache.ipynb`

in the docs.
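
As a rough sketch of what an exact-match LLM cache does, the example below keys stored responses on the prompt together with a string describing the LLM configuration. The class name is hypothetical and an in-memory dict stands in for the Astra DB collection; the real classes in this PR talk to the database through `astrapy`.

```python
import hashlib
from typing import Dict, Optional


class InMemoryExactMatchCache:
    """Hypothetical stand-in: a dict replaces the database collection."""

    def __init__(self) -> None:
        self._store: Dict[str, str] = {}

    @staticmethod
    def _key(prompt: str, llm_string: str) -> str:
        # Hash prompt and LLM configuration together so the same prompt
        # sent to a different model or settings never hits a stale entry.
        raw = f"{llm_string}\x00{prompt}".encode("utf-8")
        return hashlib.sha256(raw).hexdigest()

    def lookup(self, prompt: str, llm_string: str) -> Optional[str]:
        """Return the cached response, or None on a cache miss."""
        return self._store.get(self._key(prompt, llm_string))

    def update(self, prompt: str, llm_string: str, response: str) -> None:
        """Store a response for this exact prompt and configuration."""
        self._store[self._key(prompt, llm_string)] = response


cache = InMemoryExactMatchCache()
miss = cache.lookup("What is 2+2?", "model-a,temperature=0")
cache.update("What is 2+2?", "model-a,temperature=0", "4")
hit = cache.lookup("What is 2+2?", "model-a,temperature=0")
other_model = cache.lookup("What is 2+2?", "model-b,temperature=0")
```

A semantic cache differs in that the lookup compares prompt embeddings by similarity instead of requiring identical text.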

@baskaryan this is the Astra DB counterpart for the Cassandra classes

you merged some time ago, tagging you for your familiarity with the

topic. Thank you!

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

This PR adds a chat message history component that uses Astra DB for

persistence through the JSON API.

The `astrapy` package is required for this class to work.

I have added tests and a small notebook, and updated the relevant

references in the other docs pages.

(@rlancemartin this is the counterpart of the Cassandra equivalent class

you so helpfully reviewed back at the end of June)

Thank you!

- **Description:** Fix typo in MongoDB memory docs

- **Tag maintainer:** @eyurtsev


- **Description:** This change adds an agent to the Azure Cognitive

Services toolkit for identifying healthcare entities

- **Dependencies:** azure-ai-textanalytics (Optional)

---------

Co-authored-by: James Beck <James.Beck@sa.gov.au>

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:**

This commit adds embedchain retriever along with tests and docs.

Embedchain is a RAG framework to create data pipelines.

**Twitter handle:**

- [Taranjeet's twitter](https://twitter.com/taranjeetio) and

[Embedchain's twitter](https://twitter.com/embedchain)

**Reviewer**

@hwchase17

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:**

Enhance the functionality of YoutubeLoader to enable the translation of

available transcripts by refining the existing logic.

**Issue:**

Encountering a problem with YoutubeLoader (#13523) where the translation

feature is not functioning as expected.

Tag maintainers/contributors who might be interested:

@eyurtsev

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

## Update 2023-09-08

This PR now supports further models in addition to Llama-2 chat models.

See [this comment](#issuecomment-1668988543) for further details. The

title of this PR has been updated accordingly.

## Original PR description

This PR adds a generic `Llama2Chat` model, a wrapper for LLMs able to

serve Llama-2 chat models (like `LlamaCPP`,

`HuggingFaceTextGenInference`, ...). It implements `BaseChatModel`,

converts a list of chat messages into the [required Llama-2 chat prompt

format](https://huggingface.co/blog/llama2#how-to-prompt-llama-2) and

forwards the formatted prompt as `str` to the wrapped `LLM`. Usage

example:

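```python
# Import paths assumed from the LangChain release this PR targets;
# adjust them to your installed version.
from langchain.chains import LLMChain
from langchain.llms import HuggingFaceTextGenInference
from langchain.memory import ConversationBufferMemory
from langchain.prompts.chat import (
    ChatPromptTemplate,
    HumanMessagePromptTemplate,
    MessagesPlaceholder,
)
from langchain.schema import SystemMessage
from langchain_experimental.chat_models import Llama2Chat

# uses a locally hosted Llama2 chat model
llm = HuggingFaceTextGenInference(
    inference_server_url="http://127.0.0.1:8080/",
    max_new_tokens=512,
    top_k=50,
    temperature=0.1,
    repetition_penalty=1.03,
)

# Wrap llm to support Llama2 chat prompt format.
# Resulting model is a chat model
model = Llama2Chat(llm=llm)

messages = [
    SystemMessage(content="You are a helpful assistant."),
    MessagesPlaceholder(variable_name="chat_history"),
    HumanMessagePromptTemplate.from_template("{text}"),
]

prompt = ChatPromptTemplate.from_messages(messages)
memory = ConversationBufferMemory(memory_key="chat_history", return_messages=True)
chain = LLMChain(llm=model, prompt=prompt, memory=memory)

# use chat model in a conversation
# ...
```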

Also part of this PR are tests and a demo notebook.
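
To make the prompt conversion more concrete, here is a minimal sketch that builds a Llama-2 chat prompt string following the format described in the linked Hugging Face post. The function name `format_llama2_prompt` and its arguments are hypothetical, and this is not the `Llama2Chat` implementation itself.

```python
from typing import List, Tuple

B_INST, E_INST = "[INST]", "[/INST]"
B_SYS, E_SYS = "<<SYS>>\n", "\n<</SYS>>\n\n"


def format_llama2_prompt(
    system: str,
    turns: List[Tuple[str, str]],
    user: str,
) -> str:
    """Render a conversation as a single Llama-2 chat prompt string.

    system: system message (may be empty)
    turns:  completed (human, assistant) exchanges, oldest first
    user:   the latest human message awaiting a reply
    """
    parts: List[str] = []
    first = True
    for human, assistant in turns:
        # The system block is folded into the first human message only.
        content = B_SYS + system + E_SYS + human if first and system else human
        parts.append(
            f"<s>{B_INST} {content.strip()} {E_INST} {assistant.strip()} </s>"
        )
        first = False
    content = B_SYS + system + E_SYS + user if first and system else user
    parts.append(f"<s>{B_INST} {content.strip()} {E_INST}")
    return "".join(parts)


single = format_llama2_prompt("Be brief.", [], "Hi")
multi = format_llama2_prompt("", [("Hi", "Hello")], "Bye")
```

The wrapped LLM then receives this string as an ordinary completion prompt.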

- Tag maintainer: @hwchase17

- Twitter handle: `@mrt1nz`

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

The original notebook has the `faiss` title which is duplicated in

the `faiss.ipynb`. As a result, we have two `faiss` items in the

vectorstore ToC. And the first item breaks the searching order (it is

placed between `A...` items).

- I updated title to `Asynchronous Faiss`.

- Fixed titles for two notebooks. They were inconsistent with other

titles and clogged ToC.

- Added `Upstash` description and link

- Moved the authentication text up in the `Elasticsearch` nb, right

after package installation. It was at the end of the page, which was the

wrong place.

This PR brings a few minor improvements to the docs, namely class/method

docstrings and the demo notebook.

- A note on how to control concurrency levels to tune performance in

bulk inserts, both in the class docstring and the demo notebook;

- Slightly increased concurrency defaults after careful experimentation

(still on the conservative side even for clients running on

less-than-typical network/hardware specs)

- renamed the DB token variable to the standardized

`ASTRA_DB_APPLICATION_TOKEN` name (used elsewhere, e.g. in the Astra DB

docs)

- added a note and a reference (add_text docstring, demo notebook) on

allowed metadata field names.
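
As a generic illustration of the concurrency knob mentioned above, the sketch below splits items into batches and inserts them with a bounded thread pool. The function and parameter names (`insert_in_batches`, `batch_size`, `max_workers`) are hypothetical and do not reflect the actual `AstraDB` class signature.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, List, Sequence


def insert_in_batches(
    items: Sequence[int],
    insert_batch: Callable[[Sequence[int]], int],
    batch_size: int = 20,
    max_workers: int = 4,
) -> int:
    """Insert items in fixed-size batches using at most max_workers threads.

    insert_batch is any callable that writes one batch and returns how
    many items it stored. Returns the total number of stored items.
    """
    batches: List[Sequence[int]] = [
        items[i : i + batch_size] for i in range(0, len(items), batch_size)
    ]
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        counts = list(pool.map(insert_batch, batches))
    return sum(counts)


# len() stands in for a real network call that reports inserted rows.
total = insert_in_batches(list(range(45)), len, batch_size=20, max_workers=2)
```

Raising `max_workers` increases throughput until the network or the database becomes the bottleneck, which is why conservative defaults suit slower clients.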

Thank you!

The current `integrations/document_loaders/` sidebar has the

`example_data` item, which is a menu with a single item: "Notebook".

It is happening because the `integrations/document_loaders/` folder has

the `example_data/notebook.md` file that is used to autogenerate the

above menu item.

- removed an example_data/notebook.md file. Docusaurus doesn't have

simple ways to fix this problem (to exclude folders/files from an

autogenerated sidebar). Removing this file didn't break any existing

examples, so this fix is safe.

Updated several notebooks:

- fixed titles which are inconsistent or break the ToC sorting order.

- added missing source descriptions and links

- fixed formatting

- the `SemaDB` notebook was placed in an additional subfolder, which breaks

the vectorstore ToC. I moved the file up, removed this unnecessary

subfolder, and updated `vercel.json` with rerouting for the new URL

- Added SemaDB description and link

- improved text consistency

- Fixed the title of the notebook. It created an ugly ToC element as

`Activeloop DeepLake's DeepMemory + LangChain + ragas or how to get +27%

on RAG recall.`

- Added Activeloop description

- improved consistency in text

- fixed ToC (it was using HTML tags that break the left-side in-page ToC).

Now the in-page ToC works

- Fixed headers (there was more than one title)

- Removed the security token value. It was OK to have it, because it is a

temporary token, but automatic security scanners raise warnings about

it.

- Added `ClickUp` service description and link.

The `Integrations` site is hidden now.

I've added it into the `More` menu.

It is named `Integration Cards`; otherwise, it would be confused with the

`Integrations` menu.

---------

Co-authored-by: Erick Friis <erickfriis@gmail.com>

The new ruff version fixed the blocking bugs, and I was able to fairly

easily get us to a passing state: ruff fixed some issues on its own, I fixed

a handful by hand, and I added a list of narrowly-targeted exclusions

for files that are currently failing ruff rules that we probably should

look into eventually.

I went pretty lenient on the docs / cookbooks rules, allowing dead code

and such things. Perhaps in the future we may want to tighten the rules

further, but this is already a good set of checks that found real issues

and will prevent them going forward.

Hey @rlancemartin, @eyurtsev ,

I did some minimal changes to the `ElasticVectorSearch` client so that

it plays better with existing ES indices.

Main changes are as follows:

1. You can pass the dense vector field name into `_default_script_query`

2. You can pass a custom script query implementation and the respective

parameters to `similarity_search_with_score`

3. You can pass functions for building page content and metadata for the

resulting `Document`
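
To show what a configurable dense vector field could look like, here is a minimal sketch of a script-score query builder. The function name `build_script_query` and the `vector_field` parameter are assumptions for illustration, not the exact signature of `_default_script_query`.

```python
from typing import Any, Dict, List, Optional


def build_script_query(
    query_vector: List[float],
    filter_query: Optional[Dict[str, Any]] = None,
    vector_field: str = "vector",
) -> Dict[str, Any]:
    """Build an Elasticsearch script_score query using cosine similarity.

    vector_field names the dense_vector field in an existing index, so the
    index does not need to follow one fixed naming convention.
    """
    # Fall back to matching every document when no filter is supplied.
    base_query = filter_query if filter_query else {"match_all": {}}
    return {
        "script_score": {
            "query": base_query,
            "script": {
                # +1.0 keeps the score non-negative, as script_score requires.
                "source": (
                    f"cosineSimilarity(params.query_vector, '{vector_field}')"
                    " + 1.0"
                ),
                "params": {"query_vector": query_vector},
            },
        }
    }


es_query = build_script_query([0.1, 0.2], vector_field="embedding")
```

A caller with a custom index would pass its own field name here, or supply a different builder entirely when the scoring logic itself must change.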


- **Description:** Refine Weaviate tutorial and add an example for

Retrieval-Augmented Generation (RAG)

- **Issue:** (not applicable),

- **Dependencies:** none

- **Tag maintainer:** @baskaryan

- **Twitter handle:** @helloiamleonie

Co-authored-by: Leonie <leonie@Leonies-MBP-2.fritz.box>

On the [Defining Custom

Tools](https://python.langchain.com/docs/modules/agents/tools/custom_tools)

page, there's a 'Subclassing the BaseTool class' paragraph under the

'Completely New Tools - String Input and Output' header. Also there's

another 'Subclassing the BaseTool' paragraph under no header, which I

think may belong to the 'Custom Structured Tools' header.

Another thing is, there's a 'Using the tool decorator' and a 'Using the

decorator' paragraph, which I think belong under 'Completely New Tools -

String Input and Output' and 'Custom Structured Tools' respectively.

This PR moves those paragraphs to corresponding headers.

- **Description:** Changed the fleet_context documentation to use

`context.download_embeddings()` from the latest release from our

package. More details here:

https://github.com/fleet-ai/context/tree/main#api

- **Issue:** n/a

- **Dependencies:** n/a

- **Tag maintainer:** @baskaryan

- **Twitter handle:** @andrewthezhou

Added a Docusaurus Loader

Issue: #6353

I had to implement this for working with the Ionic documentation, and

wanted to open this up as a draft to get some guidance on building this

out further. I wasn't sure if having it be a light extension of the

SitemapLoader was in the spirit of a proper feature for the library --

but I'm grateful for the opportunities Langchain has given me and I'd

love to build this out properly for the sake of the community.

Any feedback welcome!

# Astra DB Vector store integration

- **Description:** This PR adds a `VectorStore` implementation for

DataStax Astra DB using its HTTP API

- **Issue:** (no related issue)

- **Dependencies:** A new required dependency is `astrapy` (`>=0.5.3`)

which was added to `pyproject.toml`, optional, as per guidelines

- **Tag maintainer:** I recently mentioned to @baskaryan this

integration was coming

- **Twitter handle:** `@rsprrs` if you want to mention me

This PR introduces the `AstraDB` vector store class, extensive

integration test coverage, a reworking of the documentation which

conflates Cassandra and Astra DB on a single "provider" page and a new,

completely reworked vector-store example notebook (common to the

Cassandra store, since parts of the flow are shared by the two APIs). I

also took care in ensuring docs (and redirects therein) are behaving

correctly.

All style, linting, typechecks and tests pass as far as the `AstraDB`

integration is concerned.

I could build the documentation and check it all right (but ran into

trouble with the `api_docs_build` makefile target which I could not

verify: `Error: Unable to import module

'plan_and_execute.agent_executor' with error: No module named

'langchain_experimental'` was the first of many similar errors)

Thank you for a review!

Stefano

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

- **Description:** Remove text "LangChain currently does not support"

which appears to be vestigial leftovers from a previous change.

- **Issue:** N/A

- **Dependencies:** N/A

- **Tag maintainer:** @baskaryan, @eyurtsev

- **Twitter handle:** thezanke

- **Description:** Noticed that the Hugging Face Pipeline documentation

was a bit out of date.

Updated with information about passing in a pipeline directly

(consistent with docstring) and a recent contribution of mine on adding

support for multi-gpu specifications with Accelerate in

21eeba075c

The line removed is not required as there are no other alternative

solutions above than that.


**Description**

Removed confusing sentence.

Not clear what "both" was referring to. The two required components

mentioned previously? The two methods listed below?

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

Zep now has the ability to search over chat history summaries. This PR

adds support for doing so. More here: https://blog.getzep.com/zep-v0-17/

@baskaryan @eyurtsev

This PR replaces broken links to end to end usecases

([/docs/use_cases](https://python.langchain.com/docs/use_cases)) with a

non-broken version

([/docs/use_cases/qa_structured/sql](https://python.langchain.com/docs/use_cases/qa_structured/sql)),

consistently with the "Use cases" navigation button at the top of the

page.

---------

Co-authored-by: Matvey Arye <mat@timescale.com>

Co-authored-by: Erick Friis <erick@langchain.dev>

- **Description:**

Corrected a specific link within the documentation.

- **Issue:**

#12490

- **Dependencies:**

- **Tag maintainer:**

- **Twitter handle:**

---------

Co-authored-by: Erick Friis <erick@langchain.dev>

Fixed a typo


* Restrict the chain to specific domains by default

* This is a breaking change, but it will fail loudly upon object

instantiation -- so there should be no silent errors for users

* Resolves CVE-2023-32786
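
The "fail loudly upon instantiation" behavior can be sketched as follows. The class name `DomainRestrictedClient` and the `allowed_domains` field are hypothetical; the point is only that omitting the allow-list raises immediately instead of silently permitting every host.

```python
from dataclasses import dataclass
from typing import Optional, Sequence
from urllib.parse import urlparse


@dataclass
class DomainRestrictedClient:
    """Hypothetical wrapper that refuses to exist without an allow-list."""

    allowed_domains: Optional[Sequence[str]] = None

    def __post_init__(self) -> None:
        # Breaking change by design: callers must opt in explicitly.
        if self.allowed_domains is None:
            raise ValueError(
                "allowed_domains must be set explicitly; "
                "pass an empty sequence to block all requests."
            )

    def is_allowed(self, url: str) -> bool:
        """True if the URL's host is an allowed domain or a subdomain of one."""
        host = urlparse(url).hostname or ""
        return any(
            host == domain or host.endswith("." + domain)
            for domain in self.allowed_domains or ()
        )


try:
    DomainRestrictedClient()
    raised = False
except ValueError:
    raised = True

client = DomainRestrictedClient(allowed_domains=["example.com"])
```

Matching on the parsed hostname, not on a substring of the URL, avoids accepting look-alike hosts such as `evilexample.com`.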

- **Description:** implement [quip](https://quip.com) loader

- **Issue:** https://github.com/langchain-ai/langchain/issues/10352

- **Dependencies:** No

- pass make format, make lint, make test

---------

Co-authored-by: Hao Fan <h_fan@apple.com>

Co-authored-by: Bagatur <baskaryan@gmail.com>


---------

Co-authored-by: Matvey Arye <mat@timescale.com>

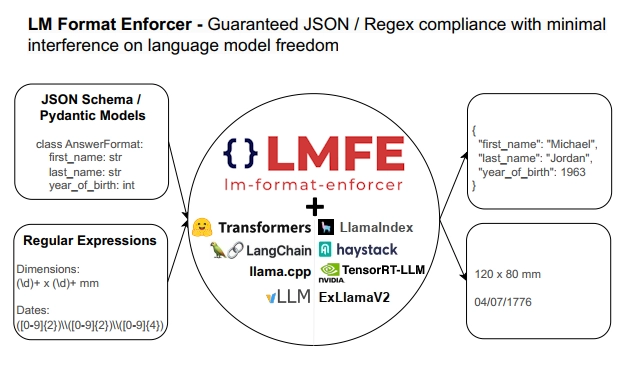

## Description

This PR adds support for

[lm-format-enforcer](https://github.com/noamgat/lm-format-enforcer) to

LangChain.

The library is similar to jsonformer / RELLM which are supported in

Langchain, but has several advantages such as

- Batching and Beam search support

- More complete JSON Schema support

- LLM has control over whitespace, improving quality

- Better runtime performance due to only calling the LLM's generate()

function once per generate() call.

The integration is loosely based on the jsonformer integration in terms

of project structure.

## Dependencies

No compile-time dependency was added, but if `lm-format-enforcer` is not

installed, a runtime error will occur when it is used.

## Tests

Due to the integration modifying the internal parameters of the

underlying huggingface transformer LLM, it is not possible to test

without building a real LM, which requires internet access. So, similar

to the jsonformer and RELLM integrations, the testing is via the

notebook.

## Twitter Handle

[@noamgat](https://twitter.com/noamgat)

Looking forward to hearing feedback!

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

- **Description:**

Before:

`

To install modules needed for the common LLM providers, run:

`

After:

`

To install modules needed for the common LLM providers, run the

following command. Please bear in mind that this command is exclusively

compatible with the `bash` shell:

`

> This is required for the user so that the user will know if this

command is compatible with `zsh` or not.

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

**Description:** The Textract PDF Loader now generates linearized output,

meaning it replicates the structure of the source document as closely

as possible based on the features passed into the call (e.g. LAYOUT,

FORMS, TABLES). With LAYOUT, reading order for multi-column documents and

identification of lists and figures are supported; with TABLES, it

generates the table structure as well. FORMS indicates "key: value"

pairs with colons.

- **Issue:** fixes #12068

- **Dependencies:** amazon-textract-textractor is added, which provides

the linearization

- **Tag maintainer:** @3coins

---------

Co-authored-by: Bagatur <baskaryan@gmail.com>

Following this tutorial about using OpenAI Embeddings with FAISS

https://python.langchain.com/docs/integrations/vectorstores/faiss

```python

from langchain.embeddings.openai import OpenAIEmbeddings

from langchain.text_splitter import CharacterTextSplitter

from langchain.vectorstores import FAISS

from langchain.document_loaders import TextLoader

loader = TextLoader("../../../extras/modules/state_of_the_union.txt")

documents = loader.load()

text_splitter = CharacterTextSplitter(chunk_size=1000, chunk_overlap=0)

docs = text_splitter.split_documents(documents)

embeddings = OpenAIEmbeddings()

```

This works fine

```python

db = FAISS.from_documents(docs, embeddings)

query = "What did the president say about Ketanji Brown Jackson"

docs = db.similarity_search(query)

```

But the async version does not

```python

db = await FAISS.afrom_documents(docs, embeddings) # NotImplementedError

query = "What did the president say about Ketanji Brown Jackson"

docs = await db.asimilarity_search(query) # this will use await asyncio.get_event_loop().run_in_executor under the hood and will not call OpenAIEmbeddings.aembed_query but call OpenAIEmbeddings.embed_query

```

So this PR adds async/await support for FAISS
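
The difference between the executor-based fallback and a native async path can be sketched with a stand-in embeddings class. `FakeEmbeddings` and both helper functions are hypothetical; they only record which method gets called.

```python
import asyncio
from typing import List


class FakeEmbeddings:
    """Stand-in for an embeddings client with sync and async methods."""

    def __init__(self) -> None:
        self.calls: List[str] = []

    def embed_query(self, text: str) -> List[float]:
        self.calls.append("embed_query")
        return [float(len(text))]

    async def aembed_query(self, text: str) -> List[float]:
        self.calls.append("aembed_query")
        await asyncio.sleep(0)  # placeholder for non-blocking network I/O
        return [float(len(text))]


async def embed_via_executor(emb: FakeEmbeddings, query: str) -> List[float]:
    # Fallback: run the blocking sync method in a worker thread.
    loop = asyncio.get_running_loop()
    return await loop.run_in_executor(None, emb.embed_query, query)


async def embed_natively(emb: FakeEmbeddings, query: str) -> List[float]:
    # Native path: await the async method, no thread involved.
    return await emb.aembed_query(query)


def demo() -> List[str]:
    emb = FakeEmbeddings()
    asyncio.run(embed_via_executor(emb, "hello"))
    asyncio.run(embed_natively(emb, "hello"))
    return emb.calls


calls = demo()
```

The executor path still occupies a thread for the whole request, whereas the native path lets the event loop serve other tasks while waiting.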

---------

Co-authored-by: Eugene Yurtsev <eyurtsev@gmail.com>

- Description: adding support to Activeloop's DeepMemory feature that

boosts recall up to 25%. Added Jupyter notebook showcasing the feature

and also made index params explicit.

- Twitter handle: will really appreciate if we could announce this on

twitter.

---------

Co-authored-by: adolkhan <adilkhan.sarsen@alumni.nu.edu.kz>

Bumps

[@babel/traverse](https://github.com/babel/babel/tree/HEAD/packages/babel-traverse)

from 7.22.8 to 7.23.2.

<details>

<summary>Release notes</summary>

<p><em>Sourced from <a

href="https://github.com/babel/babel/releases"><code>@babel/traverse</code>'s

releases</a>.</em></p>

<blockquote>

<h2>v7.23.2 (2023-10-11)</h2>

<p><strong>NOTE</strong>: This release also re-publishes

<code>@babel/core</code>, even if it does not appear in the linked

release commit.</p>

<p>Thanks <a

href="https://github.com/jimmydief"><code>@jimmydief</code></a> for

your first PR!</p>

<h4>🐛 Bug Fix</h4>

<ul>

<li><code>babel-traverse</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/16033">#16033</a>

Only evaluate own String/Number/Math methods (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-preset-typescript</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/16022">#16022</a>

Rewrite <code>.tsx</code> extension when using

<code>rewriteImportExtensions</code> (<a

href="https://github.com/jimmydief"><code>@jimmydief</code></a>)</li>

</ul>

</li>

<li><code>babel-helpers</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/16017">#16017</a>

Fix: fallback to typeof when toString is applied to incompatible object

(<a href="https://github.com/JLHwung"><code>@JLHwung</code></a>)</li>

</ul>

</li>

<li><code>babel-helpers</code>,

<code>babel-plugin-transform-modules-commonjs</code>,

<code>babel-runtime-corejs2</code>, <code>babel-runtime-corejs3</code>,

<code>babel-runtime</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/16025">#16025</a>

Avoid override mistake in namespace imports (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

</ul>

<h4>Committers: 5</h4>

<ul>

<li>Babel Bot (<a

href="https://github.com/babel-bot"><code>@babel-bot</code></a>)</li>

<li>Huáng Jùnliàng (<a

href="https://github.com/JLHwung"><code>@JLHwung</code></a>)</li>

<li>James Diefenderfer (<a

href="https://github.com/jimmydief"><code>@jimmydief</code></a>)</li>

<li>Nicolò Ribaudo (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

<li><a

href="https://github.com/liuxingbaoyu"><code>@liuxingbaoyu</code></a></li>

</ul>

<h2>v7.23.1 (2023-09-25)</h2>

<p>Re-publishing <code>@babel/helpers</code> due to a publishing error

in 7.23.0.</p>

<h2>v7.23.0 (2023-09-25)</h2>

<p>Thanks <a

href="https://github.com/lorenzoferre"><code>@lorenzoferre</code></a>

and <a

href="https://github.com/RajShukla1"><code>@RajShukla1</code></a> for

your first PRs!</p>

<h4>🚀 New Feature</h4>

<ul>

<li><code>babel-plugin-proposal-import-wasm-source</code>,

<code>babel-plugin-syntax-import-source</code>,

<code>babel-plugin-transform-dynamic-import</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15870">#15870</a>

Support transforming <code>import source</code> for wasm (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-helper-module-transforms</code>,

<code>babel-helpers</code>,

<code>babel-plugin-proposal-import-defer</code>,

<code>babel-plugin-syntax-import-defer</code>,

<code>babel-plugin-transform-modules-commonjs</code>,

<code>babel-runtime-corejs2</code>, <code>babel-runtime-corejs3</code>,

<code>babel-runtime</code>, <code>babel-standalone</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15878">#15878</a>

Implement <code>import defer</code> proposal transform support (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-generator</code>, <code>babel-parser</code>,

<code>babel-types</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15845">#15845</a>

Implement <code>import defer</code> parsing support (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

<li><a

href="https://redirect.github.com/babel/babel/pull/15829">#15829</a> Add

parsing support for the "source phase imports" proposal (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-generator</code>,

<code>babel-helper-module-transforms</code>, <code>babel-parser</code>,

<code>babel-plugin-transform-dynamic-import</code>,

<code>babel-plugin-transform-modules-amd</code>,

<code>babel-plugin-transform-modules-commonjs</code>,

<code>babel-plugin-transform-modules-systemjs</code>,

<code>babel-traverse</code>, <code>babel-types</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15682">#15682</a> Add

<code>createImportExpressions</code> parser option (<a

href="https://github.com/JLHwung"><code>@JLHwung</code></a>)</li>

</ul>

</li>

<li><code>babel-standalone</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15671">#15671</a>

Pass through nonce to the transformed script element (<a

href="https://github.com/JLHwung"><code>@JLHwung</code></a>)</li>

</ul>

</li>

<li><code>babel-helper-function-name</code>,

<code>babel-helper-member-expression-to-functions</code>,

<code>babel-helpers</code>, <code>babel-parser</code>,

<code>babel-plugin-proposal-destructuring-private</code>,

<code>babel-plugin-proposal-optional-chaining-assign</code>,

<code>babel-plugin-syntax-optional-chaining-assign</code>,

<code>babel-plugin-transform-destructuring</code>,

<code>babel-plugin-transform-optional-chaining</code>,

<code>babel-runtime-corejs2</code>, <code>babel-runtime-corejs3</code>,

<code>babel-runtime</code>, <code>babel-standalone</code>,

<code>babel-types</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15751">#15751</a> Add

support for optional chain in assignments (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-helpers</code>,

<code>babel-plugin-proposal-decorators</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15895">#15895</a>

Implement the "decorator metadata" proposal (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-traverse</code>, <code>babel-types</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15893">#15893</a> Add

<code>t.buildUndefinedNode</code> (<a

href="https://github.com/liuxingbaoyu"><code>@liuxingbaoyu</code></a>)</li>

</ul>

</li>

<li><code>babel-preset-typescript</code></li>

</ul>

</blockquote>

<p>... (truncated)</p>

</details>

<details>

<summary>Changelog</summary>

<p><em>Sourced from <a

href="https://github.com/babel/babel/blob/main/CHANGELOG.md"><code>@babel/traverse</code>'s

changelog</a>.</em></p>

<blockquote>

<h2>v7.23.2 (2023-10-11)</h2>

<h4>🐛 Bug Fix</h4>

<ul>

<li><code>babel-traverse</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/16033">#16033</a>

Only evaluate own String/Number/Math methods (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-preset-typescript</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/16022">#16022</a>

Rewrite <code>.tsx</code> extension when using

<code>rewriteImportExtensions</code> (<a

href="https://github.com/jimmydief"><code>@jimmydief</code></a>)</li>

</ul>

</li>

<li><code>babel-helpers</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/16017">#16017</a>

Fix: fallback to typeof when toString is applied to incompatible object

(<a href="https://github.com/JLHwung"><code>@JLHwung</code></a>)</li>

</ul>

</li>

<li><code>babel-helpers</code>,

<code>babel-plugin-transform-modules-commonjs</code>,

<code>babel-runtime-corejs2</code>, <code>babel-runtime-corejs3</code>,

<code>babel-runtime</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/16025">#16025</a>

Avoid override mistake in namespace imports (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

</ul>

<h2>v7.23.0 (2023-09-25)</h2>

<h4>🚀 New Feature</h4>

<ul>

<li><code>babel-plugin-proposal-import-wasm-source</code>,

<code>babel-plugin-syntax-import-source</code>,

<code>babel-plugin-transform-dynamic-import</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15870">#15870</a>

Support transforming <code>import source</code> for wasm (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-helper-module-transforms</code>,

<code>babel-helpers</code>,

<code>babel-plugin-proposal-import-defer</code>,

<code>babel-plugin-syntax-import-defer</code>,

<code>babel-plugin-transform-modules-commonjs</code>,

<code>babel-runtime-corejs2</code>, <code>babel-runtime-corejs3</code>,

<code>babel-runtime</code>, <code>babel-standalone</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15878">#15878</a>

Implement <code>import defer</code> proposal transform support (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-generator</code>, <code>babel-parser</code>,

<code>babel-types</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15845">#15845</a>

Implement <code>import defer</code> parsing support (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

<li><a

href="https://redirect.github.com/babel/babel/pull/15829">#15829</a> Add

parsing support for the "source phase imports" proposal (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-generator</code>,

<code>babel-helper-module-transforms</code>, <code>babel-parser</code>,

<code>babel-plugin-transform-dynamic-import</code>,

<code>babel-plugin-transform-modules-amd</code>,

<code>babel-plugin-transform-modules-commonjs</code>,

<code>babel-plugin-transform-modules-systemjs</code>,

<code>babel-traverse</code>, <code>babel-types</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15682">#15682</a> Add

<code>createImportExpressions</code> parser option (<a

href="https://github.com/JLHwung"><code>@JLHwung</code></a>)</li>

</ul>

</li>

<li><code>babel-standalone</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15671">#15671</a>

Pass through nonce to the transformed script element (<a

href="https://github.com/JLHwung"><code>@JLHwung</code></a>)</li>

</ul>

</li>

<li><code>babel-helper-function-name</code>,

<code>babel-helper-member-expression-to-functions</code>,

<code>babel-helpers</code>, <code>babel-parser</code>,

<code>babel-plugin-proposal-destructuring-private</code>,

<code>babel-plugin-proposal-optional-chaining-assign</code>,

<code>babel-plugin-syntax-optional-chaining-assign</code>,

<code>babel-plugin-transform-destructuring</code>,

<code>babel-plugin-transform-optional-chaining</code>,

<code>babel-runtime-corejs2</code>, <code>babel-runtime-corejs3</code>,

<code>babel-runtime</code>, <code>babel-standalone</code>,

<code>babel-types</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15751">#15751</a> Add

support for optional chain in assignments (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-helpers</code>,

<code>babel-plugin-proposal-decorators</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15895">#15895</a>

Implement the "decorator metadata" proposal (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-traverse</code>, <code>babel-types</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15893">#15893</a> Add

<code>t.buildUndefinedNode</code> (<a

href="https://github.com/liuxingbaoyu"><code>@liuxingbaoyu</code></a>)</li>

</ul>

</li>

<li><code>babel-preset-typescript</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15913">#15913</a> Add

<code>rewriteImportExtensions</code> option to TS preset (<a

href="https://github.com/nicolo-ribaudo"><code>@nicolo-ribaudo</code></a>)</li>

</ul>

</li>

<li><code>babel-parser</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15896">#15896</a>

Allow TS tuples to have both labeled and unlabeled elements (<a

href="https://github.com/yukukotani"><code>@yukukotani</code></a>)</li>

</ul>

</li>

</ul>

<h4>🐛 Bug Fix</h4>

<ul>

<li><code>babel-plugin-transform-block-scoping</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15962">#15962</a>

fix: <code>transform-block-scoping</code> captures the variables of the

method in the loop (<a

href="https://github.com/liuxingbaoyu"><code>@liuxingbaoyu</code></a>)</li>

</ul>

</li>

</ul>

<h4>💅 Polish</h4>

<ul>

<li><code>babel-traverse</code>

<ul>

<li><a

href="https://redirect.github.com/babel/babel/pull/15797">#15797</a>