mirror of https://github.com/hwchase17/langchain

synced 2024-11-06 03:20:49 +00:00

[Documents] Updated Figma docs and added example (#2172)

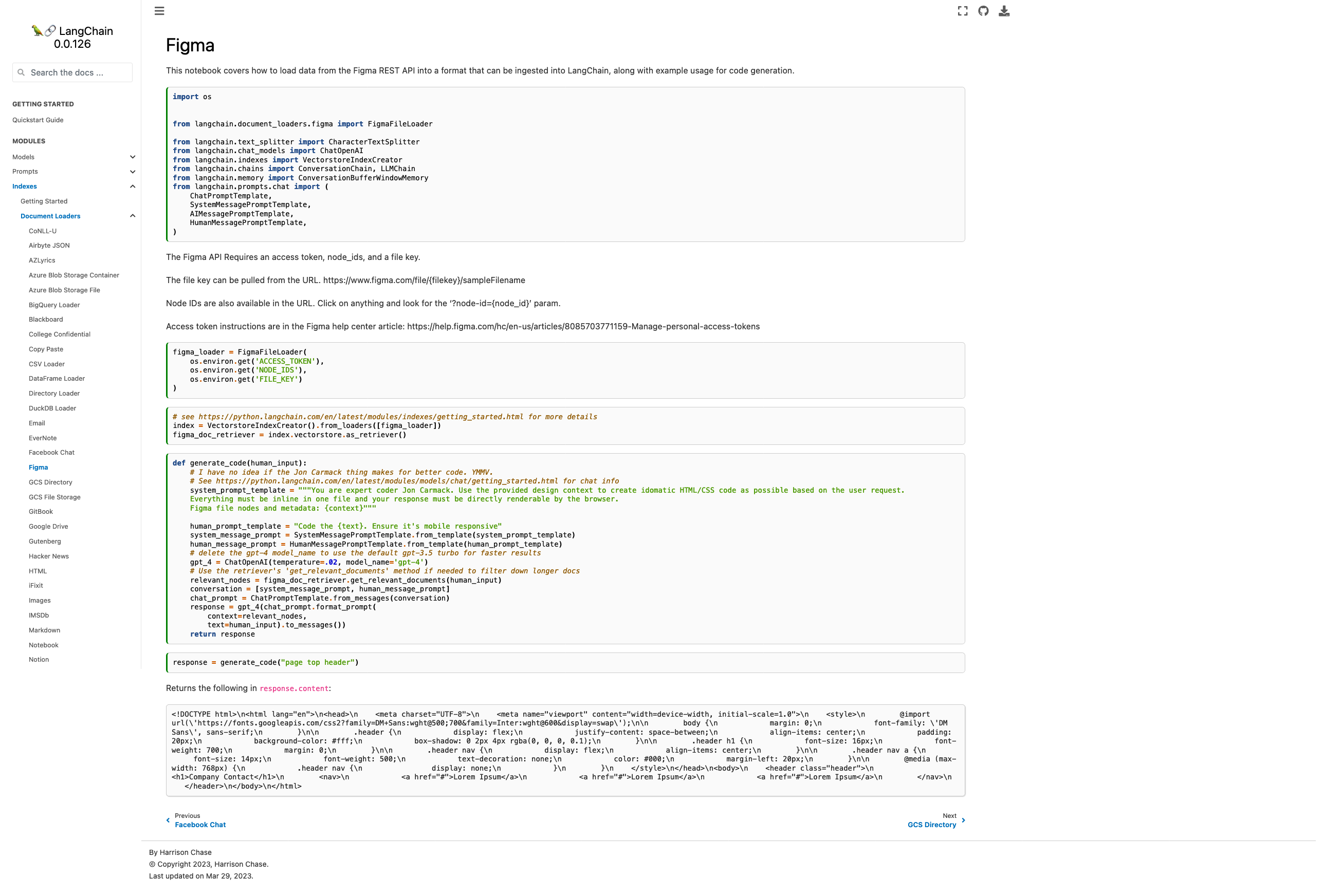

- Current docs are pointing to the wrong module, fixed
- Added some explanation on how to find the necessary parameters
- Added chat-based codegen example w/ retrievers

Picture of the new page:

Please let me know if you'd like any tweaks! I wasn't sure if the example was too heavy for the page or not but decided "hey, I probably would want to see it" and so included it.

Co-authored-by: maxtheman <max@maxs-mbp.lan>


parent 5c907d9998

commit 3dc49a04a3

@@ -1,13 +1,14 @@
 {
 "cells": [
 {
+"attachments": {},
 "cell_type": "markdown",
 "id": "33205b12",
 "metadata": {},
 "source": [
 "# Figma\n",
 "\n",
-"This notebook covers how to load data from the Figma REST API into a format that can be ingested into LangChain."
+"This notebook covers how to load data from the Figma REST API into a format that can be ingested into LangChain, along with example usage for code generation."
 ]
 },
 {

@@ -19,7 +20,35 @@
 "source": [
 "import os\n",
 "\n",
-"from langchain.document_loaders import FigmaFileLoader"
+"\n",
+"from langchain.document_loaders.figma import FigmaFileLoader\n",
+"\n",
+"from langchain.text_splitter import CharacterTextSplitter\n",
+"from langchain.chat_models import ChatOpenAI\n",
+"from langchain.indexes import VectorstoreIndexCreator\n",
+"from langchain.chains import ConversationChain, LLMChain\n",
+"from langchain.memory import ConversationBufferWindowMemory\n",
+"from langchain.prompts.chat import (\n",
+" ChatPromptTemplate,\n",
+" SystemMessagePromptTemplate,\n",
+" AIMessagePromptTemplate,\n",
+" HumanMessagePromptTemplate,\n",
+")"
+]
+},
+{
+"attachments": {},
+"cell_type": "markdown",
+"id": "d809744a",
+"metadata": {},
+"source": [
+"The Figma API Requires an access token, node_ids, and a file key.\n",
+"\n",
+"The file key can be pulled from the URL. https://www.figma.com/file/{filekey}/sampleFilename\n",
+"\n",
+"Node IDs are also available in the URL. Click on anything and look for the '?node-id={node_id}' param.\n",
+"\n",
+"Access token instructions are in the Figma help center article: https://help.figma.com/hc/en-us/articles/8085703771159-Manage-personal-access-tokens"
 ]
 },
 {
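The markdown cell added in this hunk says the file key and node ID can both be read off the Figma URL. A minimal sketch of doing that with the standard library, assuming the URL shape quoted in the cell (`https://www.figma.com/file/{filekey}/sampleFilename?node-id={node_id}`). The helper name `parse_figma_url` is hypothetical and not part of LangChain or Figma, and real Figma URLs may be shaped differently:

```python
from urllib.parse import urlparse, parse_qs


def parse_figma_url(url):
    """Return (file_key, node_id) from a URL shaped like
    https://www.figma.com/file/{filekey}/name?node-id={node_id}.

    node_id is None when the query string has no node-id parameter.
    """
    parsed = urlparse(url)
    parts = [p for p in parsed.path.split("/") if p]
    # Assumed path layout: ["file", "<filekey>", "<name>"].
    if len(parts) < 2 or parts[0] != "file":
        raise ValueError(f"not a Figma file URL: {url!r}")
    file_key = parts[1]
    # parse_qs percent-decodes values, so "0%3A1" comes back as "0:1".
    node_ids = parse_qs(parsed.query).get("node-id")
    node_id = node_ids[0] if node_ids else None
    return file_key, node_id
```

The two returned values correspond to the `FILE_KEY` and `NODE_IDS` settings the notebook reads from the environment.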

@@ -29,7 +58,7 @@
 "metadata": {},
 "outputs": [],
 "source": [
-"loader = FigmaFileLoader(\n",
+"figma_loader = FigmaFileLoader(\n",
 " os.environ.get('ACCESS_TOKEN'),\n",
 " os.environ.get('NODE_IDS'),\n",
 " os.environ.get('FILE_KEY')\n",
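The loader call in this hunk reads its three arguments with `os.environ.get`, which returns `None` for any variable that is not set. A small sketch of checking the variables up front, assuming the same three names the notebook uses. The helper `read_figma_settings` and its error message are hypothetical:

```python
import os

# Names taken from the notebook cell, in the order they are passed to the loader.
REQUIRED_VARS = ("ACCESS_TOKEN", "NODE_IDS", "FILE_KEY")


def read_figma_settings(environ=None):
    """Return (access_token, node_ids, file_key), or raise if any is missing or empty."""
    environ = os.environ if environ is None else environ
    missing = [name for name in REQUIRED_VARS if not environ.get(name)]
    if missing:
        raise KeyError("missing environment variables: " + ", ".join(missing))
    return tuple(environ[name] for name in REQUIRED_VARS)
```

Since the tuple follows the positional order used in the notebook, it could presumably be unpacked straight into the loader, as in `FigmaFileLoader(*read_figma_settings())`.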

@@ -43,7 +72,9 @@
 "metadata": {},
 "outputs": [],
 "source": [
-"loader.load()"
+"# see https://python.langchain.com/en/latest/modules/indexes/getting_started.html for more details\n",
+"index = VectorstoreIndexCreator().from_loaders([figma_loader])\n",
+"figma_doc_retriever = index.vectorstore.as_retriever()"
 ]
 },
 {

@@ -52,6 +83,55 @@
 "id": "3e64cac2",
 "metadata": {},
 "outputs": [],
+"source": [
+"def generate_code(human_input):\n",
+" # I have no idea if the Jon Carmack thing makes for better code. YMMV.\n",
+" # See https://python.langchain.com/en/latest/modules/models/chat/getting_started.html for chat info\n",
+" system_prompt_template = \"\"\"You are expert coder Jon Carmack. Use the provided design context to create idomatic HTML/CSS code as possible based on the user request.\n",
+" Everything must be inline in one file and your response must be directly renderable by the browser.\n",
+" Figma file nodes and metadata: {context}\"\"\"\n",
+"\n",
+" human_prompt_template = \"Code the {text}. Ensure it's mobile responsive\"\n",
+" system_message_prompt = SystemMessagePromptTemplate.from_template(system_prompt_template)\n",
+" human_message_prompt = HumanMessagePromptTemplate.from_template(human_prompt_template)\n",
+" # delete the gpt-4 model_name to use the default gpt-3.5 turbo for faster results\n",
+" gpt_4 = ChatOpenAI(temperature=.02, model_name='gpt-4')\n",
+" # Use the retriever's 'get_relevant_documents' method if needed to filter down longer docs\n",
+" relevant_nodes = figma_doc_retriever.get_relevant_documents(human_input)\n",
+" conversation = [system_message_prompt, human_message_prompt]\n",
+" chat_prompt = ChatPromptTemplate.from_messages(conversation)\n",
+" response = gpt_4(chat_prompt.format_prompt( \n",
+" context=relevant_nodes, \n",
+" text=human_input).to_messages())\n",
+" return response"
+]
+},
+{
+"cell_type": "code",
+"execution_count": null,
+"id": "36a96114",
+"metadata": {},
+"outputs": [],
+"source": [
+"response = generate_code(\"page top header\")"
+]
+},
+{
+"attachments": {},
+"cell_type": "markdown",
+"id": "baf9b2c9",
+"metadata": {},
+"source": [
+"Returns the following in `response.content`:\n",
+"```\n",
+"<!DOCTYPE html>\\n<html lang=\"en\">\\n<head>\\n <meta charset=\"UTF-8\">\\n <meta name=\"viewport\" content=\"width=device-width, initial-scale=1.0\">\\n <style>\\n @import url(\\'https://fonts.googleapis.com/css2?family=DM+Sans:wght@500;700&family=Inter:wght@600&display=swap\\');\\n\\n body {\\n margin: 0;\\n font-family: \\'DM Sans\\', sans-serif;\\n }\\n\\n .header {\\n display: flex;\\n justify-content: space-between;\\n align-items: center;\\n padding: 20px;\\n background-color: #fff;\\n box-shadow: 0 2px 4px rgba(0, 0, 0, 0.1);\\n }\\n\\n .header h1 {\\n font-size: 16px;\\n font-weight: 700;\\n margin: 0;\\n }\\n\\n .header nav {\\n display: flex;\\n align-items: center;\\n }\\n\\n .header nav a {\\n font-size: 14px;\\n font-weight: 500;\\n text-decoration: none;\\n color: #000;\\n margin-left: 20px;\\n }\\n\\n @media (max-width: 768px) {\\n .header nav {\\n display: none;\\n }\\n }\\n </style>\\n</head>\\n<body>\\n <header class=\"header\">\\n <h1>Company Contact</h1>\\n <nav>\\n <a href=\"#\">Lorem Ipsum</a>\\n <a href=\"#\">Lorem Ipsum</a>\\n <a href=\"#\">Lorem Ipsum</a>\\n </nav>\\n </header>\\n</body>\\n</html>\n",
+"```"
+]
+},
+{
+"cell_type": "markdown",
+"id": "38827110",
+"metadata": {},
 "source": []
 }
 ],
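In `generate_code`, `context=relevant_nodes` passes the retriever's list of documents straight into the prompt template, so the list's string form presumably ends up in the system message. One alternative is to join only the text of each document and cap the total size. The sketch below is an illustration and not what the commit does: `Doc` is a hypothetical stand-in that mirrors only the `page_content` and `metadata` attributes of a retrieved document, and `format_context` and its `max_chars` default are invented for the example.

```python
from dataclasses import dataclass, field


@dataclass
class Doc:
    """Hypothetical stand-in for a retrieved document (text plus metadata)."""
    page_content: str
    metadata: dict = field(default_factory=dict)


def format_context(docs, max_chars=4000):
    """Join document texts with blank lines, stopping before max_chars is exceeded."""
    pieces = []
    used = 0
    for doc in docs:
        text = doc.page_content.strip()
        if not text:
            continue  # skip documents with no usable text
        # Account for the two-character separator between pieces.
        cost = len(text) + (2 if pieces else 0)
        if used + cost > max_chars:
            break
        pieces.append(text)
        used += cost
    return "\n\n".join(pieces)
```

With a helper like this, the call would become `context=format_context(relevant_nodes)`, which keeps the prompt free of object reprs and bounds its length.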

@@ -71,7 +151,7 @@
 "name": "python",
 "nbconvert_exporter": "python",
 "pygments_lexer": "ipython3",
-"version": "3.9.1"
+"version": "3.10.10"
 }
 },
 "nbformat": 4,
